- How much disk space is included with the standard ...

We are currently considering migration to Splunk Cloud.

The retention period for some of our indexes is up to 14 months.

Considering the pricing on extra disk space, it is important to me to know how much disk space is included with the standard license.

Can anyone point me in a direction where I can find this information?


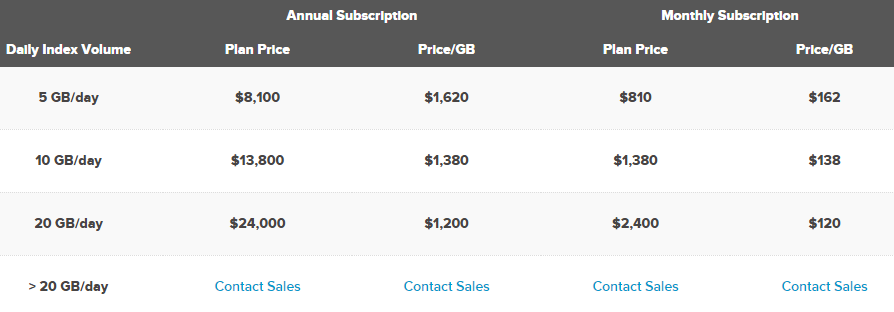

http://www.splunk.com/en_us/products/pricing.html#tabs/cloud

Splunk Cloud is priced based on maximum daily aggregate volume of uncompressed data indexed, expressed in gigabytes per day. Your Splunk Cloud subscription includes storage for 90 days of indexed data, additional storage is available.

So the default is 90 days of retention. Check this algebra, though:

5 GB/day * 90 days = 450 GB total uncompressed storage; 450 GB * 0.5 (average expected compression and indexing overhead ratio) = 225 GB of storage expected

10 GB/day * 90 days = 900 GB total uncompressed storage; 900 GB * 0.5 (average expected compression and indexing overhead ratio) = 450 GB of storage expected

20 GB/day * 90 days = 1800 GB total uncompressed storage; 1800 GB * 0.5 (average expected compression and indexing overhead ratio) = 900 GB of storage expected
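The arithmetic above can be reproduced with a small Python sketch. The 0.5 ratio is only the rule-of-thumb average assumed in this post, and the function name `expected_storage_gb` is hypothetical, not part of any Splunk tool.

```python
def expected_storage_gb(daily_gb, retention_days=90, disk_ratio=0.5):
    """Estimate on-disk storage in GB for a given daily ingest volume.

    disk_ratio is the assumed fraction of the raw size that remains on
    disk after compression and indexing overhead (0.5 is an assumption).
    """
    return daily_gb * retention_days * disk_ratio


# Reproduce the three license tiers worked through above.
for daily in (5, 10, 20):
    print(f"{daily} GB/day -> {expected_storage_gb(daily):.0f} GB expected")
```

Swapping in a measured ratio for your own data instead of 0.5 gives a more realistic estimate.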


I'm with @polymorphic. I've been managing on-prem Splunk Enterprise instances for a number of years and have recently switched to managing a Splunk Cloud instance. The Splunk Cloud documentation does not tell us enough to make sure we don't lose data through bursts in volume, or through someone setting retention well beyond the default 90 days without realizing that other indexes may then drop data earlier than expected. I asked Splunk support for a copy of indexes.conf because I wanted to see whether Splunk Cloud uses volumes in its index configurations. They could not provide one, so I'm having to meticulously reverse-engineer storage calculations, have lengthy conversations with our sales rep and sales engineer, and use whatever I can find on Splunk Answers.


Hi Rob

This search might help:

| dbinspect index=* cached=t

| stats max(rawSize) AS raw_size max(sizeOnDiskMB) AS compresed_size BY bucketId, index | search index=*

| stats sum(compresed_size) as compresed_size sum(raw_size) as raw_size by index

| eval compresed_size = round(compresed_size / 1024 , 2)

| eval raw_size = round(raw_size / 1024 / 1024 / 1024 , 2)

| eval "Compression" = round((1-(compresed_size / raw_size)) * 100, 0)

| rename compresed_size as "Compressed GB", raw_size as "RAW GB"

| fields index "Compressed GB" "RAW GB" "Compression"
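For readers checking the unit handling in that search, here is a minimal Python sketch of the same per-index calculation. It assumes, as the search does, that `sizeOnDiskMB` is in megabytes and `rawSize` is in bytes; the function name `compression_pct` is hypothetical. Unlike the search, it does not round the intermediate GB values, so results may differ by a point for very small indexes.

```python
def compression_pct(size_on_disk_mb, raw_size_bytes):
    """Percentage by which the on-disk size is smaller than the raw size."""
    compressed_gb = size_on_disk_mb / 1024   # MB -> GB
    raw_gb = raw_size_bytes / 1024 ** 3      # bytes -> GB
    return round((1 - compressed_gb / raw_gb) * 100)


# Example: 512 MB on disk for 1 GiB of raw data.
print(compression_pct(512, 1024 ** 3))
```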

And maybe this:

| dbinspect index=* cached=t

| where NOT match(index, "^_")

| sort 0 startEpoch

| stats first(startEpoch) as startEpoch by index

| join type=outer index [| rest splunk_server=idx*.splunkcloud.com /services/data/indexes-extended

| stats max(frozenTimePeriodInSecs) as retention by title

| eval Retention = round(retention / 86400, 0)

| rename title as index]

| eval FirstSample = strftime(startEpoch, "%d-%m-%Y %T")

| eval now = now()

| eval DaysOfData = round((now - startEpoch) / 60 / 60 / 24)

| eval diff = DaysOfData - Retention

| table index, DaysOfData, Retention, diff, FirstSample

Since my question, we have migrated to Splunk Cloud. I have several indexes with 420 days of retention and some with 150 days, and I see no issues whatsoever.

My overall disk usage is 62% (replace "Your disk space" in the search below with your storage entitlement in GB):

| dbinspect index=* cached=t

| stats max(sizeOnDiskMB) AS compresed_size BY bucketId, index | search index=*

| stats sum(compresed_size) as compresed_size

| eval storage = "Your disk space"

| eval pct = round((compresed_size / 1024 / storage) * 100, 0)

| fields pct

My raw uncompressed index size is above the advertised disk space:

| dbinspect index=* cached=t

| stats max(rawSize) AS raw_size BY bucketId, index | search index=*

| stats sum(raw_size) as raw_size

| eval GB = round(raw_size / 1024 / 1024 / 1024 , 2)

| fields GB


Thanks @polymorphic - going to use these to build out dashboards/alerts. Hoping in a future version of the Cloud Monitoring Console they may have something that warns/predicts of early data loss.


Hey Folks,

When you buy a license, you are allocated 90 days' worth of storage by default.

So 100GB/day x 90 days = 9000 GB of raw space available, plus additional overflow room to account for spikes. You don't have to know what's going on under the hood, just know that Splunk Cloud has extra space available for spikes, and has the ability to expand elastically if you need to upgrade your storage.

You can buy additional space in 500 GB chunks if you need it. Those chunks can be sliced and diced however you want using the index management app.


It is actually pretty important to know what's going on under the hood if you need retention for more than 90 days, given the prices for additional disk space.

I have been told that the list price for 12 months of retention on 100 GB/day is $37,950.

In my case I need:

14 months retention on index1 (1.5GB/day)

5 months on index2 (21GB/day)

14 days on index3 (50GB/day)

But on my current Splunk installation indexed data in:

index1 is reduced by ~35%

index2 is reduced by ~70%

index3 is reduced by ~50%

This means that even though I need retention of up to 14 months, I need less than 3 TB of disk space.
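A rough Python sketch of that estimate, using the per-index figures above. It assumes 14 months is about 420 days and 5 months about 150 days, and it ignores replication and any other overhead, so treat the result as a lower bound rather than a quote.

```python
# (index, daily GB, retention days, fraction by which indexed data shrinks)
# Day counts are assumptions: 14 months ~ 420 days, 5 months ~ 150 days.
INDEXES = [
    ("index1", 1.5, 420, 0.35),
    ("index2", 21.0, 150, 0.70),
    ("index3", 50.0, 14, 0.50),
]


def disk_gb(daily_gb, days, reduction):
    """On-disk GB for one index after the observed size reduction."""
    return daily_gb * days * (1 - reduction)


total_gb = sum(disk_gb(daily, days, red) for _, daily, days, red in INDEXES)

for name, daily, days, red in INDEXES:
    print(f"{name}: {disk_gb(daily, days, red):.1f} GB")
print(f"total: {total_gb:.1f} GB")
```

Under these assumptions the total comes to roughly 1.7 TB, comfortably below the 3 TB mentioned above.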


Hey @Polymorphic,

Best to get out of the retention days game and think about raw storage. Figure out what your raw storage needs are and buy it.

Remember you have a base storage that amounts to your license GB * 90. Then purchase 0.5 TB chunks as needed.
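As a sketch of that sizing rule in Python: base storage is the license volume times 90, and anything beyond it is rounded up to whole 0.5 TB chunks. The function name `extra_chunks` is hypothetical, and whether the entitlement is measured against raw or on-disk size is exactly what this thread debates, so the sketch simply takes whichever figure you pass in.

```python
import math

CHUNK_GB = 500  # add-on storage size quoted in this thread


def extra_chunks(license_gb_per_day, needed_gb, base_days=90):
    """Number of 500 GB add-on chunks needed beyond the base storage."""
    base_gb = license_gb_per_day * base_days
    shortfall = max(0, needed_gb - base_gb)
    return math.ceil(shortfall / CHUNK_GB)


# A 100 GB/day license includes 9000 GB; needing 10000 GB means 2 chunks.
print(extra_chunks(100, 10000))
```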


Precisely! I see you figured out the compression in each index.

Khourihan [Splunk],

Can you confirm the replication factor on Splunk Cloud?


Yes. |dbinspect is a pretty nice tool for this!


@Polymorphic yes indeed. Nice.


It depends on the license you select. The lowest option includes 450GB of storage. You can buy additional storage. See http://splunk.force.com/SplunkCloud?prdType=SplunkCloud.

If this reply helps you, Karma would be appreciated.


Rich's link shows the total disk space for each. I guess the cloud architects or sales team forgot the whole compression & overhead ratio OR compression is disabled in cloud.


Actually, this link only shows the free 15-day option (5 GB/day, 50 GB total storage). Nothing else. 😞

That's why I'm asking.




So a 100GB/day license would give you 9000GB storage?


Correct, but as the rep from Splunk mentioned, they "have room for spikes". I assume this is why they advertise the uncompressed space. That way, if your data is all binary and can't be compressed at all, they still have enough for 90 days; for most people, though, the space would effectively allow double the storage they anticipate.
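To make the "double the storage" point concrete, here is a minimal Python sketch. It assumes the entitlement really is the uncompressed volume (license GB/day times 90) and that data occupies `disk_ratio` of its raw size on disk; both are assumptions from this thread, not confirmed Splunk Cloud behavior, and replication is ignored.

```python
def effective_retention_days(license_gb_per_day, disk_ratio, base_days=90):
    """Days of data that fit if storage is sized on uncompressed volume."""
    storage_gb = license_gb_per_day * base_days
    daily_on_disk_gb = license_gb_per_day * disk_ratio
    return storage_gb / daily_on_disk_gb


# Incompressible data (ratio 1.0) versus the assumed 0.5 average.
print(effective_retention_days(100, 1.0))
print(effective_retention_days(100, 0.5))
```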


I left out replication factor...