Are you a member of the Splunk Community?

- Find Answers

- :

- Using Splunk

- :

- Splunk Search

- :

- "Corrupt csv header" : how to find the corrupted c...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark Topic

- Subscribe to Topic

- Mute Topic

- Printer Friendly Page

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I find on splunkd.log a lot of warnings as: "Corrupt csv header, contains empty value (col #3)" without any other details.

I have many .csv and many automated lookups in my Splunk. How can I find the corrupted one without opening all of them with a spreadsheet?

Thank you to all in advance

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Assuming that your OS is unix/linux, assuming that your CSV files use standard filenaming conventions (i.e. *.csv), assuming that your CSV files are standard with a header on the first line, assuming that the source files still exist, you can use the following CLI commands to identify problematic files:

find . -name "*.csv" -exec echo {} \; -exec grep -n ",," {} \; | grep -B 1 "^1:"

This variation also considers whitespace but may not be compatible with your version of grep:

find . -name "*.csv" -exec echo {} \; -exec grep -Pn ",\s*," {} \; | grep -B 1 "^1:"

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I have created a simple addon on Splunkbase that can identify bad CSV files in your environment: https://splunkbase.splunk.com/app/7497

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello,

Something similar happened to me, we had an TA Addon arista and the app Arista operating in the Search Head, we found that there was a duplicate word "speed" in a CSV but there was no reference to what lookup it was.

Error

"09-19-2019 22:05:14.045 -0500 WARN SearchResultsCSVSerializer - Corrupt csv header, 2 columns with the same name 'speed' (col #3 and #0, #3 will be ignored)"

Solution - Find the speed word in the csv files of the splunk apps directory.

grep -Rw '/opt/splunk/etc/apps/' -e 'speed' --include=*.csv

Output

/opt/splunk/etc/apps/TA-arista/lookups/interface-speed.csv:speed,"speed_desc",Speed

/opt/splunk/etc/apps/aristanetworks/lookups/interface-speed.csv:speed,"speed_desc",speed

Then delete the app or duplicate csv (In my case delete the app contain csv duplicate)

/opt/splunk/bin/splunk stop

rm -rf /opt/splunk/etc/apps/aristanetworks

/opt/splunk/bin/splunk start

Validate log level (error, warn)

tail -f tail -f /opt/splunk/var/log/splunk/splunkd.log

OR Query SPL

index="_internal"

| search log_level!="info"

| stats values(component) count by log_level

🙂

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Sorry to add another answer; but here is how you do it if your not on Linux or do not have OS access on the search head to run @woodcock 's command.

Using the Lookup Editor App https://splunkbase.splunk.com/app/1724/ you can check the lookup files and see the error straight away!

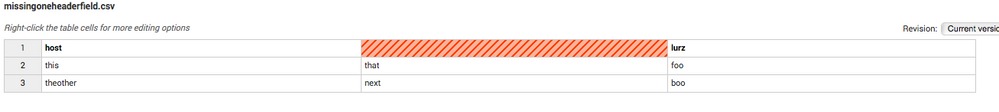

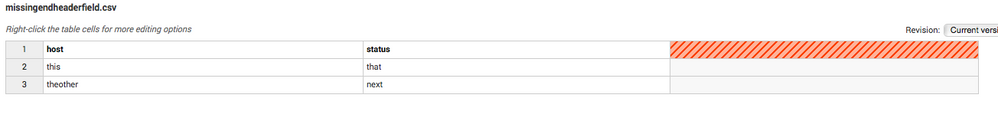

I uploaded two lookup files called missingoneheaderfield.csv which is missing one header field somewhere in the header and another lookup file called missingendheaderfield.csv which - surprise, surprise - misses one field at the end of the header.

Running the app and looking at the lookup files you can spot and fix the errors very easy.

missingoneheaderfield.csv

missingendheaderfield.csv

Hope this will help anyone out there to get rid of this annoying error

cheers, MuS

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

If you do not know which csv file is causing the issue, how do you manage to locate it?

As we have many lookups, but the error is not mentioning which is causing the error.

Many thanks,

Jaspal

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

another quick and easy solution if you know which lookup it is - use Splunk search commands to clean it:

| inputlookup lookupName | outputlookup lookupName

cheers, MuS

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

This may drop rows or mis-align fields with headers, depending on the type of problem. I would rather hand-manage the cleanup.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

This is true, if the problematic header misses a field some where in the header and not at the end - but then you have other problems and were missing those fields anyway.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Just spent hours trying this; it was an error in the header BUT it was at the END of the header...that is the first line ended with a comma ",". Grep for this also:

find . -name "*.csv" -exec echo {} \; -exec grep -Pn ",$" {} \; | grep -B 1 "^1:"

Looks for a comma at the END of the line in the first line.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

and to make it match a missing field somewhere in the header AND at the end run this find

find . -name "*.csv" -exec echo {} \; -exec grep -Pn ',$|,,' {} \; | grep -B 1 "^1:"

Still have to figure out how this can be done by Splunk Admin without CLI OS access though......

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Assuming that your OS is unix/linux, assuming that your CSV files use standard filenaming conventions (i.e. *.csv), assuming that your CSV files are standard with a header on the first line, assuming that the source files still exist, you can use the following CLI commands to identify problematic files:

find . -name "*.csv" -exec echo {} \; -exec grep -n ",," {} \; | grep -B 1 "^1:"

This variation also considers whitespace but may not be compatible with your version of grep:

find . -name "*.csv" -exec echo {} \; -exec grep -Pn ",\s*," {} \; | grep -B 1 "^1:"

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

good way! finded! thanks!

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

This finds everything in 1 search:

find . -name "*.csv" -exec echo {} \; -exec egrep -Pn "^\s*,|,\s*,|,\s*$" {} \; | grep -B 1 "^1:"