Splunk Answers / Using Splunk / Splunk Search

Re: calculates the availability of a service with ...

Hello,

@rnowitzki

@renjith_nair

Could you help me with the following question, please?

Every day at 6 p.m. I index events from an ITRS database into Splunk. Each event is made up of a critical alert and an OK alert generated by ITRS. The events concern a pair of servers (active and passive). I have to define a search with a condition like this:

if server1 is KO

AND server2 is KO

AND server1 is back OK only after server2 has gone KO

then I calculate the time between the last server going KO and the first server coming back OK.

This corresponds to the downtime of my service, but only if both servers are KO at the same time.

For example, if server1 is KO at 3 p.m.

AND server2 is KO at 3:30 p.m.

BUT server1 is OK again at 3:20 p.m.

then do nothing, because the two servers were never KO at the same time.
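The condition above can be modelled in a few lines of Python to check the intended semantics before writing SPL. This is a minimal sketch, not Splunk code. It assumes each event has been reduced to a hypothetical `(timestamp, server, state)` tuple with state `"KO"` or `"OK"`. Downtime starts when the second server goes KO and ends at the first OK that follows. The 3 p.m. / 3:30 p.m. / 3:20 p.m. example produces no downtime because the two servers are never KO at the same time.

```python
from datetime import datetime


def both_down_periods(events):
    """Return (start, end) periods during which both servers were KO.

    events: iterable of (timestamp, server, state) tuples, state "KO" or "OK".
    This input shape is a hypothetical simplification of the ITRS events.
    """
    down = set()       # servers currently KO
    start = None       # moment the second server went KO
    periods = []
    for ts, server, state in sorted(events):
        if state == "KO":
            down.add(server)
            if len(down) == 2 and start is None:
                start = ts                      # last KO: the service is now down
        elif state == "OK":
            if len(down) == 2:
                periods.append((start, ts))     # first OK: the service is back
                start = None
            down.discard(server)
    return periods


def at(hour, minute):
    """Helper for the example: a timestamp on an arbitrary (hypothetical) day."""
    return datetime(2021, 1, 1, hour, minute)


if __name__ == "__main__":
    # Example from the question: server1 recovers before server2 fails.
    print(both_down_periods([(at(15, 0), "server1", "KO"),
                             (at(15, 30), "server2", "KO"),
                             (at(15, 20), "server1", "OK")]))
```

Sorting inside the function means the events can arrive in any order, which is also why this sketch avoids the ascending/descending pitfalls discussed further down the thread.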

I have this so far:

index=index (severity=2 OR severity=0 OR severity="-1" OR severity=1) server=server1 OR server=server2

| eval ID=Service+"_"+Env+"_"+Apps+"_"+Function+"_"+managed_entity+"_"+varname

| addinfo

| eval periode=info_max_time-info_min_time

| transaction ID startswith=(severity=2) maxevents=2

I don't know how to create the condition.

Thank you for your help

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @wcastillocruz,

Can you try sorting ascending only for the streamstats, then sorting descending again before the transaction command?

index=index_sqlprod-itrs_toc (managed_entity="vmc-neorc-20 - rec" OR managed_entity="vmc-neorc-19 - rec") rowname="ASC RecordingControl"

| eval ID=Service+"_"+Env+"_"+Apps+"_"+Function+"_"+varname

| addinfo

| sort _time asc

| eval peer_failed=if(severity=2,1,-1)

| streamstats sum(peer_failed) as failed_peers by ID

| eval failed_peers=if((failed_peers=1) AND (severity="0" OR severity="-1"),3,failed_peers)

| where NOT (failed_peers=1)

| sort - _time

| transaction ID startswith=(failed_peers=2) endswith=(failed_peers=3) maxevents=2
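Here is a Python model of the search above, not Splunk code, to show why it isolates the downtime. In this search the ID leaves out `managed_entity`, so both peers share one running counter: +1 for each severity 2 and -1 otherwise. failed_peers=2 therefore means both peers are down. The first OK after that drops the sum back to 1, and that event is relabelled 3.

The sketch makes three simplifying assumptions:

* Events are hypothetical `(time, ID, severity)` tuples.
* Times are integer minutes, so 900 = 15:00.
* `pair_outages` is a simplified stand-in that pairs each 2 with the next 3 per ID. It does not reproduce every `transaction` option.

```python
from collections import defaultdict


def label_failed_peers(events):
    """Mirror the eval/streamstats/eval/where steps of the search above.

    events: iterable of (time, ID, severity) tuples (hypothetical shape).
    Returns (time, ID, severity, failed_peers) tuples in ascending time order.
    """
    running = defaultdict(int)                      # streamstats sum(peer_failed) by ID
    labelled = []
    for t, ev_id, sev in sorted(events):            # sort _time asc
        running[ev_id] += 1 if sev == 2 else -1     # eval peer_failed=if(severity=2,1,-1)
        fp = running[ev_id]
        if fp == 1 and sev in (0, -1):              # first OK after both peers failed
            fp = 3
        if fp != 1:                                 # where NOT (failed_peers=1)
            labelled.append((t, ev_id, sev, fp))
    return labelled


def pair_outages(labelled):
    """Simplified stand-in for transaction startswith=2 endswith=3: pair each 2 with the next 3."""
    open_start = {}
    outages = []
    for t, ev_id, _sev, fp in labelled:
        if fp == 2:
            open_start[ev_id] = t
        elif fp == 3 and ev_id in open_start:
            outages.append((ev_id, open_start.pop(ev_id), t))
    return outages


if __name__ == "__main__":
    # Hypothetical day: server1 KO 15:00, server2 KO 15:30, server1 OK 15:45, server2 OK 16:00.
    events = [(900, "svc", 2), (930, "svc", 2), (945, "svc", 0), (960, "svc", 0)]
    print(pair_outages(label_failed_peers(events)))
```

In the "do nothing" case from the question, the counter never reaches 2, so no pair is produced.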

Hi @scelikok,

It works!

Thank you!

Great! Could you please mark it as the accepted solution to help other users?

Hi @wcastillocruz,

The problem may be the sort order. The transaction command requires events in descending time order, so please try sorting in descending order.

Hi @scelikok,

Thank you for always being there despite the complexity of my questions.

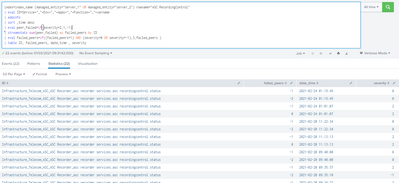

In my case I can't use the descending sort, because it disrupts my streamstats sum. The screenshot above shows what I get in my failed_peers column with the descending sort.
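The running sum depends on the order in which streamstats sees the events. This tiny Python model is illustrative only and uses a hypothetical KO, KO, OK, OK sequence. The same four severities produce a counter that reaches 2 in ascending order but never does in descending order. That fits the accepted answer's approach: sort ascending for streamstats, then re-sort descending only for transaction.

```python
from itertools import accumulate


def running_failed_peers(severities):
    """Cumulative +1 (severity 2) / -1 (anything else), like streamstats sum(peer_failed)."""
    return list(accumulate(1 if s == 2 else -1 for s in severities))


chronological = [2, 2, 0, 0]    # hypothetical: KO, KO, OK, OK in time order
ascending = running_failed_peers(chronological)
descending = running_failed_peers(list(reversed(chronological)))
print("ascending: ", ascending)
print("descending:", descending)
```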

Hi @wcastillocruz,

Since you did not mention the relation between severity and OK/KO, I assumed that a positive severity means KO and a zero or negative severity means OK. You can update the peer_failed eval according to your definition of failure.

Please try the search below:

index=index (severity=2 OR severity=0 OR severity="-1" OR severity=1) server=server1 OR server=server2

| eval ID=Service+"_"+Env+"_"+Apps+"_"+Function+"_"+managed_entity+"_"+varname

| addinfo

| eval periode=info_max_time-info_min_time

| eval peer_failed=if(severity>0,1,-1)

| streamstats sum(peer_failed) as failed_peers by ID

| transaction ID startswith=(failed_peers=2) endswith=(failed_peers<2)

Hi @scelikok ,

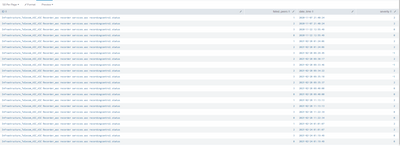

I don't know where the error is in my search, could you help me, I would like to form a transaction with each event 2 and 3 in the failed_peers column of my table in time order. but I get a "not found" result while events exist

This is my search:

index=index_sqlprod-itrs_toc (managed_entity="vmc-neorc-20 - rec" OR managed_entity="vmc-neorc-19 - rec") rowname="ASC RecordingControl"

| eval ID=Service+"_"+Env+"_"+Apps+"_"+Function+"_"+varname

| addinfo

| sort _time asc

| eval peer_failed=if(severity=2,1,-1)

| streamstats sum(peer_failed) as failed_peers by ID

| eval failed_peers=if((failed_peers=1) AND (severity="0" OR severity="-1"),3,failed_peers)

| where NOT (failed_peers=1)

| transaction ID startswith=(failed_peers=2) endswith=(failed_peers=3) maxevents=2

Here, 2 is my second critical alert and 3 is my first OK alert after two critical alerts.

The screenshot above shows the failed_peers column; my search should follow these values in time order, but it does not.

Hi @wcastillocruz ,

Isn't this the same use case that we discussed some weeks ago?

Did it not work for you?

Anyway:

What should be returned at the end, do you need the total duration of downtime, or a table where you see when the service was down?

In general, this should be a starting point:

| timechart span=5m latest(severity) by server

| filldown

| eval servicedown=if(server1=2 AND server2=2,"yes","no")

This will give you a table of 5-minute time buckets with servicedown="yes" wherever both servers had severity 2 at the same time, i.e. wherever the service was down.
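To check the logic offline, the search above can be roughly modelled in Python. This is illustrative only, not Splunk code:

1. Events are bucketed into 5-minute spans, keeping the latest severity per server.
2. Empty buckets take the previous values, as `filldown` does.
3. A bucket is flagged when both servers are at severity 2.

The `(epoch_seconds, server, severity)` input shape and the bucket alignment to multiples of the span are assumptions.

```python
def service_down_table(events, start, end, span=300):
    """Rough model of: timechart span=5m latest(severity) by server | filldown | eval servicedown=...

    events: iterable of (epoch_seconds, server, severity) tuples (hypothetical shape).
    Returns one (bucket_start, server1_sev, server2_sev, servicedown) row per bucket.
    """
    buckets = {}
    for t, server, sev in sorted(events):
        bucket = t - t % span                          # assumed alignment to multiples of span
        buckets.setdefault(bucket, {})[server] = sev   # later events win: latest(severity)
    last = {"server1": None, "server2": None}          # filldown state carried between buckets
    rows = []
    for bucket in range(start - start % span, end, span):
        last.update(buckets.get(bucket, {}))
        down = "yes" if last["server1"] == 2 and last["server2"] == 2 else "no"
        rows.append((bucket, last["server1"], last["server2"], down))
    return rows


if __name__ == "__main__":
    sample = [(0, "server1", 2), (600, "server2", 2), (900, "server1", 0)]
    for row in service_down_table(sample, 0, 1200):
        print(row)
```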

To get the duration of the downtime, you could work with transaction or streamstats for example.
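One way to turn the flagged buckets into downtime durations is to merge consecutive "yes" buckets into runs. It is sketched here in Python as a stand-in for the transaction or streamstats approach, not as Splunk code. The input is assumed to be hypothetical `(bucket_start, servicedown)` pairs in time order. The result is only as precise as the 5-minute span.

```python
def downtime_runs(flags, span=300):
    """Merge consecutive servicedown="yes" buckets into (start, end) runs.

    flags: list of (bucket_start_epoch_seconds, "yes"/"no") in ascending time order.
    """
    runs = []
    run_start = None
    for bucket, down in flags:
        if down == "yes" and run_start is None:
            run_start = bucket                       # outage begins in this bucket
        elif down != "yes" and run_start is not None:
            runs.append((run_start, bucket))         # outage ended by this bucket
            run_start = None
    if run_start is not None:                        # still down in the last bucket
        runs.append((run_start, flags[-1][0] + span))
    return runs


if __name__ == "__main__":
    flags = [(0, "no"), (300, "yes"), (600, "yes"), (900, "no"), (1200, "yes")]
    runs = downtime_runs(flags)
    print(runs, "total seconds:", sum(end - start for start, end in runs))
```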

But please clarify what the information is that you need to report from this.

BR

Ralph

Karma and/or Solution tagging appreciated.