- Re: Stats sum command experiencing strange behavio...

Hi Folks;

I'm getting a very bizarre issue here after our upgrade to 7.2:

index="app_rocket_dxs" sourcetype="fluentd_json" source="vbs-dxs-int*"

| where message like "%Summary%"

| eval temp=split(substr(message,64,250),":")

| eval DomainName=mvindex(temp,1)

| eval StartTime=mvindex(temp,3)

| eval EndTime=mvindex(temp,5)

| eval TopicName=mvindex(temp,7)

| eval MsgCount=mvindex(temp,9)

| convert num(MsgCount) as MsgCounts

| convert timeformat="%Y-%m-%d" ctime(_time) AS date

| table StartTime,EndTime,MsgCounts,DomainName,TopicName,date

| stats sum(MsgCounts) as PublishedCount by date,TopicName

| sort date desc

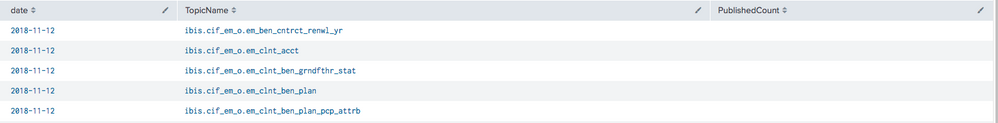

Here is the way the data looks as a table.

However, after applying the stats command, the 'PublishedCount' is blank.

Sample Event (scrubbed)

{"docker":{"container_id":"8203837773d4f65d9a3382d381c97f64af01209f865463239e7d59e6ed2972ec"},"kubernetes":{"container_name":"coverageemclntbenplan","namespace_name":"vbs-dxs-int","pod_name":"covplan-1-m9g6x","pod_id":"a8004109-e37d-11e8-b28e-fa163e193d33","labels":{"app":"covenplan","appname":"Rocket","deployment":"covernplan-1","deploymentconfig":"coveeplan"},"host":"cilver.com","master_url":"https://kubernetes.default.svc.cluster.local","namespace_id":"23eecb03-7947-11e8-9035-fa163ee5bb62"},"message":"11-12 16:37 oraclepool.oraclekafka INFO Publisher Summary - Domain:coverage:Start_Bound:2018-11-12-11.33.26.421532 :End_Bound:2018-11-12-11.35.26.532198 :Topic Name:ibis.cif_em_o.em_clnt_ben_plan:count:0\n","level":"info","pipeline_metadata":{"collector":{"ipaddr4":"100.00.00.00","ipaddr6":"fe80::0000:0000:0000:a728","inputname":"fluent-plugin-systemd","name":"fluentd","received_at":"2018-11-12T16:37:21.820821+00:00","version":"0.12.43 1.6.0"}},"@timestamp":"2018-11-12T16:37:21.767889+00:00","viaq_index_name":"project.vbs-dxs-int.23eecb03-7947-11e8-9035-fa163ee5bb62.2018.11.12","viaq_msg_id":"NzM0OWEzZGEtMmJiNy00MDQ3LWI4ZjAtZTdkMGU1MzY0MzZj","kubernetes_node":"cilver.com"}

Look at your message string in detail: it ends with a trailing line break (\n). Because count is the last element after splitting at colons, that line break ends up in your field value, so the value is not a clean number and the sum breaks. Sanitize your value using trim() or replace(), or use rex instead of splitting:

... | rex field=message ":count:(?<MsgCounts>\d+)"
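If you would rather keep the split() approach, a minimal sketch of the sanitizing variant could look like the following. It assumes the count is still element 9 of temp, as in the original search, and it uses replace() to drop every non-digit character rather than relying on trim(), since trim() without a second argument is documented as removing spaces and tabs and may not strip a newline. The intermediate field name MsgCountRaw is made up for illustration.

```spl
... | eval temp=split(substr(message,64,250),":")
| eval MsgCountRaw=mvindex(temp,9)
| eval MsgCounts=tonumber(replace(MsgCountRaw, "[^0-9]", ""))
| eval date=strftime(_time, "%Y-%m-%d")
| stats sum(MsgCounts) as PublishedCount by date, TopicName
```

Note that TopicName still has to be extracted as before; only the count handling changes here.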

Side note: add the word Summary to your initial search to reduce the number of events loaded off disk (see scanCount in the job inspector).
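Putting both suggestions together, the whole search might be rewritten roughly as below. This is a sketch based only on the one sample event in the question: the rex pattern assumes every summary message ends with "Topic Name:<topic>:count:<digits>", and the original where clause is kept so the filter on the message field behaves as before.

```spl
index="app_rocket_dxs" sourcetype="fluentd_json" source="vbs-dxs-int*" Summary
| where message like "%Summary%"
| rex field=message "Topic Name:(?<TopicName>[^:]+):count:(?<MsgCounts>\d+)"
| eval date=strftime(_time, "%Y-%m-%d")
| stats sum(MsgCounts) as PublishedCount by date, TopicName
| sort - date
```

Because \d+ only captures digits, the trailing line break never reaches MsgCounts, so no separate trim() or replace() step is needed.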

you sir, are a scholar. thanks!

Do post a sample event.

Updated with sample!