Field extraction of log file -- Each line has different format, how can I include all format in one regex?

I am doing field extraction for a log file format as below:

line 1: field1, field2, field3, field4

line 2: field1, field2, field3, field5, field4

line 3: field1, field2, field3, field4

I can write a separate regex for each format (regex 1 for the line 1 format and regex 2 for the line 2 format), but when I do field extraction I can only use one regex. How can I combine both so that one regex covers all log formats? Any suggestions?

Cheers

Sam

Thank you for all the answers. What I am looking for is more about indexing/normalizing the logs when they are ingested, rather than doing field extraction in the search query.

To achieve my goal, I ended up with two field extraction regexes for this sourcetype, and that seems to give me what I want. But I am wondering: would that consume too many resources when I ingest a large amount of logs?
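For readers who want to see the two-regex approach outside Splunk, here is a minimal Python sketch. It assumes the two formats differ only by the optional field5, and it only mimics the idea of applying two extractions to the same event; it says nothing about how Splunk schedules or costs them. The names `FOUR_FIELDS`, `FIVE_FIELDS`, and `extract_with_two_patterns` are made up for this illustration.

```python
import re

# Hypothetical pattern for the four-field format (line 1 and line 3).
FOUR_FIELDS = re.compile(
    r"^[^:]+:\s*(?P<field1>[^,]+),\s*(?P<field2>[^,]+),\s*"
    r"(?P<field3>[^,]+),\s*(?P<field4>[^,]+)$"
)

# Hypothetical pattern for the five-field format (line 2).
FIVE_FIELDS = re.compile(
    r"^[^:]+:\s*(?P<field1>[^,]+),\s*(?P<field2>[^,]+),\s*"
    r"(?P<field3>[^,]+),\s*(?P<field5>[^,]+),\s*(?P<field4>[^,]+)$"
)


def extract_with_two_patterns(line):
    """Try both patterns and merge whatever fields they produce."""
    fields = {}
    for pattern in (FOUR_FIELDS, FIVE_FIELDS):
        match = pattern.match(line.strip())
        if match:
            fields.update(match.groupdict())
    return fields


if __name__ == "__main__":
    for sample in (
        "line 1: field1, field2, field3, field4",
        "line 2: field1, field2, field3, field5, field4",
    ):
        print(extract_with_two_patterns(sample))
```

Because both patterns are anchored at the start and end of the line, each sample line matches only one of them, so the two extractions never overwrite each other's fields.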

You can use something like the following (the rex command is the part you are interested in; the rest is setup to show that it works):

| makeresults

| eval raw="line 1: field1, field2, field3, field4

line 2: field1, field2, field3, field5, field4

line 3: field1, field2, field3, field4"

| makemv raw delim="

"

| mvexpand raw

| rex field=raw "[^:]+:\s*(?P<field1>[^,]+),\s*(?P<field2>[^,]+),\s*(?P<field3>[^,]+),\s*((?P<field5>[^,]+?),\s*?)?+\s*(?P<field4>[^,]+$)"

You will probably have to make adjustments for your actual data, but this should get you started on a complete solution.
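As a way to try the optional-group idea outside Splunk, here is a minimal Python sketch run against the three sample lines from the question. The pattern is a simplified variant of the one above, not the exact rex expression: it uses a plain optional non-capturing group instead of the possessive `?+` quantifier, which older Python versions do not support. The names `PATTERN`, `SAMPLE_LINES`, and `extract` are assumptions for this illustration, and Python's `re` module is not identical to the regex engine Splunk uses, so treat it as a quick check rather than proof.

```python
import re

# One pattern for both formats: the group holding field5 is optional.
PATTERN = re.compile(
    r"^[^:]+:\s*"                   # prefix such as "line 1:"
    r"(?P<field1>[^,]+),\s*"
    r"(?P<field2>[^,]+),\s*"
    r"(?P<field3>[^,]+),\s*"
    r"(?:(?P<field5>[^,]+),\s*)?"   # present only in the five-field format
    r"(?P<field4>[^,]+)$"           # always the last field on the line
)

SAMPLE_LINES = [
    "line 1: field1, field2, field3, field4",
    "line 2: field1, field2, field3, field5, field4",
    "line 3: field1, field2, field3, field4",
]


def extract(line):
    """Return a dict of named groups, or None if the line does not match."""
    match = PATTERN.match(line.strip())
    return match.groupdict() if match else None


if __name__ == "__main__":
    for line in SAMPLE_LINES:
        print(extract(line))
```

When field5 is absent, its group simply does not participate in the match, so `extract` reports it as `None` while field4 still captures the last value on the line.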

Please check -

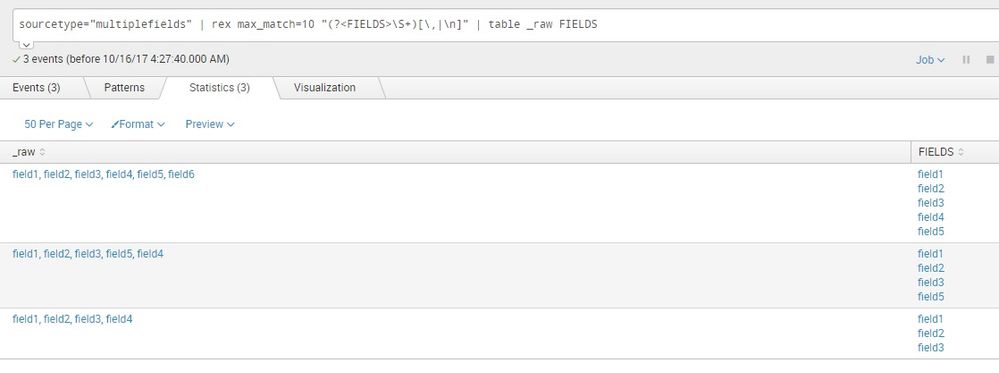

sourcetype="multiplefields" | rex max_match=0 "(?<FIELDS>\S+)[\,|\n]" | table _raw FIELDS

Do you just want to pull all the fields and make a table like the one in this image, or do you want to do some other operations? Please clarify.

(PS: in the image, one or two fields are not picked up; that is due to my sample file.)
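The "collect every value" approach can be sketched in Python with `re.findall`, which plays roughly the role that `max_match=0` plays in rex. This is a variant pattern, not the one in the search above: it accepts either a comma or the end of the line after each value, so the last value on a line is also captured. `VALUE` and `all_values` are hypothetical names for this sketch.

```python
import re

# A value is a run of characters that are neither whitespace nor commas,
# followed by a comma or by the end of the line.
VALUE = re.compile(r"([^\s,]+)(?:,|$)")


def all_values(line):
    """Return every comma-delimited value on the line, in order."""
    return VALUE.findall(line.strip())


if __name__ == "__main__":
    print(all_values("line 1: field1, field2, field3, field4"))
    print(all_values("line 2: field1, field2, field3, field5, field4"))
```

The trade-off is that the values come back as one positional list without field names, so in the five-field format the fourth position holds field5 rather than field4.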

Sekar

PS - If this or any post helped you in any way, pls consider upvoting, thanks for reading !