Join the Conversation

- Find Answers

- :

- Using Splunk

- :

- Splunk Search

- :

- Create a table with diferent values

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark Topic

- Subscribe to Topic

- Mute Topic

- Printer Friendly Page

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

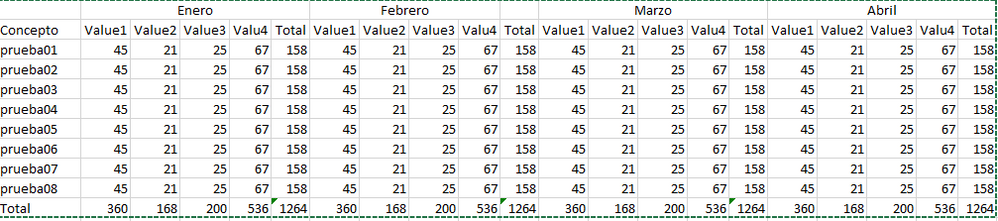

I need to create or design the following table.

Is posible in Splunk Enterprise?

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

This is the best that you can do:

| makeresults | eval raw="time=1/1/2018,Concepto=prueba01,Value1=45,Value2=21,Value3=25,Value4=67 time=2/1/2018,Concepto=prueba01,Value1=45,Value2=21,Value3=25,Value4=67 time=3/1/2018,Concepto=prueba01,Value1=45,Value2=21,Value3=25,Value4=67 time=4/1/2018,Concepto=prueba01,Value1=45,Value2=21,Value3=25,Value4=67 time=1/1/2018,Concepto=prueba02,Value1=45,Value2=21,Value3=25,Value4=67 time=2/1/2018,Concepto=prueba02,Value1=45,Value2=21,Value3=25,Value4=67 time=3/1/2018,Concepto=prueba02,Value1=45,Value2=21,Value3=25,Value4=67 time=4/1/2018,Concepto=prueba02,Value1=45,Value2=21,Value3=25,Value4=67 time=1/1/2018,Concepto=prueba03,Value1=45,Value2=21,Value3=25,Value4=67 time=2/1/2018,Concepto=prueba03,Value1=45,Value2=21,Value3=25,Value4=67 time=3/1/2018,Concepto=prueba03,Value1=45,Value2=21,Value3=25,Value4=67 time=4/1/2018,Concepto=prueba03,Value1=45,Value2=21,Value3=25,Value4=67 time=1/1/2018,Concepto=prueba04,Value1=45,Value2=21,Value3=25,Value4=67 time=2/1/2018,Concepto=prueba04,Value1=45,Value2=21,Value3=25,Value4=67 time=3/1/2018,Concepto=prueba04,Value1=45,Value2=21,Value3=25,Value4=67 time=4/1/2018,Concepto=prueba04,Value1=45,Value2=21,Value3=25,Value4=67 time=1/1/2018,Concepto=prueba05,Value1=45,Value2=21,Value3=25,Value4=67 time=2/1/2018,Concepto=prueba05,Value1=45,Value2=21,Value3=25,Value4=67 time=3/1/2018,Concepto=prueba05,Value1=45,Value2=21,Value3=25,Value4=67 time=4/1/2018,Concepto=prueba05,Value1=45,Value2=21,Value3=25,Value4=67 time=1/1/2018,Concepto=prueba06,Value1=45,Value2=21,Value3=25,Value4=67 time=2/1/2018,Concepto=prueba06,Value1=45,Value2=21,Value3=25,Value4=67 time=3/1/2018,Concepto=prueba06,Value1=45,Value2=21,Value3=25,Value4=67 time=4/1/2018,Concepto=prueba06,Value1=45,Value2=21,Value3=25,Value4=67 time=1/1/2018,Concepto=prueba07,Value1=45,Value2=21,Value3=25,Value4=67 time=2/1/2018,Concepto=prueba07,Value1=45,Value2=21,Value3=25,Value4=67 time=3/1/2018,Concepto=prueba07,Value1=45,Value2=21,Value3=25,Value4=67 time=4/1/2018,Concepto=prueba07,Value1=45,Value2=21,Value3=25,Value4=67 time=1/1/2018,Concepto=prueba08,Value1=45,Value2=21,Value3=25,Value4=67 time=2/1/2018,Concepto=prueba08,Value1=45,Value2=21,Value3=25,Value4=67 time=3/1/2018,Concepto=prueba08,Value1=45,Value2=21,Value3=25,Value4=67 time=4/1/2018,Concepto=prueba08,Value1=45,Value2=21,Value3=25,Value4=67"

| makemv raw

| mvexpand raw

| rename raw AS _raw

| kv

| eval _time = strptime(time, "%m/%d/%Y")

| fields - time

| rename COMMENT AS "Everything above generates sample event data; everything below is your solution."

| bin _time span=1mon

| stats sum(Val*) AS Val* BY Concepto _time

| addtotals Value* fieldname="ValueTotal"

| eval time = strftime(_time, "%b")

| sort 0 - _time

| streamstats dc(_time) AS spaces_count

| eval spaces_count = spaces_count - 1

| eval initialLength = len(time)

| eval time = " " . time

| eval paddedLength = len(time)

| eval time = substr(time, paddedLength - initialLength - spaces_count + 1)

| foreach Value* [ eval {time}:<<FIELD>> = <<FIELD>> ]

| fields - time Value*

| stats values(*) AS * BY Concepto

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

This is the best that you can do:

| makeresults | eval raw="time=1/1/2018,Concepto=prueba01,Value1=45,Value2=21,Value3=25,Value4=67 time=2/1/2018,Concepto=prueba01,Value1=45,Value2=21,Value3=25,Value4=67 time=3/1/2018,Concepto=prueba01,Value1=45,Value2=21,Value3=25,Value4=67 time=4/1/2018,Concepto=prueba01,Value1=45,Value2=21,Value3=25,Value4=67 time=1/1/2018,Concepto=prueba02,Value1=45,Value2=21,Value3=25,Value4=67 time=2/1/2018,Concepto=prueba02,Value1=45,Value2=21,Value3=25,Value4=67 time=3/1/2018,Concepto=prueba02,Value1=45,Value2=21,Value3=25,Value4=67 time=4/1/2018,Concepto=prueba02,Value1=45,Value2=21,Value3=25,Value4=67 time=1/1/2018,Concepto=prueba03,Value1=45,Value2=21,Value3=25,Value4=67 time=2/1/2018,Concepto=prueba03,Value1=45,Value2=21,Value3=25,Value4=67 time=3/1/2018,Concepto=prueba03,Value1=45,Value2=21,Value3=25,Value4=67 time=4/1/2018,Concepto=prueba03,Value1=45,Value2=21,Value3=25,Value4=67 time=1/1/2018,Concepto=prueba04,Value1=45,Value2=21,Value3=25,Value4=67 time=2/1/2018,Concepto=prueba04,Value1=45,Value2=21,Value3=25,Value4=67 time=3/1/2018,Concepto=prueba04,Value1=45,Value2=21,Value3=25,Value4=67 time=4/1/2018,Concepto=prueba04,Value1=45,Value2=21,Value3=25,Value4=67 time=1/1/2018,Concepto=prueba05,Value1=45,Value2=21,Value3=25,Value4=67 time=2/1/2018,Concepto=prueba05,Value1=45,Value2=21,Value3=25,Value4=67 time=3/1/2018,Concepto=prueba05,Value1=45,Value2=21,Value3=25,Value4=67 time=4/1/2018,Concepto=prueba05,Value1=45,Value2=21,Value3=25,Value4=67 time=1/1/2018,Concepto=prueba06,Value1=45,Value2=21,Value3=25,Value4=67 time=2/1/2018,Concepto=prueba06,Value1=45,Value2=21,Value3=25,Value4=67 time=3/1/2018,Concepto=prueba06,Value1=45,Value2=21,Value3=25,Value4=67 time=4/1/2018,Concepto=prueba06,Value1=45,Value2=21,Value3=25,Value4=67 time=1/1/2018,Concepto=prueba07,Value1=45,Value2=21,Value3=25,Value4=67 time=2/1/2018,Concepto=prueba07,Value1=45,Value2=21,Value3=25,Value4=67 time=3/1/2018,Concepto=prueba07,Value1=45,Value2=21,Value3=25,Value4=67 time=4/1/2018,Concepto=prueba07,Value1=45,Value2=21,Value3=25,Value4=67 time=1/1/2018,Concepto=prueba08,Value1=45,Value2=21,Value3=25,Value4=67 time=2/1/2018,Concepto=prueba08,Value1=45,Value2=21,Value3=25,Value4=67 time=3/1/2018,Concepto=prueba08,Value1=45,Value2=21,Value3=25,Value4=67 time=4/1/2018,Concepto=prueba08,Value1=45,Value2=21,Value3=25,Value4=67"

| makemv raw

| mvexpand raw

| rename raw AS _raw

| kv

| eval _time = strptime(time, "%m/%d/%Y")

| fields - time

| rename COMMENT AS "Everything above generates sample event data; everything below is your solution."

| bin _time span=1mon

| stats sum(Val*) AS Val* BY Concepto _time

| addtotals Value* fieldname="ValueTotal"

| eval time = strftime(_time, "%b")

| sort 0 - _time

| streamstats dc(_time) AS spaces_count

| eval spaces_count = spaces_count - 1

| eval initialLength = len(time)

| eval time = " " . time

| eval paddedLength = len(time)

| eval time = substr(time, paddedLength - initialLength - spaces_count + 1)

| foreach Value* [ eval {time}:<<FIELD>> = <<FIELD>> ]

| fields - time Value*

| stats values(*) AS * BY Concepto

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Yes, you can do that. You just need to move the month name into the name of the fields.

concepto enero val1 enero val2 enero val3 enero val4 enero total febrero val1 febrero val2 ... etc

However, the business need can probably be solved more completely by reviewing how the user is going to use that information. It's not very easy in that report format to compare the results from, say, January to the results from April. Presenting the results as numbers doesn't seem to be the best way.

Consider using a dashboard with a trellis. You have three dimensions (month, test, and value) and a count for each combination. A trellis with a stacked bar chart for each value in a month, split out by test, seems like it would produce more information on how your values were changing over time for each test.

Take this run-anywhere sample, and set the visualization as a stacked bar chart, with the trellis set to use "Month" as its split-by field, and you will see what I mean.

| makeresults | eval mydata="01,prueba1,45,21,65,27!!!!02,prueba1,47,21,63,27!!!!03,prueba1,41,25,65,27!!!!04,prueba1,45,21,65,27!!!!01,prueba2,45,21,65,27!!!!02,prueba2,45,21,65,27!!!!03,prueba2,45,21,65,27!!!!04,prueba2,45,21,65,27"|makemv mydata delim="!!!!"| mvexpand mydata

| rex field=mydata "(?<month>\d+),(?<test>[^,]+),(?<val1>[^,]+),(?<val2>[^,]+),(?<val3>[^,]+),(?<val4>[^,]+)"

| eval Month="2019-".month

| stats sum(va*) as va* by Month test

Then switch to use test as the split-by field, for comparison. You might also consider an area chart as a possible viz for this, instead fo the stacked bar charts.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Splunk doesn't support multiline column headers, so you'd basically have to merge those two values into single field and that use in your chart or any aggregation command. More accurate suggestions can be given if you could share your sample data/search.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Can you show the sample dataset ? This type of result is possible with the use of commands like chart along with append or join but again depends on how your data is coming into Splunk. totals can be calculated using addtotals addcoltotals etc.