retention policy

Hi All,

I have tried reading the documentation for this, but I am super confused and really struggling to wrap my head around it.

I have an environment where Splunk is ingesting syslog from 2 firewalls. The logs are only audit/management related, and they need to be sent to a separate server for compliance (hence Splunk).

I want to configure a retention policy where this data is deleted after 1 year, as that is the specific requirement.

From what I can tell, I just need to add the "frozenTimePeriodInSecs" setting to indexes.conf for the "main" index (as this is where the events are going).

Current ingestion is ~150,000 events per day, and daily ingestion is ~30-35 MB. However, this is subject to change in the future as more firewalls come online.

There is plenty of storage available. However, the requirement is just 1 year of searchable data.

But I keep seeing things about hot/warm/cold/frozen etc., and I just don't get it. All that's needed is 1 year of searchable data; anything older than (time.now() - 365 days) can be deleted.
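To put the numbers from the question into concrete terms, here is a small Python sketch of the arithmetic. It assumes a 365-day year (no leap-year adjustment) and uses the upper end of the reported ~35 MB/day; the result is raw ingest volume, and the real on-disk size will differ because of compression and index files.

```python
# Rough arithmetic for the figures in the question (assumed values).
SECONDS_PER_DAY = 24 * 60 * 60
RETENTION_DAYS = 365  # assumption: ignores leap years

# Value that would go into frozenTimePeriodInSecs for ~1 year
frozen_time_period_in_secs = RETENTION_DAYS * SECONDS_PER_DAY

# Upper end of the reported ~30-35 MB/day of raw ingest
daily_ingest_mb = 35
yearly_raw_mb = daily_ingest_mb * RETENTION_DAYS

print(f"frozenTimePeriodInSecs = {frozen_time_period_in_secs}")
print(f"~{yearly_raw_mb} MB of raw data per year at {daily_ingest_mb} MB/day")
```

At the current rate that is roughly 12.5 GB of raw data per year, which should be rechecked as more firewalls come online.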

Can someone please help me with what I need to do to make this work 🙂

Hi

Getting an exact retention time of, e.g., 1 year in Splunk can be almost mission impossible 😞

Several parameters define when Splunk removes buckets whose events are all older than your defined retention time! You must understand that when Splunk calculates retention, it really does so for all events in a bucket. It's not event based, because the smallest storage unit is a bucket, not an event. In practice, this means that Splunk can remove a bucket only when all events in that bucket are older than your defined retention.

In your case, you have quite a low event volume, which means that a single bucket could contain events from several months: at most (15 GB / 30 MB per day) / (number of hot buckets for that index) days. Usually you have several (default is 3) active hot buckets per search peer at the same time, where Splunk can write new events. By default, a bucket stays hot for at most 90 days, or until it becomes full, or until you restart Splunk. There are also some other parameters that can affect this!
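The bucket-level behaviour described above can be illustrated with a simplified Python model (not Splunk's actual implementation): a bucket is treated as removable only once its newest event is older than the retention period. The 90-day bucket spans and the 500-day "now" are made-up values chosen for illustration.

```python
from dataclasses import dataclass

DAY = 86_400
RETENTION_SECS = 365 * DAY  # stands in for frozenTimePeriodInSecs = 1 year


@dataclass
class Bucket:
    earliest: int  # timestamp (seconds) of the oldest event in the bucket
    latest: int    # timestamp (seconds) of the newest event in the bucket


def is_freezable(bucket: Bucket, now: int, retention: int = RETENTION_SECS) -> bool:
    # Retention is judged per bucket: the whole bucket goes only once
    # its *newest* event is older than the retention period.
    return now - bucket.latest > retention


def oldest_searchable_age_days(buckets, now, retention=RETENTION_SECS) -> float:
    # Age (in days) of the oldest event that is still kept.
    kept = [b for b in buckets if not is_freezable(b, now, retention)]
    if not kept:
        return 0.0
    return (now - min(b.earliest for b in kept)) / DAY


# Hypothetical buckets, each spanning up to 90 days, over 500 days of data.
spans = [(0, 90), (90, 180), (180, 270), (270, 360), (360, 450), (450, 500)]
buckets = [Bucket(start * DAY, end * DAY) for start, end in spans]
now = 500 * DAY

print(oldest_searchable_age_days(buckets, now))  # 410.0
```

In this example the bucket covering days 90-180 is kept because its newest event is only 320 days old, so events up to 410 days old remain searchable even with a 1-year setting.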

Here are some links where you can learn more about how this actually works:

- https://conf.splunk.com/files/2017/slides/splunk-data-life-cycle-determining-when-and-where-to-roll-...

- https://docs.splunk.com/Documentation/Splunk/9.1.2/Indexer/Bucketsandclusters

- https://community.splunk.com/t5/Splunk-Search/How-can-I-find-the-data-retention-and-indexers-involve...

- https://community.splunk.com/t5/Getting-Data-In/Indexes-configuration/m-p/564276

- https://community.splunk.com/t5/Splunk-Enterprise/Splunk-shows-only-9-months-270-days-data-How-do-I-...

- https://community.splunk.com/t5/Getting-Data-In/What-counts-for-Splunk-retention-time-if-events-come...

r. Ismo

Hi @jbates58 ,

First, on the server containing the indexes, don't use the main index; create a custom index instead (e.g. firewalls).

Then, for this new index, define the retention you want (one year).

Then assign the new index name to the inputs that you have on your forwarders.
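As a sketch of what these steps could look like, the following Python uses the standard configparser module to render an indexes.conf stanza for the example firewalls index and a matching inputs.conf stanza. The homePath/coldPath/thawedPath layout follows the usual $SPLUNK_DB convention; the udp://514 input with sourcetype syslog is a hypothetical placeholder, since the actual inputs are not shown in the thread. Check the indexes.conf and inputs.conf documentation before using anything like this.

```python
import configparser
import io

INDEX_NAME = "firewalls"            # example index name from the reply above
ONE_YEAR_SECS = 365 * 24 * 60 * 60  # 31536000 seconds


def render(sections: dict) -> str:
    """Render {stanza: {setting: value}} as .conf-style text."""
    cfg = configparser.ConfigParser(interpolation=None)
    cfg.optionxform = str  # keep Splunk's camelCase setting names as written
    for name, settings in sections.items():
        cfg[name] = settings
    buf = io.StringIO()
    cfg.write(buf)
    return buf.getvalue()


# indexes.conf on the indexer: custom index with ~1 year retention
indexes_conf = render({
    INDEX_NAME: {
        "homePath": f"$SPLUNK_DB/{INDEX_NAME}/db",
        "coldPath": f"$SPLUNK_DB/{INDEX_NAME}/colddb",
        "thawedPath": f"$SPLUNK_DB/{INDEX_NAME}/thaweddb",
        "frozenTimePeriodInSecs": str(ONE_YEAR_SECS),
    }
})

# inputs.conf on the forwarder (hypothetical syslog input) pointing at it
inputs_conf = render({
    "udp://514": {"sourcetype": "syslog", "index": INDEX_NAME},
})

print(indexes_conf)
print(inputs_conf)
```

By default, buckets that roll to frozen are deleted unless coldToFrozenDir or coldToFrozenScript is set, which matches the "delete after 1 year" requirement.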

Finally, as you ingest more logs, you should monitor your index to understand whether the size you configured is correct or whether you need to enlarge it.

Ciao.

Giuseppe

Hi @jbates58, yes, retention policies can be difficult at times.

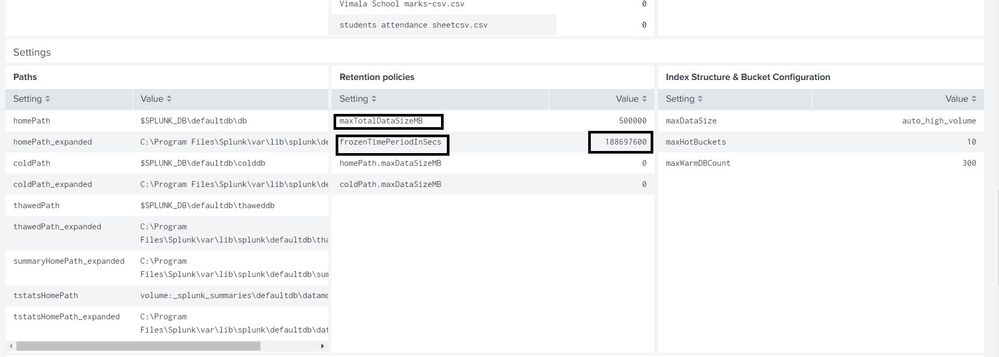

On the DMC server, please check: Settings > Monitoring Console > Indexing > Indexes and Volumes > Index Detail: Instance

EDIT: Please check the docs at https://docs.splunk.com/Documentation/Splunk/9.1.2/Admin/Indexesconf

One thing to remember: frozenTimePeriodInSecs vs. maxTotalDataSizeMB can also cause confusion (as I remember, whichever limit is reached first takes effect and takes precedence over the other).
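The "whichever comes first" interaction can be sketched with a simplified Python model. It assumes steady daily on-disk growth, ignores bucket granularity, and only reports which limit would start freezing the oldest data first. 500000 MB is the documented default for maxTotalDataSizeMB; the 2000 MB/day and 100000 MB figures are hypothetical.

```python
DAY = 86_400


def first_limit_hit(daily_mb: float, frozen_time_period_in_secs: int,
                    max_total_data_size_mb: float) -> str:
    """Simplified: which setting would start freezing the oldest data first?"""
    days_until_size_cap = max_total_data_size_mb / daily_mb
    days_until_time_cap = frozen_time_period_in_secs / DAY
    # Whichever limit is reached sooner is the one that effectively applies.
    if days_until_size_cap < days_until_time_cap:
        return "maxTotalDataSizeMB"
    return "frozenTimePeriodInSecs"


ONE_YEAR = 365 * DAY
print(first_limit_hit(35, ONE_YEAR, 500_000))     # frozenTimePeriodInSecs
print(first_limit_hit(2_000, ONE_YEAR, 100_000))  # maxTotalDataSizeMB
```

At today's volume the time limit governs; if ingest grows a lot and the size cap is small, data could be frozen well before one year.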

Here are the contents of that page. I have redacted a little bit of info relating to the environment.