How to delete duplicate events?

Each event has been ingested twice with the same uuid.

I want to keep only one event for each uuid.

How can I delete just one of the two events for each uuid?

Searching with index="okta*" | dedup uuid shows only one event per uuid,

which is half of the total events, and that is the result I want.

Then I ran index="okta*" | dedup uuid | delete, but this operation is not allowed.

It shows "this command cannot be invoked after the command simpleresultcombiner".

Does anyone have a suggestion?

Firstly, there is not much point in "deleting" data - you already "paid" for it with your license if you're using volume-based licensing so it might as well just stay there 😉

But seriously, I'd start with improving the data onboarding process so you can identify the duplicates and prevent them from being ingested in the first place. There is no point in indexing data only to have it filtered out all the time.

Please go through this link; I hope you find the solution there.

https://community.splunk.com/t5/Splunk-Search/How-to-delete-duplicate-events/m-p/70656/highlight/tru...

Please, don't do it. If you can't copy-paste properly, don't do it! Especially since it's a potentially destructive command that could make you lose your data.

It's not to make fun of you or anything; it's just that if you don't understand what this search does and you're just copying it blindly, you can cause a lot of harm!

And the subsearch maxout is 10000. Please suggest a way to dedup all the data in the index without that limit.

No. This is not a delete command.

Please suggest a way to dedup the data.

As per my understanding, I gave you this solution.

You can try it and see if it solves your issue.

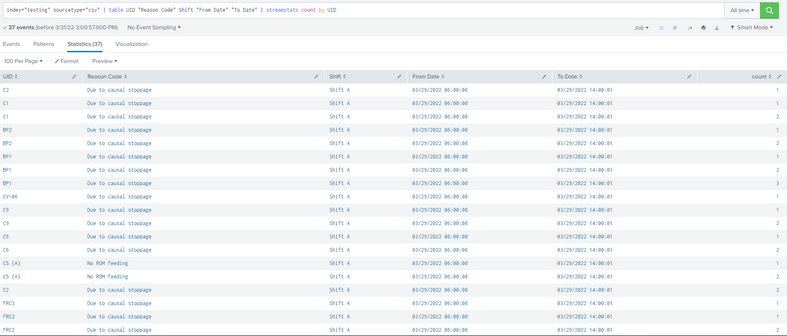

I assume your data looks like this: there are some duplicates in the UID field in the index.

I removed the duplicates with the query below:

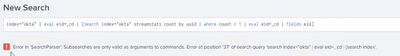

index="testing" sourcetype="csv" | table UID "Reason Code" Shift "From Date" "To Date" | streamstats count by UID | where count = 1 | fields - count

I don't know whether the 10000 limit applies here or not. You can try it; I hope it works.

Thank you.
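To see why filtering on count = 1 after streamstats count by UID keeps exactly one copy of each UID, the running count can be modeled outside Splunk. The following is a minimal plain-Python sketch, not Splunk code; the function name `running_count_by` and the sample events are hypothetical and only illustrate the idea that the count is a running per-key counter, not a total.

```python
from collections import defaultdict

def running_count_by(events, key):
    """Attach a running per-key count to each event, in input order.

    Illustrative model of a per-key running counter; not Splunk's implementation.
    """
    seen = defaultdict(int)
    counted = []
    for event in events:
        seen[event[key]] += 1
        counted.append({**event, "count": seen[event[key]]})
    return counted

# Hypothetical sample: every UID was ingested twice.
events = [
    {"UID": "a1", "Shift": "day"},
    {"UID": "a1", "Shift": "day"},
    {"UID": "b2", "Shift": "night"},
    {"UID": "b2", "Shift": "night"},
]

counted = running_count_by(events, "UID")

# Equivalent of "where count = 1": keep only the first copy of each UID.
kept = [e for e in counted if e["count"] == 1]
```

In this model the first copy of each UID gets count 1 and the second gets count 2, so the filter keeps half of the events even though every UID appears twice in total.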

I will try this query, thank you.

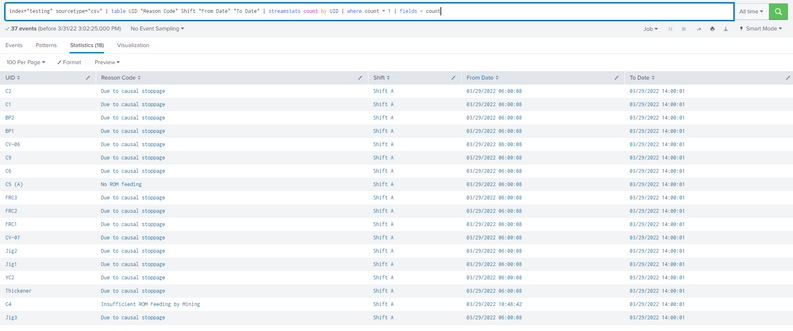

But can "| delete" be added to this query to actually delete the data, rather than just dedup it at search time?

index="testing" sourcetype="csv" | table UID "Reason Code" Shift "From Date" "To Date" | streamstats count by UID | where count = 1 | fields - count | delete

If I only wanted to dedup at search time, I already did index="okta*" | dedup uuid and that works fine.

But I want to delete the duplicates from the index rather than dedup on every search.

No, you cannot. It's kind of complicated; it comes down to how Splunk works and where and how the various commands are executed. Streamstats is _not_ a distributable streaming command, so you cannot run | delete after it.

You can sort your events and then do streamstats with a relatively short window (like 3 or so) to count events by raw event value, which effectively gives each event a count of either 1 or 2. Then you would only need to filter for the events with count=1.
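The sort-then-short-window idea can be sketched in plain Python as well. This is only a model of the approach described above, not Splunk code and not a statement of exact streamstats semantics; the helper name `first_copies`, the window size, and the sample raw strings are assumptions made for illustration.

```python
from collections import deque

def first_copies(raw_events, window=3):
    """Sort events, then keep those that are the only match in a short trailing window.

    Sorting puts identical events next to each other, so a small window
    (which includes the current event) is enough to spot a repeat.
    """
    recent = deque(maxlen=window)  # bounded memory: only the last few events
    kept = []
    for raw in sorted(raw_events):
        recent.append(raw)
        count = sum(1 for r in recent if r == raw)
        if count == 1:  # first time this raw value shows up in the window
            kept.append(raw)
    return kept

# Hypothetical raw events, each ingested twice and arriving out of order.
raw_events = [
    "uuid=b2 login",
    "uuid=a1 logout",
    "uuid=a1 logout",
    "uuid=b2 login",
    "uuid=c3 mfa",
    "uuid=c3 mfa",
]

unique = first_copies(raw_events)
```

Because identical events sit side by side after sorting, the window never has to remember more than a few events, which is the point of keeping it short.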

I mentioned that every uuid in the data has count=2.

So I want to cut the data in half. Do you have a solution? Thanks.

I tried it, but it is not working.