- Re: Can [batch] read a partial file such that the ...
I am indexing very large files each day, each on the order of 20+ GB. I am using [batch] with move_policy = sinkhole so that the file is read, indexed, and intentionally deleted.

However, sometimes the number of events indexed is less than the number of events in the file.

Here is the inputs.conf segment that applies.

    [batch:///my_path_to_the_file/*.import]
    move_policy = sinkhole
    sourcetype = my_sourcetype
    index = my_index
    crcSalt = <SOURCE>
    disabled = false

These large files are being SFTP'd to the Heavy Forwarder / Dropbox and the transfer can take 15+ minutes to complete.

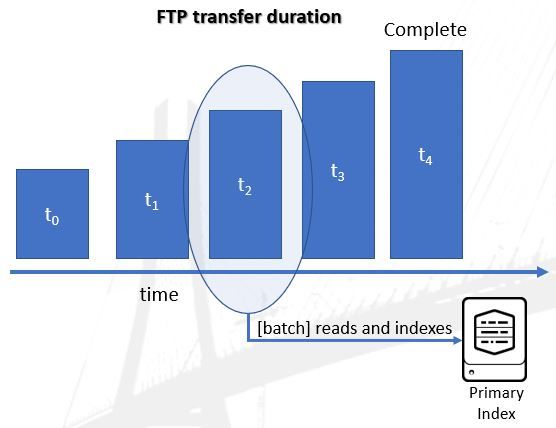

I am wondering whether the [batch] process will take a snapshot of the file and index it sometime after it arrives but before the transfer has completed. I am presuming that [batch] only looks at the file once.

Essentially, can what I attempt to show in the following image actually occur?


Can you transfer the file to a non-monitored name/location on the HF and then rename/move the file after the transfer completes?

Check the props.conf settings for the file's sourcetype to make sure events are broken correctly.

If this reply helps you, Karma would be appreciated.

Thank you for the response.

I am confident the events are being broken properly.

For development and integration we used the move/rename methodology and all was well.

However, for Production and full automation we moved away from this. Initially we did not see any issues (or were possibly unaware of them), but over time we noticed differences in the number of events.

This led to my post. I am hoping someone can say whether [batch] presumes that a file is complete and static (unchanging) and therefore reads and indexes it only once. If so, [batch] could read a partially transferred file, index it, and delete it, even though the file transfer has not completed.

Perhaps it will help to tell Splunk to wait before it decides it's reached the end of the file.

time_before_close = 60

Put this in inputs.conf

If this reply helps you, Karma would be appreciated.

I will definitely investigate this option for inputs.conf.

Setting time_before_close = X, where X is greater than the transfer time, would seem to be a good fix.

Thank you.

Within inputs.conf, set the following parameter to more than 15 minutes (expressed in seconds) for my scenario:

time_before_close = X, where X > 15 min x 60 sec/min = 900

Other non-Splunk options include:

https://winscp.net/eng/docs/script_locking_files_while_uploading

* If the SFTP server supports locking, lock the file until the transfer has completed

* Transfer the file with an extension other than .import, then rename it to *.import once the transfer has completed

- We originally used this renaming method and it worked, but it was not yet automated
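For anyone wanting to automate the rename approach, here is a minimal Python sketch of the idea. It is only an illustration, not anything Splunk provides: the `.part` extension, the `publish` and `demo` names, and the temporary directory are all assumptions made for the example, and the loop that writes events stands in for the real SFTP upload. The point is that the file only gains the `.import` name, which the `[batch]` stanza matches, after the writer has finished and closed it.

```python
import os
import tempfile
from pathlib import Path


def publish(staged: Path, suffix: str = ".import") -> Path:
    """Rename a fully written file so that it matches the watched pattern.

    os.replace is atomic when source and target are on the same filesystem
    (true here, since both names are in the same directory), so a reader
    sees either no *.import file or the complete one.
    """
    target = staged.with_suffix(suffix)
    os.replace(staged, target)
    return target


def demo() -> Path:
    # Hypothetical drop directory; in practice this would be the directory
    # named in the [batch://...] stanza.
    drop_dir = Path(tempfile.mkdtemp())

    # Stand-in for the slow upload: the file grows under a name that the
    # *.import pattern does not match.
    staged = drop_dir / "daily.part"
    with open(staged, "w") as fh:
        for i in range(1000):
            fh.write(f"event {i}\n")

    # Only after the writer has closed the file does it get its final name.
    return publish(staged)


if __name__ == "__main__":
    print(demo())
```

In a real deployment the rename would be issued by whatever process performs the transfer (for example, as the last step of the SFTP script), since only that process knows when the upload has actually finished.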