How to make transaction starts with and end with t...

Hi all, does anyone know of a way to make transaction start and end on the right events?

My search uses transaction URL startswith=STATUS=FAIL endswith=STATUS=PASS.

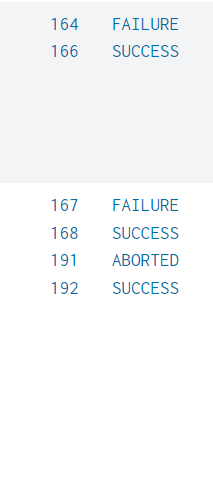

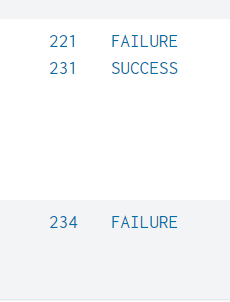

The statuses follow a pattern like FAIL, PASS, FAIL, PASS, PASS, FAIL, FAIL, FAIL, PASS...

The transaction command doesn't group these the way I need.

My requirement is to get the PASS URL that immediately follows a FAIL.

When several FAILs come before a PASS (FAIL, FAIL, ..., PASS), transaction only takes the last FAIL and the PASS. I want it to take everything from the first FAIL through to the PASS.

Does anyone know how to do this?

Try something like this to get the first FAIL of each group of FAILs, plus the PASSes:

| streamstats count as start reset_on_change=true by status

| where start=1 OR status="PASS"

I tried this and it gives the last FAIL from each group of FAILs. I want the first FAIL for the URLs that follow the pattern FAIL ... PASS.

Perhaps the events need to be sorted by time?

| sort 0 _time

| streamstats count as start reset_on_change=true by status

| where start=1 OR status="PASS"
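
To see why the sort matters: Splunk returns events newest first by default, so streamstats meets the last FAIL of a run before the first one. The self-contained sketch below reproduces that with generated events. The field n and the four-event sample are made up for illustration, and the explicit sort 0 -_time is only there to mimic the default search order.

```spl
| makeresults count=4
| streamstats count as n
| eval _time=_time + n
| eval status=mvindex(split("FAIL,FAIL,FAIL,PASS", ","), n - 1)
| sort 0 -_time
| streamstats count as start reset_on_change=true by status
| where start=1 OR status="PASS"
```

Changing sort 0 -_time to sort 0 _time makes streamstats see the oldest event first, so the FAIL that survives the where clause should be the first of the run (n=1) rather than the last (n=3).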

Thank you, I was able to get the data. But the list now also includes all the URLs with status SUCCESS. How can I avoid that? How can I group each FAIL with the SUCCESS that immediately follows it? I want to use the data from those URLs.

There might be a more efficient way to do this, but this might work for you:

| streamstats count as start reset_on_change=true by status

| where start=1

| streamstats count(eval(status=="FAIL")) as fails by status

| eval fails=if(fails=0,null(),fails)

| filldown fails

| stats values(*) as * by fails
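
For anyone who wants to try this without real data, here is a minimal sketch that feeds the FAIL/PASS pattern from the question through the same pipeline. The makeresults scaffolding, the field n (an event sequence number), and the one-second spacing are assumptions made for the demo, not part of the original data.

```spl
| makeresults count=9
| streamstats count as n
| eval _time=_time + n
| eval status=mvindex(split("FAIL,PASS,FAIL,PASS,PASS,FAIL,FAIL,FAIL,PASS", ","), n - 1)
| sort 0 _time
| streamstats count as start reset_on_change=true by status
| where start=1
| streamstats count(eval(status=="FAIL")) as fails by status
| eval fails=if(fails=0,null(),fails)
| filldown fails
| stats values(*) as * by fails
```

Going by the requirement in the question, the pairs to look for with this input are events 1 and 2, 3 and 4, and 6 and 9. The repeated PASS at event 5 and the repeated FAILs at events 7 and 8 are the ones where start=1 is meant to drop.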

Thanks a lot. This is working, but one pair has the wrong data: the FAIL entry shows the data of the PASS URL.

I am not sure I understand. Is it just that the data from the two events is sometimes in the "wrong" order? If so, use list instead of values:

| stats list(*) as * by fails
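
The difference between the two functions: values() returns the distinct values of a field in lexicographical order, while list() keeps values in the order the events arrive. So with values(), a multivalue URL field can end up in a different order from the status field next to it. A small sketch with two made-up events (the example.test URLs and the constant fails=1 are placeholders, not data from this thread):

```spl
| makeresults count=2
| streamstats count as n
| eval status=if(n=1, "FAIL", "PASS")
| eval URL=if(n=1, "http://example.test/zeta", "http://example.test/alpha")
| eval fails=1
| stats values(URL) as url_values list(URL) as url_list list(status) as status_list by fails
```

Here the FAIL event carries the zeta URL, so url_list lines up with status_list, whereas url_values is sorted and puts the alpha URL first.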

| where status!="ABORTED"

| streamstats count as start reset_on_change=true by status

| where start=1

| streamstats count(eval(status=="FAILURE")) as fails by status

| eval fails=if(fails=0,null(),fails)

| filldown fails

| stats list(*) as * by fails

| where mvcount(status) = 2

Hi @ITWhisperer, I have many URLs, so I want to get these details for every URL in the data. I tried the following, but it is not grouping events for the same URL together:

| where status!="ABORTED"

| streamstats count as start reset_on_change=true by status URL

| where start=1

| streamstats count(eval(status=="FAILURE")) as fails by status URL

| eval fails=if(fails=0,null(),fails)

| filldown fails

| stats list(*) as * by fails URL

| where mvcount(status) = 2

Can you please help me find what is wrong with this query?
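
A possible direction, offered only as a sketch: assuming the goal is to pair each URL's first FAILURE of a run with the next SUCCESS for that same URL, two things in the query above look likely to cause trouble. The events are not sorted by URL, so reset_on_change can fire whenever consecutive events belong to different URLs, and filldown has no by clause, so a value can carry over from one URL to the next. The variant below sorts by URL first and counts FAILUREs per URL without splitting by status, which makes filldown unnecessary. The sample statuses and the URLs a and b are invented for the demo.

```spl
| makeresults count=8
| streamstats count as n
| eval _time=_time + n
| eval status=mvindex(split("FAILURE,FAILURE,SUCCESS,FAILURE,SUCCESS,SUCCESS,ABORTED,SUCCESS", ","), n - 1)
| eval URL=mvindex(split("a,b,a,a,b,a,b,b", ","), n - 1)
| where status!="ABORTED"
| sort 0 URL _time
| streamstats count as start reset_on_change=true by URL status
| where start=1
| streamstats count(eval(status=="FAILURE")) as fails by URL
| where fails>0
| stats list(*) as * by URL fails
| where mvcount(status) = 2
```

With real data, the first five lines would be replaced by the base search. If the FAILURE and SUCCESS events do not share the same URL value, this grouping would not apply as written.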

I don't understand what it is you are trying to do with these changes. Can you raise a new question explaining this new requirement?

Thank you so much!

Can you please help me, @ITWhisperer?