Staggering cron alerts?

After nearly doubling the number of scheduled (cron) alerts in my Splunk environment, I'm starting to see some performance issues.

The alerts run every five minutes and look at the previous five minutes' worth of data.

earliest = -5m@m

latest = now

cron expression: */5 * * * *

It has been recommended to me to stagger the scheduled alerts so that, for example, some are running at 12:01, others run at 12:02, others run at 12:03, etc.

Is this possible? I'm having difficulty finding an option on the 'edit alert' page to fine-tune the cron schedule so that I can set an actual start time.

Also, the advice I got is perplexing, because I added the alerts manually. I would assume they are already staggered, because I didn’t wait until exactly 12:00 or 12:05 to hit the submit button when creating each alert. Is that an incorrect assumption?

You should just set Schedule window to Auto and leave it at */5 and Splunk will do the staggering for you.
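On the last part of the question: a cron schedule is matched against the wall clock, not against the moment the alert was saved, so alerts that share `*/5 * * * *` all fire on the same minutes no matter when each one was created. A minimal Python sketch of that behaviour follows; `next_run` is a hypothetical helper written for illustration, not part of Splunk.

```python
from datetime import datetime, timedelta


def next_run(saved_at: datetime, step: int = 5) -> datetime:
    """Return the first time after `saved_at` that matches a '*/step' minute field.

    Hypothetical helper: it only models the minute field of '*/step * * * *'.
    """
    # Move to the start of the next whole minute, then walk forward until the
    # minute is a multiple of `step`.
    candidate = saved_at.replace(second=0, microsecond=0) + timedelta(minutes=1)
    while candidate.minute % step != 0:
        candidate += timedelta(minutes=1)
    return candidate


# Two alerts saved at different moments still share the same first run time.
first = next_run(datetime(2024, 1, 1, 12, 1, 17))
second = next_run(datetime(2024, 1, 1, 12, 3, 42))
print(first, second, first == second)
```

Under this model, saving the alerts at different times does not spread them out; only a different cron expression or a schedule window does.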

Hello there,

First, I recommend that if the alert runs every 5 minutes, you have it search from 6 or 7 minutes ago until 1 or 2 minutes ago, or go back even further, from 15 to 10 minutes ago. That will give you better search performance than searching up to time=now.
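To make the delayed window concrete, here is a small Python sketch of a five-minute window that ends two minutes before the run time, roughly what `earliest = -7m@m` and `latest = -2m@m` would express. The function name and the two-minute lag are assumptions chosen for illustration; the right lag depends on how late your data usually arrives.

```python
from datetime import datetime, timedelta


def lagged_window(run_time: datetime, span_minutes: int = 5, lag_minutes: int = 2):
    """Return (earliest, latest) for a search that trails the run time by a lag.

    Hypothetical helper: each bound is offset from the run time and then
    snapped down to the minute, like a relative time modifier ending in '@m'.
    """
    def snap_to_minute(moment: datetime) -> datetime:
        return moment.replace(second=0, microsecond=0)

    latest = snap_to_minute(run_time - timedelta(minutes=lag_minutes))
    earliest = snap_to_minute(run_time - timedelta(minutes=lag_minutes + span_minutes))
    return earliest, latest


# Consecutive runs five minutes apart cover adjacent windows with no gap or overlap.
run_a = lagged_window(datetime(2024, 1, 1, 12, 5, 30))
run_b = lagged_window(datetime(2024, 1, 1, 12, 10, 30))
print(run_a)
print(run_b)
```

Because the window length still equals the schedule interval, each event is examined exactly once, just a couple of minutes later than with `latest = now`.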

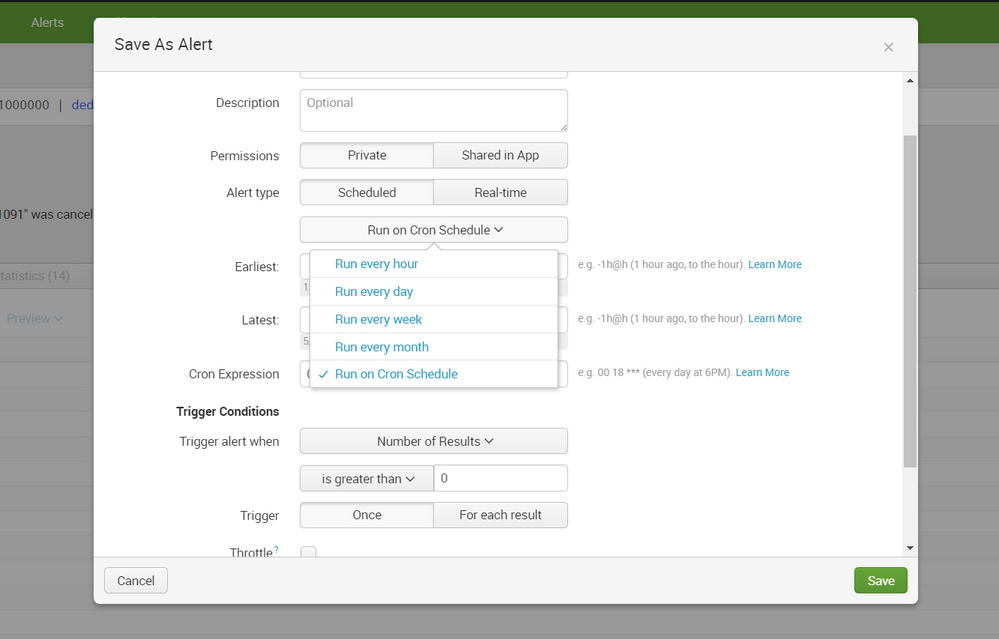

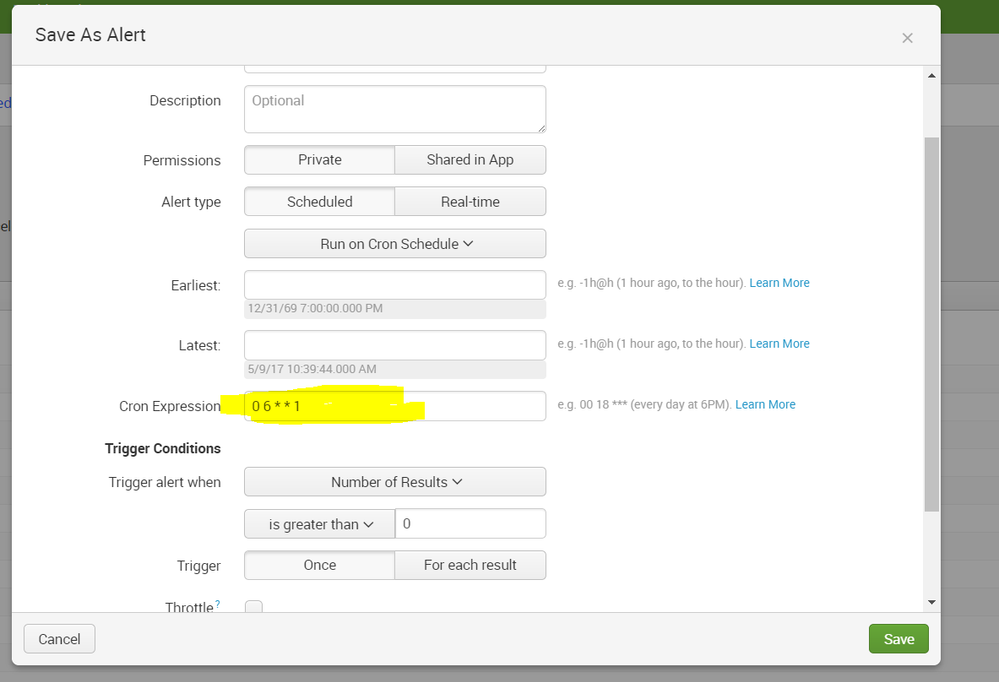

The screenshots above show how to manually edit the cron schedule to whichever expression you would like in the Save Alert pop-up.

Also, in answer to the OP's question about staggering the times...

cron expression: 1/5 * * * * ... every 5 minutes starting at 1 minute after the hour

cron expression: 2/5 * * * * ... every 5 minutes starting at 2 minutes after the hour

cron expression: 3/5 * * * * ... every 5 minutes starting at 3 minutes after the hour
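As a rough check of what those minute fields match, the Python sketch below expands a cron minute field into the minutes it fires on and hands out offsets to a list of alerts round-robin. Both function names and the alert names are hypothetical. The sketch reads `N/5` as `N-59/5`, which matches the descriptions above; the range spelling is the one most cron implementations accept, so it is what the generator emits, on the assumption that your Splunk version accepts it too.

```python
def expand_minute_field(field: str) -> list[int]:
    """Expand a cron minute field (e.g. '*/5', '1-59/5', '1/5', '0,30') to minutes."""
    minutes = set()
    for part in field.split(","):
        base, _, step_text = part.partition("/")
        step = int(step_text) if step_text else 1
        if base == "*":
            start, end = 0, 59
        elif "-" in base:
            low, high = base.split("-")
            start, end = int(low), int(high)
        else:
            start = int(base)
            # Assumption: 'N/step' means 'N-59/step'; a bare 'N' is one minute.
            end = 59 if step_text else start
        minutes.update(range(start, end + 1, step))
    return sorted(minutes)


def staggered_schedules(alert_names: list[str], period: int = 5) -> dict[str, str]:
    """Assign each alert a minute offset in turn and build its cron expression."""
    return {
        name: f"{index % period}-59/{period} * * * *"
        for index, name in enumerate(alert_names)
    }


print(expand_minute_field("*/5"))
print(expand_minute_field("1/5"))
print(staggered_schedules(["disk_full", "login_failures", "queue_depth"]))
```

With five offsets available, roughly one fifth of the alerts start in any given minute instead of all of them starting together.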

Would it be something like

1/5 * * * *

or just

1/5

Sorry, I am not very experienced with cron or Splunk.

This tool explains it better than I do:

https://crontab.guru/

Thank you.

First, thanks for your response.

You don't by chance know of any documentation that explains why avoiding the 'now' option is better for performance, do you? I saw that 'Alert scheduling tips' in the docs says that delaying the search helps ensure you capture all the results, but it doesn't mention performance. I'm curious to know more.