Adding custom data from file

Hi all.

I am ingesting data into Splunk Enterprise from a file. The file contains a lot of information, and I would like each event to start at a ##start_string line and end on the line before the next ##end_string.

Within these blocks there are fields of the form ##key = value.

Here is an example of the file:

…..

##start_string

##Field = 1

##Field2 = 12

##Field3 = 1

##Field4 =

##end_string

.......

##start_string

##Field = 22

##Field2 = 12

##Field3 = field_value

##Field4 =

##Field8 = 1

##Field7 = 12

##Field6 = 1

##Field5 =

##end_string

……

I have tried to create this sourcetype (with different regular expressions), but it creates only one event containing all the lines:

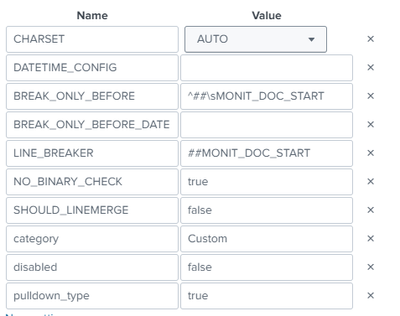

DATETIME_CONFIG =

LINE_BREAKER = ([\n\r]+)##start_string

##LINE_BREAKER = ([\n\r]+##start_string\s+(?<block>.*?)\s+## end_string

NO_BINARY_CHECK = true

SHOULD_LINEMERGE = true

category = Custom

description = Format custom logs

pulldown_type = 1

disabled = false

How should I approach this case?

Any ideas or help would be welcome.

Thanks in advance.

I recommend setting SHOULD_LINEMERGE to false so that Splunk does not try to re-combine your events together.

I tried it, but it didn't work.

Splunk does not create events from the information between the delimiters:

## MONIT_DOC_START .... ..... ## MONIT_DOC_END

Any ideas?

I have also tried this (unsuccessfully):

BR

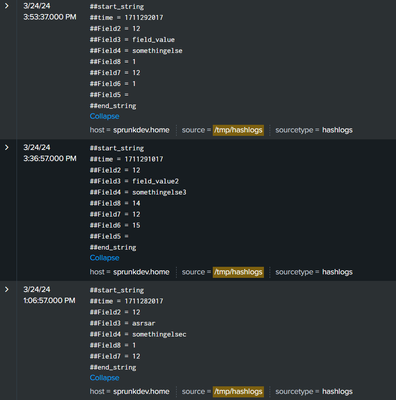

It should work. Here is how I have it set up:

log sample: (at /tmp/hashlogs)

##start_string

##time = 1711292017

##Field2 = 12

##Field3 = field_value

##Field4 = somethingelse

##Field8 = 1

##Field7 = 12

##Field6 = 1

##Field5 =

##end_string

##start_string

##time = 1711291017

##Field2 = 12

##Field3 = field_value2

##Field4 = somethingelse3

##Field8 = 14

##Field7 = 12

##Field6 = 15

##Field5 =

##end_string

##start_string

##time = 1711282017

##Field2 = 12

##Field3 = asrsar

##Field4 = somethingelsec

##Field8 = 1

##Field7 = 12

##end_string

inputs.conf (on forwarder machine)

[monitor:///tmp/hashlogs]

index=main

sourcetype=hashlogs

props.conf (on indexer machine)

[hashlogs]

SHOULD_LINEMERGE = false

LINE_BREAKER = ([\n\r]+)##start_string

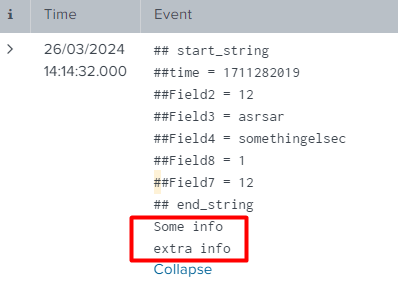

Result: (search is index=* sourcetype=hashlogs)

Thank you @marnall

However, my log has some lines between each event's end marker and the next event's start marker, and with those lines something goes wrong:

Some info

extra info

##start_string

##time = 1711292017

##Field2 = 12

##Field3 = field_value

##Field4 = somethingelse

##Field8 = 1

##Field7 = 12

##Field6 = 1

##Field5 =

##end_string

Some info

more info

extra info

##start_string

##time = 1711291017

##Field2 = 12

##Field3 = field_value2

##Field4 = somethingelse3

##Field8 = 14

##Field7 = 12

##Field6 = 15

##Field5 =

##end_string

SOme info

more info

info

extra info

##start_string

##time = 1711282017

##Field2 = 12

##Field3 = asrsar

##Field4 = somethingelsec

##Field8 = 1

##Field7 = 12

##end_string

Some info

extra info

Any idea how to delimit events between the ##start_string and ##end_string markers?

BR

JAR

It would have been nice to get the real log format up front, not after the first version had been resolved!

Do all valid log rows start with ##? If so, you should add a transforms.conf that drops the other lines. If there is no way to recognise them without looking at ##start_string and ##end_string, then you will probably have to write some preprocessing or your own modular input. Splunk's normal input processing handles those lines one by one; it cannot keep track of other lines or of whether it is currently inside a block.

Thank you very much for the clarification.

Yes, valid rows start with ##. And each event is what is inside each ##start_string and ##end_string block.

From the UI, is there any way to do the first step and remove the rows that do not start with ##?

BR

JAR

Then I suggest you use transforms.conf and send those lines to the nullQueue (Splunk's equivalent of /dev/null). There are quite a few examples on the community site and in the docs. See e.g. https://community.splunk.com/t5/Getting-Data-In/sending-specific-events-to-nullqueue-using-props-amp... Just replace the REGEX there to match your line, or the beginning of your line.

SEDCMD does almost the same thing, but it only clears the line; it does not remove it. In other words, an "empty" line stays in your event where the cleared text was.
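
The nullQueue routing mentioned above could look roughly like the following. This is a minimal, untested sketch: the transform name `drop_unhashed` is a placeholder chosen for illustration, and `hashlogs` is the sourcetype name used earlier in the thread. These are index-time settings, so they would go in props.conf and transforms.conf on the indexer (or a heavy forwarder).

```ini
# props.conf (sketch; assumes the sourcetype is named hashlogs)
[hashlogs]
# "drop_unhashed" is a placeholder name that must match the transforms.conf stanza
TRANSFORMS-drop_unhashed = drop_unhashed

# transforms.conf
[drop_unhashed]
# Match events whose raw text does not begin with two hashes
REGEX = ^(?!##)
# Route matching events to the nullQueue, i.e. discard them before indexing
DEST_KEY = queue
FORMAT = nullQueue
```

Note that this discards whole events, so it only removes a stray line when that line has been broken into an event of its own.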

The difference is that with SEDCMD you can "blank" part of a multiline event. If you send to nullQueue, you discard the whole event.

Hi .

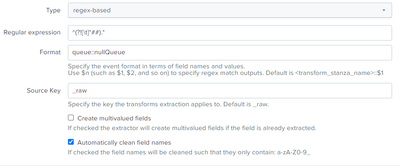

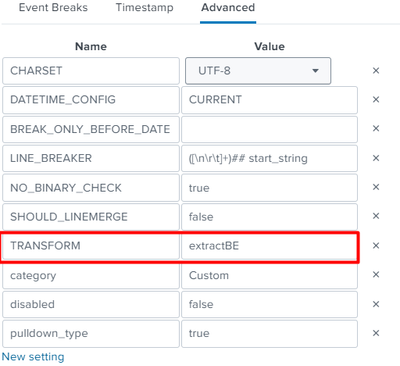

Trying with:

Field transformations:

And adding them to sourcetype:

But does not work

is there anything wrong?

Thank you all!!

BR

You can use SEDCMD to remove all lines not beginning with two hashes.

Something like:

SEDCMD-remove-unhashsed = s/^([^#]|#[^#]).*$//

(I haven't tested it, though; it might need some tweaking.)
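
One possible tweak, offered as an untested sketch: under PCRE defaults, `^` and `$` anchor only at the start and end of the whole event, so on a multiline event the expression above may not touch lines in the middle. The variant below assumes the sourcetype is named `hashlogs` and reuses the LINE_BREAKER from earlier in the thread; the class name `remove-unhashed` is a placeholder. It adds the `(?m)` multiline modifier and the `g` flag so that every line not starting with ## is blanked.

```ini
# props.conf (sketch, untested; sourcetype and LINE_BREAKER taken from earlier in the thread)
[hashlogs]
SHOULD_LINEMERGE = false
LINE_BREAKER = ([\n\r]+)##start_string
# (?m) lets ^ and $ match at every line inside the event; the g flag replaces every match
SEDCMD-remove-unhashed = s/(?m)^(?!##).*$//g
```

As noted earlier in the thread, this blanks the unwanted lines rather than deleting them, so empty lines remain in the event.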
