- Splunk Answers

- :

- Using Splunk

- :

- Splunk Search

- :

- Re: search with joins and append takes too long

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark Topic

- Subscribe to Topic

- Mute Topic

- Printer Friendly Page

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Goal is to determine, from specific vulnerabilities found in scans, the percentage that have been ‘fixed’, meaning they are not found in the latest scans. So first we get the vulns for all time, then check the ones related to the latest scans. So alltime – latest = fixed. And fixed / alltime * 100 = percentage fixed.

The search/query takes way too long. How to speed it up?

Here is the approach.

sourcetype=alpha

| eval comment=”the above gets the results of the scans All Time”

| eval comment=”cve is multivalue field, so split it up so we can lookup a specific CVE”

| makemv delim=";" cve | mvexpand cve | rename cve AS CVE

| eval comment=”now just look at the ones we care about”

| join CVE [|inputlookup PriorityCVE.csv]

| eval comment=”label these as ‘alltime’”

| eval ReportKey="alltime"

| eval comment=”now get the scans representing the latest info”

| append [search sourcetype=alpha | makemv delim=";" cve | mvexpand cve | rename cve AS CVE

| join CVE [|inputlookup PriorityCVE_test.csv]

| eval comment=”last_scan_finished is in terms of date not granularity of _time”

| convert timeformat="%F" ctime(_time) AS CurScan

| eval comment=”the beta source has events over time with last_scan_finished per asset”

| join asset_id, CurScan [search sourcetype=beta | dedup asset_id last_scan_finished

| fields asset_id last_scan_finished | sort 0 - last_scan_finished | dedup asset_id

| convert timeformat="%Y-%m-%d %H:%M:%S.%Q" mktime(last_scan_finished) AS LastScanTmp

| convert timeformat="%F" ctime(LastScanTmp) AS CurScan | fields asset_id CurScan]

| eval comment=”label those that match the last_scan_finished date as ‘nowtime’”

| eval ReportKey="nowtime”

| table asset_id CVE ReportKey

| eval comment=“get counts”

| stats values(ReportKey) as ReportKey by asset_id CVE

| eval comment=”if you only have the alltime label, you have been fixed”

| eval status=case(mvcount(ReportKey)=2, "Persistent", ReportKey="alltime", "Fixed", true(), "New")

| eval comment=”(since all scans are in alltime, there should be no ‘new’)”

| eval comment=”need to get the counts into variables for the equation”

| stats count AS stots BY status | fields stots | mvcombine delim="," stots

| nomv stots | rex field=stots "(?<tot_fix>.),(?<tot_persist>.)"

| eval comment=”now do the calculation”

| eval progress=(tot_fix / (tot_fix + tot_persist)) * 100

| table progress

I also tried an approach that put the last_scan_finished per asset in a lookup table, avoiding the redundant search, but that did not speed it up much (and would introduce the need to keep updating a lookup table).

Ideas?

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I'm not going to attempt to follow a wall of search without knowing what your data looks like or what the job inspector output is, so here are some general pointers after skimming over:

- use the

commentmacro instead ofeval comment="...", should see a big drop inevaltime spent in the job inspector. Read http://docs.splunk.com/Documentation/Splunk/7.0.1/Search/Addcommentstosearches - don't use

| join field [inputlookup foo.csv], use| lookup foo.csv field - don't use

sourcetype=A | ... | append [search sourcetype=A], you're just loading the same data twice. Read https://answers.splunk.com/answers/129424/how-to-compare-fields-over-multiple-sourcetypes-without-jo... - restrict fields before

mvexpand

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

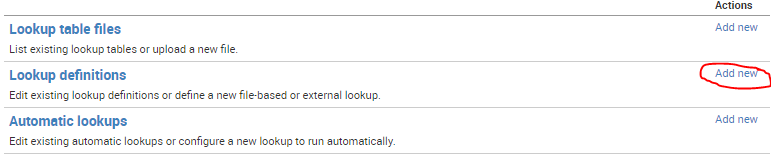

I'm adding this as an answer because I can't add a screenshot to a comment - but this is in response to your thought that you couldn't configure a lookup without file access to transforms.conf. You actually can achieve the same thing through the UI. Go to Settings > Lookups, and that will take you to this menu:

Under Add New, you'll find the settings page to configure your existing CSV as a full lookup, allowing you to use the lookup command.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks for uploading the screenshot again!

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@elliotproebstel, sorry I accidentally deleted the screenshot while trying to make it show 😞

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I'm not going to attempt to follow a wall of search without knowing what your data looks like or what the job inspector output is, so here are some general pointers after skimming over:

- use the

commentmacro instead ofeval comment="...", should see a big drop inevaltime spent in the job inspector. Read http://docs.splunk.com/Documentation/Splunk/7.0.1/Search/Addcommentstosearches - don't use

| join field [inputlookup foo.csv], use| lookup foo.csv field - don't use

sourcetype=A | ... | append [search sourcetype=A], you're just loading the same data twice. Read https://answers.splunk.com/answers/129424/how-to-compare-fields-over-multiple-sourcetypes-without-jo... - restrict fields before

mvexpand

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Yeah, lookup doesn't filter on its own, but you can filter based on its output fields.

Additionally, if you extract your cve field as multivalue right away, you can automatically apply the lookup and filter based on a lookup output field in the generating search - before even the mvexpand.

Alternatively, if your lookup is a strong restriction (only few events match the lookup), you could consider this pattern: sourcetype=alpha [inputlookup PriorityCVE.csv | rename CVE as cve]

That will only load events that have a matching cve value, also requires extracting the cve field as multivalue right away.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I believe lookup does not filter out events, unless you use a where afterwards. And it is only for the lookup table (not the subsearch), and the table is small, so I think not much gain there.

I was able to eliminate the append search, which greatly sped the thing up, and that is somewhat related to the 129424 answer linked. I restricted fields before the mvexpand. I also changed the end calculation to account for possible zeroes (lack of a status type).

Here is the latest (final?) version with some abbreviation.

sourcetype=alpha | fields _time cve asset_id

| makemv delim=";" cve | mvexpand cve

| rename cve AS CVE | join CVE [|inputlookup PriorityCVE.csv]

| convert timeformat="%F" ctime(_time) AS CurScan

| dedup asset_id CVE CurScan

| join asset_id, CurScan type=outer [search sourcetype=beta|... obtain last_scan_finished date AS CurScan

| eval vulnstat="nowtime" | fields asset_id CurScan vulnstat]

| eval ReportKey=if(isnull(vulnstat),"alltime","nowtime")

| table asset_id CVE CurScan ReportKey

| stats values(ReportKey) as ReportKey, values(CVE) AS CVEs by asset_id CVE

| eval status=case(mvcount(ReportKey)=2, "Persistent", ReportKey="alltime", "Fixed", true(), "New")

| stats count(eval(status="Fixed")) AS tFixed, count(eval(status="New")) AS tNew, count(eval(status="Persistent")) AS tPersist

| eval progress=(tFixed / (tFixed + tNew + tPersist)) * 100

| table progress

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

The macro takes zero computational effort per event because it's removed before the search is launched.

The macro can be placed virtually anywhere, not just between commands.

The macro is the documented approach, easing transition between environments or users.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I just added the eval comment thing for this posting, I don't actually have it in the real search. But will keep the macro thing in mind for future posts.

When I read about "lookup", it said the table name had to be in transforms.conf, which I don't have access to.

Will take a look at the link about multiple sourcetypes, but I have read plenty of search-compare type articles and still haven't found the magic.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

or

| rename COMMENT as "since COMMENT doesn't exist, this takes almost no time"