Join the Conversation

- Find Answers

- :

- Using Splunk

- :

- Splunk Search

- :

- Using CIDR in a lookup table

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark Topic

- Subscribe to Topic

- Mute Topic

- Printer Friendly Page

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Fellow Splunkers

I am building a query where I want to report on location based on source IP address. For example within our internal network the subnet 10.50.6.0/24 corresponds to Sydney, whereas 10.50.7.0/24 corresponds to Melbourne. Thus far I have been able to prove my approach using the cidrmatch function as follows:

eval location=case(cidrmatch("10.50.6.0/24",src_ip),"Sydney", cidrmatch("10.50.7.0/24",src_ip),"Melbourne")

Using a case statement doesn't scale when I have hundreds subnets spread across Australia and New Zealand. I was thinking of having a lookup table of the following format:

Subnet,Location

10.50.6.0/24,Sydney

10.50.7.0/24,Melbourne

My problem is that I have not been able to find a way to perform the appropriate lookup.

I have the following questions:

- Can any one suggest a way to execute CIDR-based query against a lookup table?

- Is there an alternative/better solution to the above that will allow me to match an internal source IP to location of my choosing?

Many thanks

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I realize that my answer may come a bit late, but you could do it through lookups. Perhaps these options were not available at the time of your posting.

Create a CSV lookup table with your subnets (in my example I've used a different type of classification for identifying potentially bad site users). We'll call this file knownips.csv and put it in /opt/splunk/etc/system/lookups.

clientip, honesty, comment

170.192.178.10/32, BAD, open_proxy

63.236.6.247/32, BAD, open_proxy

177.23.21.223/32, BAD, TOR_exit_node

27.35.0.0/16, OK, friendly_partner

27.96.0.0/16, BAD, hostile_competitor

195.69.252.0/24, BAD, suspicious_ISP

in transforms.conf

[checkip]

filename = knownips.csv

max_matches = 1

min_matches = 1

default_match = OK

match_type = CIDR(clientip)

in props.conf

[access_combined]

LOOKUP-check = checkip clientip OUTPUT honesty comment

This lets you run searches like;

sourcetype="access_combined" honesty=BAD | stats count by clientip, comment

with results like;

clientip comment count

170.192.178.10 open_proxy 78

177.23.21.223 TOR_exit_node 12

189.222.1.22 TOR_exit_node 26

195.69.252.22 suspicious_ISP 138

Hope this helps,

Kristian

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Here is a detailed blog about how to do CIDR based lookups using the a KVStore: Splunk Spotlight - The Lookup Command: https://hodgkins.io/splunk-spotlight-the-lookup-command

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I realize that my answer may come a bit late, but you could do it through lookups. Perhaps these options were not available at the time of your posting.

Create a CSV lookup table with your subnets (in my example I've used a different type of classification for identifying potentially bad site users). We'll call this file knownips.csv and put it in /opt/splunk/etc/system/lookups.

clientip, honesty, comment

170.192.178.10/32, BAD, open_proxy

63.236.6.247/32, BAD, open_proxy

177.23.21.223/32, BAD, TOR_exit_node

27.35.0.0/16, OK, friendly_partner

27.96.0.0/16, BAD, hostile_competitor

195.69.252.0/24, BAD, suspicious_ISP

in transforms.conf

[checkip]

filename = knownips.csv

max_matches = 1

min_matches = 1

default_match = OK

match_type = CIDR(clientip)

in props.conf

[access_combined]

LOOKUP-check = checkip clientip OUTPUT honesty comment

This lets you run searches like;

sourcetype="access_combined" honesty=BAD | stats count by clientip, comment

with results like;

clientip comment count

170.192.178.10 open_proxy 78

177.23.21.223 TOR_exit_node 12

189.222.1.22 TOR_exit_node 26

195.69.252.22 suspicious_ISP 138

Hope this helps,

Kristian

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Since recent version, you can now do this from the WEBUI, it may worth an edit ?

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

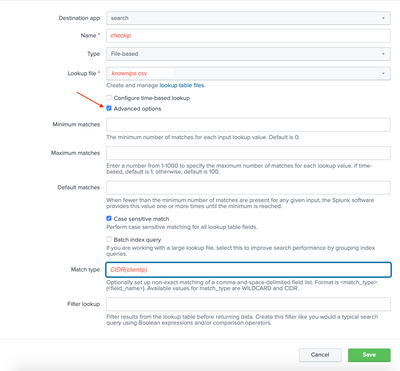

Yes, I agree that this is such a useful solution. The following are screenshots to replicate the exact effect as kristian_kolb's original intent:

0. Edit file knownips.csv as desired

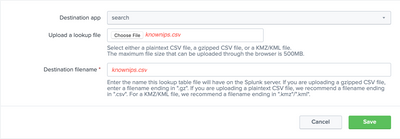

- Upload CSV file in "Lookups -> Lookup table files -> Add new". (Example file name: knownips.csv)

- Define lookup in "Looksup -> Lookup definitions -> Add new". Select the file you uploaded, e.g., knownips.csv. Check "Advanced options", scroll down to "Match type", enter CIDR(clientip), clientip being the field name used to match input. (Example lookup name: checkip.)

- Add automatic lookup in "Lookups -> Automatic lookups -> Add new". Select the lookup name you give above (the prompt is "Lookup table"), then type clientip as the first entry in "Lookup input fields", then type clientip after equal sign (=). Here, the first box is the field used for comparison in the table, the second box is the field used for lookup in input. (Example automatic lookup name: check; example sourcetype: access_combined)

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Such a useful option, I'm wondering why isn't it described more evidently in documentation of lookup tables and why isn't there an option in web configuration of lookups to select matching type = */CIDR?

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

http://docs.splunk.com/Documentation/Splunk/4.3.3/SearchReference/Lookup

Could this be what you want?

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Is it possible to add the "checkip" lookups from a web search?

something like: index="html" | lookup ipcheck ....

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

As a matter of personal preference, I don't like using event types the way Stephen suggests, especially if there are lots of subnets.

Try this...

- Download this this script (hackish but functional) and put it in apps/search/bin:

http://www.southerington.com/redir.php?id=7

- Populate a CSV file with 3 columns, like this:

base,length,subnet_name 127.0.0.0,8,"Loopback" 10.50.6.0,24,"Name of Subnet Six" 10.50.7.0,24,"Name of Subnet Seven" ...Sort the CSV by base, and then in descending order by length so that the most precise match will come first. If you put the file in search/lookups, you can still use other searches to generate it, but be aware there are possible race conditions.

- Put the following in transforms.conf:

[subnetlookup] external_cmd = subnetlookup.py external_type = python fields_list = ip, subnet_name - Run your search, e.g:

src_ip=* | head 20 | lookup subnetlookup ip as src_ip OUTPUT subnet_name

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

There is a bug in the script as provided by southeringtonp. In the load_subnet_list function, the reverse sort needs to be on the second element of the list. I fixed this by adding "import operator" to the top of the file and replacing subnet.reverse() with subnets.sort(key=operator.itemgetter(1),reverse=True)

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

As a heads-up to others, I ran into the case where this worked fine for me when I included "| head n" in the stream, but not when that head was taken out. The issue is that I had installed the script on a search head, but the search was running on an indexer. Using "src_ip=* | localop | lookup subnetlookup [...]" worked -- localop forced the lookup to run on the search head. And thanks to the IRC folks on EFNet (via my local splunk admin) for the answer.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

For IP's not in the subnets.csv file, I can think of two approaches. One is to put "0.0.0.0,0,Unknown" as the last row in your file and then filter on subnet_name=unknown. The database guy in me recoils at that. The other is to try isnull(subnet_name).

To your first question, shouldn't it be src_ip=* ? The asterisk is missing in your post above.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

It's not clear to me how this works. I was able to configure everything to apparently work though.

When running this search:

host="servername*" AND src_ip=* | lookup subnetlookup ip as src_ip OUTPUT subnet_name

It only returns 196 matches, yet when I run a search on just one of the three subnets that I have in the subnets.csv file like, "123.123..", it returns thousands of results. why is it filtering it down to only a couple hundred?

Secondly, how do I do a search for IPs NOT in the subnets.csv file? I've tried adding "NOT" to various parts of the search string and it doesn't work.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

As gkanapathy said, this is not possible with CSV lookup tables, but is feasible with external lookups. That said, I think that eventtypes are probably the right knowledge primitive to use with version 4.1 (and 4.2, when it comes out) to solve this problem. For example, in our internal web analytics app, we use eventtypes to "classify" events as being from bots, browsers, internal IPs and in turn pageviews are driven by eventtypes.

You can configure eventtypes.conf to enumerate the regions like:

[location-australia-sydney]

search = Subnet=10.50.6.0/24

[location-australia-melbourne]

search = Subnet=10.50.7.0/24

To retrieve, you can search for eventtype=location-australia-sydney. If you want all of Australia, you can search for eventtype=location-australia-*. Since these are eventtypes, events are tagged with these for later filtering or reporting.

If you just want to report on these locations, you can add to your search:

... | eval location = mvfilter(eventtype LIKE "location-%") | ...

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Probably the searches for the eventtypes should be e.g., src_ip=10.50.6.0/24, not Subnet=..., to match against the field that is present in the original event.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

No. CSV lookup tables in the current version (4.1.4) must have an exact string match (optionally case-insensitive) to the field.

But you could do this using a scripted lookup instead that executed the logic above. You can take a look at $SPLUNK_HOME/etc/system/bin/external_lookup.py or here.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content