Sorting inquiry

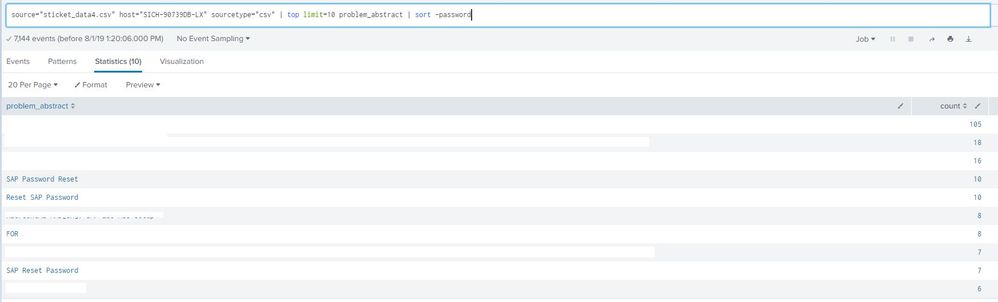

You don't have a field called password to sort on.

Try

| sort problem_abstract

You do that by normalizing the data.

... | eval problem_abstract=case(problem_abstract="SAP Password Reset", problem_abstract, problem_abstract="Reset SAP Password", "SAP Password Reset", problem_abstract="SAP Reset Password", "SAP Password Reset", 1=1, problem_abstract) | ...

but that means having a case entry for each possible problem.

Letting Splunk do that for you may work better, depending on your data.

... | cluster showcount=true countfield=count field=problem_abstract match=termset | sort - count | head 10 | table problem_abstract count

If this reply helps you, Karma would be appreciated.
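The drawback of the case approach above is one entry per wording. When the variants differ only in word order, one possible workaround is to reduce each abstract to an order-insensitive key before grouping. The Python sketch below only illustrates that idea outside Splunk; the function name `normalize_key` and the sample rows are hypothetical.

```python
from collections import Counter


def normalize_key(text: str) -> str:
    """Lowercase the text, split on whitespace, and sort the words
    so that word order no longer matters."""
    return " ".join(sorted(text.lower().split()))


# Hypothetical sample data: (problem_abstract, count)
rows = [
    ("SAP Password Reset", 10),
    ("Reset SAP Password", 20),
    ("SAP Reset Password", 20),
    ("Other", 100),
]

# Sum the counts of all abstracts that share the same normalized key.
totals = Counter()
for abstract, count in rows:
    totals[normalize_key(abstract)] += count

for key, total in totals.most_common():
    print(key, total)
```

This only merges variants that use exactly the same words; abstracts with extra words still need a case entry, a lookup, or clustering.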

@richgalloway That is exactly where I was going with the cluster command. In fact, if the patterns are not known, there are other options to try, such as TFIDF and NLP.

| makeresults
| fields - _time
| eval problem_abstract="SAP reset Password=10,reset SAP Password=20,Password reset SAP=20,Other=100,Something Else=50"
| makemv problem_abstract delim=","
| mvexpand problem_abstract
| makemv problem_abstract delim="="
| eval count=mvindex(problem_abstract,1),problem_abstract=mvindex(problem_abstract,0)
| table problem_abstract count
| cluster field=problem_abstract t=0.3
| fields - cluster_label

Play around with t to control how the clusters are formed, as your use case requires. Refer to the cluster command documentation.

| makeresults | eval message= "Happy Splunking!!!"

@richgalloway and @niketnilay - clustering is definitely an interesting option. It has to be termset or ngramset, though; termlist, which is the default for the 'match' parameter, will yield inferior results.

But there is a risk. I tested with 'reset sap password' and 'sap password reset', plus dummy text like 'i care' and 'i don't care'. It works well with termset and ngramset. But then I added a fourth phrase, 'please reset my sap password'. Now the game changes, and the clustering fails to yield proper results.

@chinkeeparco - please go ahead with the clustering as suggested by Rich and Niket. You will have to play around with the t value and the match option to see what suits you best.
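The effect of that fourth phrase can be illustrated with a simple set-overlap measure. The sketch below uses Jaccard similarity over lowercase word sets; that measure is an assumption chosen for illustration and is not claimed to be what the cluster command computes internally.

```python
def term_set(text: str) -> frozenset:
    """Return the set of lowercase, whitespace-separated words in the text."""
    return frozenset(text.lower().split())


def jaccard(a: str, b: str) -> float:
    """Jaccard similarity of two phrases: shared words / all distinct words."""
    set_a, set_b = term_set(a), term_set(b)
    union = set_a | set_b
    if not union:
        return 1.0  # two empty phrases are treated as identical
    return len(set_a & set_b) / len(union)


base = "sap password reset"
for phrase in ["reset sap password", "please reset my sap password", "i care"]:
    print(f"{phrase!r}: {jaccard(base, phrase):.2f}")
```

Reordered words keep the overlap at its maximum, while the two extra words in the longer phrase pull the score down, so whether it lands in the same group depends on where the threshold sits.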

I did explicitly mention match=termset in my answer as well as "depending on your data". You may have to combine the two approaches I offered - normalize some outliers then let cluster do the rest. Then again, some experimenting with various cluster options may yield acceptable results.

@richgalloway @niketnilay Thank you so much for your advice!! 🙂 It was really helpful!

Also, @Sukisen1981 thank you so much as well! 🙂

@Sukisen1981 Yes indeed, I mentioned TFIDF and NLP as things to try as well. But as @richgalloway has said, the solution should be chosen according to the use case.

- The simplest use case is where we know all possible groups of the field problem_abstract. The case statement provided by Rich can also be built from a lookup, where such static combinations can be stored and updated.

- The cluster command can do initial clustering based on strict or lenient pattern matching using the t option.

- ML is the solid use case for such free-form text pattern matching where we are not aware of the possible combinations of text: TFIDF or HashingVectorizer for feature extraction with less compute, and NLP for Natural Language Processing.
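To make the TFIDF option in the last bullet more concrete, here is a minimal pure-Python sketch of TF-IDF weighting followed by cosine similarity. It uses one common TF-IDF variant, tf * (ln(N/df) + 1), which is an assumption and not necessarily the formula any Splunk app uses; the sample abstracts and the function names `tfidf_vectors` and `cosine` are hypothetical.

```python
import math
from collections import Counter


def tfidf_vectors(docs):
    """Return one {term: weight} dict per document, using tf * (ln(N/df) + 1)."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    # Document frequency: in how many documents each term appears.
    df = Counter(term for tokens in tokenized for term in set(tokens))
    vectors = []
    for tokens in tokenized:
        tf = Counter(tokens)
        vectors.append({t: tf[t] * (math.log(n_docs / df[t]) + 1.0) for t in tf})
    return vectors


def cosine(u, v):
    """Cosine similarity between two sparse {term: weight} vectors."""
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


# Hypothetical abstracts for illustration only.
docs = ["SAP password reset", "please reset my SAP password", "printer not working"]
vecs = tfidf_vectors(docs)
print("reset vs. longer reset phrase:", cosine(vecs[0], vecs[1]))
print("reset vs. unrelated abstract:", cosine(vecs[0], vecs[2]))
```

The two password-reset abstracts score well above the unrelated one, and those scores are what a downstream clustering step would then group on.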
