Reduce the Expires of a job to 5 seconds

I have a dashboard that I need to refresh very often.

This creates a lot of search jobs on my Splunk install.

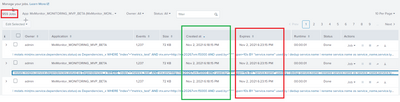

The first set of jobs runs, but Splunk keeps them for 5 minutes (see image).

How do I get the expiry down to 5 seconds?

I tried the following settings, but they are not working for me. Any ideas would be great, thanks.

default_save_ttl=5

ttl = 5

remote_ttl = 5

srtemp_dir_ttl = 5

cache_ttl=5


I don't think it is possible to set the job expiry to 5 seconds; by default it is either 10 minutes or 7 days.


Hi - I think that applies only to saved searches; I am looking at dashboards.


On a saved search you can set the TTL; however, the TTL may be changed based on any alert action.

The TTL within a dashboard would be the default TTL of ad hoc searches, which is normally 10 minutes.

That said, I'm unsure whether you can configure the TTL for a job created by a dashboard. You can likely hit the REST API to change the TTL once the search has run, or perhaps while it is running, but that isn't an elegant solution. For this idea I would use the dashboard's <done> handler if you cannot find a setting.
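As a rough sketch of the REST approach mentioned above: splunkd exposes a job control endpoint that accepts a `setttl` action. The management URL, the credentials, the helper function names, and the example sid below are all placeholders, and `-k` assumes a self-signed certificate on the management port; adjust these for your environment. A sid containing special characters may also need URL-encoding, which this sketch does not do.

```shell
#!/usr/bin/env bash
# Sketch only: shorten the TTL of a single search job via the REST API.
# SPLUNK_URL, SPLUNK_USER and SPLUNK_PASS are assumed placeholders.

SPLUNK_URL="${SPLUNK_URL:-https://localhost:8089}"

# Build the control endpoint URL for a given search ID (sid).
job_control_url() {
  printf '%s/services/search/jobs/%s/control' "$SPLUNK_URL" "$1"
}

# Ask splunkd to set the TTL (in seconds) of the job with the given sid.
set_job_ttl() {
  local sid="$1" ttl="$2"
  curl -sk -u "${SPLUNK_USER}:${SPLUNK_PASS}" \
    "$(job_control_url "$sid")" \
    -d action=setttl -d ttl="$ttl"
}

# Example call (hypothetical sid, not executed here):
# set_job_ttl "1700000000.123" 5
```

This only changes the job after it already exists, so something still has to supply the sid of each dashboard search, which is why it is less tidy than a configuration setting.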


Hi

At the moment we have a script that removes jobs once we go over 10,000.

However, Splunk still holds on to some jobs for a while, and I can't remove them.

cd $dispatch

count=`ls -lrt | wc -l`

#To get the Indexer machine name

host=`echo "$SPLUNK_HOME" | cut -d"/" -f2`

if [ $count -gt 10000 ]; then

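# -mindepth 1 keeps find from returning $dispatch itself; quoting protects paths with spaces

find "$dispatch" -mindepth 1 -maxdepth 1 -mmin +3 2>/dev/null | while read -r job; do if [ ! -e "$job/save" ] ; then rm -rfv "$job" ; fi ; done > /dev/null 2>&1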

find $dispatch -type d -empty -name alive.token -mmin +3 2>/dev/null | xargs -i rm -Rf {} > /dev/null 2>&1

find $splunkdir/var/run/splunk/ -type f -name "session-*" -mmin +3 2>/dev/null | xargs -i rm -Rf {} > /dev/null 2>&1

after_count=`ls -lrt | wc -l`

echo `date +"%Y-%m-%d %H:%M:%S"`"|$SPLUNK_HOME|$host|$count|$after_count|"

else