- How to prevent a user from running a high memory-u...

We've recently had users run searches that crashed our Splunk indexers. I'm looking for suggestions on how to (A) prevent a user from running a high-memory search and (B) understand what it is about this search that consumed so much memory.

We have 8 clustered indexers running CentOS and Splunk 6.5.3. Each one has 128 GB of RAM and a 24-disk RAID 10 array. During normal usage the indexers consume roughly 6 GB of memory. The following search consumed just under 120 GB of RAM on each indexer and crashed one of them when it ran out of swap space.

sourcetype="infoblox:dns" NOT (query="*akamai*" OR query="*qwest*" OR query="*google*" OR query="*bing*" OR query="*cloudfront*" OR query="*amazonaws.com" OR query="*microsoft*" OR query="*mcafee*" OR query="*deere*" OR query="*.arpa") | eval domain= mvindex(split(query,"."),-3) + "." + mvindex(split(query,"."),-2) + "." + mvindex(split(query,"."),-1) | stats count by domain | sort - count domain | table domain

The indicators I see which may have contributed to this are:

- Not specifying an index, though the sourcetype infoblox:dns belongs to only one index (not sure whether this matters)

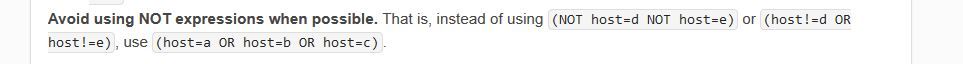

- Using NOT rather than AND (I recall seeing somewhere that NOT is more resource-intensive)

- Using wildcards in each NOT statement

- The EVAL statement

Is there a better way to formulate this search to reduce memory usage? What can I do in the future to prevent a user from repeating this and bringing down the system?

All right, the first thing to do is to look at that sourcetype and see what values are in the query field.

Then, take ONE of those query="blahblah" clauses and work out what comparisons a computer has to make to decide whether a record should be included. With ten wildcard clauses, the system is going to have to scan the entire record ten times, remembering all the places it might have to backtrack to.

Try this -

sourcetype="infoblox:dns"

| rex field=query "(?<rejectme>akamai|amazonaws\.com|bing|cloudfront|deere|google|mcafee|microsoft|qwest|\.arpa)"

| where isnull(rejectme)

| rename COMMENT as "Not sure whether the below is appropriate or not, without seeing sample data."

| eval query = split(query,".")

| eval domain= mvindex(query,-3) + "." + mvindex(query,-2) + "." + mvindex(query,-1)

| stats count by domain

| sort - count domain

| table domain

I suspect that there is a better way to determine the domain, but I'd need sample data to have a clue what it might be.
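For anyone who wants to check the domain logic away from Splunk, here is a rough Python sketch of what the eval / stats / sort steps are doing. It is only an illustration, not Splunk code: the function names are made up, and the choice to skip names with fewer than three labels is my assumption about how the eval behaves when mvindex has nothing to return.

```python
from collections import Counter
from typing import Iterable, List, Optional, Tuple


def last_three_labels(query: str) -> Optional[str]:
    """Mimic the eval: join the last three dot-separated labels of a DNS name."""
    labels = query.split(".")
    if len(labels) < 3:
        # Assumption: with fewer than three labels the SPL eval yields no
        # domain value, so the event would not be counted by "stats ... by domain".
        return None
    return ".".join(labels[-3:])


def count_by_domain(queries: Iterable[str]) -> List[Tuple[str, int]]:
    """Mimic 'stats count by domain | sort - count domain'."""
    counts = Counter()
    for query in queries:
        domain = last_three_labels(query)
        if domain is not None:
            counts[domain] += 1
    # Descending by count, then ascending by domain name for ties.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


if __name__ == "__main__":
    sample = ["x.www.example.com", "y.www.example.com", "mail.example.org", "example.com"]
    for domain, count in count_by_domain(sample):
        print(domain, count)
```

Note that taking the last three labels keeps the host part for names like www.example.com, which may or may not be what you want. That is part of why sample data would help.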

This seems to have done the trick. When I was testing yesterday, running the original search over the past hour crashed one of my indexers. With your suggestion, I was able to run the search over the past hour and memory usage on the indexers didn't go above 6%. I'm also not seeing my search among the high memory usage searches 🙂

I did make one tweak: I added (?i) to the regex so that it is case-insensitive.

| rex field=query "(?i)(?<rejectme>akamai|amazonaws\.com|bing|cloudfront|deere|google|mcafee|microsoft|qwest|\.arpa)"
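In case it helps anyone test the pattern before running it against the indexers, here is a small Python sketch of the same reject filter. It is a stand-in for the rex + where isnull() pair, not Splunk itself: the names REJECT and keep are hypothetical, and Python needs (?P<name>...) for a named group where the rex uses (?<name>...).

```python
import re

# Same alternation as the rex above, case-insensitive via (?i).
# One pass over the field checks all ten substrings at once.
REJECT = re.compile(
    r"(?i)(?P<rejectme>akamai|amazonaws\.com|bing|cloudfront|deere"
    r"|google|mcafee|microsoft|qwest|\.arpa)"
)


def keep(query: str) -> bool:
    """Return True when no reject term appears anywhere in the query value."""
    return REJECT.search(query) is None


if __name__ == "__main__":
    for name in ["www.example.com", "WWW.Google.com", "1.0.0.127.in-addr.arpa"]:
        print(name, "keep" if keep(name) else "reject")
```

One thing to be aware of: the pattern is not anchored, so amazonaws\.com and \.arpa match anywhere in the value, whereas the original query="*amazonaws.com" and query="*.arpa" clauses only matched at the end. Adding $ after those two alternatives should restore that behavior if it matters.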

Good job!

Normally I do a max_match on the rex, but in this case you don't care how many are matched - any one match results in rejecting the record.

Happy splunking!
