How to configure a time-based lookup - Temporal lookup?

I'm trying to configure a time-based lookup (temporal lookup) but it doesn't seem to be working as expected. Any advice would be great. Thanks! I'm using the Expiration field to configure time-based lookups.

1) I've tried with and without the _offset parameters.

2) Table and definitions Permissions are set to App.

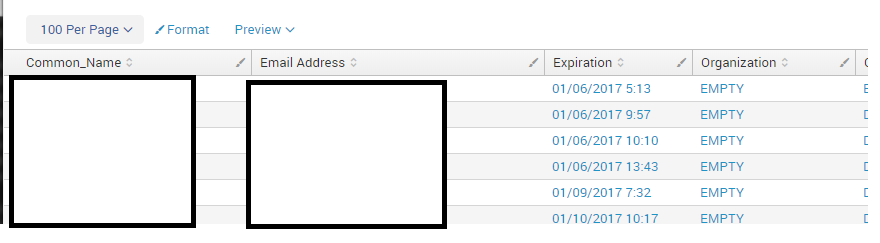

3) The definitions fields are: Expiration,Common_Name,Organization,Organization_Unit,Serial_Number,Email Address

4) The transforms.conf is on the SH

transforms.conf

[ICA_Definitions]

batch_index_query = 0

case_sensitive_match = 1

filename = 2018_ICA_Certs.csv

time_field = **Expiration**

time_format = %m/%d/%Y %H:%M

max_offset_secs = 10

min_offset_secs = 0


After looking at @rewritex's comments, I'm posting this new answer.

Lookups, even time-based lookups, don't (by default) let you filter results with the timepicker. Instead, they are intended for a use case like the following:

server_purposes.csv:

start_time,host,purpose

2018-02-01,host01,all-in-one

2018-02-02,host01,searchhead

2018-02-02,host02,indexer

2018-02-03,host02,ageout-indexer

2018-02-03,host03,cluster-master

2018-02-04,host04,indexer

2018-02-04,host05,indexer

2018-02-04,host06,indexer

2018-02-05,host02,monitoring-console

transforms.conf:

[server_purposes]

filename = server_purposes.csv

time_field = start_time

time_format = %Y-%m-%d

search:

| makeresults

| eval host="host02", _time=strptime("2018-02-04", "%Y-%m-%d")

| lookup server_purposes host

results:

host=host02 purpose=ageout-indexer
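To make the matching rule concrete, here is a minimal Python sketch of how a temporal lookup appears to choose a row for the example above. It is a model of the behavior, not Splunk's implementation: the function name `temporal_lookup`, the helper `to_epoch`, the inclusive offset bounds, and the "closest earlier entry wins" rule are assumptions based on the documented offset settings.

```python
from datetime import datetime, timezone

# Rows from the server_purposes.csv example: (start_time, host, purpose).
ROWS = [
    ("2018-02-01", "host01", "all-in-one"),
    ("2018-02-02", "host01", "searchhead"),
    ("2018-02-02", "host02", "indexer"),
    ("2018-02-03", "host02", "ageout-indexer"),
    ("2018-02-03", "host03", "cluster-master"),
    ("2018-02-05", "host02", "monitoring-console"),
]


def to_epoch(day):
    """Parse a %Y-%m-%d date as UTC and return epoch seconds."""
    return datetime.strptime(day, "%Y-%m-%d").replace(tzinfo=timezone.utc).timestamp()


def temporal_lookup(host, event_time, min_offset_secs=0, max_offset_secs=2000000000):
    """Hypothetical model: return the purpose of the closest earlier row for host."""
    best = None
    for start_time, row_host, purpose in ROWS:
        if row_host != host:
            continue
        # How far the event is AFTER the lookup entry (negative = entry is in the future).
        offset = event_time - to_epoch(start_time)
        # Assumption: both bounds are inclusive.
        if min_offset_secs <= offset <= max_offset_secs:
            if best is None or offset < best[0]:
                best = (offset, purpose)
    return best[1] if best else None


print(temporal_lookup("host02", to_epoch("2018-02-04")))  # ageout-indexer
```

The 2018-02-05 row is skipped because it starts after the event, and the 2018-02-03 row wins over 2018-02-02 because it is closer to the event time.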

However, if you have a time in your lookup file, you can somewhat fake what you may be looking for with a search like:

| inputlookup server_purposes

| eval _time=strptime(start_time, "%Y-%m-%d")

| addinfo

| where _time>=info_min_time and _time<info_max_time

The addinfo command adds your earliest/latest times as chosen by the timepicker and puts them in the fields info_min_time and info_max_time, after which you can use the where command to search for _time values within that range.
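For readers who want to see the filter logic outside of SPL, the following Python sketch mirrors the `where` clause above: keep a row only when its parsed time falls in the half-open range from `info_min_time` up to (but not including) `info_max_time`. The function name `rows_in_range` and the sample rows are illustrative assumptions, not part of Splunk.

```python
from datetime import datetime, timezone


def to_epoch(text, fmt="%Y-%m-%d"):
    """Parse a timestamp string as UTC and return epoch seconds."""
    return datetime.strptime(text, fmt).replace(tzinfo=timezone.utc).timestamp()


def rows_in_range(rows, info_min_time, info_max_time, time_field="start_time"):
    """Hypothetical equivalent of: where _time>=info_min_time and _time<info_max_time."""
    kept = []
    for row in rows:
        t = to_epoch(row[time_field])
        if info_min_time <= t < info_max_time:
            kept.append(row)
    return kept


# A few rows in the shape of server_purposes.csv.
rows = [
    {"start_time": "2018-02-01", "host": "host01", "purpose": "all-in-one"},
    {"start_time": "2018-02-02", "host": "host02", "purpose": "indexer"},
    {"start_time": "2018-02-03", "host": "host02", "purpose": "ageout-indexer"},
    {"start_time": "2018-02-04", "host": "host04", "purpose": "indexer"},
]

# Simulate a timepicker range of 2018-02-02 00:00 up to 2018-02-04 00:00.
selected = rows_in_range(rows, to_epoch("2018-02-02"), to_epoch("2018-02-04"))
print([r["start_time"] for r in selected])  # ['2018-02-02', '2018-02-03']
```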


I ended up scrapping the lookup-table portion of the project and just uploaded the .csv into a new index. During the add-data wizard it found the expiration field and automatically mapped it. I corrected the timestamp pattern and now the timepicker is working for future and past timeframes.

The project was to track expiring ICA certificates.

I exported a year's worth of expiring Internal Certificate Authority (ICA) certificates and imported them into Splunk.

certutil -view -restrict "notAfter>=1/01/2018,notAfter<=2/01/2018" -out "Issued Common Name,Issued Organization,Certificate Expiration Date,Issued Email Address" >> 2018_jan

certutil -view -restrict "notAfter>=2/01/2018,notAfter<=3/01/2018" -out "Issued Common Name,Issued Organization,Certificate Expiration Date,Issued Email Address" >> 2018_feb

etc


Was the issue that you couldn't use the time picker with your time-based lookup?


Temporal lookups in Splunk assume that the timestamp is the earliest time at which the lookup entry is valid (+/- the _offset_secs):

max_offset_secs = <integer>

* For temporal lookups, this is the maximum time (in seconds) that the event timestamp can be later than the lookup entry time for a match to occur.

* Default is 2000000000 (no maximum, effectively).

min_offset_secs = <integer>

* For temporal lookups, this is the minimum time (in seconds) that the event timestamp can be later than the lookup entry timestamp for a match to occur.

* Defaults to 0.

Is it possible (based on the name of your field being Expiration) that you expect the opposite to be true, that is, that the lookup entry is valid up until the time field rather than after it?
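As a rough illustration of why an Expiration field behaves unexpectedly here, this Python sketch applies the offset rule quoted above to the settings from the question (min_offset_secs = 0, max_offset_secs = 10). The function name `in_offset_window` is hypothetical, and treating both bounds as inclusive is an assumption.

```python
from datetime import datetime, timezone

TIME_FORMAT = "%m/%d/%Y %H:%M"  # time_format from the question's transforms.conf


def parse(text, fmt=TIME_FORMAT):
    """Parse a lookup timestamp as UTC and return epoch seconds."""
    return datetime.strptime(text, fmt).replace(tzinfo=timezone.utc).timestamp()


def in_offset_window(event_time, entry_time, min_offset_secs=0, max_offset_secs=10):
    """Hypothetical check: is the event between min and max seconds AFTER the entry?"""
    offset = event_time - entry_time
    return min_offset_secs <= offset <= max_offset_secs


expiration = parse("01/06/2017 5:13")

print(in_offset_window(expiration + 5, expiration))   # 5 s after expiration: True
print(in_offset_window(expiration - 60, expiration))  # 1 min before expiration: False
print(in_offset_window(expiration + 30, expiration))  # 30 s after expiration: False
```

Under this reading, an event dated before the certificate expires never matches, which is the opposite of what a field named Expiration suggests.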


Thank you for the response. I went ahead and tested a few different variations of the settings (max=10/min=0, max=0/min=10, max/min=5, etc.) and adjusted the timepicker to a specific date and time with +/- 1 second. Still no luck: I still see all table results.

I searched for the 01/06/2017 5:13 line item with the timepicker set between 01/06/2017 5:12 and 01/06/2017 5:14 while updating transforms.conf with different min/max settings. I also tried ... | extract reload=T and a reboot.