
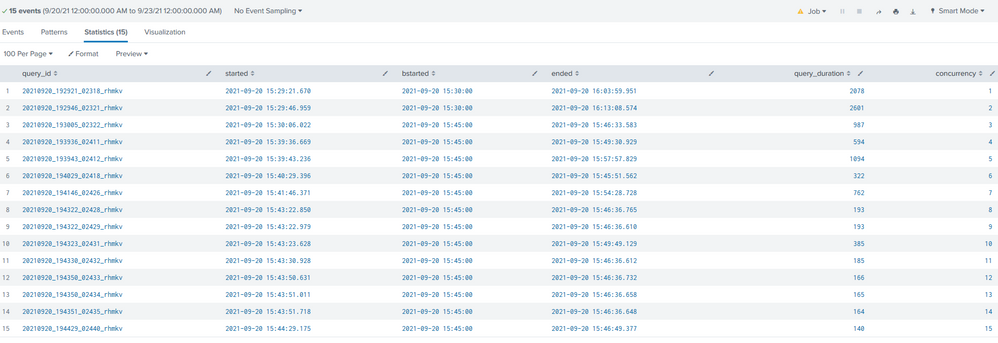

Attached screenshot

| where started <= "2021-09-20 15:45:00" AND ended >= "2021-09-20 15:45:00" | eval bstarted = strptime(started, "%Y-%m-%d %H:%M:%S.%3N") | bin span=15m bstarted | eval bstarted = bstarted + 900 | where strptime(started, "%Y-%m-%d %H:%M:%S.%3N") <= bstarted and strptime(ended, "%Y-%m-%d %H:%M:%S.%3N") >= bstarted | eval bstarted = strftime(bstarted, "%F %T") | eval st = strptime(started, "%Y-%m-%d %H:%M:%S.%3N") | concurrency duration=query_duration start=st | table query_id started bstarted ended query_duration concurrency

I was surprised to see that the concurrency values are simply a sequence from 1 to 15, which does not quite make sense.

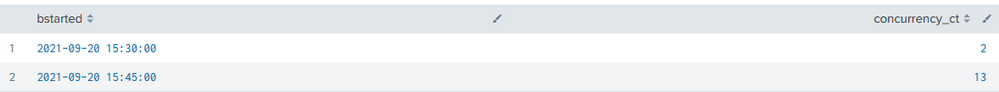

2. Tried stats dc(query_id) as concurrency_ct by bstarted, but did not get the desired results (see screenshot).

"20210920_193943_02412_rhmkv","2021-09-20 15:39:43.236","2021-09-20 15:45:00","2021-09-20 15:57:57.829",1094,

"20210920_192921_02318_rhmkv","2021-09-20 15:29:21.670","2021-09-20 15:30:00","2021-09-20 16:03:59.951",2078,

"20210920_193005_02322_rhmkv","2021-09-20 15:30:06.022","2021-09-20 15:45:00","2021-09-20 15:46:33.583",987,

"20210920_192946_02321_rhmkv","2021-09-20 15:29:46.959","2021-09-20 15:30:00","2021-09-20 16:13:08.574",2601,

There are 4 queries with query_duration > 900 seconds. The desired concurrency_cnt values are:

"2021-09-20 15:30:00",2

"2021-09-20 15:45:00",15 (2 more from the 2078 and 2601 seconds duration values)

"2021-09-20 16:00:00",4 (4 more from the 2078, 2601, 1094, and 987 seconds duration values)

I was hoping the concurrency command would give me these results, but I am not sure where the mistake is. I would appreciate any input or guidance. Thanks.

This question came up at Ask The Experts at .conf21.

One thought was to use makecontinuous to insert extra marker events between the query start times, and then run concurrency across all of the events so that the markers show how many queries are running at each of those points, e.g.:

```
| inputlookup concurrent_queries.csv
| eval _time = strptime(started,"%F %T.%3Q")
| append [ makeresults | addinfo | rename info_max_time AS _time | fields _time ]
| makecontinuous _time span=5m
| fillnull query_duration
| concurrency start=_time duration=query_duration
| eval concurrency=if(isnotnull(query_id),concurrency,concurrency-1)
```

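(The inputlookup and eval are only there to load the sample data from above. In practice they would be replaced by a search that produces a start time and duration for each query.)

The final eval subtracts 1 on the synthetic marker rows because concurrency counts the marker event itself. Looking at those synthetic checkpoints, we should now be able to see the concurrency at those points.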
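As a sanity check outside Splunk, the checkpoint idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: it uses just the four sample rows quoted in the question (not the full data set), it treats a query as concurrent when started <= checkpoint <= ended, and the names `QUERIES` and `running_at_checkpoints` are made up for this example.

```python
from datetime import datetime, timedelta

FMT = "%Y-%m-%d %H:%M:%S.%f"

# (query_id, started, ended) copied from the four sample rows in the question.
QUERIES = [
    ("20210920_193943_02412_rhmkv", "2021-09-20 15:39:43.236", "2021-09-20 15:57:57.829"),
    ("20210920_192921_02318_rhmkv", "2021-09-20 15:29:21.670", "2021-09-20 16:03:59.951"),
    ("20210920_193005_02322_rhmkv", "2021-09-20 15:30:06.022", "2021-09-20 15:46:33.583"),
    ("20210920_192946_02321_rhmkv", "2021-09-20 15:29:46.959", "2021-09-20 16:13:08.574"),
]


def running_at_checkpoints(queries, span=timedelta(minutes=15)):
    """Count queries with started <= checkpoint <= ended at each span boundary."""
    rows = [(datetime.strptime(s, FMT), datetime.strptime(e, FMT)) for _, s, e in queries]
    first = min(start for start, _ in rows)
    last = max(end for _, end in rows)

    # Round the earliest start up to the next span boundary.
    offset = (first - datetime.min) % span
    checkpoint = first if not offset else first - offset + span

    counts = {}
    while checkpoint <= last:
        label = checkpoint.strftime("%Y-%m-%d %H:%M:%S")
        counts[label] = sum(1 for start, end in rows if start <= checkpoint <= end)
        checkpoint += span
    return counts


if __name__ == "__main__":
    for label, count in running_at_checkpoints(QUERIES).items():
        print(label, count)
```

With only these four rows, the sketch reports 2 queries running at 15:30:00, 4 at 15:45:00, and 2 at 16:00:00. The numbers for the full data set will differ, and a bucket-overlap definition of concurrency (rather than this point-in-time one) would give different counts again.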
