Does the Splunk UF have the capability to mask data as it sends it to the indexer?

Is it possible in Splunk to have one props.conf file on one server's Universal Forwarder (UF) for a specific app, and another props.conf file on a different server for the same app, but with one file masking a certain field and the other not?


Hi @abi2023,

If you need to mask data pre-transit for whatever reason and the force_local_processing setting doesn't meet your requirements, you can use the unarchive_cmd props.conf setting to stream inputs through Perl, sed, or any command or script that reads input from stdin and writes output to stdout.

For example, to mask strings that might be IPv4 addresses in a log file using Perl:

/tmp/foo.log

This is 1.2.3.4.

Uh oh, 5.6.7.8 here.

Definitely not an IP address: a.1.b.4.

512.0.1.2 isn't an IP address. Oops.

inputs.conf

[monitor:///tmp/foo.log]

sourcetype = foo

props.conf

[source::/tmp/foo.log]

unarchive_cmd = perl -pe 's/[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}/*.*.*.*/'

sourcetype = preprocess-foo

NO_BINARY_CHECK = true

[preprocess-foo]

invalid_cause = archive

is_valid = False

LEARN_MODEL = false

[foo]

DATETIME_CONFIG = NONE

SHOULD_LINEMERGE = false

LINE_BREAKER = ([\r\n]+)

EVENT_BREAKER_ENABLE = true

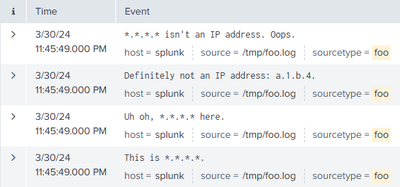

EVENT_BREAKER = ([\r\n]+)The transmitted events will be masked before they're sent to the receiver:

Regular expressions that work with SEDCMD should work with Perl without modifications.

The unarchive_cmd setting is a flexible alternative to scripted and modular inputs. The sources do not have to be archive files.

As others have noted, you can deploy different props.conf configurations to different forwarders. Your props.conf settings for line breaks, timestamp extraction, etc., should be deployed to the next downstream instance of Splunk Enterprise (heavy forwarder or indexer) or Splunk Cloud.


Small correction:

NO_BINARY_CHECK = true

(If I edit my original answer, the formatting will be mangled.)


Can I do this by source? And can each source take a different props.conf?


My previous answer used a single source example, but you can modify unarchive_cmd settings per-source as needed.
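As a rough sketch of what per-source settings could look like, the props.conf below gives two sources different commands. The second path (/tmp/bar.log) and its sed pattern are hypothetical, and the sketch assumes the [preprocess-foo] stanza from the earlier answer is still present and that each file has its own monitor stanza in inputs.conf.

```ini
# props.conf on the universal forwarder
# Sketch only: /tmp/bar.log and its sed pattern are hypothetical.

[source::/tmp/foo.log]
# Mask anything that looks like an IPv4 address.
# The /g flag masks every match on a line, not just the first.
unarchive_cmd = perl -pe 's/[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}/*.*.*.*/g'
sourcetype = preprocess-foo
NO_BINARY_CHECK = true

[source::/tmp/bar.log]
# A different command for a different source: mask runs of 16 digits.
unarchive_cmd = sed -E 's/[0-9]{16}/XXXXXXXXXXXXXXXX/g'
sourcetype = preprocess-foo
NO_BINARY_CHECK = true
```

A source that needs no masking simply gets no unarchive_cmd stanza and is forwarded as usual.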


Hi @abi2023 ,

As @marnall said, you can create different apps and deploy them to the UFs using different server classes.

Regarding data masking: to my knowledge, UFs take part only in the input phase, while the other phases (merging and parsing) happen on the first full Splunk instance that the data passes through.

In other words, on the indexers or (when present) on the first heavy forwarder, but not on the UFs.

If your concern is that data is sent in clear text, you can encrypt the traffic between the UFs and the indexers (or HFs), and then mask the data on those systems.
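A minimal sketch of what encrypted forwarding might look like is below. The output group name, host name, and certificate paths are hypothetical, and TLS setting names have changed between Splunk versions, so check outputs.conf.spec and inputs.conf.spec for your release before relying on any of this.

```ini
# outputs.conf on the UF
# Sketch only: group name, host, and certificate paths are hypothetical.
[tcpout:primary_indexers]
server = idx1.example.com:9997
clientCert = $SPLUNK_HOME/etc/auth/mycerts/uf-client.pem
sslPassword = <certificate key password>
sslVerifyServerCert = true

# inputs.conf on the receiving indexer or heavy forwarder
[splunktcp-ssl:9997]
disabled = 0

[SSL]
serverCert = $SPLUNK_HOME/etc/auth/mycerts/indexer.pem
sslPassword = <certificate key password>
```

With the link encrypted, the SEDCMD masking can stay on the indexers or heavy forwarders where parsing normally happens.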

Ciao.

Giuseppe


Would it fit your use case to set inputs.conf and outputs.conf such that the UF forwards the same logs to two different indexer servers, then those indexer servers have different props.conf which can mask and not mask the fields?

It seems like props.conf on the UF won't solve your problem.
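For illustration, sending the same data to two receivers could look roughly like this in outputs.conf on the UF. The group names and host names are hypothetical; listing two target groups in defaultGroup is what makes the forwarder clone events to both.

```ini
# outputs.conf on the UF
# Sketch only: group names and host names are hypothetical.
[tcpout]
# Two target groups in defaultGroup: every event is cloned to both.
defaultGroup = masked_indexers, unmasked_indexers

[tcpout:masked_indexers]
server = idx-masked.example.com:9997

[tcpout:unmasked_indexers]
server = idx-unmasked.example.com:9997
```

Each indexer would then carry its own props.conf, one with the masking settings and one without.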


UFs are independent so it is possible to have different configurations on each.

If the UFs are managed by a Deployment Server, however, you cannot have different props.conf files in the same app. You would have to create separate apps and put them in different server classes for the UFs to have different props for the same sourcetype.
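A sketch of that layout in serverclass.conf on the Deployment Server might look like the following. The server class names, app names, and host names are all hypothetical; each app would contain its own props.conf.

```ini
# serverclass.conf on the Deployment Server
# Sketch only: class names, app names, and host names are hypothetical.

[serverClass:uf_masked]
whitelist.0 = app-server-01

[serverClass:uf_masked:app:foo_props_masked]
restartSplunkd = true

[serverClass:uf_unmasked]
whitelist.0 = app-server-02

[serverClass:uf_unmasked:app:foo_props_unmasked]
restartSplunkd = true
```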

To answer the second part of the question, you *should* be able to put force_local_processing = true in the props.conf file to have the UF perform masking. Of course, you would also need SEDCMD settings to define the maskings themselves. I say "should" because I don't have experience with this and the documentation isn't clear about what the UF will do locally.
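As an untested sketch of that idea, the props.conf below would go on the UF. The foo sourcetype and the SEDCMD class name mask_ipv4 are only examples; the regular expression is the one from the Perl example elsewhere in this thread.

```ini
# props.conf on the universal forwarder
# Sketch only: sourcetype and SEDCMD class name are examples.
[foo]
# Ask the UF to process this sourcetype locally before forwarding.
force_local_processing = true
# Replace anything that looks like an IPv4 address; /g covers every match in an event.
SEDCMD-mask_ipv4 = s/[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}/*.*.*.*/g
```

Local processing is expected to increase CPU and memory use on the forwarder, so it is worth testing on one host first.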

If this reply helps you, Karma would be appreciated.


Yep. While local processing on the UF is supposed to work _somehow_, it's indeed not very well documented and not recommended.

If you want only some sources masked, you could use source-based or host-based stanzas in props.conf on your indexer(s) to selectively apply your SEDCMD or transform only to specific parts of your data.
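For example, something along these lines on the indexer(s) would mask one source and one host while leaving everything else alone. The path, host name, and SEDCMD class names are hypothetical.

```ini
# props.conf on the indexer(s) or first heavy forwarder
# Sketch only: path, host name, and SEDCMD class names are hypothetical.

[source::/tmp/foo.log]
# Mask IPv4-looking strings for this source only.
SEDCMD-mask_ipv4 = s/[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}/*.*.*.*/g

[host::app-server-01]
# Mask runs of 16 digits for everything coming from this host.
SEDCMD-mask_digits = s/[0-9]{16}/XXXXXXXXXXXXXXXX/g
```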