Windows logs are not ingesting into splunk

Dear all,

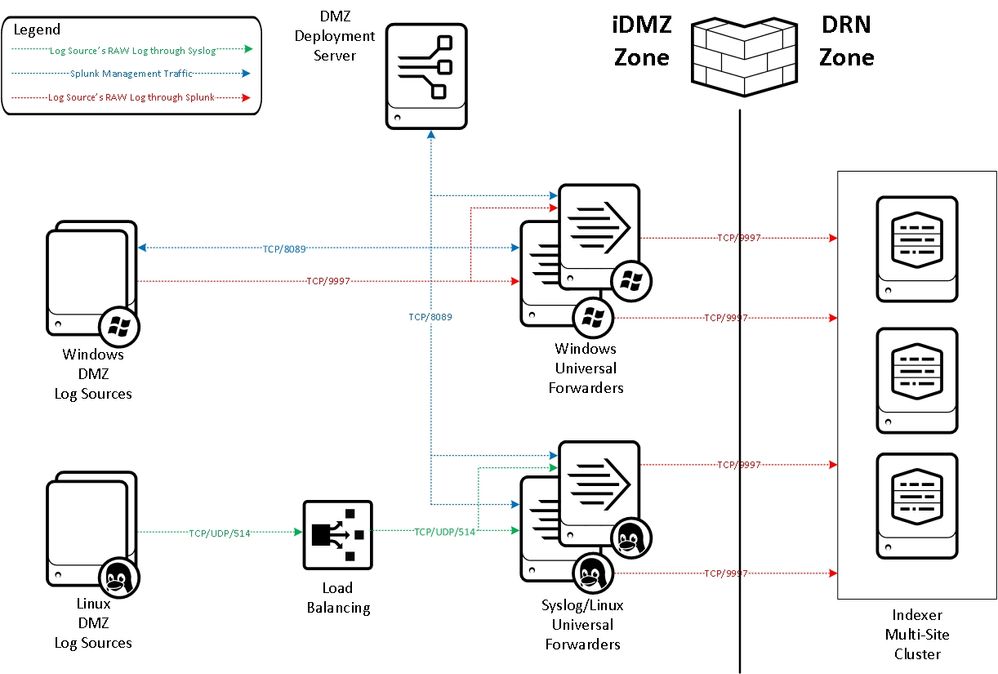

Our deployment servers are in the DMZ zone and our indexers are in the DRN zone. The Windows team pushes the packages using SCCM, and we can see those clients on our DMZ deployment servers, but not a single event from them shows up in Splunk, which means the data is not being indexed.

Please see the attached architecture screenshot for reference.

More details:

1. Deployment servers in DMZ zone

2. Indexers are in DRN zone

#################

The outputs.conf below is for Windows DMZ log sources sending to the Windows universal forwarders:

[root@********local]# cat outputs.conf

[tcpout]

defaultGroup = xxxx_idx_win_prod

indexAndForward = false

[indexAndForward]

index = false

[tcpout:xxxx_idx_win_prod]

autoLBVolume = 1048576

server = xxxxsplkwinfrwdr001.xxxxx.xx.xxxx:9997, xxxxsplkwinfrwdr002.xxxxx.xx.xxxx:9997

sslPassword = password

clientCert = $SPLUNK_HOME/etc/auth/server.pem

autoLBFrequency = 5

useACK = true

########################################

Deployment server configuration. This applies to the PROD DRN indexers (forwarders to indexers):

/opt/splunk/etc/deployment-apps/xx-xxxx_xxxx_idx_prod_outputs/local

cat outputs.conf

[tcpout]

defaultGroup = xxxx_idx_prod

indexAndForward = false

[indexAndForward]

index = false

[tcpout:xxxx_idx_prod]

autoLBVolume = 1048576

server = <all drn indexers ip address mentioned here with 9997 port>

sslPassword = password

clientCert = $SPLUNK_HOME/etc/auth/server.pem

autoLBFrequency = 5

useACK = true

#######################

inputs.conf

cat inputs.conf

[splunktcp-ssl:9997]

disabled=0

[SSL]

sslPassword = password

clientCert = $SPLUNK_HOME/etc/auth/server.pem

Kindly advise us on this.


There are some typical troubleshooting steps for a situation like this:

1) Check the final, effective configuration of your forwarders with btool

2) Check that you have network connectivity from the forwarders to the indexers (if you use mutual authentication, which is a good thing, test with a tool that supports TLS authentication and check that you can authenticate with your certificates and keys)

3) Check the logs on both sides for any connection-related errors

4) Capture the network traffic and see how the connection attempts go
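As a rough illustration of step 2, here is a minimal Python sketch that reads the `server = ...` line from an outputs.conf and attempts a TLS handshake against each listed host and port, optionally presenting the client certificate. The function names `parse_servers` and `check_tls` are hypothetical helpers, not part of Splunk. The sketch assumes the PEM file holds both the certificate and its private key, and it deliberately skips server certificate verification because it only tests reachability and the handshake. A successful handshake does not by itself prove that the indexer accepted the client certificate, so the logs from step 3 are still worth checking.

```python
import socket
import ssl


def parse_servers(conf_text):
    """Return (host, port) pairs from every 'server = ...' line in outputs.conf text."""
    servers = []
    for line in conf_text.splitlines():
        key, sep, value = line.partition("=")
        if sep and key.strip() == "server":
            for entry in value.split(","):
                host, _, port = entry.strip().rpartition(":")
                if host and port.isdigit():
                    servers.append((host, int(port)))
    return servers


def check_tls(host, port, client_pem=None, password=None, timeout=5.0):
    """Attempt a TLS handshake; return (True, tls_version) or (False, error_text)."""
    context = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)
    # Assumption: this is a connectivity test only, so verification of the
    # server certificate is switched off. Do not reuse this for real traffic.
    context.check_hostname = False
    context.verify_mode = ssl.CERT_NONE
    if client_pem:
        # Assumption: client_pem contains both the certificate and its key.
        context.load_cert_chain(client_pem, password=password)
    try:
        with socket.create_connection((host, port), timeout=timeout) as raw:
            with context.wrap_socket(raw, server_hostname=host) as tls:
                return True, tls.version()
    except OSError as exc:  # also covers ssl.SSLError and timeouts
        return False, str(exc)


# Example usage (the path is a placeholder for your own environment):
# with open("outputs.conf") as fh:
#     for host, port in parse_servers(fh.read()):
#         print(host, port, check_tls(host, port, client_pem="server.pem",
#                                     password="password"))
```

Run it from a forwarder host so that it exercises the same network path, firewalls included, that the forwarder itself uses.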


We can see the following errors on the deployment servers:

10-14-2021 12:22:42.659 +0300 WARN HttpListener - Socket error from 173.1.194.87:57778 while idling: Read Timeout

10-14-2021 12:30:39.833 +0300 WARN HttpListener - Socket error from 173.1.196.88:53694 while idling: Read Timeout

10-14-2021 15:43:05.850 +0300 WARN HttpListener - Socket error from 173.1.194.88:57415 while idling: Read Timeout

10-14-2021 15:48:27.204 +0300 WARN HttpListener - Socket error from 173.1.194.76:63420 while idling: Read Timeout

10-14-2021 15:57:15.789 +0300 WARN HttpListener - Socket error from 173.1.194.58:58735 while idling: Read Timeout

10-14-2021 16:07:40.478 +0300 WARN HttpListener - Socket error from 173.1.194.59:59241 while idling: Read Timeout

10-14-2021 16:15:52.728 +0300 WARN HttpListener - Socket error from 173.1.194.60:56266 while idling: Read Timeout

10-14-2021 16:42:01.798 +0300 WARN HttpListener - Socket error from 173.1.194.61:50263 while idling: Read Timeout

10-14-2021 16:52:24.384 +0300 WARN HttpListener - Socket error from 173.1.194.62:54696 while idling: Read Timeout

10-14-2021 17:04:25.910 +0300 WARN HttpListener - Socket error from 173.1.196.89:64325 while idling: Read Timeout

10-14-2021 17:11:19.243 +0300 WARN HttpListener - Socket error from 173.1.214.14:58889 while idling: Read Timeout


On its own, this should not mean anything serious; it only shows that some idle connections are timing out. It probably points to a misconfiguration at the network level, because open connections should be closed properly when they are no longer used, but it is not a big deal.

Also, the deployment server itself plays no part in sending logs from forwarders to indexers.

So check the other points.
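To make the log check a bit more systematic, the following minimal Python sketch counts WARN and ERROR lines per component, assuming the line layout seen in the excerpts above (date, time, UTC offset, level, component, message). `count_by_component` is a hypothetical helper, not a Splunk tool. When it is run against splunkd.log on a forwarder, warnings from output-related components are the interesting ones; `TcpOutputProc` is one name to look for there, though treat that as something to confirm in your own logs. `HttpListener` read timeouts on the deployment server are unrelated to forwarding.

```python
import re
from collections import Counter

# Layout assumed from the excerpts in this thread:
# MM-DD-YYYY HH:MM:SS.mmm +ZZZZ LEVEL Component - message
LOG_LINE = re.compile(
    r"^(?P<date>\d{2}-\d{2}-\d{4}) (?P<time>[\d:.]+) (?P<tz>[+-]\d{4}) "
    r"(?P<level>[A-Z]+)\s+(?P<component>\S+) - (?P<message>.*)$"
)


def count_by_component(lines, levels=("WARN", "ERROR")):
    """Count log lines per component, keeping only the given levels."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line.strip())
        if match and match.group("level") in levels:
            counts[match.group("component")] += 1
    return counts


# Example usage (the path is a placeholder for your own environment):
# with open("splunkd.log") as fh:
#     for component, n in count_by_component(fh).most_common():
#         print(component, n)
```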