Join the Conversation

- Find Answers

- :

- Splunk Administration

- :

- Getting Data In

- :

- Why is there large data latency coming from syslog...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark Topic

- Subscribe to Topic

- Mute Topic

- Printer Friendly Page

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

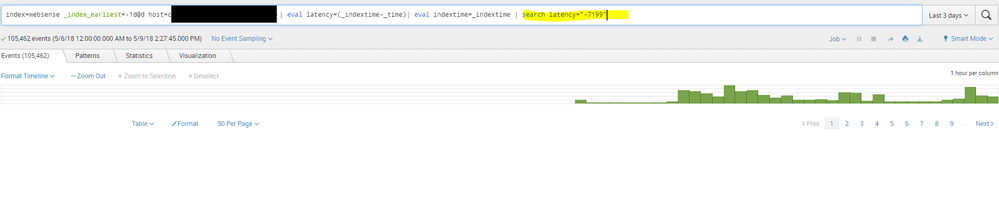

Why is there large data latency coming from syslog?

Hello, we have a proxy network appliance running Websense that sends its logs to Splunk via syslog.

We have a data latency alert configured to fire when latency is large:

    search $search_args$ _index_earliest=-1d@d _index_latest=@d
    | eval lag_sec = (_indextime-_time)
    | eval lag_hrs = lag_sec/(60*60)
    | eval delay_hrs = if( lag_hrs > 0.5, lag_hrs, "")
    | eval future_sec = if( lag_sec < -1, -1*lag_sec, "")
    | eval containsGap = if(delay_hrs!="" OR future_sec!="", "true", "false")
    | stats max(delay_hrs),
        max(future_sec),
        count(eval(containsGap="true")) as countGaps,
        count(_raw) as countEvents
        by splunk_server index host sourcetype source
    | eval percentGaps = countGaps / countEvents*100
    | where percentGaps>5
    | sort host, sourcetype, source
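
For readers less familiar with SPL, the per-event logic of the search above can be sketched in Python. This is only an illustration of the same arithmetic, not something Splunk runs; the function names and the sample `(event_time, index_time)` pairs are hypothetical.

```python
from typing import Iterable, Tuple

DELAY_THRESHOLD_HRS = 0.5   # mirrors the search's lag_hrs > 0.5 test
FUTURE_THRESHOLD_SEC = -1   # mirrors the search's lag_sec < -1 test


def contains_gap(event_time: float, index_time: float) -> bool:
    """Return True when an event counts as a gap under the search's rules."""
    lag_sec = index_time - event_time            # same as _indextime - _time
    delayed = lag_sec / 3600 > DELAY_THRESHOLD_HRS
    from_future = lag_sec < FUTURE_THRESHOLD_SEC
    return delayed or from_future


def percent_gaps(events: Iterable[Tuple[float, float]]) -> float:
    """Percentage of (event_time, index_time) pairs flagged as gaps."""
    pairs = list(events)
    if not pairs:
        return 0.0
    gaps = sum(contains_gap(t, idx) for t, idx in pairs)
    return gaps / len(pairs) * 100


# Hypothetical epoch values: on time, 2 h late, 10 s in the future, on time.
sample = [(1000, 1005), (1000, 8200), (1000, 990), (1000, 1000)]
print(percent_gaps(sample))
```

With this sample, two of the four events are flagged, so the group would exceed the 5% threshold used by the alert.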

Over the last few days we started seeing large latency: a 2-hour (7200-second) gap between an event's timestamp and the time it is indexed. I am trying to determine what is causing this.

We don't have a forwarder on this network device, and we aren't seeing any additional network bottlenecks or traffic. Where can I look to troubleshoot this data latency?

Thanks

This is almost always due to incorrect interpretation of time zones, usually because there are no TZ values in the timestamps and there is no TZ= setting in any props.conf, so each indexer uses the TZ value of its host OS (which shouldn't be, but might be, different on each indexer).
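
A rough Python sketch of how that produces an apparent lag with no real delay: a timestamp without zone information is written in one zone and parsed as if it were in another. The fixed UTC offsets below are a simplifying assumption (standard time, no DST handling) for the two zones that appear in this thread, and the sample timestamp and function name are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Assumed fixed offsets for illustration only (standard time, DST ignored).
MOUNTAIN = timezone(timedelta(hours=-7))  # e.g. America/Edmonton in winter
EASTERN = timezone(timedelta(hours=-5))   # e.g. America/New_York in winter


def apparent_lag_sec(raw_timestamp: str, source_tz: timezone, assumed_tz: timezone) -> float:
    """Lag seen when a zone-less timestamp written in source_tz is parsed as assumed_tz.

    The event is assumed to be indexed the instant it was generated,
    so any non-zero result is purely a timezone artifact.
    """
    naive = datetime.strptime(raw_timestamp, "%Y-%m-%d %H:%M:%S")
    true_time = naive.replace(tzinfo=source_tz)     # when the event really happened
    parsed_time = naive.replace(tzinfo=assumed_tz)  # what would be stored as the event time
    index_time = true_time                          # zero real latency
    return (index_time - parsed_time).total_seconds()


print(apparent_lag_sec("2020-01-15 10:00:00", MOUNTAIN, EASTERN))
```

Under these assumptions a Mountain-time timestamp read as Eastern shows up as a lag of 7200 seconds, and the reverse mix-up shows up as events 7200 seconds in the future.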

I checked the indexer; it has the host configured with the right TZ:

    [root@cgysplunk01 /opt/splunk]# cat ./etc/system/local/props.conf
    [host::cgyxxpwcg02.xxxx]
    TZ = America/Edmonton

The indexer itself is in the EST time zone:

    [root@cgysplunk01 /opt/splunk]# cat /etc/sysconfig/clock
    ZONE="America/New_York"

Can you please show an example event?

The following can help: "Data Latency: 4 things it can tell you about your Splunk data"

Latency is always 7199 seconds? This sounds more like an issue with a wrong timezone than actual latency...
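
One way to test that hunch on exported lag values is to check whether they all sit close to the same whole-hour offset. The sketch below is only a heuristic; the function name, the 60-second tolerance, and the sample values are assumptions, not anything Splunk provides.

```python
def looks_like_tz_offset(lags_sec, tolerance_sec=60):
    """True when every lag is within tolerance of the same non-zero whole-hour offset."""
    lags = list(lags_sec)
    if not lags:
        return False
    hours = {round(lag / 3600) for lag in lags}
    if len(hours) != 1:
        return False          # lags spread over different hour offsets
    offset_hours = hours.pop()
    if offset_hours == 0:
        return False          # ordinary small lag, no offset to explain
    return all(abs(lag - offset_hours * 3600) <= tolerance_sec for lag in lags)


print(looks_like_tz_offset([7199, 7201, 7205]))   # clustered around 2 hours
print(looks_like_tz_offset([120, 7300, 15000]))   # widely varying lag
```

Lags that cluster tightly around a whole number of hours point toward a timezone mismatch, while widely varying lags point toward real delivery or indexing delay.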

No, the latency varies, but all values are above -7000s.