Tail/Batch readers confusion

I'm running into more strange behaviour with my UF ingesting many big files.

OK, I managed to make the UF read the current Exchange logs reasonably quickly (it seems someone had left some age limits ridiculously high, so there were many files to check). So now there are several dozen (or even hundreds of) files tracked by splunkd, but it seems to work somehow. The problem is that I also monitor another, quite quickly growing file on this UF, and it's giving me a headache.

Some time after the UF starts (if it's restarted mid-day), I get

TailReader - Enqueuing a very large file=\\<redacted> in the batch reader, with bytes_to_read=9565503150, reading of other large files could be delayed

OK, that's understandable: the batch reader is supposed to be more efficient at reading a single big file in one go, so why not. But here's the catch: the file is not getting ingested. I don't see any new events in the index. I also checked with procexp64.exe and handle64.exe from Sysinternals, and splunkd.exe does not have the file open at all.
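Since that warning is the only place the backlog size shows up, it can help to pull bytes_to_read out of every occurrence when searching _internal. A minimal Python sketch, assuming the message format quoted above (the regex was written against that single sample line, and parse_enqueue_warning and human_bytes are hypothetical helper names):

```python
import re

# Pattern written against the TailReader warning quoted above; other
# splunkd.log variants may need a different pattern.
ENQUEUE_RE = re.compile(
    r"Enqueuing a very large file=(?P<file>.+?) in the batch reader, "
    r"with bytes_to_read=(?P<bytes>\d+)"
)


def parse_enqueue_warning(line):
    """Return (file, bytes_to_read) for a batch-reader enqueue warning, else None."""
    match = ENQUEUE_RE.search(line)
    if match is None:
        return None
    return match.group("file"), int(match.group("bytes"))


def human_bytes(n):
    """Format a byte count using binary (1024-based) units."""
    for unit in ("B", "KiB", "MiB", "GiB"):
        if n < 1024:
            return f"{n:.1f} {unit}"
        n /= 1024
    return f"{n:.1f} TiB"


sample = (
    r"TailReader - Enqueuing a very large file=\\<redacted> in the batch reader, "
    "with bytes_to_read=9565503150, reading of other large files could be delayed"
)
path, to_read = parse_enqueue_warning(sample)
print(path, to_read, human_bytes(to_read))
```

Run over the repeated warnings, this shows whether the backlog reported for a file keeps growing between occurrences.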

So where is my file???

Other files are being monitored and the data is getting ingested.


Hi

I suppose you have already read these: https://community.splunk.com/t5/Getting-Data-In/File-not-being-read-by-Splunk-in-a-directory-while-o... Presumably you have also raised thruput in limits.conf and defined several pipelines?

What did splunk list inputstatus report for this file?

r. Ismo


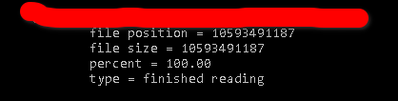

Inputstatus shows (in the TailingProcessor section) ...

But does that mean that once the UF has switched to batch mode, it treats the file as finished and won't tail it anymore?

Or will the UF run the batch reader on it again at some point? (When I search _internal for my source log name, I get several messages about enqueuing a very large file throughout the whole period since the last restart.)

So I don't quite get what the UF does after switching to the batch reader.

I will have to contact the people administering the server that provides the log, because for now only the UF has access and I can't even check the file contents manually. 😕

I suppose there might be something wrong on the source side after all.


As it says 100%, it won't read it again (unless you remove it from the _fishbucket with btprobe on the UF side).

If you don't see the data where you expect it, then it has been read and either sent somewhere else or hit by some rule that drops it.

What about those limits and pipelines? Do you already have them in place, or should you increase them?

r. Ismo


My throughput limit is raised quite significantly, since the host ingests quite a lot (something like 32 MBps). For now I have only one pipeline.
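To put that throughput in perspective, here is a rough back-of-the-envelope sketch. It assumes "32MBps" corresponds to a thruput cap (maxKBps in limits.conf) of about 32768 KB/s and that the one big file gets the whole budget, which is optimistic when other monitored files compete for it; drain_seconds is a hypothetical helper, not a Splunk function:

```python
def drain_seconds(bytes_to_read, max_kbps):
    """Lower bound, in seconds, for reading a backlog under a KB/s throughput cap.

    Assumes 1 KB = 1024 bytes and that this single file gets the whole budget.
    A cap of 0 means "unlimited" in limits.conf, so no throughput bound applies.
    """
    if max_kbps == 0:
        return 0.0
    return bytes_to_read / (max_kbps * 1024)


CAP_KBPS = 32 * 1024  # assumed equivalent of the "32MBps" mentioned above

print(round(drain_seconds(9565503150, CAP_KBPS)))  # 285, i.e. about 4.75 minutes
print(round(drain_seconds(224858513, CAP_KBPS)))   # 7
```

So even at that rate, the multi-gigabyte backlog after a mid-day restart needs minutes of dedicated reading while the file keeps growing.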

It's getting more and more confusing, since after the last UF restart (which I needed because apparently no users were defined on the UF) inputstatus does indeed list the file under the tail processor.

I'd still say there's something strange going on on the source side.


When the UF reads that file, by default it keeps it open for only 3 seconds after reaching EOF:

time_before_close = <integer>
* The amount of time, in seconds, that the file monitor must wait for modifications before closing a file after reaching an End-of-File (EOF) marker.
* Tells the input not to close files that have been updated in the past 'time_before_close' seconds.
* Default: 3

That's why you couldn't see it open after the whole file had been read. Normally the UF first checks whether the file has been modified since the last check and whether its inode information has changed; only then does it start reading and check the content for anything new to send to the indexer.
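The effect of time_before_close on what handle64.exe can see can be modelled in a few lines. This is a hypothetical sketch of the rule as the spec text describes it, not splunkd's implementation; in particular, whether the boundary is strict (<) or inclusive is an assumption:

```python
def keep_open_after_eof(now, last_modified, time_before_close=3):
    """Return True if a file at EOF should stay open (sketch of the quoted rule).

    The file is kept open only while it was updated within the last
    time_before_close seconds; after that the handle is released.
    """
    return (now - last_modified) < time_before_close


# A file that has been quiet for 10 seconds:
print(keep_open_after_eof(now=110.0, last_modified=100.0))                         # False with the default 3
print(keep_open_after_eof(now=110.0, last_modified=100.0, time_before_close=300))  # True
```

With the default, a file that pauses for a few seconds gets closed, so a missing handle alone does not prove the file has stopped being monitored.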

alwaysOpenFile = <boolean>
* Opens a file to check whether it has already been indexed, by skipping the modification time/size checks.
* Only useful for files that do not update modification time or size.
* Only known to be needed when monitoring files on Windows, mostly for Internet Information Server logs.
* Configuring this setting to "1" can increase load and slow indexing. Use it only as a last resort.
* Default: 0
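The difference alwaysOpenFile makes to the change check can be sketched as follows. FileState and should_open are hypothetical names, and the model only covers the modification time/size shortcut named in the spec, not the rest of splunkd's logic:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FileState:
    """Hypothetical snapshot of what the monitor checks cheaply: mtime and size."""
    mtime: float
    size: int


def should_open(previous: Optional[FileState], current: FileState,
                always_open_file: bool = False) -> bool:
    """Sketch: decide whether to open the file and look for unread data."""
    if always_open_file:
        # alwaysOpenFile=1: skip the mtime/size shortcut and always open.
        return True
    if previous is None:
        # First time this file is seen.
        return True
    # Default: only open when mtime or size changed since the last check.
    return current.mtime != previous.mtime or current.size != previous.size


before = FileState(mtime=1000.0, size=14_084_100_799)
same = FileState(mtime=1000.0, size=14_084_100_799)
print(should_open(before, same))                         # False: looks unchanged, skipped
print(should_open(before, same, always_open_file=True))  # True: opened anyway
```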


Ah, I forgot to mention: my time_before_close is already raised to 300 seconds.

I'll try alwaysOpenFile, but I'm afraid it will only check the CRC, which shouldn't change mid-flight. Still, it's worth a try. Thanks for the hint.
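The CRC point can be illustrated with a small sketch. It assumes the forwarder identifies a file by a checksum over its first initCrcLength bytes (256 by default in inputs.conf); zlib.crc32 is only a stand-in for whatever checksum splunkd actually uses, and head_crc is a hypothetical helper:

```python
import zlib

INIT_CRC_LENGTH = 256  # assumed to mirror the initCrcLength default in inputs.conf


def head_crc(data: bytes, length: int = INIT_CRC_LENGTH) -> int:
    """Checksum of the first `length` bytes; zlib.crc32 stands in for splunkd's own."""
    return zlib.crc32(data[:length])


original = b"header line of the log file\n" * 20   # 560 bytes, longer than the CRC window
grown = original + b"appended event\n" * 1000     # the same file after more writes

print(head_crc(original) == head_crc(grown))  # True: appending never touches the head
```

Appending leaves the head checksum unchanged, which is why a growing file is recognised as the same file rather than re-read from the start.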


OK. It seems that after setting alwaysOpenFile=1 and restarting the UF, it read the file up to

file position = 14925904619

file size = 14084100799

percent = 105.98

type = done reading (batch)

and stopped again.
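The reported percentage is simply position divided by size, so anything above 100% means the reader went past the size it had recorded for the file. One plausible reading (an assumption, not confirmed in this thread) is that the size is a snapshot taken when the batch read started while the file kept growing. A sketch with hypothetical helpers parse_status and percent_read, fed with the values quoted above:

```python
def parse_status(lines):
    """Parse 'key = value' lines like the inputstatus fields above into a dict."""
    status = {}
    for line in lines:
        key, sep, value = line.partition("=")
        if sep:
            status[key.strip()] = value.strip()
    return status


def percent_read(position, size):
    """Percentage of the recorded size consumed, rounded like the reported value."""
    return round(100 * position / size, 2)


status = parse_status([
    "file position = 14925904619",
    "file size = 14084100799",
    "percent = 105.98",
    "type = done reading (batch)",
])
pos, size = int(status["file position"]), int(status["file size"])
print(percent_read(pos, size))  # 105.98, matching the reported percent
print(pos - size)               # 841803820 bytes read beyond the recorded size
```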


OK, same thing again. After the file rotated at midnight, it started being tailed properly (or so I assume). Then I got

12-08-2021 06:01:27.167 +0100 WARN TailReader - Enqueuing a very large file=\\<redacted> in the batch reader, with bytes_to_read=224858513, reading of other large files could be delayed

I've been getting this message every few minutes ever since, and the forwarder shows the file as read to the end and stopped.

So, if I understand the behaviour correctly, once the forwarder switches from the tail reader to the batch reader for this file, it never goes back to the tail reader, right?

The only thing left to try now is raising the batch reader threshold so the forwarder doesn't switch to the batch reader so eagerly.
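Assuming the switch is driven by limits.conf [inputproc] min_batch_size_bytes (documented default 20971520 bytes, i.e. 20 MiB; check limits.conf.spec for your version) compared against bytes_to_read, the decision and the effect of raising the limit can be modelled like this. choose_reader is a hypothetical function, and whether the comparison is strict is an assumption:

```python
# Assumed default of limits.conf [inputproc] min_batch_size_bytes (20 MiB).
DEFAULT_MIN_BATCH_SIZE_BYTES = 20 * 1024 * 1024


def choose_reader(bytes_to_read: int,
                  min_batch_size_bytes: int = DEFAULT_MIN_BATCH_SIZE_BYTES) -> str:
    """Hypothetical model: backlogs above the threshold go to the batch reader."""
    return "batch" if bytes_to_read > min_batch_size_bytes else "tail"


# bytes_to_read values from the two warnings quoted in this thread
for backlog in (9565503150, 224858513):
    print(backlog, choose_reader(backlog))  # both batch with the assumed default

raised = 512 * 1024 * 1024  # an illustrative, not recommended, value
print(choose_reader(224858513, raised))   # tail
print(choose_reader(9565503150, raised))  # still batch
```

Under this model, a 512 MiB limit would have kept the post-rotation file (about 214 MiB to read) in the tail reader, but not the roughly 9.6 GB backlog after a mid-day restart.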