Re: How to determine what causes the unevenness of...

There are plenty of things to check; below are the first steps I would suggest.

Start here. This search tells you how many events each indexer has indexed and how many unique hosts are sending data to it:

```
| tstats count as event_count dc(host) as u_host where index=* by splunk_server
```
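To put a number on "very uneven", one option is to export the results of the search above and compare each indexer's share of the total. Here is a minimal Python sketch under that assumption. The indexer names and counts are made up, and `shares` / `imbalance_ratio` are hypothetical helpers, not part of any Splunk API.

```python
# Hypothetical export of the tstats results: splunk_server -> event_count (made-up numbers).
counts = {"idx1": 1_200_000, "idx2": 1_150_000, "idx3": 300_000}


def shares(counts):
    """Return each indexer's fraction of the total event count."""
    total = sum(counts.values())
    return {server: n / total for server, n in counts.items()}


def imbalance_ratio(counts):
    """Busiest indexer's count divided by the quietest's (1.0 means perfectly even)."""
    return max(counts.values()) / min(counts.values())


if __name__ == "__main__":
    for server, share in sorted(shares(counts).items()):
        print(f"{server}: {share:.1%}")
    print(f"max/min ratio: {imbalance_ratio(counts):.2f}")
```

A ratio close to 1.0 means the indexers are receiving similar volumes. An indexer that indexed nothing in the time range has no row in the `tstats` output at all, so it will not appear here.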

If the numbers are very uneven, start looking at outputs.conf and verify that your hosts have the appropriate outputs.conf configuration.
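Forwarders using auto load balancing distribute data only across the receivers listed in their target group. A host whose outputs.conf lists only some of your indexers will therefore never send to the others. Here is a minimal sketch of that check using Python's `configparser`, assuming a simple outputs.conf like the hypothetical one embedded below. The group name and host names are made up, and `target_indexers` is an illustrative helper. Real .conf settings are layered across several directories, so running `splunk btool outputs list --debug` on the forwarder is a more reliable way to see the merged configuration.

```python
import configparser

# Hypothetical outputs.conf contents; group and host names are made up.
OUTPUTS_CONF = """
[tcpout]
defaultGroup = primary_indexers

[tcpout:primary_indexers]
server = idx1.example.com:9997, idx2.example.com:9997, idx3.example.com:9997
"""


def target_indexers(conf_text):
    """Return the server list for each group named in [tcpout] defaultGroup."""
    parser = configparser.ConfigParser()
    parser.optionxform = str  # keep key case (e.g. defaultGroup) as written
    parser.read_string(conf_text)
    groups = parser.get("tcpout", "defaultGroup", fallback="")
    result = {}
    for group in (g.strip() for g in groups.split(",") if g.strip()):
        servers = parser.get(f"tcpout:{group}", "server", fallback="")
        result[group] = [s.strip() for s in servers.split(",") if s.strip()]
    return result


if __name__ == "__main__":
    for group, servers in target_indexers(OUTPUTS_CONF).items():
        print(group, "->", servers)
```

Comparing these lists across your forwarders should show whether some of them are missing indexers.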

You can also check the load over time:

```
| tstats count as event_count where index=* by splunk_server _time span=1d | timechart span=1d max(event_count) as total_events by splunk_server
```
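The daily search returns one row per indexer per day. Below is a minimal Python sketch, assuming those rows have been exported as `(day, splunk_server, event_count)` tuples. It flags days when the busiest indexer received far more than the quietest. The rows, the helper names and the 2.0 threshold are arbitrary examples. An indexer with no events on a given day has no row for that day, so it is simply left out of that day's ratio.

```python
from collections import defaultdict

# Hypothetical exported rows: (day, splunk_server, event_count). Numbers are made up.
rows = [
    ("2019-05-01", "idx1", 400_000),
    ("2019-05-01", "idx2", 390_000),
    ("2019-05-01", "idx3", 410_000),
    ("2019-05-02", "idx1", 700_000),
    ("2019-05-02", "idx2", 120_000),
    ("2019-05-02", "idx3", 95_000),
]


def daily_ratios(rows):
    """Map each day to max/min event count across the indexers that reported that day."""
    per_day = defaultdict(list)
    for day, _server, count in rows:
        per_day[day].append(count)
    return {day: max(c) / min(c) for day, c in sorted(per_day.items())}


def uneven_days(rows, threshold=2.0):
    """Return the days whose max/min ratio exceeds the (arbitrary) threshold."""
    return [day for day, ratio in daily_ratios(rows).items() if ratio > threshold]


if __name__ == "__main__":
    print(uneven_days(rows))
```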

Hope this leads you in the right direction.

Thank you @adonio.

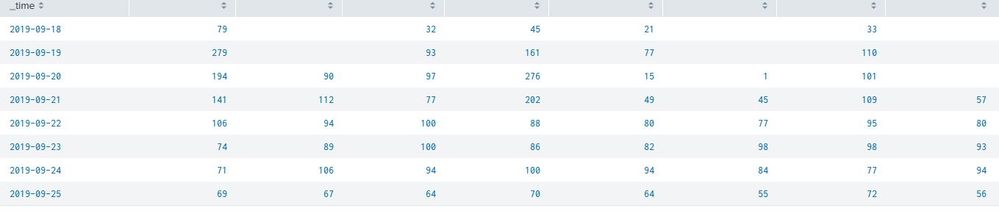

When running - | tstats count as event_count by splunk_server _time span=1d | timechart span=1d max(event_count) as total_events by splunk_server we see the following -

How come some of the cells are empty? These indexers were up and running every day...

I forgot to add the `where` clause to the `tstats` search; see my corrected version above.

Also, if an indexer has no events on a given day, that cell will be 0 or null (empty).
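`timechart ... by splunk_server` builds one column per indexer. A day/indexer combination with no events has nothing for `max()` to aggregate, so that cell is left empty. The minimal Python sketch below mimics that pivot on made-up rows and leaves missing combinations as `None` by default. Passing `fill=0` plays roughly the role that appending `| fillnull value=0` to the SPL search would. The `pivot` helper and the data are illustrative only.

```python
# Hypothetical (day, splunk_server, event_count) rows; idx3 has no row for day d2.
rows = [
    ("d1", "idx1", 500), ("d1", "idx2", 480), ("d1", "idx3", 510),
    ("d2", "idx1", 900), ("d2", "idx2", 870),
]


def pivot(rows, fill=None):
    """Build a day x server grid; combinations with no row get `fill` (None = empty cell)."""
    days = sorted({day for day, _, _ in rows})
    servers = sorted({server for _, server, _ in rows})
    lookup = {(day, server): count for day, server, count in rows}
    return {day: {s: lookup.get((day, s), fill) for s in servers} for day in days}


if __name__ == "__main__":
    print(pivot(rows))          # d2 / idx3 is None, like an empty timechart cell
    print(pivot(rows, fill=0))  # d2 / idx3 becomes 0, like fillnull value=0
```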

Really, really interesting, @adonio: adding the `where` clause changed everything.