Everything you Wanted to Know About Sending Logs to Splunk (With the new OpenTelemetry Collector)

This blog post is part of an ongoing series on OpenTelemetry.

Curious about OpenTelemetry but more interested in logs than APM tracing or metrics? Look no further! This blog post will walk you through your first OpenTelemetry Logging pipeline...

WARNING: WE ARE DISCUSSING A CURRENTLY UNSUPPORTED CONFIGURATION. When sending data to Splunk Enterprise, we currently only support the use of the OpenTelemetry Collector in Kubernetes environments. As always, use of the Collector is fully supported to send data to Splunk Observability Cloud.

The OpenTelemetry project is the second largest project of the Cloud Native Computing Foundation (CNCF). The CNCF is part of the Linux Foundation and, besides OpenTelemetry, also hosts Kubernetes, Jaeger, Prometheus, and Helm, among others.

OpenTelemetry defines a model to represent traces, metrics, and logs. Using this model, it orchestrates libraries in different programming languages to allow folks to collect this data. Just as important, the project delivers an executable named the OpenTelemetry Collector, which receives, processes, and exports data as a pipeline.

The OpenTelemetry Collector uses a component-based architecture, which allows folks to devise their own distribution by picking and choosing which components they want to support. Please see our official documentation to install the collector.

At Splunk, we manage the distribution of our version of the OpenTelemetry collector under this open-source repository. The repository contains our configuration and hardening parameters as well as examples.

The OpenTelemetry collector works using a configuration file written in YAML.

The collector defines pipelines for traces, metrics, and logs. For our case, we have defined a logs pipeline that reads from a file and sends its data to Splunk.

    pipelines:
      logs:
        receivers: [filelog]
        processors: [batch]
        exporters: [splunk_hec/logs]

We use a processor named the batch processor to place multiple entries in one payload, so we avoid sending many small, separate requests to Splunk.
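The pipeline refers to the batch processor by name, so the processor also needs its own entry in a processors section. Here is a minimal sketch of what that could look like. The two values are illustrative assumptions, not settings taken from the example repository; an empty `batch:` entry simply uses the processor's defaults.

```yaml
processors:
  batch:
    # Illustrative values (assumptions): send a batch once it holds
    # 1024 log records, or after 5 seconds, whichever comes first.
    send_batch_size: 1024
    timeout: 5s
```

Larger batches mean fewer HTTP requests to Splunk, at the cost of a slightly longer delay before events become searchable.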

Note we have placed our pipeline under the name logs. That means we intend to use this pipeline to ingest log records. If you have multiple log pipelines, they must start with logs, followed by a slash, and a unique name, such as:

    pipelines:
      logs:
        …
      logs/other:
        …
      logs/anotherone:
        …

This notation is also used for other components, such as filelog or splunk_hec in our example.
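To make the type/name notation concrete, here is a sketch with two named filelog receivers feeding two named logs pipelines. The names after the slash (`app`, `audit`) and the file paths are hypothetical, and the fragment assumes that a `batch` processor and a `splunk_hec/logs` exporter are defined elsewhere in the same file.

```yaml
receivers:
  # Two instances of the same receiver type, told apart by the name after the slash.
  filelog/app:
    include: [ /output/app.log ]      # hypothetical path
  filelog/audit:
    include: [ /output/audit.log ]    # hypothetical path

service:
  pipelines:
    logs/app:
      receivers: [filelog/app]
      processors: [batch]
      exporters: [splunk_hec/logs]
    logs/audit:
      receivers: [filelog/audit]
      processors: [batch]
      exporters: [splunk_hec/logs]
```

Both pipelines can share the same processor and exporter definitions; only the component that differs needs a second named instance.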

Going back to our pipeline, we have defined it to contain a receiver named "filelog". We have not written it down yet, so let’s write down our receivers:

    receivers:
      filelog:
        include: [ /output/file.log ]

Our filelog receiver will follow the file /output/file.log and ingest its contents.
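The filelog receiver accepts a few more options than the single path used here. The sketch below is a variation, not the configuration from the example: the glob pattern and the excluded file are hypothetical, and `start_at: beginning` asks the receiver to read existing file content rather than only lines appended after startup.

```yaml
receivers:
  filelog:
    # Follow every .log file in the directory (hypothetical pattern)...
    include: [ /output/*.log ]
    # ...except this one (hypothetical file name).
    exclude: [ /output/debug.log ]
    # Read files from the start instead of only picking up new lines.
    start_at: beginning
```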

We also need to define our Splunk HEC exporter. Here is our exporters section:

    exporters:
      splunk_hec/logs:
        token: "00000000-0000-0000-0000-0000000000000"
        endpoint: "https://splunk:8088/services/collector"
        source: "output"
        index: "logs"
        max_connections: 20
        disable_compression: false
        timeout: 10s
        tls:
          insecure_skip_verify: true

This exporter defines the configuration settings of a Splunk HEC endpoint. More documentation and examples are available as part of the OpenTelemetry Collector Contrib GitHub repository.

This particular exporter sends data to the logs index, under the source "output", to a Splunk instance reachable at the hostname splunk, with a HEC token that is just a set of zeroes. Certificate verification is skipped (insecure_skip_verify: true), which is only appropriate for a demonstration like this one.
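Put together, the fragments discussed so far fit into a single configuration file roughly as follows. This is a sketch assembled from the snippets in this post; the `processors` entry and the enclosing `service` key are not shown above and are assumptions based on the Collector's standard configuration layout.

```yaml
receivers:
  filelog:
    include: [ /output/file.log ]

processors:
  # Empty entry: the batch processor runs with its defaults.
  batch:

exporters:
  splunk_hec/logs:
    token: "00000000-0000-0000-0000-0000000000000"
    endpoint: "https://splunk:8088/services/collector"
    source: "output"
    index: "logs"
    tls:
      # Demo only: skips certificate verification.
      insecure_skip_verify: true

service:
  pipelines:
    logs:
      receivers: [filelog]
      processors: [batch]
      exporters: [splunk_hec/logs]
```

Every name listed in a pipeline must match a component defined in the corresponding top-level section, including any name after the slash.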

We’re now going to set all the pieces in motion to deliver to you this example end to end.

First, we are going to define a program that outputs data to a file.

    bash -c "while(true) do echo \"$$(date) new message\" >> /output/file.log ; sleep 1; done"

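This bash loop appends the current date, followed by "new message", to /output/file.log every second, until told to stop. The doubled $$ is Docker Compose's escape for a literal dollar sign, since the command lives in the Compose file; if you run the loop directly in a shell, use a single $.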
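For illustration, here is how such a command could be wired into Docker Compose as its own service. This is a sketch under assumptions: the service name `logging`, the `bash:latest` image, and the `./output` host directory are hypothetical choices, so check the example repository for the actual definition.

```yaml
services:
  logging:                       # hypothetical service name
    image: bash:latest           # assumption: any image that ships bash will do
    # $$ is Compose's escape for a literal $, so bash sees $(date).
    command: 'bash -c "while(true) do echo \"$$(date) new message\" >> /output/file.log ; sleep 1; done"'
    volumes:
      # Shared directory the collector can also mount to read file.log.
      - ./output:/output
```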

Second, we prepare a simple Splunk Enterprise Docker container to run for this example.

We set up its logs index with a splunk.yml configuration file:

    splunk:
      conf:
        indexes:
          directory: /opt/splunk/etc/apps/search/local
          content:
            logs:
              coldPath: $SPLUNK_DB/logs/colddb
              datatype: event
              homePath: $SPLUNK_DB/logs/db
              maxTotalDataSizeMB: 512000
              thawedPath: $SPLUNK_DB/logs/thaweddb

We load this file by mounting it as a volume. We also run the container with settings that set up a default HEC token, open ports, accept the Splunk license, and set a default admin password. Obviously, this is only useful here for our demonstration. There are more interesting configuration possibilities if you follow along with this GitHub repository for Splunk Docker, and be sure to check out Splunk Operator for larger, production-grade deployments.

All told, our Splunk server looks like this in our Docker Compose:

    # Splunk Enterprise server:
    splunk:
      image: splunk/splunk:latest
      container_name: splunk
      environment:
        - SPLUNK_START_ARGS=--accept-license
        - SPLUNK_HEC_TOKEN=00000000-0000-0000-0000-0000000000000
        - SPLUNK_PASSWORD=changeme
      ports:
        - 18000:8000
      healthcheck:
        test: ['CMD', 'curl', '-f', 'http://localhost:8000']
        interval: 5s
        timeout: 5s
        retries: 20
      volumes:
        - ./splunk.yml:/tmp/defaults/default.yml
        - /opt/splunk/var
        - /opt/splunk/etc
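The healthcheck on the Splunk service lets other services wait until Splunk is actually up. As a sketch, a collector service could use it as shown below. The service name, the image tag, the config file name, and the `./output` mount are assumptions for illustration, and `depends_on` with a `condition` requires a Compose version that supports it; refer to the example repository for the exact definition.

```yaml
services:
  otelcollector:                 # hypothetical service name
    # Splunk distribution of the OpenTelemetry Collector; pin a tag in real use.
    image: quay.io/signalfx/splunk-otel-collector:latest
    container_name: otelcollector
    # Assumed file name for the collector configuration.
    command: ["--config=/etc/otel-collector-config.yml"]
    volumes:
      - ./otel-collector-config.yml:/etc/otel-collector-config.yml
      # Same directory the log generator writes to (assumed host path).
      - ./output:/output
    depends_on:
      splunk:
        # Start only once the Splunk healthcheck passes.
        condition: service_healthy
```

Because both containers share the Compose network, the collector reaches HEC at https://splunk:8088 without that port being published on the host.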

With that, you are ready to try out our example.

To run this example, you will need at least 4 gigabytes of RAM, as well as git and Docker Desktop installed.

First, check out the repository using git clone:

    git clone https://github.com/signalfx/splunk-otel-collector.git

Using a terminal window, navigate to the folder examples/otel-logs-splunk.

Type:

    docker-compose up

This will start the OpenTelemetry Collector, our bash script generating data, and Splunk Enterprise.

Your terminal will display information as Splunk starts. Eventually, Splunk will display this table to let you know it is available:

    splunk | Wednesday 04 May 2022 02:04:18 +0000 (0:00:00.818) 0:00:47.544 *********
    splunk | ===============================================================================
    splunk | splunk_common : Start Splunk via CLI ----------------------------------- 11.97s
    splunk | splunk_common : Update Splunk directory owner --------------------------- 6.45s
    splunk | splunk_common : Update /opt/splunk/etc ---------------------------------- 5.42s
    splunk | splunk_common : Get Splunk status --------------------------------------- 2.50s
    splunk | Gathering Facts --------------------------------------------------------- 1.67s
    splunk | splunk_common : Hash the password --------------------------------------- 1.23s
    splunk | splunk_common : Set options in logs ------------------------------------- 1.11s
    splunk | splunk_common : Test basic https endpoint ------------------------------- 1.00s
    splunk | Check for required restarts --------------------------------------------- 0.82s
    splunk | splunk_standalone : Check for required restarts ------------------------- 0.77s
    splunk | splunk_standalone : Update HEC token configuration ---------------------- 0.72s
    splunk | splunk_standalone : Get existing HEC token ------------------------------ 0.69s
    splunk | splunk_standalone : Setup global HEC ------------------------------------ 0.69s
    splunk | splunk_common : Check for scloud ---------------------------------------- 0.66s
    splunk | splunk_common : Generate user-seed.conf (Linux) ------------------------- 0.63s
    splunk | splunk_common : Find manifests ------------------------------------------ 0.54s
    splunk | splunk_common : Wait for splunkd management port ------------------------ 0.43s
    splunk | splunk_common : Cleanup Splunk runtime files ---------------------------- 0.40s
    splunk | splunk_common : Check for existing splunk secret ------------------------ 0.30s
    splunk | splunk_common : Check for existing installation ------------------------- 0.30s
    splunk | ===============================================================================
    splunk |
    splunk | Ansible playbook complete, will begin streaming splunkd_stderr.log

Now, you can open your web browser and navigate to http://localhost:18000. You can log in as admin/changeme.

You will be met with a few prompts as this is a new Splunk instance. Make sure to read and acknowledge them, and open the default search application.

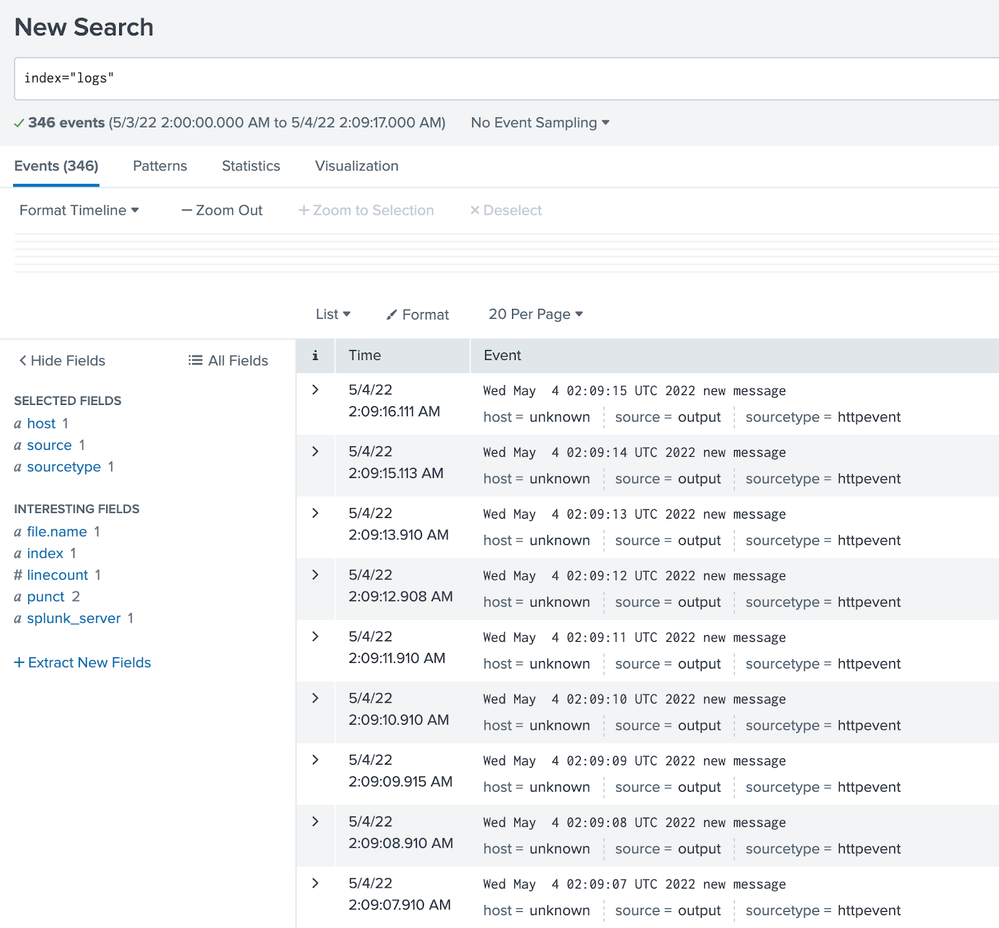

In this application, enter this search to look for logs:

    index="logs"

The latest logs generated by the bash script will show:

After exploring this example, you can press Ctrl+C to exit from Docker Compose. Thank you for following along! With this example, you have deployed a simple pipeline to ingest the contents of a file into Splunk Enterprise.

If you found this example interesting, feel free to star the repository! Just click the star icon in the top right corner. Any ideas or comments? Please open an issue on the repository.

— Antoine Toulme, Senior Engineering Manager, Blockchain & DLT
