Why is the Kafka Messaging Modular Input not working on a Splunk 6.2.3 Universal Forwarder?

tpaulsen

Contributor

06-08-2015

02:01 AM

Hello,

I am currently trying to make Damien's Kafka Modular Input run on a Splunk Universal Forwarder 6.2.3 under RedHat 6.

Unfortunately, I am getting the following errors in splunkd.log:

06-02-2015 09:33:38.282 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" SLF4J: Failed to load class "org.slf4j.impl.StaticLoggerBinder".

06-02-2015 09:33:38.282 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" SLF4J: Defaulting to no-operation (NOP) logger implementation

06-02-2015 09:33:38.282 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" SLF4J: See http://www.slf4j.org/codes.html#StaticLoggerBinder for further details.

We are running Python 2.6 on the host, but Python 2.7 is the recommended version. Could this be the root cause?

Here is our inputs.conf:

# Kafka Data Inputs {{{

[kafka://kafka-hostname]

additional_consumer_properties = group.id=7

auto_commit_interval_ms = 1000

group_id = 6

index = test

sourcetype = kafka-test

topic_name = logstash

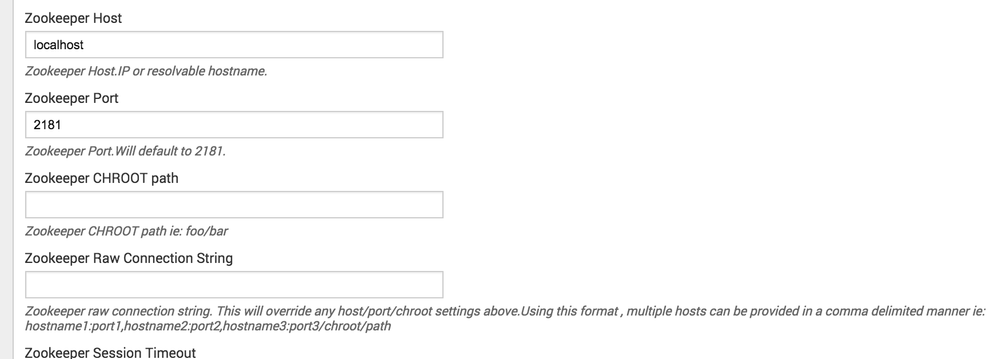

zookeeper_connect_host = zoo-hostname1,zoo-hostname2,zoo-hostname3,zoo-hostname4,zoo-hostname5

zookeeper_connect_port = 2181

zookeeper_session_timeout_ms = 400

zookeeper_sync_time_ms = 200

disabled = false

# }}}

When I use only one ZooKeeper host and leave out the following lines:

additional_consumer_properties = group.id=7

auto_commit_interval_ms = 1000

group_id = 6

I get different error messages in splunkd.log:

06-02-2015 11:06:15.242 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at kafka.consumer.ZookeeperConsumerConnector.kafka$consumer$ZookeeperConsumerConnector$$registerConsumerInZK(ZookeeperConsumerConnector.scala:226)

06-02-2015 11:06:15.242 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at kafka.consumer.ZookeeperConsumerConnector.consume(ZookeeperConsumerConnector.scala:211)

06-02-2015 11:06:15.242 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at kafka.javaapi.consumer.ZookeeperConsumerConnector.createMessageStreams(ZookeeperConsumerConnector.scala:80)

06-02-2015 11:06:15.242 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at kafka.javaapi.consumer.ZookeeperConsumerConnector.createMessageStreams(ZookeeperConsumerConnector.scala:92)

06-02-2015 11:06:15.243 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at com.splunk.modinput.kafka.KafkaModularInput$MessageReceiver.run(Unknown Source)

06-02-2015 11:06:15.248 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" Stanza kafka://kafka-dev-290687.lhotse.ov.otto.de : Error running message receiver : java.lang.IllegalArgumentException: Invalid path string "/consumers//ids/_kafka-dev-290687-1433235975245-76b9eb2e" caused by empty node name specified @11

06-02-2015 11:06:15.248 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at org.apache.zookeeper.common.PathUtils.validatePath(PathUtils.java:99)

06-02-2015 11:06:15.248 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at org.apache.zookeeper.common.PathUtils.validatePath(PathUtils.java:35)

06-02-2015 11:06:15.248 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at org.apache.zookeeper.ZooKeeper.create(ZooKeeper.java:626)

06-02-2015 11:06:15.248 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at org.I0Itec.zkclient.ZkConnection.create(ZkConnection.java:87)

06-02-2015 11:06:15.248 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at org.I0Itec.zkclient.ZkClient$1.call(ZkClient.java:308)

06-02-2015 11:06:15.248 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at org.I0Itec.zkclient.ZkClient$1.call(ZkClient.java:304)

06-02-2015 11:06:15.248 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at org.I0Itec.zkclient.ZkClient.retryUntilConnected(ZkClient.java:675)

06-02-2015 11:06:15.248 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at org.I0Itec.zkclient.ZkClient.create(ZkClient.java:304)

06-02-2015 11:06:15.248 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at org.I0Itec.zkclient.ZkClient.createEphemeral(ZkClient.java:328)

06-02-2015 11:06:15.248 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at kafka.utils.ZkUtils$.createEphemeralPath(ZkUtils.scala:253)

06-02-2015 11:06:15.248 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at kafka.utils.ZkUtils$.createEphemeralPathExpectConflict(ZkUtils.scala:268)

06-02-2015 11:06:15.248 +0200 ERROR ExecProcessor - message from "python /var/opt/splunk/etc/apps/kafka_ta/bin/kafka.py" at kafka.utils.ZkUtils$.createEphemeralPathExpectConflictHandleZKBug(ZkUtils.scala:306)

What am I doing wrong in my configuration?

Please help.
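A note on the second set of errors: the message `Invalid path string "/consumers//ids/..." caused by empty node name specified @11` looks consistent with the consumer group ID being empty once the `group_id` line is left out. The Kafka 0.8 ZooKeeper-based consumer registers itself under `/consumers/<group id>/ids/<consumer id>`, so an empty group ID would leave two adjacent slashes at index 11. The leading underscore in the consumer ID in the log points the same way. The sketch below only reproduces that arithmetic. The function names are hypothetical, and the check is a simplified stand-in for ZooKeeper's path validation, not its actual code.

```python
def consumer_registry_path(group_id, consumer_id):
    """Build the registry path in the /consumers/<group>/ids/<consumer> layout."""
    return "/consumers/{}/ids/{}".format(group_id, consumer_id)


def find_empty_node(path):
    """Return the index of the first empty node name (two adjacent slashes), or None."""
    for i in range(1, len(path)):
        if path[i] == "/" and path[i - 1] == "/":
            return i
    return None


# Consumer ID copied from the splunkd.log line in the question.
consumer_id = "_kafka-dev-290687-1433235975245-76b9eb2e"

# Assumption: omitting group_id results in an empty group ID being used.
bad = consumer_registry_path("", consumer_id)
# With a non-empty group ID (the value 6 is taken from the original stanza).
good = consumer_registry_path("6", consumer_id)

print(bad, find_empty_node(bad))
print(good, find_empty_node(good))
```

If this reading is right, keeping a non-empty `group_id` in the stanza while testing with a single ZooKeeper host should avoid this particular exception. It does not say anything about the SLF4J messages from the first attempt.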

Damien_Dallimor

Ultra Champion

09-04-2015

03:02 AM

Damien_Dallimor

Ultra Champion

06-10-2015

08:38 AM

Do you get the error if you try with a single ZooKeeper host?

tpaulsen

Contributor

09-04-2015

01:57 AM

Not yet. My attempts were interrupted by other project work involving Kafka and ELK. 😞
