Hello,

Last week I started using the TrackMe app, and so far I'm really impressed with all the prebuilt functionality.

Over the last few days I have been going through the configuration step by step and applying it to my data. Today I found some alerts caused by outliers in sourcetypes. My problem is that in some cases I don't understand why the event count in the outlier detection got so high: searching the indexed data over the same time range tells me everything is normal, and the count is not as high as "detected".

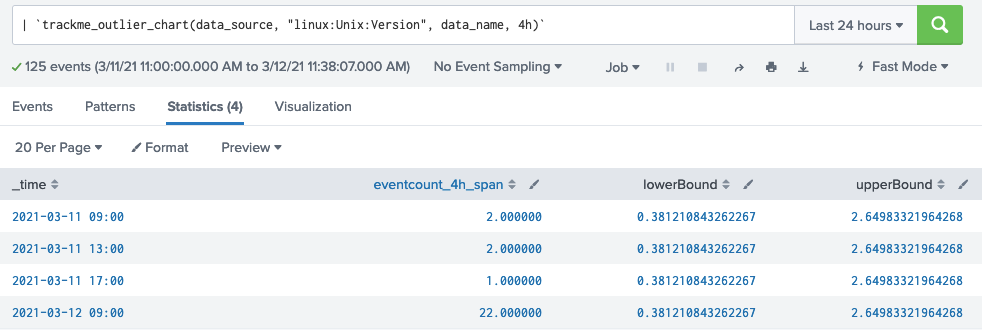

Below is the detected outlier with a count of 22:

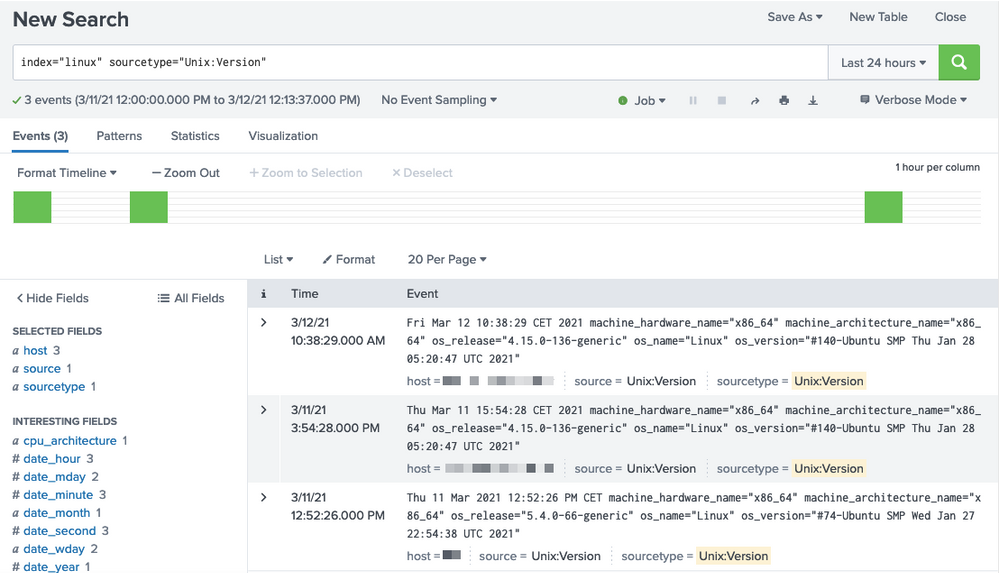

But the indexed data still shows an event count of 1:

Where is the count of 22 coming from?

How can I investigate this? Is there something I may have configured the wrong way?

Many thanks and happy splunking,

Sara


Hi @SaraO

Thank you 😉 Glad you like the richness of TrackMe!

Document reference:

https://trackme.readthedocs.io/en/latest/userguide.html#outliers-detection-and-behaviour-analytic

To answer:

- The outliers event count is a per-4-hour count, so it will not exactly match what you see in Splunk unless you reproduce the way the outlier calculation works.

- I'm not sure where the 22 comes from based on your screenshots. Because of the time rounding, you should look at a bit more than the last 24 hours to check.

- This data source is unlikely to be a great candidate for outliers detection. The feature is very much designed for continuous, real-time data flows rather than this very specific use case, which only sporadically generates a single event per server. That's not to say you cannot get value from outliers in this case (you can), but it's certainly not the most valuable one.

- Note that the outliers detection workflow in TrackMe does not alert on upper outliers by default, only on the lower bound threshold. The upper threshold is something you enable on a per-entity basis if you wish to do so.
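To see why a per-4-hour count rarely lines up with an ad hoc search, here is a minimal sketch that rounds each event timestamp down to a 4-hour bucket and counts per bucket, next to a plain count over an arbitrary search window. It is an illustration under assumptions, not TrackMe's implementation: the alignment of buckets on the Unix epoch (00:00, 04:00, ... UTC) and the helper names `bucket_counts_4h` and `count_in_window` are hypothetical.

```python
from collections import Counter
from datetime import datetime, timezone

# Assumption: 4-hour buckets aligned on the Unix epoch (00:00, 04:00, ... UTC).
BUCKET_SECONDS = 4 * 3600


def bucket_counts_4h(timestamps):
    """Round each epoch timestamp down to its 4-hour bucket and count per bucket."""
    counts = Counter((int(ts) // BUCKET_SECONDS) * BUCKET_SECONDS for ts in timestamps)
    return dict(sorted(counts.items()))


def count_in_window(timestamps, earliest, latest):
    """Plain event count between earliest (inclusive) and latest (exclusive)."""
    return sum(1 for ts in timestamps if earliest <= ts < latest)


def fmt(epoch):
    """Human-readable UTC label for a bucket start."""
    return datetime.fromtimestamp(epoch, tz=timezone.utc).strftime("%Y-%m-%d %H:%M")


if __name__ == "__main__":
    day = 1_700_006_400  # 2023-11-15 00:00 UTC
    # Hypothetical events at 01:00, 01:30, 03:59 and 05:00 UTC
    events = [day + 3600, day + 5400, day + 3 * 3600 + 59 * 60, day + 5 * 3600]
    for start, n in bucket_counts_4h(events).items():
        print(fmt(start), n)
    # A 02:00-06:00 search straddles two buckets, so its count matches neither bucket
    print("02:00-06:00 search:", count_in_window(events, day + 2 * 3600, day + 6 * 3600))
```

Here the buckets report 3 and 1 events while the 02:00 to 06:00 search reports 2, which is why checking a slightly wider time range than the last 24 hours helps when comparing against the outliers screen.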

Let me know if you have any more questions 😉

Guilhem


Hi @guilmxm,

Thank you for your response 🙂

Do you have a recommendation on how to configure outliers detection for data sources that send data every 12 or 24 hours?

I would like to monitor all data sources for unusual volume behavior: either data has stopped arriving for a source, or much more data is arriving than usual (due to some changes, unexpected activity, ...).

Regards

Sara


@SaraO

This use case is totally relevant and addressed in TrackMe, in different ways.

- Has data stopped being indexed for a source?

--> This is the purpose of one of the main KPIs in TrackMe, called event lagging: basically the difference between now (when the tracker runs) and the latest event in the scope of the data source (from the _time point of view).

- Unusual volume?

--> This is within the scope of outliers detection too, so no problem there.
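The event lag KPI described above boils down to a subtraction, so a minimal sketch is short. The names `event_lag`, `lag_breached` and `max_lag_seconds` are hypothetical and are not TrackMe settings. It also shows one consequence of the definition for a source that delivers every 24 hours: the lag normally climbs to roughly 24 hours between deliveries, so the assumed threshold has to sit above that interval (here 26 hours, an arbitrary margin) or every quiet period would be flagged.

```python
import time


def event_lag(latest_event_time, now=None):
    """Seconds between now (when the tracker runs) and the latest event _time."""
    if now is None:
        now = time.time()
    return now - latest_event_time


def lag_breached(latest_event_time, max_lag_seconds, now=None):
    """True if the source has been silent for longer than max_lag_seconds."""
    return event_lag(latest_event_time, now) > max_lag_seconds


if __name__ == "__main__":
    now = 1_700_006_400
    # Hypothetical threshold for a daily source: 24h delivery interval plus a 2h margin
    daily_threshold = 26 * 3600
    print(lag_breached(now - 23 * 3600, daily_threshold, now))  # last event 23h ago
    print(lag_breached(now - 30 * 3600, daily_threshold, now))  # last event 30h ago
```

With these assumptions the first check is not breached and the second one is, so a source that stops delivering is caught about a day later without alerting between normal deliveries.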

I was essentially saying this source wasn't a great candidate because it has very few events, but your use case still remains valid.
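One way to see why a source with very few events is a weak fit is to compute bounds over its historical 4-hour bucket counts. The sketch below assumes bounds of mean ± k standard deviations with a hypothetical multiplier `k=4.0`; this rule is an assumption for illustration and may differ from TrackMe's actual calculation. The `alert_on_upper` flag mimics the behaviour described earlier in the thread, where only the lower bound alerts unless the upper bound is enabled per entity.

```python
from statistics import mean, pstdev


def outlier_bounds(counts, k=4.0):
    """Return (lower, upper) as mean -/+ k * population stdev of bucket counts.

    k is a hypothetical multiplier chosen for illustration.
    """
    mu = mean(counts)
    sigma = pstdev(counts)
    return mu - k * sigma, mu + k * sigma


def is_outlier(current, counts, k=4.0, alert_on_upper=False):
    """Lower-bound breaches always count; upper-bound breaches only if enabled."""
    lower, upper = outlier_bounds(counts, k)
    if current < lower:
        return True
    return alert_on_upper and current > upper


if __name__ == "__main__":
    # Hypothetical sparse source: one event per day, i.e. one non-empty bucket out of six, for a week
    sparse = [1, 0, 0, 0, 0, 0] * 7
    lower, upper = outlier_bounds(sparse)
    print(f"sparse bounds: {lower:.2f} .. {upper:.2f}")
    print("drop to 0 flagged:", is_outlier(0, sparse))
    print("spike to 10 flagged (default):", is_outlier(10, sparse))
    print("spike to 10 flagged (upper enabled):", is_outlier(10, sparse, alert_on_upper=True))
    # Hypothetical continuous source with steady volume per bucket
    steady = [980, 1010, 1000, 995, 1015, 1000]
    print("steady source drop to 0 flagged:", is_outlier(0, steady))
```

Under these assumptions the lower bound of the sparse series is negative, so a drop to zero can never be flagged, and a spike is only reported once the upper bound is enabled. The steady series, by contrast, has a tight lower bound, so a drop to zero is caught immediately. For missing data on sparse sources, the event lag check is the better fit.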