Setting host and sourcetype in elasticsearch-data-integrator

robertlynch2020

Influencer

11-04-2019

05:49 AM

Hi @larmesto

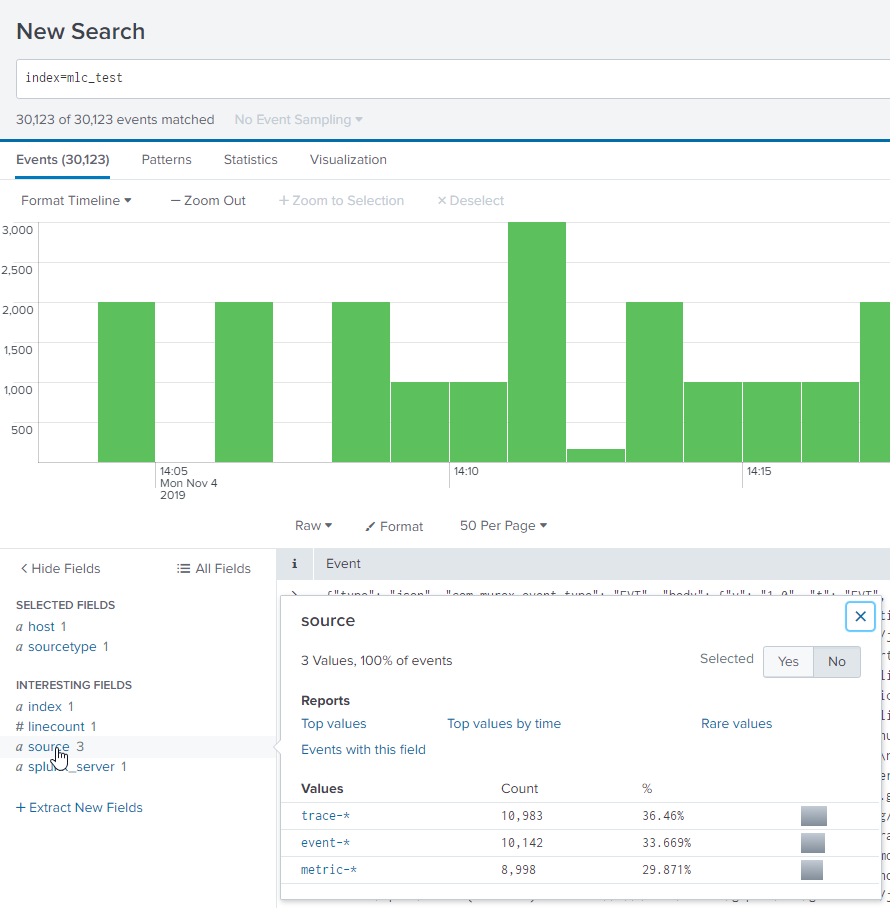

I have the following configuration, which is working and bringing data into Splunk from Elasticsearch via TA-elasticsearch-data-integrator.

The issue is that I can't specify the host or the sourcetype.

By default, sourcetype = JSON and host is the name of the Splunk machine. I want to change this because I need to connect to many Elasticsearch instances.

[elasticsearch_json://metric]

date_field_name = timestamp

elasticsearch_indice = metric-*

elasticsearch_instance_url = http://mx12405vm

greater_or_equal = 2019-01-01

index = mlc_test

interval = 60

lower_or_equal = now

port = 10212

use_ssl = False

verify_certs = False

user =

secret =

sourcetype = Metric_Elastic

disabled = 0

I am getting an error; however, the data is going into the correct index, so I'm not sure whether it is related.

2019-11-04 14:41:59,052 INFO pid=11049 tid=MainThread file=base.py:log_request_success:118 | DELETE http://mx12405vm:10212/_search/scroll [status:200 request:0.014s]

2019-11-04 14:41:59,053 ERROR pid=11049 tid=MainThread file=base_modinput.py:log_error:307 | Get error when collecting events.

Traceback (most recent call last):

File "/hp737srv2/apps/splunk/etc/apps/TA-elasticsearch-data-integrator---modular-input/bin/ta_elasticsearch_data_integrator_modular_input/modinput_wrapper/base_modinput.py", line 127, in stream_events

self.collect_events(ew)

File "/hp737srv2/apps/splunk/etc/apps/TA-elasticsearch-data-integrator---modular-input/bin/elasticsearch_json.py", line 104, in collect_events

input_module.collect_events(self, ew)

File "/hp737srv2/apps/splunk/etc/apps/TA-elasticsearch-data-integrator---modular-input/bin/input_module_elasticsearch_json.py", line 83, in collect_events

for doc in res:

File "/hp737srv2/apps/splunk/etc/apps/TA-elasticsearch-data-integrator---modular-input/bin/ta_elasticsearch_data_integrator_modular_input/elasticsearch/helpers/actions.py", line 458, in scan

resp = client.scroll(**scroll_kwargs)

File "/hp737srv2/apps/splunk/etc/apps/TA-elasticsearch-data-integrator---modular-input/bin/ta_elasticsearch_data_integrator_modular_input/elasticsearch/client/utils.py", line 84, in _wrapped

return func(*args, params=params, **kwargs)

File "/hp737srv2/apps/splunk/etc/apps/TA-elasticsearch-data-integrator---modular-input/bin/ta_elasticsearch_data_integrator_modular_input/elasticsearch/client/__init__.py", line 1315, in scroll

"GET", "/_search/scroll", params=params, body=body

File "/hp737srv2/apps/splunk/etc/apps/TA-elasticsearch-data-integrator---modular-input/bin/ta_elasticsearch_data_integrator_modular_input/elasticsearch/transport.py", line 353, in perform_request

timeout=timeout,

File "/hp737srv2/apps/splunk/etc/apps/TA-elasticsearch-data-integrator---modular-input/bin/ta_elasticsearch_data_integrator_modular_input/elasticsearch/connection/http_urllib3.py", line 251, in perform_request

self._raise_error(response.status, raw_data)

File "/hp737srv2/apps/splunk/etc/apps/TA-elasticsearch-data-integrator---modular-input/bin/ta_elasticsearch_data_integrator_modular_input/elasticsearch/connection/base.py", line 178, in _raise_error

status_code, error_message, additional_info

NotFoundError: NotFoundError(404, u'index_not_found_exception', u'no such index', bad-request, index_or_alias)

^C

You have new mail in /var/spool/mail/autoengine


BigCosta

Path Finder

11-14-2019

07:15 AM

Hi!

I think the inability to set your own sourcetype is a bug, but there is a workaround: match on the source to override the sourcetype and host. Add a TRANSFORMS setting to your props.conf and define the transformations in transforms.conf.

props.conf

[source::metric-*]

SHOULD_LINEMERGE = 0

KV_MODE = json

TIME_PREFIX = "@timestamp":

MAX_TIMESTAMP_LOOKAHEAD = 40

TRANSFORMS-host_sourcetype_override = elk_host_override, elk_sourcetype_override

transforms.conf

[elk_host_override]

REGEX = "beat":\s*{[^}]*?"hostname":\s*"([^"]+)

DEST_KEY = MetaData:Host

FORMAT = host::$1

[elk_sourcetype_override]

REGEX = .

DEST_KEY = MetaData:Sourcetype

FORMAT = sourcetype::Metric_Elastic
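Before deploying, it can help to check the host regex against one of your own events. Below is a minimal Python sketch of that check. The sample event and the helper name extract_host are made up for illustration, the opening brace is escaped for readability, and Python's re module is only an approximation of the PCRE engine Splunk uses, so treat a match here as a sanity check rather than proof.

```python
import json
import re

# Same pattern as REGEX in [elk_host_override]; the "{" is escaped here
# for readability (assumption: Splunk's PCRE treats both forms alike).
HOST_REGEX = re.compile(r'"beat":\s*\{[^}]*?"hostname":\s*"([^"]+)')


def extract_host(raw_event):
    """Return the value the transform would capture as $1, or None."""
    match = HOST_REGEX.search(raw_event)
    return match.group(1) if match else None


# Hypothetical event; replace with a raw event copied from your own index.
sample_event = json.dumps({
    "@timestamp": "2019-11-04T14:41:59.052Z",
    "beat": {"name": "metricbeat", "hostname": "es-node-01"},
    "value": 1,
})

if __name__ == "__main__":
    host = extract_host(sample_event)
    if host is None:
        print("No match: host would stay as the Splunk machine name")
    else:
        print("host::" + host)
```

If your documents have no "beat" object with a "hostname" key, the regex will not match and the host will not be overridden; in that case adjust REGEX to whichever field carries the originating host in your data.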
