Regex data parsing using Delimiter comma "has exce...

Hi,

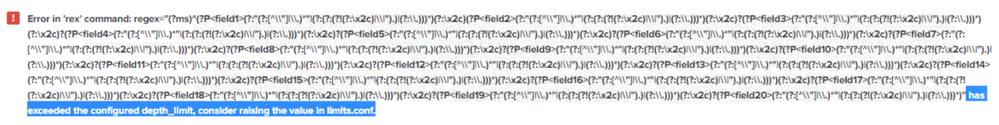

while data parsing i'm using the delimiter section to parse my data at that time i get the error

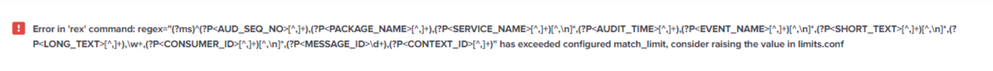

when i try to extract the same log using the "Regular" option i get the following error

please advice

Regards,

If it is indeed just comma-separated data, then why use regular expressions? And just out of curiosity, how did you end up with the regular expressions that were causing issues? Did you let Splunk generate them somehow?

Just configure DELIMS-based extractions along these lines:

props.conf

[yoursourcetype]

REPORT-comma-separated-fields = comma-separated-fields

transforms.conf

[comma-separated-fields]

DELIMS = ","

FIELDS = AUD_SEQ_NO,PACKAGE_NAME,SERVICE_NAME,AUDIT_TIME,EVENT_NAME,SHORT_TEXT,LONG_TEXT,AUDIT_DATA,CONSUMER_ID,MESSAGE_ID,CONTEXT_ID,USER_NAME,USER_ID,USER_CONTEXT,COMPANY_ID,VERSION,SESSION_ID,CHANNEL_ID,BUSINESSUNIT_ID,SERVER_NAME
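To make the DELIMS/FIELDS pairing above concrete, here is a minimal Python sketch of the idea (not Splunk's own code): split each event on the delimiter and assign the values to the FIELDS names by position. It assumes single-line events with a single delimiter character and no quoted or embedded commas; the helper name `delims_extract` is hypothetical. The sample event is the one posted later in this thread.

```python
# Hypothetical sketch of a DELIMS = "," + FIELDS extraction; not Splunk's implementation.
# Field names copied from the transforms.conf FIELDS line above.
FIELDS = (
    "AUD_SEQ_NO,PACKAGE_NAME,SERVICE_NAME,AUDIT_TIME,EVENT_NAME,SHORT_TEXT,"
    "LONG_TEXT,AUDIT_DATA,CONSUMER_ID,MESSAGE_ID,CONTEXT_ID,USER_NAME,USER_ID,"
    "USER_CONTEXT,COMPANY_ID,VERSION,SESSION_ID,CHANNEL_ID,BUSINESSUNIT_ID,SERVER_NAME"
).split(",")

SAMPLE = (
    "1162340588,xx_xxx,xx.yy.eded.aasa.mka,2018-05-30 19:49:54.477,End,"
    "Service TokenService Operation getTokenList completed successfully.,"
    "NULL,NULL,user1,Id1njk23nj13jma,NULL,NULL,NULL,UNKNOWN,BS0020001,v1,"
    "NULL,NULL,NULL,ppoansdo12-st34.metest.local"
)


def delims_extract(event: str, delim: str, fields: list[str]) -> dict[str, str]:
    """Split one event on a single delimiter and name the values by position."""
    values = event.split(delim)  # empty values between consecutive delimiters are kept
    # zip() stops at the shorter sequence, so surplus values or names are silently dropped.
    return dict(zip(fields, values))


extracted = delims_extract(SAMPLE, ",", FIELDS)
for name, value in extracted.items():
    print(f"{name} = {value}")
```

With that sample, CONSUMER_ID comes out as user1 and SERVER_NAME as ppoansdo12-st34.metest.local, because the event has exactly 20 comma-separated values for the 20 names.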

Hi Frank,

In addition to the above question, I would like to ask about the limits.conf file you shared with me before. The values in the file you shared are the same as those in my Splunk installation. Could you please advise which value I should change to increase the regex limit?

Regards,

Vigneshprasanna R

I don't think you want to change those values unless you understand exactly why you are hitting those limits and raising them is the only solution. Hitting those limits usually means something is wrong with your configuration, which you should fix rather than stretching the limits.

Hi Frank,

Thanks again for the effort. Yes, I added the data to Splunk, opened one of the logs, and used the "Extract Fields" option. It offered two ways to extract the fields, "Delimiter" and "Regular". I tried both but ended up with the errors I posted above.

I'm new to Splunk, so I have a few more questions about .conf files. Could you please tell me where I should create the .conf files you shared in your previous answer?

One more question: I have many types of logs in my system. How do I specify that this configuration applies only to a specific type of log?

Thanks & Regards,

Vigneshprasanna R

I tested the GUI field extraction using the Delimiter method, and it works fine on the sample you shared. Could you share one or more screenshots showing the steps you performed that led to the error?

In this case, props.conf and transforms.conf should go on your search head(s). You could put them under $SPLUNK_HOME/etc/system/local (or add the settings to the files already there), but a better solution is to put them in your own custom app directory under $SPLUNK_HOME/etc/apps/.
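For the custom-app option, a minimal Python sketch of the resulting layout: it writes the two stanzas from the earlier reply into `$SPLUNK_HOME/etc/apps/<app>/local/`. The app name `my_comma_fields` and the helper `write_app_config` are hypothetical, `yoursourcetype` is still the placeholder from that reply, and the script only creates the files; it does not reload or restart Splunk.

```python
from pathlib import Path

# Hypothetical app name; choose your own.
APP_NAME = "my_comma_fields"

# props.conf: the stanza name scopes the extraction to events of one sourcetype
# ("yoursourcetype" is the placeholder from the earlier reply).
PROPS_CONF = """[yoursourcetype]
REPORT-comma-separated-fields = comma-separated-fields
"""

TRANSFORMS_CONF = """[comma-separated-fields]
DELIMS = ","
FIELDS = AUD_SEQ_NO,PACKAGE_NAME,SERVICE_NAME,AUDIT_TIME,EVENT_NAME,SHORT_TEXT,LONG_TEXT,AUDIT_DATA,CONSUMER_ID,MESSAGE_ID,CONTEXT_ID,USER_NAME,USER_ID,USER_CONTEXT,COMPANY_ID,VERSION,SESSION_ID,CHANNEL_ID,BUSINESSUNIT_ID,SERVER_NAME
"""


def write_app_config(splunk_home: str, app_name: str = APP_NAME) -> Path:
    """Create <splunk_home>/etc/apps/<app_name>/local/ and write both .conf files there."""
    local_dir = Path(splunk_home) / "etc" / "apps" / app_name / "local"
    local_dir.mkdir(parents=True, exist_ok=True)
    (local_dir / "props.conf").write_text(PROPS_CONF)
    (local_dir / "transforms.conf").write_text(TRANSFORMS_CONF)
    return local_dir
```

On the search head you might call `write_app_config(os.environ["SPLUNK_HOME"])`; creating the same two files by hand in that directory is equivalent.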

It looks like you're running into the limits of the following two settings (from the limits.conf spec):

[rex]

match_limit = <integer>

* Limits the amount of resources that are spent by PCRE when running patterns that will not match.
* Use this to set an upper bound on how many times PCRE calls an internal function, match(). If set too low, PCRE might fail to correctly match a pattern.
* Default: 100000

depth_limit = <integer>

* Limits the amount of resources that are spent by PCRE when running patterns that will not match.
* Use this to limit the depth of nested backtracking in an internal PCRE function, match(). If set too low, PCRE might fail to correctly match a pattern.
* Default: 1000

For your second case, I'm guessing the multiple [^,\n]* bits are causing some issues. Perhaps you can share some sample data and explain what you want to achieve, so that we can help tune your regex?
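As a sketch of what a tuned regex could look like for this data (using the sample and headings posted further down), the pattern can be generated from the field list: one named group per field, each `[^,\n]*` followed by a literal comma, with the whole pattern anchored by `^` and `$`. Because a group can never consume a comma, a matching line can only be split one way, and a non-matching line gives the engine little to backtrack over. This is Python; the `(?P<name>...)` group syntax is also accepted by PCRE, but treat the generated pattern as untested in Splunk. `build_delimited_regex` is a hypothetical helper.

```python
import re

# Field names from the headings posted in this thread.
FIELDS = (
    "AUD_SEQ_NO,PACKAGE_NAME,SERVICE_NAME,AUDIT_TIME,EVENT_NAME,SHORT_TEXT,"
    "LONG_TEXT,AUDIT_DATA,CONSUMER_ID,MESSAGE_ID,CONTEXT_ID,USER_NAME,USER_ID,"
    "USER_CONTEXT,COMPANY_ID,VERSION,SESSION_ID,CHANNEL_ID,BUSINESSUNIT_ID,SERVER_NAME"
).split(",")

SAMPLE = (
    "1162340588,xx_xxx,xx.yy.eded.aasa.mka,2018-05-30 19:49:54.477,End,"
    "Service TokenService Operation getTokenList completed successfully.,"
    "NULL,NULL,user1,Id1njk23nj13jma,NULL,NULL,NULL,UNKNOWN,BS0020001,v1,"
    "NULL,NULL,NULL,ppoansdo12-st34.metest.local"
)


def build_delimited_regex(fields: list[str], delim: str = ",") -> re.Pattern:
    """Build an anchored pattern with one named group per field."""
    d = re.escape(delim)
    # [^<delim>\n]* cannot run past a delimiter, so each group has exactly one possible end.
    groups = [rf"(?P<{name}>[^{d}\n]*)" for name in fields]
    return re.compile("^" + d.join(groups) + "$")


pattern = build_delimited_regex(FIELDS)
match = pattern.match(SAMPLE)
if match:
    print(match.group("USER_CONTEXT"), match.group("COMPANY_ID"))
```

For plain comma-separated data, the DELIMS approach suggested earlier in the thread remains the simpler option.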

How can we extract fields using two delimiters? Can we do it as below?

DELIMS = "|", ":"

Do we need to specify the field names, or does it use the keys as field names? Here is my data sample:

name:"James Bond"|profession:"Actor"|Movie:"Tomorrow Never Dies"
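As I read the transforms.conf spec, DELIMS accepts two quoted strings for key/value data like this: the first splits the event into field/value pairs and the second separates each field name from its value, so the names come from the data and FIELDS is not needed. Below is a minimal Python sketch of that two-level split using the hypothetical helper `split_pairs`; stripping the surrounding double quotes from values is an assumption of this sketch, not a claim about what Splunk does with them.

```python
def split_pairs(event: str, pair_delim: str = "|", kv_delim: str = ":") -> dict[str, str]:
    """Two-level split: event -> pairs on pair_delim, then name/value on kv_delim."""
    fields: dict[str, str] = {}
    for pair in event.split(pair_delim):
        name, sep, value = pair.partition(kv_delim)  # split on the first kv_delim only
        if not sep:
            continue  # no name/value delimiter in this chunk, so skip it
        # Assumption: surrounding double quotes are not part of the value.
        fields[name.strip()] = value.strip().strip('"')
    return fields


print(split_pairs('name:"James Bond"|profession:"Actor"|Movie:"Tomorrow Never Dies"'))
# {'name': 'James Bond', 'profession': 'Actor', 'Movie': 'Tomorrow Never Dies'}
```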

Hi Frank,

Thanks in advance 🙂

Sample message:

1162340588,xx_xxx,xx.yy.eded.aasa.mka,2018-05-30 19:49:54.477,End,Service TokenService Operation getTokenList completed successfully.,NULL,NULL,user1,Id1njk23nj13jma,NULL,NULL,NULL,UNKNOWN,BS0020001,v1,NULL,NULL,NULL,ppoansdo12-st34.metest.local

Headings to apply:

AUD_SEQ_NO PACKAGE_NAME SERVICE_NAME AUDIT_TIME EVENT_NAME SHORT_TEXT LONG_TEXT AUDIT_DATA CONSUMER_ID MESSAGE_ID CONTEXT_ID USER_NAME USER_ID USER_CONTEXT COMPANY_ID VERSION SESSION_ID CHANNEL_ID BUSINESSUNIT_ID SERVER_NAME