Splunk alert no results

The only thing that I can think of is that your events are expiring between when the alert fires and when you double-check. The search below should tell you how old the oldest event still in the index is (see the firstTime field). It should be weeks, if not months, old, but maybe it is only hours or days old.

|metadata type=sourcetypes | search sourcetype=log4net
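
The metadata command reports firstTime as an epoch value, which is hard to read at a glance. A minimal sketch of the same check with a readable timestamp and the age in days; the field names oldest_event and age_days are made up here for illustration, and the search assumes the default indexes cover your log4net data:

```
| metadata type=sourcetypes
| search sourcetype=log4net
| eval oldest_event=strftime(firstTime, "%Y-%m-%d %H:%M:%S")
| eval age_days=round((now() - firstTime) / 86400, 1)
| table sourcetype, oldest_event, age_days, totalCount
```

If age_days comes back as a fraction of a day, retention is a plausible suspect; if it is weeks or months, events are probably not expiring before you re-check.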

Usually when I have something that "tests OK" in an ad-hoc search but fails in a scheduled search, it is due to pipeline latency. Check the values of _indextime - _time for your events. These should be positive and no more than about 300 seconds.
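
One way to look at those values is sketched below. It assumes the events are sourcetype=log4net, as earlier in the thread; index=*, the 24-hour window, and the names lag_sec, min_lag, avg_lag, and max_lag are placeholders to adjust for your environment:

```
index=* sourcetype=log4net earliest=-24h
| eval lag_sec=_indextime - _time
| stats min(lag_sec) AS min_lag, avg(lag_sec) AS avg_lag, max(lag_sec) AS max_lag BY host
```

A max_lag larger than the time window of the scheduled search would mean some events are indexed only after the alert has already run over their time range, which would explain an ad-hoc search finding them later.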

And what do you recommend after checking the values of _indextime - _time?

For my indexed data, _indextime - _time is less than 0. What should I do?

_indextime - _time is around -9.581.

Since the magnitude is so low, the problem is surely that your forwarders and/or indexers are not using NTP and have drifted from the true time. To see if it is your indexers, try this:

| rest /services/server/info

| eval updated_t=round(strptime(updated, "%Y-%m-%dT%H:%M:%S%z"))

| eval delta_t=now()-updated_t

| eval delta=tostring(abs(delta_t), "duration")

| table serverName, updated, updated_t, delta, delta_t

If delta is anything other than about 00:00:01 (which is easy to account for when processing a lot of indexers), you have clock skew and are a naughty boy because you should have set up NTP on your indexers.

NOTE: this IS a problem, but it is not the problem that you were asking about.

delta is 00:00:00, and _indextime - _time is around 9.581; it is positive.

In that case never mind this whole answer.

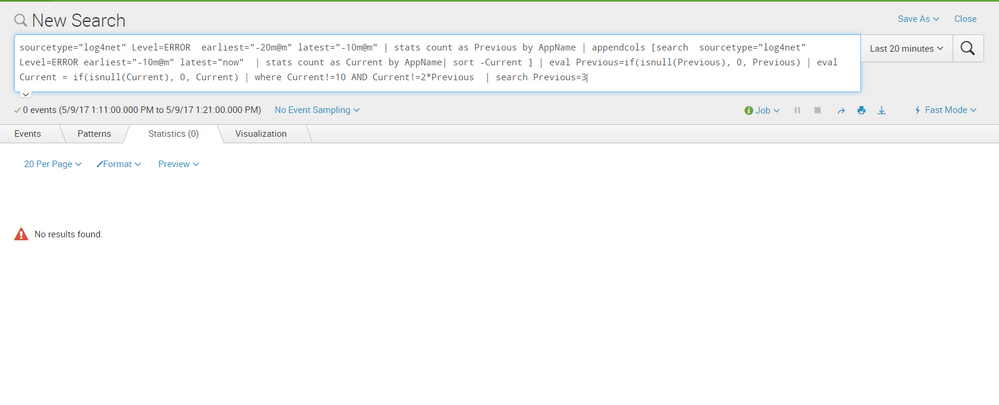

Can you help me with my problem? Why don't I see results in Splunk?

Your trigger times in the capture show 12:27 to 12:34, but your search covers 1:11 to 1:21. Is it possible that there were no triggered events between 1:11 and 1:21? What if you change your search time frame to 12:27-12:34?

I tried, but again there were no results.