- Is it possible to delay triggering an alert for X ...

Hello,

I'm creating an alert that should trigger an email to me whenever a specific message appears in one of my application logs. The query is very simple:

*SourceName="MyAppLog" Message="Data Sync Error"*

However, I want to add a delay to this alert due to the nature of the error. The error is caused by data syncs falling behind. When that happens, the app writes the error to the log every 15 seconds or so. However, 99% of the time the error clears by itself within 5 minutes; it just needs a little time for the syncing to catch up.

Is it possible to modify this query to get the behavior I'm describing here? Any help is appreciated. Basically, I only want this to trigger an email to me if the error keeps happening beyond a 5-minute window. If it happens for less than 5 minutes, I don't need to know about it.

Thanks,

Jeff

I figured out how to do this using timechart. Here's the solution:

SourceName="MyAppLog" Message="Data Sync Error" earliest=-10m@m latest=now

| timechart span=5m count

| where _time>=relative_time(now(),"-10m@m") AND _time < relative_time(now(),"-5m@m")

This returns 3 rows, each representing a 5-minute interval over the past 10 minutes.

Assuming the 10-minute window starts at 00:00:00:

Row1) 00:00:00

Row2) 00:05:00

Row3) 00:10:00 (now)

Then I used the where _time statement to filter out rows 1 and 3.

The result is row 2, which contains the errors that occurred during the 2nd half of the 10-minute window. If errors only occurred during the first 5-minute window and not the 2nd, I would never be alerted, because the where _time statement won't return anything at all in that case. I will only be alerted if errors occurred during the 2nd half of the 10-minute window.
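
The search above looks at a single 5-minute bucket. If the goal is to alert only when the error shows up in both halves of the last 10 minutes, one possible variant is sketched below. It has not been tested against the original data; the field names `half` and `halves_with_errors` are made up for illustration, and it assumes the alert triggers when the number of results is greater than 0.

```spl
SourceName="MyAppLog" Message="Data Sync Error" earliest=-10m@m latest=@m
| eval half=if(_time < relative_time(now(), "-5m@m"), "older", "recent")
| stats dc(half) as halves_with_errors
| where halves_with_errors=2
```

Each event is labeled by whether it falls before or after the midpoint of the window, and a result row survives only when both labels are present. Note that this checks for errors in both halves, not for an unbroken run: an error that cleared and then came back would also match.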

@jospina2 If your problem is resolved, please accept an answer to help future readers.

If this reply helps you, Karma would be appreciated.

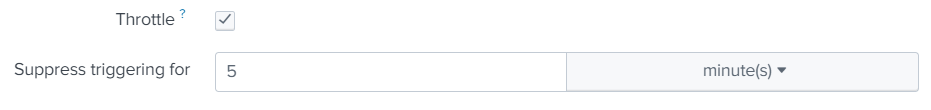

Yes. Use throttling to suppress alert triggering for a specific time period. Save your search SourceName="MyAppLog" Message="Data Sync Error" as an alert and configure Throttle under "Trigger Conditions". Any notifications after the 1st one will be suppressed for 5 minutes.

I wish @splunk had an out-of-the-box feature to detect the condition once and then check for recurrence after a specified interval before sending the alert, similar to how it throttles after the first alert is sent. @jospina2, thank you for the suggestion.

I don't want it to trigger the first time. I don't want it to trigger at all unless the event has been occurring for more than 5 minutes.

@jospina2,

See my answer above, or see the answer here, which leverages streamstats:

https://answers.splunk.com/answers/368413/generate-alert-when-2-consecutive-events-occurred.html

Hello there,

I am not aware of such a pre-built function; however, I am positive you can create a custom alert action that will answer your needs.

With that being said, is there a way you can capture the real alert condition in the search? Are there any indications in the data regarding the start and stop of the alert? Do you have a unique identifier for each alert?

Some ideas that come to mind, taking into consideration that a single event every 15 seconds for 5 minutes is acceptable behavior:

... your search ... | stats count as error_count

Run your search every 6 or 7 minutes or so, and alert if error_count > 20.
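
As a rough sketch of that first idea: at one error every 15 seconds, 5 minutes of continuous errors is about 20 events, so a count above 20 in a slightly longer window suggests the error has outlasted the 5-minute tolerance. The 7-minute window and the threshold below are assumptions to tune, not tested values.

```spl
SourceName="MyAppLog" Message="Data Sync Error" earliest=-7m@m latest=@m
| stats count as error_count
| where error_count > 20
```

With this form, the alert can trigger when the number of results is greater than 0, since the `where` clause drops the row whenever the count is at or below the threshold.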

Or maybe something like this:

... your search ... | stats min(_time) as first_error max(_time) as last_error count as error_count

| eval error_duration = last_error - first_error

| eval error_ratio = round(error_count/error_duration*100, 2)

| where error_ratio > 25

Again, the idea is to check whether you had consecutive errors over a period of time, which would justify the alert.
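
A simpler variant of the same idea, offered only as an untested sketch, compares the time between the first and last error directly with the 5-minute tolerance from the question. The 10-minute search window and the 300-second threshold are assumptions.

```spl
SourceName="MyAppLog" Message="Data Sync Error" earliest=-10m@m latest=@m
| stats min(_time) as first_error max(_time) as last_error count as error_count
| eval error_duration = last_error - first_error
| where error_duration > 300
```

This measures the span between the first and last error in the window, so two isolated errors more than 5 minutes apart would also match. Adding a minimum `error_count` to the `where` clause is one way to rule that out.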

Hope it helps.

P.S. I am positive others here have cleaner and neater searches to capture your condition. Try browsing this portal.

I figured out a different way of doing it by using timechart