- Re: What is the Search Processing Language (SPL) '...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark Topic

- Subscribe to Topic

- Mute Topic

- Printer Friendly Page

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

After running this Splunk + R search:

index=pqr host=xyz* NOT TYPE="*ABCDE*" | fields X, Y |timechart limit=0 span=10m count, avg(X) by Y | r " input = data . . . calculations . . . output =mydataframe"

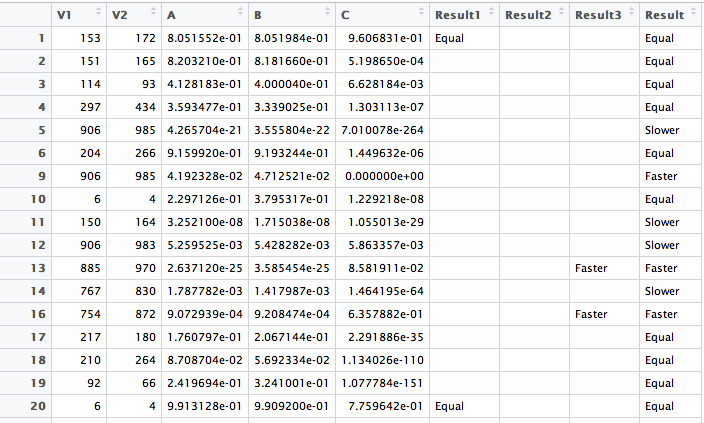

The output is simply a data frame that looks like this (dummy values):

V1 V2 A B C

1 2 3 4 5

3 4 2 5 4

1 2 0.5 1 1 2

The operations I want to perform are:

D$Result1 <- ifelse(D$A > 0.05 & D$B > 0.05, "Equal", "")

D$Result2 <- ifelse(D$A < 0.05 & D$B > 0.05 & D$C < 0, "Slower", "")

D$Result3 <- ifelse(D$A == 0.05 & D$B > 0.06 & D > 0, "Faster", "")

and so on,

so that I arrive at (look at first image)

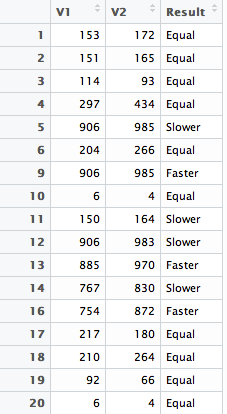

and then combine intermediate results into a final results column

D$Result <- paste(D$Result1, D$Result2, D$Result3, sep = "")

and arrive at (look at second image)

How do I achieve this in Splunk using Splunk's search processing language?

This is the sample input and output from R Studio:

From the above I want to arrive at this : (Displaying only final and required results):


Hi m_vivek,

I do not know the R language, but here's a run-anywhere example that might demonstrate what you want to achieve using SPL:

|stats count |eval A=1 |eval B=1 |eval C=2 |eval D=3 | fields - count

|eval result1=if((A > 0.05 AND B > 0.05), "Equal", "")

|eval result2=if((A < 0.05 AND B > 0.05 AND C < 0), "Slower", "")

|eval result3=if((A = 0.05 AND B > 0.06 AND D > 0), "Faster", "")

|eval result=coalesce(result1,result2,result3)

|table result

The first line just produces dummy results as per your dataframe example, and the if statement is fairly obvious. I'm not sure what you meant by aggregating the results, but the coalesce eval function returns the first non-null value it finds. If you could describe the output you want, there are plenty of ways to summarize the results.

Hope this helps.


Each eval works on each row (event). gcato builds up a "faked up" data set with his first line so that there is data to work on; you will replace that with your base search, which will return a bunch of rows.

Note: "" is not what you need to return in the false part of the eval if. coalesce takes the first non-NULL value it finds, and an empty string is not NULL. So swap that for a NULL (the null() eval function returns one) and it'll work.

index=pqr host=xyz* NOT TYPE="*ABCDE*" | fields X, Y

|timechart limit=0 span=10m count, avg(X) by Y

|eval result1=if((A > 0.05 AND B > 0.05), "Equal", null())

|eval result2=if((A < 0.05 AND B > 0.05 AND C < 0), "Slower", null())

|eval result3=if((A = 0.05 AND B > 0.06 AND D > 0), "Faster", null())

|eval result=coalesce(result1,result2,result3)

|table result

You could replace much of that with a single eval case statement too. A case is just a series of ifs strung together, without the word "if", in a "question 1, answer 1, question 2, answer 2" format. You can add a "default" value by putting 1==1, mydefault at the end. Something like:

index=pqr host=xyz* NOT TYPE="*ABCDE*" | fields X, Y

|timechart limit=0 span=10m count, avg(X) by Y

|eval result=case((A > 0.05 AND B > 0.05), "Equal", (A < 0.05 AND B > 0.05 AND C < 0), "Slower", (A = 0.05 AND B > 0.06 AND D > 0), "Faster", 1==1, "WTF?")

|table result

As for the latter question on NA values, that depends on both what they really are and what you want to do with them. You'll have to test a little to see how NA is handled by default; those eval statements will probably just be ignored because Splunk won't try to do math on characters, which may work perfectly for your needs. Or it may not; again, only you know your data. You could convert them all to NULL, but I'm not sure that gains you anything over Splunk's default handling. You could convert them to 0, but that's probably going to wreck your calculations (NULL != 0!).

To "do something with them", foreach is probably easiest IF the fields are named consistently. For example, if they were named test_result_A, test_result_B and so on instead of A, B and C, you could stick something like this near the front (untested):

... | foreach test_result* [eval <<FIELD>>=if('<<FIELD>>'="na", null(), '<<FIELD>>')] | ...

If they are not named consistently, well, either rename them or do them individually like you do with the eval result* above.
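For the do-them-individually route, here is a minimal run-anywhere sketch (untested against your data). It assumes the placeholder is the literal string "na" and that the columns really are called A, B and C; makeresults only fabricates a single dummy row. nullif() returns NULL when its two arguments are equal, and the isnum() checks are an extra guard, assumed here rather than taken from the thread, so that the comparison only runs on numeric values.

```
| makeresults
| eval A="na", B=0.2, C=-1
| eval A=nullif(A, "na"), B=nullif(B, "na"), C=nullif(C, "na")
| eval result1=if(isnum(A) AND isnum(B) AND A > 0.05 AND B > 0.05, "Equal", "")
| table A B C result1
```

With A turned into NULL, the row should come back without an "Equal" label. It is worth running the same search without the nullif line to see how your Splunk version treats the raw "na" string in a comparison.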

An aside: I think gcato's answer is what I would have come up with initially, but I have to say I'm confused about the calculations you provide. They are not clear and they do not match the sample data. This may not be a problem as long as enough of your needs are answered in gcato's answer so that you can just extend it as required, but if they're not this might need clarification.


Thanks for the update, rich7177, and for the gotcha with the coalesce function and NULLs.
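The gotcha can be reproduced with a small run-anywhere search. This is only a sketch: the field names empty, missing and label are made up for illustration, and makeresults just supplies one dummy row.

```
| makeresults
| eval label="Faster"
| eval empty="", missing=null()
| eval with_empty=coalesce(empty, label)
| eval with_null=coalesce(missing, label)
| eval empty_len=len(with_empty)
| table with_empty with_null empty_len
```

If coalesce behaves as described in the thread, with_empty should stay blank (the empty string counts as a value), while with_null should fall through to "Faster".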


@rich7177 thanks very much for the very detailed answer.


Hi @gcato. In your example I see that it works for single values. How do I extend your example to my case, so that the if() runs on each and every row of the dataframe? (Please refer to the updated question.)

Also, in Splunk, how should I handle any na's that might be present in columns A, B and C?


@gcato Thanks very much. I have updated the question with sample input and sample output.

This is pretty much what I was trying to achieve. By aggregating I meant bringing all the different intermediate results together into one column.
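If the goal is a literal equivalent of R's paste(..., sep = ""), one option is eval's "." concatenation operator instead of coalesce. A minimal sketch, assuming the dummy fields A, B, C and D from gcato's example (the values here are invented), with makeresults standing in for the real base search:

```
| makeresults
| eval A=0.01, B=0.1, C=-1, D=3
| eval result1=if(A > 0.05 AND B > 0.05, "Equal", "")
| eval result2=if(A < 0.05 AND B > 0.05 AND C < 0, "Slower", "")
| eval result3=if(A = 0.05 AND B > 0.06 AND D > 0, "Faster", "")
| eval result=result1.result2.result3
| table A B C D result
```

In this variant the "" in the false branches is deliberate. As far as I know, concatenating with a NULL yields NULL, so empty strings are what let the pieces join the way paste does. Each eval runs once per row, so the same pipeline applies unchanged to a base search that returns many rows.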