Timechart of a percentage using data from X hours ago ?

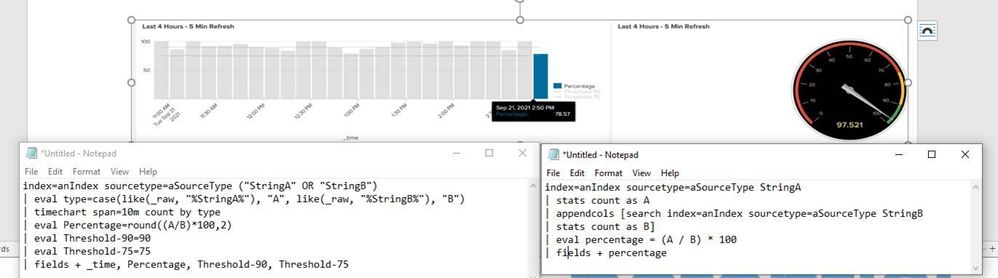

Here is the query I am starting with:

index=anIndex sourcetype=aSourceType ("StringA" OR "StringB")

| eval type=case(like(_raw, "%StringA%"), "A", like(_raw, "%StringB%"), "B")

| timechart span=10m count by type

| eval Percentage=round((A/B)*100,2)

| eval Threshold-90=90

| eval Threshold-75=75

| fields + _time, Percentage, Threshold-90, Threshold-75

First off, I am not 100% sure the above query is correct, but I do get data that I can chart in a dashboard. I am trying to show 'Percentage' over time.

I am using this in a dashboard with Percentage, Threshold-90, and Threshold-75 as chart overlays, and the resulting timechart appears to show the calculated Percentage correctly in 10-minute intervals.

What I am wondering is whether it is possible to calculate Percentage using data looking back X hours.

As I understand it, each Percentage point in the graph above is calculated from the number of occurrences of "StringA" and "StringB" within that 10-minute interval only.

Firstly, I'm not sure what kind of percentage it's supposed to be. Isn't it simply a ratio of A over B? A percentage would be a/(a+b) or b/(a+b).

Secondly, it will fail if you don't have results of type B during a particular timespan. (You don't divide by 0!)
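As a rough sketch of both points, the original query could be adjusted along these lines. It assumes the intended percentage is the share of A among all matched events, which is only a guess at the intent; `fillnull` is there so that a series missing from the whole time range still exists as a zero-valued column, and the `if()` guard leaves the bucket empty rather than dividing by zero.

```spl
index=anIndex sourcetype=aSourceType ("StringA" OR "StringB")
| eval type=case(like(_raw, "%StringA%"), "A", like(_raw, "%StringB%"), "B")
| timechart span=10m count by type
| fillnull value=0 A B
| eval Percentage=if(A+B > 0, round(A/(A+B)*100, 2), null())
| fields _time Percentage
```

If the A-over-B ratio really is what is wanted, the same guard works with `B > 0` as the condition and `A/B` in the calculation.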

If you want to compare the timeseries to a past timeseries, you can use | timewrap.

Or if you simply want to get a value from a previous row (or few rows before), you might use autoregress.
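A minimal sketch of the autoregress option, assuming the 10-minute span from the original query, so that 24 rows back corresponds to 4 hours earlier. With no AS clause, autoregress names the new field `Percentage_p24`. Note that this copies the single bucket value from 4 hours ago; it does not aggregate over those 4 hours.

```spl
index=anIndex sourcetype=aSourceType ("StringA" OR "StringB")
| eval type=case(like(_raw, "%StringA%"), "A", like(_raw, "%StringB%"), "B")
| timechart span=10m count by type
| eval Percentage=if(B > 0, round((A/B)*100, 2), null())
| autoregress Percentage p=24
| fields _time Percentage Percentage_p24
```

For the timewrap option, replacing the last two lines with `| fields _time Percentage | timewrap 4h` should overlay successive 4-hour periods as separate series, provided the search time range covers more than one such period.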

I will do my best to explain what I am trying to calculate.

In this line: eval Percentage=round((A/B)*100,2)

I want 'A' and 'B' to be the number of occurrences of "StringA" and "StringB" from X hours ago until now.

The attachment shows the graph for the first query. You can see that the percentage in the last time slice does not match the percentage on the right, which is the same calculation done once over the past 4 hours.

In the timechart, that value should then be shown in 10-minute intervals.

I am not familiar with timewrap or autoregress but will look into them.

Below are the two graphs generated from the two queries. Notice that the percentage on the right is 78% while on the left it is 97%. I believe this happens because the calculation on the right uses a single 4-hour interval, while the one on the left calculates the percentage per 10-minute interval.

So basically I am trying to turn the single calculation on the right into a timechart that graphs the percentage over time.

Yes, that's exactly what it's doing. Timechart is dividing the total timespan into span-sized buckets and is counting the stats independently for each bucket, so you can have time series. In the right search you're simply calculating overall stats over the whole period. So it's only natural that they give two different results.

And you can simplify the second search. There's no need to use appendcols and engage another search when it's not needed. Just do your count with eval. For example:

| stats count(eval(like(_raw,"%StringA%"))) as A count(eval(like(_raw,"%StringB%"))) as B

If you have the events parsed and can compare the strings to a single field, that'd be even better.

Anyway, it's natural that you get two different results because you're doing two different things. If you want the gauge to show exactly the same value as the last value on the left timechart, you have to do the same stats and simply align the time range of the search so that it equals the last bucket of the timechart.

Thanks for the confirmation on what I am seeing in the graphs. The gauge is working as I expected.

I'm trying to figure out how to get the graph to match what the gauge is showing (the opposite of what you describe in your last reply).

That is, calculating the % based upon the previous 4 hours of data but showing the result (time slice) in 5-minute intervals.

I'm sorry, but I still don't understand. You either have a time series of small-span aggregates (as in the timechart) or a single cumulative stats value over the whole period. I don't see how you could get a "% based upon previous 4 hours of data" (which sounds like a single cumulative value) and yet show the result in 5-minute intervals.

You want to have the same value scattered over all timechart buckets? Kinda pointless, but doable.
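For reference, a sketch of that "same value in every bucket" variant, assuming the 5-minute span mentioned above and the A-over-B ratio from the original query. `eventstats` adds the totals over the whole search time range to every row; the field names `A_total` and `B_total` are made up for illustration.

```spl
index=anIndex sourcetype=aSourceType ("StringA" OR "StringB")
| eval type=case(like(_raw, "%StringA%"), "A", like(_raw, "%StringB%"), "B")
| timechart span=5m count by type
| fillnull value=0 A B
| eventstats sum(A) as A_total sum(B) as B_total
| eval Percentage=if(B_total > 0, round(A_total/B_total*100, 2), null())
| fields _time Percentage
```

With a 4-hour search time range, every point should carry the same value the gauge shows, which is why the resulting line is flat.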

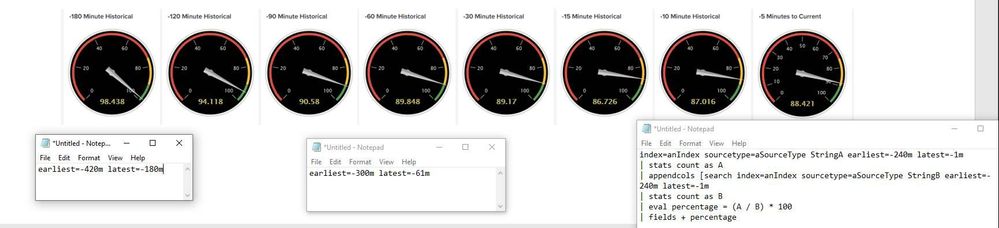

Maybe this will help show what I am trying to accomplish. I have taken the original calculation and added earliest / latest to show the percentage at different points in time. I am trying to show the change over time using a graph instead of individual components. Does that make sense?

But that's exactly what timechart is doing, with the restriction to a constant span instead of variably placed indicators as you have here. If you want a timechart with variably spaced time points, you can't do it in a straightforward way with the timechart command; you'd have to iterate over a set of data points and run separate subsearches with earliest/latest values.
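For the constant-span case, one approach not mentioned earlier in this thread is a trailing window computed with `streamstats` on top of the timechart output. The sketch below assumes a 10-minute span, so `window=24` covers the current bucket plus the 23 before it (4 hours in total); the field names `A_4h` and `B_4h` are made up for illustration.

```spl
index=anIndex sourcetype=aSourceType ("StringA" OR "StringB")
| eval type=case(like(_raw, "%StringA%"), "A", like(_raw, "%StringB%"), "B")
| timechart span=10m count by type
| fillnull value=0 A B
| streamstats window=24 sum(A) as A_4h sum(B) as B_4h
| eval Percentage=if(B_4h > 0, round(A_4h/B_4h*100, 2), null())
| fields _time Percentage
```

The first 23 buckets only see a partial window, so the search time range probably needs to start at least 4 hours before the first point that should be trusted. The last point should then be close to the gauge value, apart from differences in how the bucket boundaries line up with the gauge's time range.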