Join the Conversation

- Find Answers

- :

- Using Splunk

- :

- Splunk Search

- :

- Splunk dynamic count of lookups in CSV

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark Topic

- Subscribe to Topic

- Mute Topic

- Printer Friendly Page

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

We have the following situation / problem:

Some searches having some lookups on CSV files.

Now we wont that we never have to touch the Searches again, just edit the CSV.

But we need to be able to add some more lines/ Valueboarders to de CSV.

Example: in the CSV ist Search 1, Value 50, Score 2

Now search 1 knows that values over 50 have the score 2

Now we add a additional line: Search 1, Value 100, Score 3

Now, search 1 should know that values over 50 have score 2 but over 100 the score is 3.

My primary question is: Is this the way to go to solve this problem?

And if Yes, how can I write the search to heandle this Need and be prepaired for more borders?

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi digitalX,

If I understand your request correct, you are after setting threshold in a lookup table. This can be achieved by using the match_type in transforms.conf to specify the field you want to match on as a wildcard, then populate your lookup table just like you've planned to.

transforms.conf:

[score]

filename = score.csv

match_type = WILDCARD(value)

And your score.csv:

mySearch,value,score

foo,5*,2

foo,10*,3

boo,30*,5

If you now use this run everywhere command you can get back the score based on the fake count which was made by the first eval command:

Count is 101

| gentimes start=-1 | eval count="101" | eval mySearch="foo" | lookup score value AS count mySearch AS mySearch

and the results will look like this:

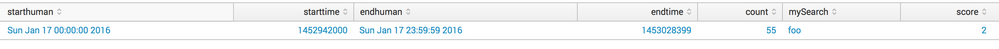

Count is 55

| gentimes start=-1 | eval count="55" | eval mySearch="foo" | lookup score value AS count mySearch AS mySearch

and the result will look like this:

Bear in mind, this has some limits. Because the wild card match for 10* will work from 100 until 109, but also matches 1000 and/or 10000 for example!

Nevertheless I hope this helps and Grüess nach Chur 😉

cheers, MuS

Update after the comments:

Use this as score.csv

Search,lower,upper,score

foo,50,99,2

foo,100,999,3

foo,1000,9999,5

boo,50,99,20

boo,100,999,30

boo,1000,9999,50

and use it with inputlookup instead of lookup in the search:

| gentimes start=-1 | eval count="999" | eval mySearch="foo" | append [|inputlookup score ] | filldown count mySearch | where mySearch=Search AND count>=lower AND count<=upper

This should do what you need.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi digitalX,

If I understand your request correct, you are after setting threshold in a lookup table. This can be achieved by using the match_type in transforms.conf to specify the field you want to match on as a wildcard, then populate your lookup table just like you've planned to.

transforms.conf:

[score]

filename = score.csv

match_type = WILDCARD(value)

And your score.csv:

mySearch,value,score

foo,5*,2

foo,10*,3

boo,30*,5

If you now use this run everywhere command you can get back the score based on the fake count which was made by the first eval command:

Count is 101

| gentimes start=-1 | eval count="101" | eval mySearch="foo" | lookup score value AS count mySearch AS mySearch

and the results will look like this:

Count is 55

| gentimes start=-1 | eval count="55" | eval mySearch="foo" | lookup score value AS count mySearch AS mySearch

and the result will look like this:

Bear in mind, this has some limits. Because the wild card match for 10* will work from 100 until 109, but also matches 1000 and/or 10000 for example!

Nevertheless I hope this helps and Grüess nach Chur 😉

cheers, MuS

Update after the comments:

Use this as score.csv

Search,lower,upper,score

foo,50,99,2

foo,100,999,3

foo,1000,9999,5

boo,50,99,20

boo,100,999,30

boo,1000,9999,50

and use it with inputlookup instead of lookup in the search:

| gentimes start=-1 | eval count="999" | eval mySearch="foo" | append [|inputlookup score ] | filldown count mySearch | where mySearch=Search AND count>=lower AND count<=upper

This should do what you need.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi MuS,

I am curious how to use your search when deal with records ? Gentimes only feth you single record.

Thank you

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thank you for your very fast reply. Good idea, I think I learn every day a Little more about Splunk. 🙂

But in my case this way have to many maybes to mach every constellation correctly.

What if we need the boaders 10, 100, 1000, 1010,... or something other with similar values?

And it has to match for every value between the two borders, not just for the fist 9...

Perhaps there is another technique to achieve this?

Greetings back to Wellington 😉

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Since I like challenging tasks here is the solution 😉

change the lookup file to:

Search,lower,upper,score

foo,50,99,2

foo,100,999,3

foo,1000,9999,5

boo,50,99,20

boo,100,999,30

boo,1000,9999,50

and use it with inputlookup instead of lookup in the search:

| gentimes start=-1 | eval count="999" | eval mySearch="foo" | append [|inputlookup score ] | filldown count mySearch | where mySearch=Search AND count>=lower AND count<=upper | table Search count score

This should do what you need.

cheers, MuS

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Yes, this is the way to go! 🙂

Thank you so much. Now, when I read it, it sounds logical, but havent seen the clou before...

But how can I mark your second answer as accepted? Just see this option for the main answer.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Updated the answer, feel free to accept it now - Danke 🙂

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Accepted. Sprichst Du Deutsch? 🙂