- How to create an alert for a log that does not upd...

I have the following search and want to send an alert if the log has not updated for X hours.

If the log is not updating, the service must have stopped.

index=linux_os_machines host="zzcalidm01.x.y.z" sourcetype=zzcalidm01 source=/opt/scripts/logs/output.log.*

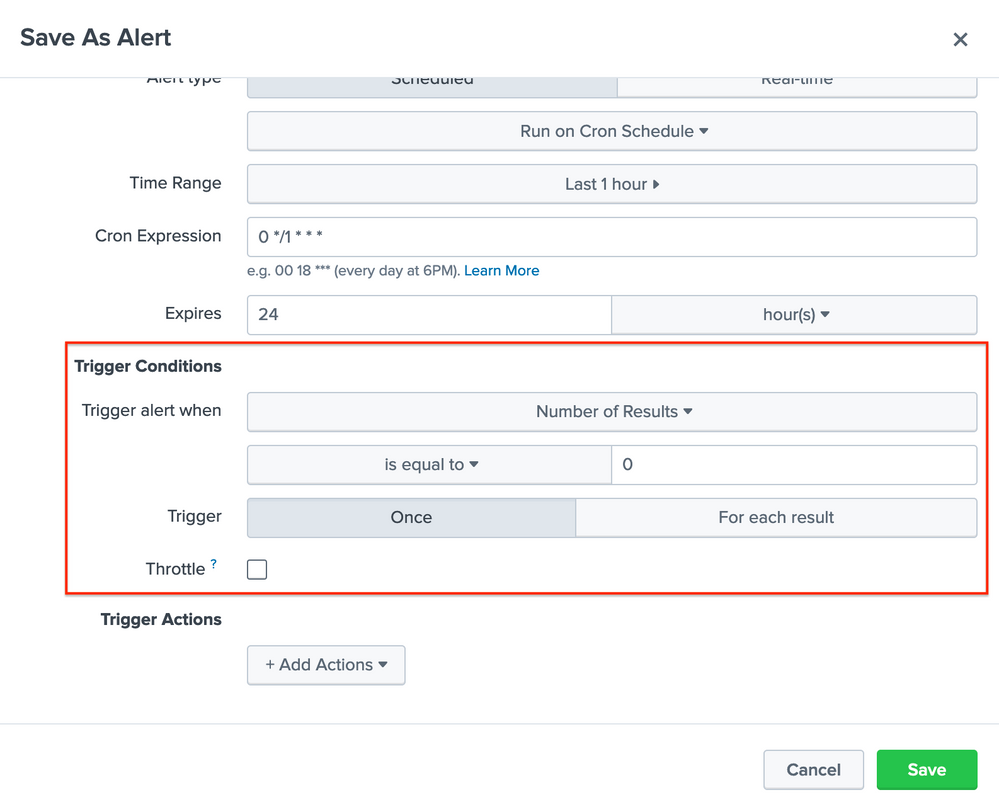

You can save this search as an alert and schedule it to run every X hours, with a time range that covers the last X hours. In the alert edit page, set Trigger Conditions to trigger an alert action when Number of Results is equal to 0.
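
An alert that fires on zero results has no rows to include in its notification. One possible variant, offered only as a sketch, counts the events and keeps a single row when the count is zero. It assumes the alert's time range is set to the last X hours and that the trigger condition is changed to Number of Results greater than 0. The field name `event_count` is made up for illustration.

```spl
index=linux_os_machines host="zzcalidm01.x.y.z" sourcetype=zzcalidm01 source=/opt/scripts/logs/output.log.*
| stats count AS event_count
| where event_count == 0
```

Because `stats count` returns a row even when nothing matched, the final `where` leaves exactly one row when the log has been silent and none otherwise.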

Thank you. This helps.

Hi ryangillan,

This use case is covered in the blog post https://www.duanewaddle.com/proving-a-negative/ which shows and explains how you can use Splunk to search for something that is missing, or that has stopped updating and no longer has new events.

Hope this helps ...

cheers, MuS
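
As a rough illustration of searching for something that has gone quiet (a common pattern, not necessarily the one the linked post describes), the search below looks back over a longer window and reports how long ago the last event arrived. The 24-hour lookback, the 4-hour threshold, and the field names `event_count`, `last_seen`, and `hours_since_last` are all assumptions chosen for the example.

```spl
index=linux_os_machines host="zzcalidm01.x.y.z" sourcetype=zzcalidm01 source=/opt/scripts/logs/output.log.* earliest=-24h
| stats count AS event_count, latest(_time) AS last_seen
| eval hours_since_last = round((now() - last_seen) / 3600, 1)
| where event_count == 0 OR hours_since_last > 4
| eval last_seen = strftime(last_seen, "%Y-%m-%d %H:%M:%S")
```

With this shape the alert would trigger when Number of Results is greater than 0, and the notification can show when the log was last seen. If there were no events at all in the lookback window, `last_seen` is empty and the row is kept by the `event_count == 0` test.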

Are you saying you want to trigger an alert when that search returns no log events for a few hours? You can just add earliest=-4h to the search, where 4 is whatever number of hours you want (or you can set the lookback with the search's time range, but I like a hardcoded value to avoid confusion), and then trigger the alert when the number of results is 0, which is one of the alert options. You might also want to run this on a cron schedule, at times when you would expect the log to be there (if you expect it to appear at noon, you shouldn't run it at 11 AM, for example).

Does this help, or did I misunderstand your ask?
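
Applied to the search from the question, the suggestion above might look like the following sketch. The 4-hour window is only the example value from the reply, and `| head 1` is an optional addition (not part of the original suggestion) that stops the search after the first matching event, since the alert only needs to know whether any event exists.

```spl
index=linux_os_machines host="zzcalidm01.x.y.z" sourcetype=zzcalidm01 source=/opt/scripts/logs/output.log.* earliest=-4h
| head 1
```

Saved as an alert that triggers when Number of Results is equal to 0, this could run on a cron schedule such as `0 */4 * * *` (every four hours, on the hour), which is again an assumed value to adjust to when the log is expected to update.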

Thank you. So simple!