Do you lose any information between Chain Searches...

Do you need to return output from one section of a chain search to another, like when writing a function in a programming language?

I had assumed that, from a user's point of view, a chained search would act like concatenating both searches, but with a really DRY efficiency. That makes it a superb fit for dashboarding, as the material being presented often shares a common subject.

There are certain queries I am running that break when used in a chained order. Am I missing some kind of return function?

This is an amazing find! My tests show that, indeed, when a chain search needs a field that the base search does not pass, it fails in mysterious ways. In most applications the base search is not as vanilla as index=abcd, so this behavior would not be revealed. But I consider this a bug: even if the requirement that the base search must contain provisions to pass every field used by its chain searches were carefully documented, it is really counterintuitive, and a slip can affect results in ways so subtle that users may end up trusting bad data. The good news is that the DS team is aggressively trying to relieve user friction. The bad news is that this is a rather tricky one, so I don't expect a speedy fix even if they accept it as a bug.

Here is the gist of the problem/behavior: to improve performance, the SPL compiler decides which field(s) to pass through a pipe by inspecting downstream searches. Because the base search and the chain search are completely separate as far as the compiler is concerned, only indexed fields and fields explicitly invoked in the base search are passed to chain searches. In your example, the base search index=_internal only passes _time, sourcetype, source, host, etc. All search-time fields are omitted. When you change the base search to index=_internal useTypeahead=true, the compiler sees that useTypeahead is referenced, and therefore passes it to the result cache.

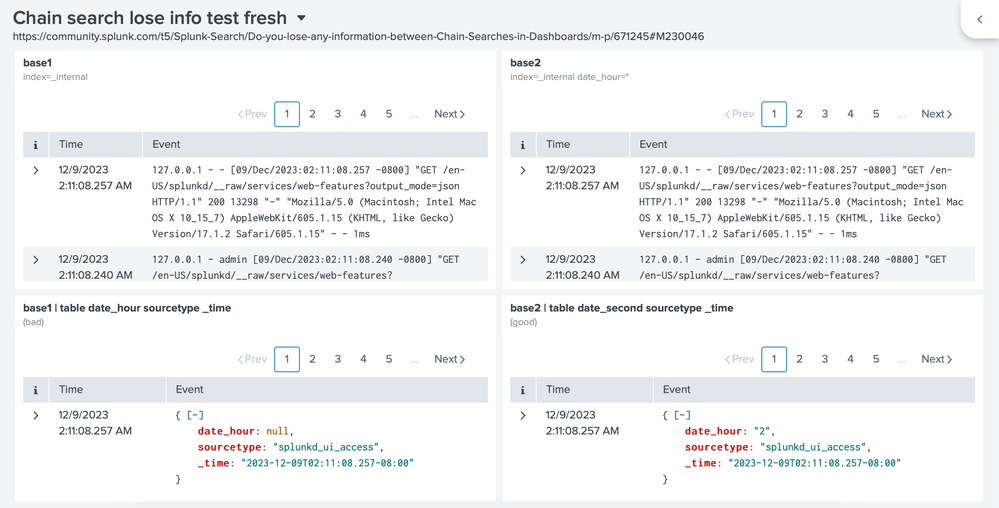

Here is a simpler test dashboard to demonstrate: (I use date_hour because it is 100% populated)

{

"visualizations": {

"viz_AD6BWNHC": {

"type": "splunk.events",

"dataSources": {

"primary": "ds_4EfZYMc8"

},

"title": "base1",

"description": "index=_internal",

"showProgressBar": false,

"showLastUpdated": false

},

"viz_TrPHlPsH": {

"type": "splunk.events",

"dataSources": {

"primary": "ds_561TjAWf"

},

"showProgressBar": false,

"showLastUpdated": false,

"title": "base1 | table date_hour sourcetype _time",

"description": "(bad)"

},

"viz_SiLJUCQc": {

"type": "splunk.events",

"dataSources": {

"primary": "ds_FmGTHy8w"

},

"title": "base2",

"description": "index=_internal date_hour=*"

},

"viz_A0PjYfHd": {

"type": "splunk.events",

"dataSources": {

"primary": "ds_feUCBRcX"

},

"title": "base2 | table date_hour sourcetype _time",

"description": "(good)"

}

},

"dataSources": {

"ds_4EfZYMc8": {

"type": "ds.search",

"options": {

"query": "index=_internal",

"queryParameters": {

"earliest": "-4h@m",

"latest": "now"

}

},

"name": "base1"

},

"ds_561TjAWf": {

"type": "ds.chain",

"options": {

"extend": "ds_4EfZYMc8",

"query": "| table date_hour sourcetype _time"

},

"name": "chain"

},

"ds_FmGTHy8w": {

"type": "ds.search",

"options": {

"query": "index=_internal date_hour=*",

"queryParameters": {

"earliest": "-4h@m",

"latest": "now"

}

},

"name": "base2"

},

"ds_feUCBRcX": {

"type": "ds.chain",

"options": {

"extend": "ds_FmGTHy8w",

"query": "| table date_hour sourcetype _time"

},

"name": "chain1a"

}

},

"defaults": {

"dataSources": {

"ds.search": {

"options": {

"queryParameters": {

"latest": "$global_time.latest$",

"earliest": "$global_time.earliest$"

}

}

}

}

},

"inputs": {},

"layout": {

"type": "grid",

"options": {

"width": 1440,

"height": 960

},

"structure": [

{

"item": "viz_AD6BWNHC",

"type": "block",

"position": {

"x": 0,

"y": 0,

"w": 720,

"h": 307

}

},

{

"item": "viz_TrPHlPsH",

"type": "block",

"position": {

"x": 0,

"y": 307,

"w": 720,

"h": 266

}

},

{

"item": "viz_SiLJUCQc",

"type": "block",

"position": {

"x": 720,

"y": 0,

"w": 720,

"h": 307

}

},

{

"item": "viz_A0PjYfHd",

"type": "block",

"position": {

"x": 720,

"y": 307,

"w": 720,

"h": 266

}

}

],

"globalInputs": []

},

"description": "https://community.splunk.com/t5/Splunk-Search/Do-you-lose-any-information-between-Chain-Searches-in-Dashboards/m-p/671245#M230046",

"title": "Chain search lose info test fresh"

}

This is the result:

Here, date_hour is null in the chain search using index=_internal as base search.

One recommendation about your workaround: if your base search uses

index=_internal useTypeahead=true

instead of index=_internal | search useTypeahead=true, the indexers will return far fewer events, and the search will be much more efficient.

As to the bug/behavior, because the cause is inherent to the compiler, I imagine it to be really difficult for a high-level application like a dashboard engine to influence. Nevertheless, I trust that the DS team will be grateful that you discovered this problem.
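A possible variation on the test dashboard above, offered as an untested sketch: it assumes that naming a field in a `fields` command in the base search counts as "explicitly invoking" it, so that the field is kept for chain searches. The post above only tested referencing the field in the search terms, so treat this as an assumption to verify. The data source IDs `ds_base_fields` and `ds_chain_fields` and their names are hypothetical.

```json
{
    "dataSources": {
        "ds_base_fields": {
            "type": "ds.search",
            "options": {
                "query": "index=_internal | fields _time sourcetype date_hour",
                "queryParameters": {
                    "earliest": "-4h@m",
                    "latest": "now"
                }
            },
            "name": "base with explicit fields (hypothetical)"
        },
        "ds_chain_fields": {
            "type": "ds.chain",
            "options": {
                "extend": "ds_base_fields",
                "query": "| table date_hour sourcetype _time"
            },
            "name": "chain on explicit fields (hypothetical)"
        }
    }
}
```

If the assumption holds, the chain should show date_hour populated without the base search having to filter on it. Listing only the fields the chain searches need also keeps the cached base results small.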

I'm testing the code you posted and indeed something is very strange. It is as if base0 is not configured correctly. Anything chained to it cannot return anything unless it is a semantic no-op like "| search *".

I also see the method you experimented with in that code using base1. For now you can use that as a workaround. I will continue to look into what's wrong with the base0 search.

Hi @yuanliu

I think I have the solution. I have pasted my guesswork as a response to the original post.

I have uploaded a dashboard with a working method, albeit perhaps not an optimal one:

https://gist.github.com/niksheridan/d8377778e4c5f1ff3e2e49b0b9899185

I will try "collapsing" the first and second steps of the chain in order to get a pipeline working that can be further extended.

I'd be very grateful for your feedback, if you could be so kind as to review this when you have the time. Thank you again for your help.

Thanks, nik

I can take a look some other time. (It's very late here.) In the meantime, you can see my confirmation of this problem and how to work around it in general in https://community.splunk.com/t5/Splunk-Search/Do-you-lose-any-information-between-Chain-Searches-in-.... (I also included a specific recommendation about coding. Additionally, I recommend that you report this as a bug and/or get support involved, even though I don't expect it to be fixed soon.)

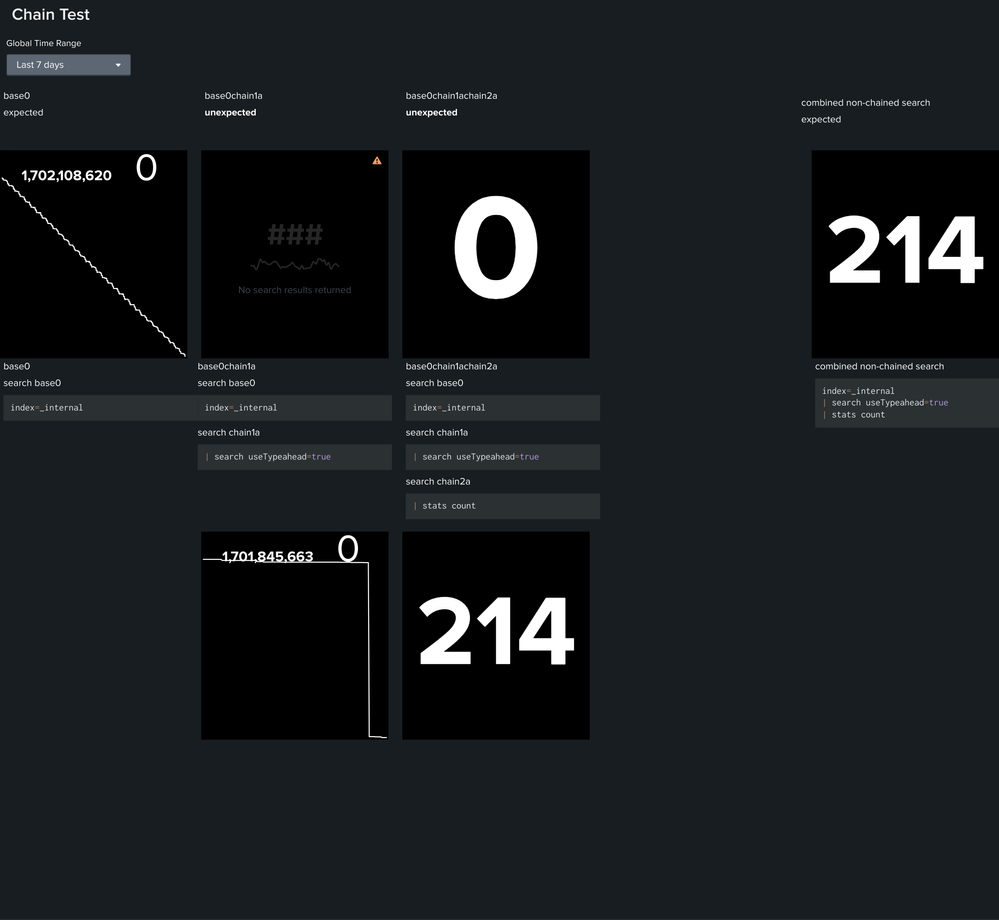

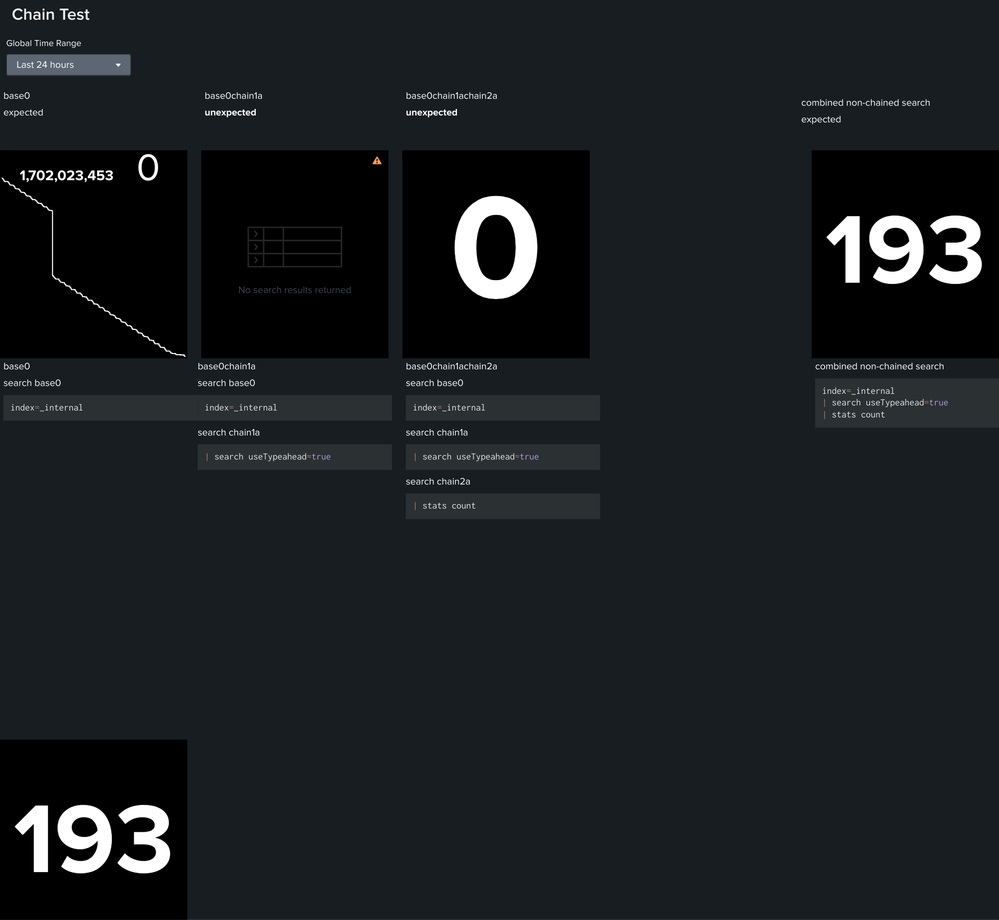

I think I have the solution.

Given that the base search is in fact not really a search, but a reference to an index:

index=_internal

It seems that, as no pipeline exists, this breaks the chain; arguably there is no real search to begin with.

Changing my base search (base2) as follows (which combines base0 and base0chain1a) creates a pipeline:

index=_internal

| search useTypeahead=true

This then allows me to extend it further with an additional chained search successfully:

| stats count

This provides me with the expected behaviour, shown in the bottom row of graphs.

No, it's like keeping your shopping list when you move between store aisles. In dashboards, information stays even if you switch views, so you don't lose any details during your search journey.

Do this: in the panel where you suspect information loss, click the magnifying glass ("Open in search"). Run the search again in the new window. Post the two outputs if they are different. (Anonymize as needed.)

As @ITWhisperer says, a chained search simply uses the results from the main search as if they were the interim output from part of the same search, as shown in the new window. The only difference is that the main search runs with its own job ID so that multiple chained searches can use the same results. No information should be lost (unless some memory/disk limits prevent saving the complete results).

OK, that is a really good call. Frustratingly, I do get a working search even on the charts that show no data.

I'll need to touch base with our cyber team to get a review done before I post anything, sorry.

I did create a test dashboard with:

chart 1: index=my_index (shows data)

chart 2: index=my_index (base), chained with | timechart span=30m count(eventClass) by severity (NO DATA)

I really appreciate the time and effort spent here. I have used chained searches elsewhere; I'll check the docs again.

Do you mean to say that these two together

index=my_index

| timechart span=30m count(eventClass) by severity

return results, but when they are respectively the main search and the chained search, nothing is shown? Posting actual output will not help in this case. Does chart 1 use the same main search (without the chained search)?

Hi, thanks again for your attention.

I have reproduced this in my lab (using _internal), and I have noticed that the results differ depending on how the searches are chained, despite the fact that clicking through to the search view effectively concatenates them and displays results.

I'll structure the findings in what I think is a more coherent way and post the results later today.

I'm sure I am missing a fundamental point.

The screenshot alone will not be sufficient. As this is constructed with _internal, can you post the source code?

Is this what you mean? Please let me know if I have misunderstood and thank you again

{

"visualizations": {

"viz_glNXouAy": {

"type": "splunk.singlevalue",

"options": {},

"dataSources": {

"primary": "ds_gREZNTgj"

},

"context": {},

"showProgressBar": false,

"showLastUpdated": false

},

"viz_1ibEKiXT": {

"type": "splunk.singlevalue",

"options": {},

"dataSources": {

"primary": "ds_PozPBYIA_ds_gREZNTgj"

},

"context": {},

"showProgressBar": false,

"showLastUpdated": false

},

"viz_rYhOWilO": {

"type": "splunk.events",

"options": {},

"dataSources": {

"primary": "ds_Aoy6m25x_ds_PozPBYIA_ds_gREZNTgj"

},

"context": {},

"showProgressBar": false,

"showLastUpdated": false

},

"viz_HS70GboS": {

"type": "splunk.singlevalue",

"options": {},

"dataSources": {

"primary": "ds_n4Q7l7oK"

},

"context": {},

"showProgressBar": false,

"showLastUpdated": false

},

"viz_cFoVJm3n": {

"type": "splunk.singlevalue",

"options": {},

"dataSources": {

"primary": "ds_BgOJ54ak"

},

"context": {},

"showProgressBar": false,

"showLastUpdated": false

},

"viz_Oh0reZaV": {

"type": "splunk.markdown",

"options": {

"markdown": "base0\n\nsearch base0\n```\nindex=_internal\n```"

}

},

"viz_5jz1AX2v": {

"type": "splunk.markdown",

"options": {

"markdown": "base0chain1a\n\nsearch base0\n```\nindex=_internal\n```\n\nsearch chain1a\n```\n| search useTypeahead=true\n```"

}

},

"viz_A5Kcf02B": {

"type": "splunk.markdown",

"options": {

"markdown": "base0chain1achain2a\n\nsearch base0\n```\nindex=_internal\n```\n\nsearch chain1a\n```\n| search useTypeahead=true\n```\n\nsearch chain2a\n```\n| stats count\n```"

}

},

"viz_ymCZMl2z": {

"type": "splunk.markdown",

"options": {

"markdown": "combined non-chained search\n```\nindex=_internal \n| search useTypeahead=true \n| stats count\n```\n"

}

},

"viz_rjA0XgMd": {

"type": "splunk.markdown",

"options": {

"markdown": "base0\n\nexpected"

}

},

"viz_c4r59ekz": {

"type": "splunk.markdown",

"options": {

"markdown": "base0chain1a\n\n**unexpected**"

}

},

"viz_hFoP7IsM": {

"type": "splunk.markdown",

"options": {

"markdown": "base0chain1achain2a\n\n**unexpected**"

}

},

"viz_7lRWKreL": {

"type": "splunk.markdown",

"options": {

"markdown": "combined non-chained search\n\nexpected"

}

}

},

"dataSources": {

"ds_n4Q7l7oK": {

"type": "ds.search",

"options": {

"query": "index=_internal",

"queryParameters": {

"earliest": "$global_time.earliest$",

"latest": "$global_time.latest$"

}

},

"name": "base0"

},

"ds_gQjuR7jY": {

"type": "ds.search",

"options": {

"query": "index=_internal\n| search useTypeahead=true",

"queryParameters": {

"earliest": "$global_time.earliest$",

"latest": "$global_time.latest$"

}

},

"name": "base1"

},

"ds_gREZNTgj": {

"type": "ds.chain",

"options": {

"extend": "ds_gQjuR7jY",

"query": "| stats count"

},

"name": "base1chain2"

},

"ds_PozPBYIA_ds_gREZNTgj": {

"type": "ds.chain",

"options": {

"extend": "ds_Aoy6m25x_ds_PozPBYIA_ds_gREZNTgj",

"query": "| stats count"

},

"name": "base0chain1achain2a"

},

"ds_Aoy6m25x_ds_PozPBYIA_ds_gREZNTgj": {

"type": "ds.chain",

"options": {

"extend": "ds_n4Q7l7oK",

"query": "| search useTypeahead=true"

},

"name": "base0chain1a"

},

"ds_BgOJ54ak": {

"type": "ds.search",

"options": {

"query": "index=_internal \n| search useTypeahead=true \n| stats count"

},

"name": "base0chain1achain2aFull"

}

},

"defaults": {

"dataSources": {

"ds.search": {

"options": {

"queryParameters": {

"latest": "$global_time.latest$",

"earliest": "$global_time.earliest$"

}

}

}

}

},

"inputs": {

"input_global_trp": {

"type": "input.timerange",

"options": {

"token": "global_time",

"defaultValue": "-24h@h,now"

},

"title": "Global Time Range"

}

},

"layout": {

"type": "absolute",

"options": {

"width": 1440,

"height": 1200,

"display": "auto"

},

"structure": [

{

"item": "viz_glNXouAy",

"type": "block",

"position": {

"x": 0,

"y": 940,

"w": 270,

"h": 300

}

},

{

"item": "viz_1ibEKiXT",

"type": "block",

"position": {

"x": 580,

"y": 90,

"w": 270,

"h": 300

}

},

{

"item": "viz_rYhOWilO",

"type": "block",

"position": {

"x": 290,

"y": 90,

"w": 270,

"h": 300

}

},

{

"item": "viz_HS70GboS",

"type": "block",

"position": {

"x": 0,

"y": 90,

"w": 270,

"h": 300

}

},

{

"item": "viz_cFoVJm3n",

"type": "block",

"position": {

"x": 1170,

"y": 90,

"w": 270,

"h": 300

}

},

{

"item": "viz_Oh0reZaV",

"type": "block",

"position": {

"x": 0,

"y": 390,

"w": 290,

"h": 300

}

},

{

"item": "viz_5jz1AX2v",

"type": "block",

"position": {

"x": 280,

"y": 390,

"w": 290,

"h": 300

}

},

{

"item": "viz_A5Kcf02B",

"type": "block",

"position": {

"x": 580,

"y": 390,

"w": 290,

"h": 300

}

},

{

"item": "viz_ymCZMl2z",

"type": "block",

"position": {

"x": 1170,

"y": 390,

"w": 290,

"h": 300

}

},

{

"item": "viz_rjA0XgMd",

"type": "block",

"position": {

"x": 0,

"y": 0,

"w": 290,

"h": 80

}

},

{

"item": "viz_c4r59ekz",

"type": "block",

"position": {

"x": 290,

"y": 0,

"w": 290,

"h": 80

}

},

{

"item": "viz_hFoP7IsM",

"type": "block",

"position": {

"x": 580,

"y": 0,

"w": 290,

"h": 80

}

},

{

"item": "viz_7lRWKreL",

"type": "block",

"position": {

"x": 1150,

"y": 10,

"w": 290,

"h": 80

}

}

],

"globalInputs": [

"input_global_trp"

]

},

"description": "",

"title": "Chain Test"

}

Hi @yuanliu, this is truly excellent work. Thank you so much for your time and determination in finding the root cause of this behaviour.

Chained searches simply operate on the events in the pipeline left from the previous search in the chain.

Thanks for responding. When I run the search chained I get NULL, whereas when I run it as a single block, I get separation by the severity field. (I've obfuscated the search a bit.)

Expected behaviour:

index=my_index

| spath eventClass

| search eventClass="my.event"

| timechart count(eventClass) by severity

Unexpected behaviour (displays a graph, but without field separation, showing "NULL"):

Chained parent:

index=my_index

Chained child:

| spath eventClass

| search eventClass="my.event"

| timechart count(eventClass) by severity

What is even more confusing is that when I click on the graph in the dashboard to open it in the standard Search & Reporting view, it works. So the division of the events seems to fail as if something has been lost in passing from parent to child.

Thanks again for any time or attention given to this. Each event is a JSON document logged via HEC, if that's important to know.
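Going by the explanation elsewhere in this thread that a base search only passes indexed fields and fields it explicitly references, one way this case might be restructured is sketched below. It is untested and rests on assumptions: that severity is a search-time field which the bare index=my_index parent does not pass on, and that listing it in a `fields` command in the parent is enough to keep it. The data source IDs `ds_parent` and `ds_child` and their names are hypothetical.

```json
{
    "dataSources": {
        "ds_parent": {
            "type": "ds.search",
            "options": {
                "query": "index=my_index | spath eventClass | search eventClass=\"my.event\" | fields _time eventClass severity"
            },
            "name": "chained parent (hypothetical)"
        },
        "ds_child": {
            "type": "ds.chain",
            "options": {
                "extend": "ds_parent",
                "query": "| timechart count(eventClass) by severity"
            },
            "name": "chained child (hypothetical)"
        }
    }
}
```

The parent still serves as a shared starting point for several charts, but it now names every field the children rely on.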

When looking at the job inspector, there seems to be a massive difference (I am a novice at debugging this):

the normalizedSearch looks very different (due to chaining?).

I'm unable to progress this further because the prestats command is not recognised. I was hoping to recreate the search step by step to understand where this breaks, so that I can ask our Splunk gurus a focused question.