Join the Conversation

- Find Answers

- :

- Using Splunk

- :

- Splunk Search

- :

- Re: Difference calculation different time in diffe...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark Topic

- Subscribe to Topic

- Mute Topic

- Printer Friendly Page

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Difference calculation different time in different column

Hi

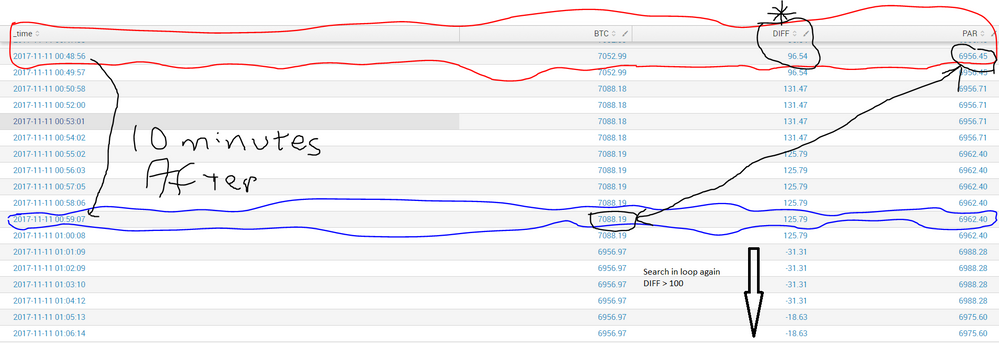

I want to calculate/simulate a data to analysis price difference, my data set in picture,

Process like this

- find first DIFF > 95 and get PAR and _time value

Red line : time is 2017-11-11 00:48:56 PAR is 6956.45

- find after 10 minutes line 2017-11-11 00:48:56 + 10 minutes = 2017-11-11 00:58:56

at 2017-11-11 00:59:07 BTC is 7088.19

- get difference BTC - PAR = 7088.19 - 6956.45 = 132

and continue to search second ,third etc where diff greater than 95 and calculate difference again

How it possible in splunk?

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

There is literally no way that this search could be any less efficient and i'm not 100% sure it's accurate. If you're working with a large dataset, this is probably not an actual option, but maybe it could spark some ideas.

I think if you had a requirement of going to the next event with 10 minutes, this would be easier. And it's probably easier than I'm making it anyway, but since that next event could be 11 minutes away, 30 minutes away, 3 days away, it's kind hard to set a window. Anyway...

I have my sample data in the main index with sorcetype of stash and timestamps in early october - I just used random numbers to create btc, diff, and par and all of the timestamps are a minute apart. And i'm limiting to 100 events for performance sake.

My main goal was to somehow join each event with the first event that >10 minutes away. I had to jump through hoops to do it, so there is probably a better way. And there is a probably a better way to tackle it in general, but this is something I guess.

index=main earliest=-1mon@mon latest=@mon sourcetype=stash

| sort _time

| head 100

| eval current=_time, next=_time+600

| map maxsearches=9999 search="|tstats min(_time) as next where index=main sourcetype=stash _time>=$next$| eval _time=$current$, btc=$btc$, diff=$diff$, par=$par$"

| table _time next btc diff par

| join next

[search index=main earliest=-1mon@mon latest=@mon sourcetype=stash

| rename _time as next, btc as btc2, diff as diff2, par as par2

| fields next, btc2, diff2, par2 ]

| where diff >100

| delta next as diff_secs

| streamstats reset_after="diff_secs_sum>=600" sum(diff_secs) as diff_secs_sum

| where diff_secs_sum >=600 OR isnull(diff_secs_sum)

| eval final_diff = btc2 - par

| table _time btc2 par final_diff

1) I get the events and i create a field for the current timestamp and one for a timestamp in 10 minutes (not sure I really needed to create the current one, but I did)

2) For each of those results, i map to a tstats search. That search is limited to only those events that are 10 minutes away from the current event, and then i grab the first one. So for the current event, I grab the timestamp of the event that we would need to check against if it meets our diff requirements. And so i continue on to map the timestamp, the next field and all of the current values.

At this point, we have fields for _time, next, btc, diff and par

3) Now I join this with the same data set, but where the next field of the current event matches the _time field of the subsearch. And in the subsearch i rename the value fields.

At this point we have fields for _time, next, btc, diff, par, btc2, diff2, par2. Meaning, we have all of the information in one row now to manipulate the data.

4) Next, I remove anything where the diff <=100. If it's not greater than 100, we don't care about it - it's not a possible starting point.

5) But we have more to eliminate, so i get the time difference between each of the remaining events, because we're going to want to remove anything that is included within a previous event's 10 minutes - those would not be starting points either.

6) So once we have those deltas, we do a streamstats to sum the deltas, but then reset the count after it reaches 600s (10 minutes).

7) Now we only keep those records where that fields is >=600 because that marks the start of the next "iteration". Also, the first record will be null and we want to keep that because it's obviously first one that meets the diff requirements

😎 And so now we're left to just do the math between btc2 and par.

I hope that helps and I hope somebody out here can improve on it...

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

This should be achievable with streamstats which allows you to get the event values X event forward/back depending how you sort the data. E.g.

... | sort - _time | streamstats window=10 latest(PAR) as PAR_10_forward | search DIFF > 95 | eval forward_diff=BTC-PAR_10_forward

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

It doesnt work, it calculate every _time sequentaliy, not pass 10 events

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

i'm a bit confused because you say to start where diff > 100, but you start with a diff of 96.54?

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I updated question; thx