Are you a member of the Splunk Community?

- Find Answers

- :

- Using Splunk

- :

- Splunk Search

- :

- Can you help me using dedup and count?

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark Topic

- Subscribe to Topic

- Mute Topic

- Printer Friendly Page

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Can you help me using dedup and count?

I have the following search based on F5 logs that count the HTTP POSTs by src in a five-minute bucket:

index=f5 action!=blocked http_method=POST

| bucket _time span=5m

| stats count by _time, src, website

| sort -count

| stats dc(website) as distinct_website, list(website) as Website, list(count) as count, sum(count) as Total by src

| where distinct_website >= 3

| sort -Total

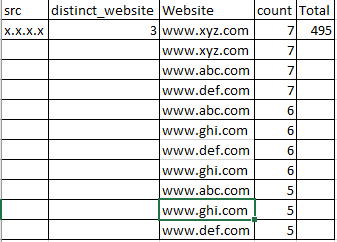

The problem is, I get an output that lists the same websites multiple times:

If I add '| dedup Website' before the 'where' command, I still see the duplicate websites.

if I add '| dedup website' after the '| stats count by _time, src, website' command, the websites are deduped, but I see the following output that contains a different src and websites altogether:

Do I need the dedup command at all for this search?

Thx

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

index=f5 action!=blocked http_method=POST

| bucket _time span=5m

| stats count by _time, src, website

| sort -count

| stats dc(website) as distinct_website, values(website) as Website, list(count) as count, sum(count) as Total by src

| where distinct_website >= 3

| sort -Total

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @jwalzerpitt

Try with values(website)

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Try this

index=f5 action!=blocked http_method=POST

| bucket _time span=5m

| stats count by _time, src, website

| sort -count

| stats dc(website) as distinct_website, list(count) as count, sum(count) as Total by src, website

| where distinct_website >= 3

| sort -Total

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thx for the reply and search. Using your suggested search returns the same output as my original search with dupes of websites listed.

As I think through this is adding the Total potentially causing an issue? Maybe I need to sum the count of individual POSTs against each website first to get rid of the dupes so that www.abc.com has a total count of 45 POSTs, and then www.def.com has a total count of 34 POSTs, and so on, with the Total being the aggregate number of POSTs from all websites?

Or perhaps because I'm running the search against a 24 hour time period with five-minute buckets that it's listing the dupes for the websites because there are POSTs scattered among the five-minute buckets throughout the 24 hour time period for the same website?

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I've edited by original answer. Try that one

It's difficult not testing this on my end, but in a nutshell, we are counting by website. In your second stats, you should have the by website clause.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

The edited search returns no results.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

It's going to take a little massaging on your end... You should remove line by line to identify whats breaking the search. I don't have your query in front of me so I can't do it for you..

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

it's not that the search is broke, just wondering if the search is the most efficient way to find src IPs with a lot of POSTs in a short amount of time.

I believe the line you suggested, "| stats count values(website) AS website by _time, src" provides a more efficient search, and I go back to a previous anaswer of mine in which I speculated that I might not be able to get rid of the duplicate websites as the POSTs are happening in different five-minute buckets.

I'm just trying to figure out how to total the count per website

Thx for the help

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Each field added after the by clause will decrease efficiency. You're correct about better efficiency with the values(website) this will provide multiple websites by src. the src would be deduped while the website field could be multi-valued. If you were to add that website field after the by clause on your first stats, then website would be deduped.

I'm confused, you said the search isn't broke, but you claimed it wasn't returning results.

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

My original post showed the results I was getting that had the duplicate websites and I asked if there was a way to get rid of them using the dedup command. The edited search returns no results but rolling back to your first search below returns results:

| bucket _time span=5m

| stats count values(website) AS website by _time, src

| sort -count

| stats dc(website) as distinct_website, list(website) as Website, list(count) as count, sum(count) as Total by src

| where distinct_website >= 3

| sort -Total