Join the Conversation

- Find Answers

- :

- Using Splunk

- :

- Splunk Search

- :

- Calculate diff in timings of down and up consecuti...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark Topic

- Subscribe to Topic

- Mute Topic

- Printer Friendly Page

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Calculate diff in timings of down and up consecutive events and show if more than 10 days

Example Logs(ignore time format as it is as expected by splunk :

1 jan neibhor is up

10 jan jan neibhor is down

20 jan neibhor is up

30 jan neibhor is down

1 feb neibhor is up

I will like to see time diff between down log and up log and if its more than 10 days then show when it went down and came up in table . I was able to see last down event and since when but couldnt get it working what i need .

index= "neighbor" AND Up OR Down | rex "neighbor (?\d+.\d+.\d+.\d+) (?\w+") | dedup host | where status = "Down" | eval "Down Since" = toString(date_mday) + " " + toString(date_month) + " " + toString(date_year)| table host ip status "Down Since"

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

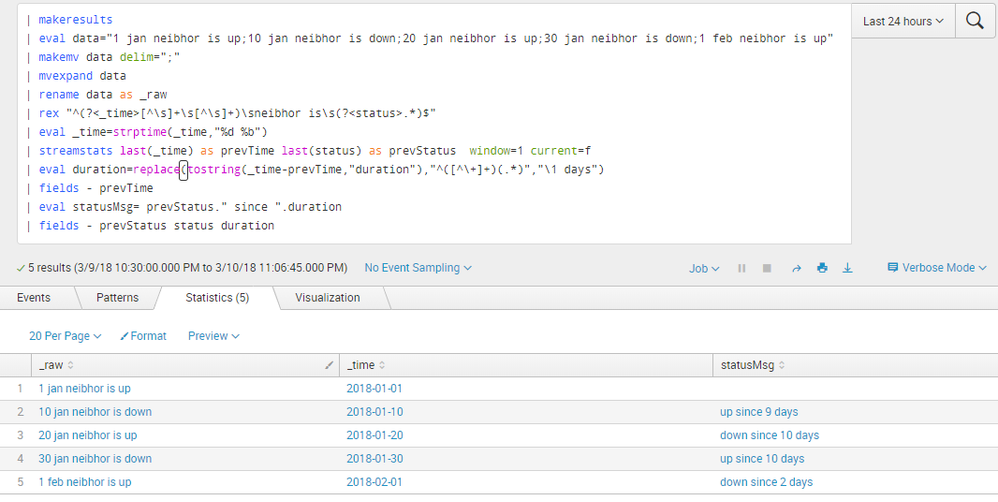

@atulitm, ideally Splunk shows up data in reverse chronological order, however, you sample has it in chronological order. In either case based on the description/clarification, each consecutive event will have a new status and you require the duration for which the status has been up or down.

Following is a run anywhere search which finds the uptime or downtime using streamstats command. Using window=1 you can get the previous event values with last() statistical aggregate function.

PS: streamstats is highly dependent on the sorted data based on time (unsorted data will give inconsistent results and based on the type of sort the calculations might need to be changed/reversed.

Following is based on chronological order of data. If the same is in reverse chronological order you might have to use | reverse, prior to streamstats command.

| makeresults

| eval data="1 jan neibhor is up;10 jan neibhor is down;20 jan neibhor is up;30 jan neibhor is down;1 feb neibhor is up"

| makemv data delim=";"

| mvexpand data

| rename data as _raw

| rex "^(?<_time>[^\s]+\s[^\s]+)\sneibhor is\s(?<status>.*)$"

| eval _time=strptime(_time,"%d %b")

| streamstats last(_time) as prevTime last(status) as prevStatus window=1 current=f

| eval duration=replace(tostring(_time-prevTime,"duration"),"^([^\+]+)(.*)","\1 days")

| fields - prevTime

| eval statusMsg= prevStatus." since ".duration

| fields - prevStatus status duration

PS: Commands from makeresult to rename generate mock data as per question. You would need to add your own query to fetch the data (as stated in your question).

Once you test rex to be working as expected, you should created a Field Extraction using the same Regular Expression for better maintenance and re-usability of the fields.

You can remove pipes with | field - to retain all the fields and understand how query is working.

| makeresults | eval message= "Happy Splunking!!!"

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@atulitm does your log always have up and down as consecutive events? Do you want the duration between each Down and Up status as Downtime trending or only if the latest status is Down then how long has it been down?

| makeresults | eval message= "Happy Splunking!!!"

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@niketnilay :

Sorry for confusion , Actually i noticed this query is looking for first down event and then up event which occured after 10 days but instead i would like to have query to check time difference between 2 consecutive logs and see if time difference is more than days to check downtime , As you said i want the duration between each Down and Up status as Downtime trending and check for duration between each cosequetive up and down event for downtime which is greater than 10 days .

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi atulitm,

you could use transpose command but it's very slow:

index=neighbor

| transaction host startswith="is down" endswith="is up"

| where duration > 864000

| table host _time

Bye.

Giuseppe

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Actually i noticed this query is looking for first down event and then up event which occured after 10 days but instead i would like to have query to check time difference between 2 consecutive logs and see if time difference is more than days to check downtime , i want the duration between each Down and Up status as Downtime trending and check for duration between each cosequetive up and down event for downtime which is greater than 10 days .

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Please use strptime command eval MyDate=strptime(date,"date format") and find the difference between the epoch time to get the epoch values then put your condition like diff>15001

i think this will surely help you.

eg:

your base query | eval MyDate=strptime(date,"%d %b %Y %H:%M:%S") | fieldformat StartTime=strptime(MyDate, "%Y-%m-%d %H:%M:%S") | eval Diff=tostring((StartTime-EndTime),"duration") | where Diff>15000

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi atulitm,

You can try transaction command here

index= "neighbor" | transaction startswith="neighbor is up" endswith="neighbor is down" | eval duration = (duration/60) | where duration > 14400 | table host _time

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Sorry , Actually this doesnt work because log exactly is like this which contains which is variable , not sure how to get that variable things

1 jan neibhor up

10 jan jan neibhor down Notification Sent

20 jan neibhor up

30 jan neibhor down Notification Sent