Forwarder sending data hours later than expected

Hi

We are seeing a long lag before our forwarders send data to Splunk: up to 4 hours!

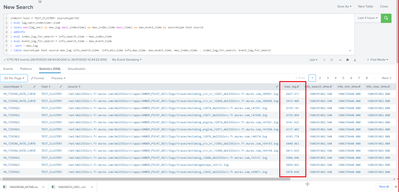

When we run the search below, the output shows a high max_lag (in seconds).

We are monitoring a directory with a very large number of files (100,000+). Could this be the issue, and is there a way to tell from the forwarder that it can't keep up? Or is there another solution?

We are testing the setting below now, but we are not sure it will help, because we are not sure this is the cause.

ignoreOlderThan = 1d
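
For context, `ignoreOlderThan` is set per monitor stanza in inputs.conf on the forwarder. A minimal sketch of where it would go, assuming a hypothetical monitored path `/data/app/logs` (the real path is not given in this post):

```ini
# inputs.conf on the forwarder (sketch only; the path is a placeholder, not from the post)
[monitor:///data/app/logs]
# Skip files whose modification time is more than 1 day in the past
ignoreOlderThan = 1d
```

As far as I understand the inputs.conf documentation, a file that gets skipped this way is not checked again until the forwarder restarts, even if it is written to later, so the window probably needs to be longer than any quiet period of a file you still want indexed.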

index=* host = TEST_CLUSTER1 sourcetype!=G1

| eval lag_sec=_indextime-_time

| stats max(lag_sec) as max_lag max(_indextime) as max_index_time max(_time) as max_event_time by sourcetype host source

| addinfo

| eval index_lag_for_search = info_search_time - max_index_time

| eval event_lag_for_search = info_search_time - max_event_time

| sort - max_lag

| table sourcetype host source max_lag info_search_time info_min_time info_max_time max_event_time max_index_time index_lag_for_search event_lag_for_search

The image below shows the slowness of some of the files.

Hi

We solved this in the end by changing a setting on the forwarder.

We increased parallelIngestionPipelines in server.conf on the forwarder from 3 to 6.

[general]

parallelIngestionPipelines = 6

Rob
