97% skipped searches ratio, with most Skipped Reports coming from accelerated data models of uninstalled apps

We have encountered an environment health issue: a large number of searches are being skipped, which suggests that the environment is not healthy.

We previously deployed apps (myWizard 360) to monitor KPIs of production and non-production servers. These apps added to the number of searches and forward additional data, which might also be contributing to the increased load on the server.

We have experienced skipped searches on our Search Head since March 15. The latest change we made at that time was setting up new deployment clients for the myWizard 360 apps. When we learned about the skipped searches, we reverted the configuration of the new deployment clients, but searches are still being skipped after the revert. A thorough investigation points to the apps below, which were running accelerated data models that are being skipped. I first disabled them and restarted Splunk, but the issue persisted. I then uninstalled the apps and restarted Splunk again, and the issue still remains.

aaam-devops-data

aaam-devops-ui-new

aiam-itsm-ticketanalysis-ui-common-new

Lastly, we asked the AWS team to reboot the box, but this had no significant impact on the skipped searches either.

You said that you disabled and deleted those apps, yet the errors still point to them as the source of the skipped accelerated data model searches. First, check whether the skipped searches are really still coming from those apps. If they are, then either the apps were not properly deleted, or copies of them are present somewhere else in your environment and are still forwarding data.
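
One way to run that check offline is to count skipped events per app in an export of the scheduler log. The snippet below is only a minimal sketch: it assumes the log lines carry `app=`, `savedsearch_name=` and `status=` key/value pairs, and the sample lines (including the saved search names) are hypothetical and shortened for illustration.

```python
import re
from collections import Counter

# Matches key=value and key="quoted value" pairs (assumed scheduler.log layout).
PAIR = re.compile(r'(\w+)=(?:"([^"]*)"|([^,\s]+))')


def parse_event(line):
    """Return the key/value pairs found in one log line as a dict."""
    return {
        m.group(1): m.group(2) if m.group(2) is not None else m.group(3)
        for m in PAIR.finditer(line)
    }


def skipped_by_app(lines):
    """Count skipped events per app and return (per-app counts, skip ratio)."""
    per_app = Counter()
    total = 0
    for line in lines:
        event = parse_event(line)
        if "status" not in event:
            continue  # not a scheduler status event
        total += 1
        if event["status"] == "skipped":
            per_app[event.get("app", "unknown")] += 1
    skipped = sum(per_app.values())
    ratio = skipped / total if total else 0.0
    return per_app, ratio


# Hypothetical sample lines, not taken from a real system.
SAMPLE = [
    'INFO SavedSplunker - user="nobody", app="aaam-devops-data", '
    'savedsearch_name="_ACCELERATE_DM_aaam-devops-data_Example_ACCELERATE_", status=skipped',
    'INFO SavedSplunker - user="nobody", app="aaam-devops-data", '
    'savedsearch_name="_ACCELERATE_DM_aaam-devops-data_Other_ACCELERATE_", status=skipped',
    'INFO SavedSplunker - user="nobody", app="aiam-itsm-ticketanalysis-ui-common-new", '
    'savedsearch_name="Example report", status=skipped',
    'INFO SavedSplunker - user="admin", app="search", '
    'savedsearch_name="Daily report", status=success, run_time=1.2',
]

if __name__ == "__main__":
    counts, ratio = skipped_by_app(SAMPLE)
    for app, count in counts.most_common():
        print(f"{app}: {count} skipped")
    print(f"skip ratio: {ratio:.0%}")
```

If an uninstalled app still shows up in the counts for recent events, its saved searches or data model definitions are probably still present somewhere on the search head.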

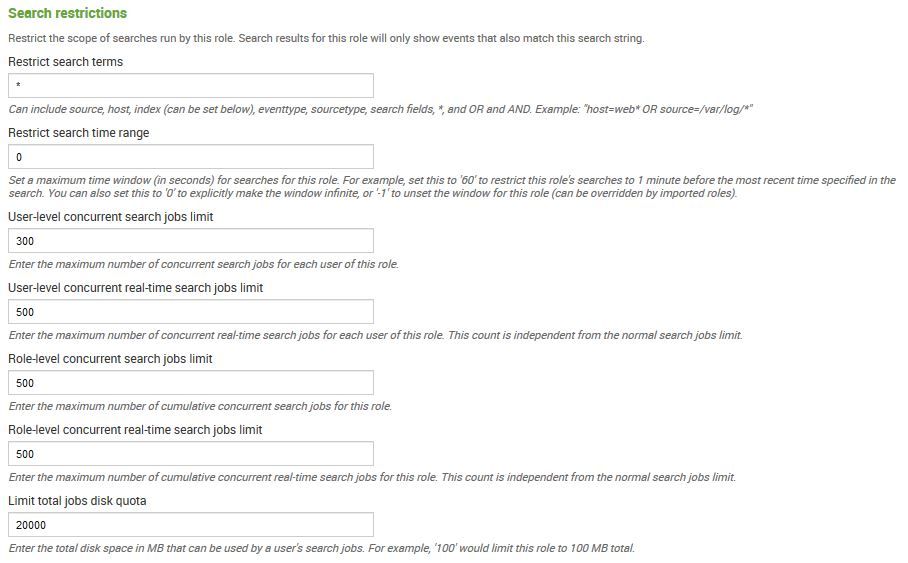

In your search head cluster, the captain also acts as the scheduler. If the number of searches (scheduled or real-time) exceeds the concurrency limits, then the scheduler will skip them.

The scheduler will try to re-execute the skipped searches if they fall within the configured window/skew. If they do not, they will not be attempted again until their next scheduled date/time (if scheduled).

The DMC (Distributed Management Console) provides useful insight into this behavior, and you can adjust a number of config files to modify it.

However, one of the easiest tests is to increase the User-level and/or Role-level concurrent search jobs limit.

This can be done from the web UI on any of the search head cluster members by going to Settings » Access controls » Roles and selecting a role (admin, for example).

Within that section you will find the concurrency settings.

An upvote would be appreciated and Accept Solution if it helps!

To clarify, data model acceleration is built in small portions at a time by scheduled jobs/searches, which count against your concurrency limits. They generally have the lowest priority, though, and will be the first ones skipped when necessary.

Have you checked for any orphaned, local versions of datamodels.conf?

e.g.

$SPLUNK_HOME/etc/users/user-name-here/app-name-here/local/datamodels.conf

$SPLUNK_HOME/etc/apps/app-name-here/local/datamodels.conf
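
A quick way to look for such leftovers is to walk those directories and list every stanza that still has acceleration switched on. This is a rough sketch, not a full conf parser: it assumes the two directory layouts shown above (plus the matching `default` directories), treats only `1` and `true` as enabled, and ignores continuation lines. The function name and the `/opt/splunk` fallback are illustrative.

```python
import os
from pathlib import Path


def accelerated_datamodels(splunk_home):
    """Yield (conf_path, stanza) for each stanza with acceleration enabled."""
    root = Path(splunk_home) / "etc"
    # App-level and user-level locations; the middle wildcard covers default/ and local/.
    patterns = ["apps/*/*/datamodels.conf", "users/*/*/*/datamodels.conf"]
    for pattern in patterns:
        for conf in sorted(root.glob(pattern)):
            stanza = None
            text = conf.read_text(encoding="utf-8", errors="replace")
            for raw in text.splitlines():
                line = raw.strip()
                if not line or line.startswith("#"):
                    continue  # skip blanks and comments
                if line.startswith("[") and line.endswith("]"):
                    stanza = line[1:-1]
                    continue
                key, sep, value = line.partition("=")
                enabled = value.strip().lower() in {"1", "true"}
                if sep and key.strip() == "acceleration" and enabled:
                    yield conf, stanza


if __name__ == "__main__":
    home = os.environ.get("SPLUNK_HOME", "/opt/splunk")  # assumed install path
    for conf, stanza in accelerated_datamodels(home):
        print(f"{conf}: [{stanza}] is still accelerated")
```

Any hit under an app that was supposedly removed is a candidate for the skipped acceleration searches.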

An upvote would be appreciated and Accept Solution if it helps!

Set up a Monitoring Console, run all the Health Checks, and resolve all the problems reported. If you still have problems, then try these:

On Search Heads in limits.conf:

[scheduler]

#https://www.rfaircloth.com/2017/12/12/tuning-splunk-when-max-concurrent-searches-are-reached

# This lets DMAs use more search allocation

auto_summary_perc = 100

# This lets scheduled searches use more search allocation

max_searches_perc = 75

On Search Heads in savedsearches.conf:

# Also for ALL existing saved searches!

[default]

#https://www.splunk.com/blog/2017/10/10/schedule-windows-vs-skewing.html

schedule_window = auto

allow_skew = 5m

On Search Heads in datamodels.conf:

[default]

#https://conf.splunk.com/files/2017/slides/splunk-search-and-performance-improvements.pdf

acceleration.poll_buckets_until_maxtime = true

# There is a bug in v7.1.* that causes memory leaks for ADMs.

# Setting this to <= "Summary Range" for every ADM avoids it.

# This assumes every ADM has "Summary Range" >= "1 Month".

#https://docs.splunk.com/Documentation/Splunk/7.1.0/ReleaseNotes/Knownissues#Highlighted_issues

acceleration.backfill_time = -1mon

#https://answers.splunk.com/answers/543887/accelerated-data-model-100-complete-even-though-mo.html

# v7.1 and higher

acceleration.allow_skew = 100%

On Search Heads in limits.conf:

[search]

#https://www.rfaircloth.com/2017/12/12/tuning-splunk-when-max-concurrent-searches-are-reached/

# The base value is 6.

# Start at 20 and iteratively increase by 10

# until utilization on IDX or SH is ~60% CPU/memory

# or until you have no more skipped searches.

base_max_searches = 50
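
To see roughly how these settings interact, the sketch below works through the arithmetic. It assumes the limits are derived as described in the limits.conf documentation: total historical searches = `max_searches_per_cpu` x CPUs + `base_max_searches`, the scheduler gets `max_searches_perc` percent of that, and auto-summarization (data model acceleration) gets `auto_summary_perc` percent of the scheduler share. The 16-CPU search head, the helper names, and rounding down to whole searches are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class ConcurrencyLimits:
    total_historical: int  # all concurrent historical searches
    scheduler: int         # share available to scheduled searches
    auto_summary: int      # share available to acceleration searches


def concurrency_limits(cpus, base_max_searches=6, max_searches_per_cpu=1,
                       max_searches_perc=50, auto_summary_perc=50):
    """Estimate search concurrency limits from limits.conf settings."""
    total = max_searches_per_cpu * cpus + base_max_searches
    # Integer division rounds down; the exact rounding Splunk uses is assumed.
    scheduler = total * max_searches_perc // 100
    auto_summary = scheduler * auto_summary_perc // 100
    return ConcurrencyLimits(total, scheduler, auto_summary)


if __name__ == "__main__":
    # Default settings on a hypothetical 16-CPU search head.
    print(concurrency_limits(cpus=16))
    # The tuned values suggested in this post.
    print(concurrency_limits(cpus=16, base_max_searches=50,
                             max_searches_perc=75, auto_summary_perc=100))
```

Under these assumptions the defaults leave 5 slots for acceleration searches (22 total, 11 for the scheduler), while the tuned values leave 49 (66 total, 49 for the scheduler), which is why raising base_max_searches has such a large effect.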

If you still cannot get it to work, I suggest you contact somebody about doing a Splunk Health Check project with your team (we @ Splunxter provide such services, as do many others).

P.S. When I last had this problem, the key thing was base_max_searches.