_indextime is 5 hrs ahead of event time (_time)

Hi,

We run Splunk Enterprise 7.2.6 in our environment. I noticed latencies (the difference between _time and _indextime) ranging from 1 hr to 10 hrs. My Splunk Heavy Forwarders (HF) are in the GMT timezone, so I set TZ = UTC for a few of the sourcetypes in props.conf on the HF, and that worked.

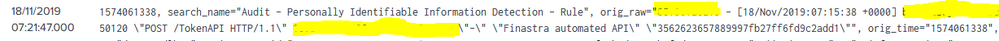

I am still seeing a time difference of 5 hrs to 10 hrs on a few hosts for specific sourcetypes. I am unsure what is causing the latency in _indextime. A screenshot is attached for reference.

Can someone please help me fix this issue?

Hi @vijayad,

Where exactly is the data coming from, and at what point is it getting cooked? If it goes through a HF, make sure you set the TZ configuration for the host in props.conf.

Hi,

As suggested, I have removed EST and used an appropriate TZ definition. I also tried UTC-05:00 for that specific host, but it is not working 😞

Please find below screenshots

1. Difference in event timestamp and search time.

2. indextime latency

Is there a way to create standard timezones across all data coming into Splunk? Please assist.

This is a little confusing. What you are showing in the first screenshot is not a raw event; is it the output of some scheduled search, or a notable event? How exactly is that relevant to this discussion?

Can you perhaps show the search you ran to get the table from the 2nd picture?

Screenshot 1 is the output of a scheduled search, which is categorised as sourcetype stash, and it has latency in _indextime.

The following is the search I used, which produced the output in screenshot 2:

- | eval timediff=(_indextime-_time) | eval Hoursoff=round(timediff/3600) | search NOT Hoursoff=0 | rename _indextime as Indextime | eval Indextime=strftime(Indextime, "%d%m%y %H:%M:%S") | eval Time=strftime(_time, "%d%m%y %H:%M:%S") | dedup sourcetype | table Time, Indextime, Hoursoff, date_zone, host, sourcetype, source

I would start by checking the timezone config of the originating hosts, especially if the offset is (almost) exactly a whole number of hours.

Quite possibly a US host is sending data with timestamps in its local timezone (e.g. US Eastern in winter or US Central in summer, both GMT-5) to your HF / Indexer(s), which you configured to interpret the data as GMT, causing this skew.

Ideally source systems are configured to report timezone in the timestamp, to prevent any confusion (and avoid specific config to adjust for offsets). If that is not an option, another solution can be to install a Heavy/Universal Forwarder in the same timezone as the initial collection point. If that is also not an option, you'll need to put specific config in place to assign the correct timezone (e.g. using host based stanzas in props.conf).
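To make the arithmetic behind that skew concrete, here is a minimal Python sketch (not Splunk code). It assumes a hypothetical host that writes zone-less timestamps in a fixed UTC-5 local time, and an event that is indexed 30 seconds after it happens; the timestamp value and the helper name `parse_as` are made up for illustration.

```python
from datetime import datetime, timedelta, timezone

# Assumption: the source host stamps events in a fixed UTC-5 local time
# and writes no zone information into the timestamp.
SOURCE_TZ = timezone(timedelta(hours=-5))


def parse_as(raw_timestamp, assumed_tz):
    """Return epoch seconds for a zone-less timestamp read in assumed_tz."""
    naive = datetime.strptime(raw_timestamp, "%Y-%m-%d %H:%M:%S")
    return naive.replace(tzinfo=assumed_tz).timestamp()


raw = "2019-12-02 09:00:00"           # hypothetical timestamp as written by the host
true_time = parse_as(raw, SOURCE_TZ)   # when the event really happened
index_time = true_time + 30            # assume it was indexed 30 seconds later

# What the parsed event time becomes if the timestamp is read as UTC.
time_if_read_as_utc = parse_as(raw, timezone.utc)

# Same calculation as Hoursoff in the search: round((_indextime - _time) / 3600)
hours_off_wrong_tz = round((index_time - time_if_read_as_utc) / 3600)
hours_off_right_tz = round((index_time - true_time) / 3600)

print(hours_off_wrong_tz, hours_off_right_tz)
```

With the wrong zone the event appears to have been indexed five hours late, even though the real delay is only seconds; with the right zone the offset disappears.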

I have uploaded a few more screenshots to this question. I am not sure whether you have faced this issue before. Could you please review them and suggest what can be done?

Hi,

The source data comes from the EST timezone, so I manually added a TZ=EST definition (I also tried GMT-5) for the specific host on the HF. Both failed 😞

I am still seeing latency in _indextime. As I mentioned earlier, this is syslog data. Is there any other way I can fix this issue?

Also, when defining the TZ value, can I define it as follows?

[host::.... AND sourcetype::syslog]

TZ = EST

Is this the right definition?

No, you cannot combine host and sourcetype criteria like that. It is either one or the other.

As for using 'EST', not sure if that is ideal. It is listed as deprecated:

https://en.wikipedia.org/wiki/List_of_tz_database_time_zones

This wiki page is referred to in the Splunk docs as the reference for acceptable time zone names, see: https://docs.splunk.com/Documentation/SplunkCloud/latest/Data/Applytimezoneoffsetstotimestamps

Also: if your source system observes DST in summer, it is better to use a region-based name, for example America/New_York.

Also: make sure to deploy this config on the first HF that touches the data. And make sure the host field is actually populated with the value used in props.conf. Note that for syslog data, Splunk TAs often override the host value based on event content, so what you see in Splunk Web may not be the host name with which the data originally arrives at the HF.
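The DST point can be illustrated with a small Python sketch (again, not Splunk code). It assumes a source host in New York, whose clocks run at UTC-5 in winter and UTC-4 in summer, and a configuration that always applies a fixed UTC-5 offset, which is what the tz database's EST denotes. The sample timestamps and helper names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

EST_FIXED = timezone(timedelta(hours=-5))  # fixed offset applied all year round
EDT = timezone(timedelta(hours=-4))        # what New York clocks actually use in summer


def to_epoch(raw_timestamp, tz):
    """Return epoch seconds for a zone-less timestamp read in tz."""
    naive = datetime.strptime(raw_timestamp, "%Y-%m-%d %H:%M:%S")
    return naive.replace(tzinfo=tz).timestamp()


def parse_error_hours(raw_timestamp, actual_tz, configured_tz):
    """Hours by which the parsed time is ahead of the true event time."""
    parsed = to_epoch(raw_timestamp, configured_tz)
    true = to_epoch(raw_timestamp, actual_tz)
    return (parsed - true) / 3600


# Winter: the host clock and the fixed offset agree.
winter_error = parse_error_hours("2019-01-15 09:00:00", EST_FIXED, EST_FIXED)
# Summer: the host clock has moved to UTC-4, the fixed offset has not.
summer_error = parse_error_hours("2019-07-15 09:00:00", EDT, EST_FIXED)

print(winter_error, summer_error)
```

In this sketch the fixed offset is correct in winter but places summer events one hour in the future, which is the drift a region-based name such as America/New_York is meant to avoid.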

Have you determined whether this is a time extraction/time zone issue or a true indexing lag?

Where is the data that has this lag coming from? Is it Windows/Linux logs, or syslog? Or something else?

Hi,

How can I determine whether this is a true indexing lag? I would appreciate any suggestions.

Usually, lag caused by a data collection issue fluctuates. An offset that is fairly static and very close to an exact number of hours is likely a timezone config issue.

One way to be sure is to check on the affected host when the event really happened (assuming the host also stores the events locally for a while). Or trigger some specific event on the host and see when that becomes visible in Splunk and with what timestamp.
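As a rough illustration of the "static whole-hour offset versus fluctuating lag" rule of thumb, here is a hedged Python sketch. It takes a list of `_indextime - _time` differences in seconds for one host or sourcetype (for example, exported from a search). The function name, the two-minute tolerances and the sample values are assumptions chosen for illustration, not Splunk defaults.

```python
from statistics import mean, pstdev


def classify_lag(lags_seconds, hour_tolerance=120, spread_tolerance=120):
    """Guess whether a set of index-time lags looks like a timezone offset.

    lags_seconds: (_indextime - _time) values in seconds for one host/sourcetype.
    hour_tolerance: how far the average may sit from a whole hour (assumed value).
    spread_tolerance: maximum standard deviation to count as "static" (assumed value).
    """
    average = mean(lags_seconds)
    spread = pstdev(lags_seconds)
    nearest_hour = round(average / 3600)
    distance_from_whole_hour = abs(average - nearest_hour * 3600)

    # Static offset sitting on a non-zero whole number of hours.
    if (nearest_hour != 0
            and distance_from_whole_hour <= hour_tolerance
            and spread <= spread_tolerance):
        return "likely timezone offset"
    # Lag that varies a lot from event to event.
    if spread > spread_tolerance:
        return "likely ingestion lag"
    return "inconclusive"


static_offset = [18010, 18025, 18002, 18030]   # hypothetical: about 5 hours, barely moving
fluctuating = [300, 4000, 12000, 700]          # hypothetical: varies widely

print(classify_lag(static_offset))
print(classify_lag(fluctuating))
```

This is only a heuristic; checking the event on the originating host, as described above, remains the more reliable test.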

I can see the data is coming from syslog. Below are a few of the sourcetypes that also show indexing lag:

- syslog

- stash

I also noticed that the date_zone associated with these hosts is either "local" or "0".