Why am I unable to index contents of a text file being monitored by universal forwarder?

Hi,

We are trying to get DNS logs into Splunk. The logs are written to a .txt file, and the goal is to use the Splunk forwarder to parse and index them. After creating the [monitor: .. ] stanza in inputs.conf, we still do not see Splunk picking up the logs from that file. To test, I replicated a similar file setup on my local desktop.

File location: C:\DNS logs\DNS_log.txt

inputs.conf:

[monitor://C:\DNS logs\DNS_log.txt]

disabled = false

sourcetype = win_dns

From splunkd.log:

09-21-2016 11:03:28.886 -0400 INFO TailingProcessor - Parsing configuration stanza: monitor://C:\DNS logs\DNS_log.txt.

09-21-2016 11:03:28.886 -0400 INFO TailingProcessor - Adding watch on path: C:\DNS logs\DNS_log.txt.

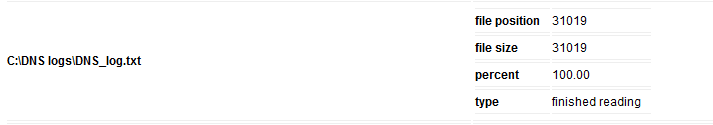

From inputstatus:

What could be going wrong in this setup?

Thanks,

~ Abhi

The location of your inputs.conf should not matter, although I would not put it in etc/system/local. (I reserve etc/system for true "server-level" settings, like the server name. That's a common practice.)

If you put inputs.conf in an app, be sure that the app itself is enabled.

Several of the other folks have made great suggestions. Here is another one. Based on the inputstatus, Splunk believes it has already read and processed this file. If there was an error in your original configuration that you later corrected, Splunk will not automatically re-read the file, so you may need to reset the file pointer. There are a couple of ways to do that.
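To make the "file pointer" idea concrete, here is a minimal Python sketch of a reader that remembers how far it got in a file. It is only a toy model, not Splunk's actual tracking database: the class name `ToyCheckpointStore`, its methods, and the use of `zlib.crc32` over the first 256 bytes as the lookup key are all assumptions made for illustration.

```python
import os
import tempfile
import zlib


class ToyCheckpointStore:
    """Toy stand-in for a file-tracking database (NOT Splunk's real format)."""

    def __init__(self, head_bytes=256):
        self.head_bytes = head_bytes
        self.offsets = {}  # key: CRC of the file head, value: bytes already read

    def _key(self, path):
        # Identify the file by a checksum of its first bytes, not by its name.
        with open(path, "rb") as handle:
            return zlib.crc32(handle.read(self.head_bytes))

    def read_new(self, path):
        """Return only the bytes that were not read on a previous call."""
        key = self._key(path)
        start = self.offsets.get(key, 0)
        with open(path, "rb") as handle:
            handle.seek(start)
            data = handle.read()
        self.offsets[key] = start + len(data)
        return data

    def reset(self, path):
        """Forget the stored offset so the next read starts at byte 0."""
        self.offsets.pop(self._key(path), None)


def demo():
    store = ToyCheckpointStore()
    with tempfile.TemporaryDirectory() as folder:
        path = os.path.join(folder, "DNS_log.txt")
        with open(path, "wb") as handle:
            handle.write(b"line one\n")
        first = store.read_new(path)   # whole file
        second = store.read_new(path)  # nothing new, pointer is at the end
        store.reset(path)
        third = store.read_new(path)   # whole file again after the reset
    return first, second, third
```

The second read returns nothing because the stored offset already points at the end of the file, which mirrors the situation where a corrected configuration does not cause already-read data to be sent again.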

If you are using a forwarder to get data into Splunk, then here are some troubleshooting steps: Troubleshoot the universal forwarder

You might also find help in the Splunk Troubleshooting Manual: Can't find data

It might be faster to read the manuals, rather than doing a long asynchronous Q&A chain with the community! Although we are happy to answer questions for you!

The DNS log could be locked by the system, or the forwarder does not have permission to read the log file in its natural state.

Check the security properties on the file, and verify that the forwarder service user has access to the file.
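One quick way to test this outside of Splunk is to try opening the file from a small script that runs under the same account as the forwarder service. The sketch below is only a rough check under that assumption; the function name `check_readable` is made up for this example, and a successful read here does not prove the forwarder itself can read the file.

```python
def check_readable(path, sample_bytes=64):
    """Try to open and read the start of a file; return (ok, detail)."""
    try:
        with open(path, "rb") as handle:
            handle.read(sample_bytes)
    except FileNotFoundError:
        return False, "file not found"
    except PermissionError:
        # On Windows this can also be raised when another process holds a lock.
        return False, "permission denied or file locked"
    except OSError as error:
        return False, f"os error: {error}"
    return True, "readable"
```

For example, `check_readable(r"C:\DNS logs\DNS_log.txt")` would be the call for the test file in this thread.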

You might also check the sourcetype with a search for All Time, because the timestamp might not have been read correctly.

Hi lukejadamec,

For this testing, the log file is just a regular text file I created manually. I am adding the data manually just to see if the forwarder picks it up. I'll check the file permissions on the server side, but as far as my desktop is concerned, a locked file should not be an issue, correct?

Also, if the file is locked, wouldn't Splunk complain about that in splunkd.log?

I'm not having any trouble with this log file. My guess is your test log files are too similar to those already indexed.
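Why would "too similar" matter? As far as I know, Splunk by default decides whether it has seen a file before from a CRC of the beginning of the file (256 bytes by default, see `initCrcLength` in inputs.conf), and `crcSalt = <SOURCE>` mixes the file path into that check. The Python sketch below only illustrates the idea: `head_key` is a made-up helper, `zlib.crc32` stands in for whatever checksum Splunk really uses, and the padded header is invented sample data.

```python
import zlib


def head_key(content, source="", use_source_salt=False, head_bytes=256):
    """CRC over the first head_bytes of content, optionally salted with the path."""
    salt = source.encode("utf-8") if use_source_salt else b""
    return zlib.crc32(salt + content[:head_bytes])


# Two test files that share a long identical header and differ only afterwards.
header = b"DNS Server log file creation at 9/21/2016 12:23:47 PM\n" + b"-" * 300
file_a = header + b"first test entry\n"
file_b = header + b"a different test entry\n"

# Without a salt, both files produce the same key and look like one file.
same_without_salt = head_key(file_a) == head_key(file_b)

# With the (hypothetical) source paths mixed in, the keys differ.
same_with_salt = (
    head_key(file_a, r"C:\DNS logs\DNS_log1.txt", use_source_salt=True)
    == head_key(file_b, r"C:\DNS logs\DNS_log2.txt", use_source_salt=True)
)
```

If hand-made test files all start with the same copied header, they can look like an already-indexed file even though the later lines differ.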

To handle the header, you probably want to set SHOULD_LINEMERGE to true in props.conf, and set the timestamp lookahead (MAX_TIMESTAMP_LOOKAHEAD) to exclude the time in the header.

You didn't specify an index name in the monitoring stanza, so the logs should be going to index=main (default index). Are you checking in that index for your data (with correct time range of course)?

Hi somesoni2,

I added index=main in the query but still the only data we can see is from Windows event logs. There is another custom log we added which is being indexed correctly. Difference is that this custom log is under Event Viewer and not a log file.

Would the location of the [monitor..] stanza matter? Should it be under /etc/system/local/inputs.conf or under inputs.conf for the splunk_TA_windows add-on?

Thanks,

~ Abhi

Can you post some sample entries from the log file? One possible reason is that the events have a very old or a future timestamp. In that case Splunk either deletes them right after indexing because they fall outside the data retention period, or sets _time to a future date, so they do not show up in a regular search time range.
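The two failure modes can be pictured with a small Python sketch that sorts an event time into "future", "older than retention", "outside search window", or "visible". The function name and the default values for `retention_days` and `search_window_hours` are assumptions for illustration only; they are not taken from any real index configuration.

```python
from datetime import datetime, timedelta


def classify_event_time(event_time, now, retention_days=365, search_window_hours=24):
    """Say why an event with this timestamp might not show up in a search."""
    if event_time > now:
        return "future"
    age = now - event_time
    if age > timedelta(days=retention_days):
        # Assumed retention period; such events could be aged out right away.
        return "older than retention"
    if age > timedelta(hours=search_window_hours):
        # Indexed, but a "last 24 hours" style search would miss it.
        return "outside search window"
    return "visible"
```

Searching over All Time is the practical way to rule out the last two cases.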

This is how the entries are added in the log file.

9/21/2016 12:23:47 PM 0D38 PACKET 0000005FD8D2A1C0 UDP Rcv X.X.X.X 41ea Q [0001 D NOERROR] A (12)xxxxxxx(4)yyy(3)zzz(5)local(0)

9/21/2016 12:23:47 PM 0D38 PACKET 0000005FD8D2A1C0 UDP Snd Y.Y.Y.Y 41ea R Q [8385 A DR NXDOMAIN] A (12)aaaaaaa(4)yyy(3)zzz(5)local(0)

Although, at the beginning of the file, there is the following entry. It is just basic summary about the data, but could this be somehow causing the parsing to break?

DNS Server log file creation at 9/21/2016 12:23:47 PM

Log file wrap at 9/21/2016 12:23:47 PM

Message logging key (for packets - other items use a subset of these fields):

| Field # | Information | Values |
| --- | --- | --- |
| 1 | Date | |
| 2 | Time | |
| 3 | Thread ID | |
| 4 | Context | |
| 5 | Internal packet identifier | |
| 6 | UDP/TCP indicator | |
| 7 | Send/Receive indicator | |
| 8 | Remote IP | |
| 9 | Xid (hex) | |
| 10 | Query/Response | R = Response; blank = Query |
| 11 | Opcode | Q = Standard Query; N = Notify; U = Update; ? = Unknown |
| 12 | [ Flags (hex) | |
| 13 | Flags (char codes) | A = Authoritative Answer; T = Truncated Response; D = Recursion Desired; R = Recursion Available |
| 14 | ResponseCode ] | |
| 15 | Question Type | |
| 16 | Question Name | |
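To see how the header differs from the real entries, here is a small Python sketch that treats a line as a packet entry only when it starts with a timestamp in the format shown in the samples. The names `PACKET_LINE`, `TIME_FORMAT`, and `parse_packet_time` are made up for this example, and the pattern is inferred from the two sample entries only, so other DNS debug log variants may need a different one.

```python
import re
from datetime import datetime

# A packet entry starts with e.g. "9/21/2016 12:23:47 PM " at the very beginning.
PACKET_LINE = re.compile(r"^(\d{1,2}/\d{1,2}/\d{4} \d{1,2}:\d{2}:\d{2} [AP]M) ")
TIME_FORMAT = "%m/%d/%Y %I:%M:%S %p"


def parse_packet_time(line):
    """Return the entry's timestamp, or None for header and summary lines."""
    match = PACKET_LINE.match(line)
    if match is None:
        return None
    return datetime.strptime(match.group(1), TIME_FORMAT)
```

The header lines do contain a date and time, but not at the start of the line, so this check skips them. That is the same distinction a timestamp-prefix or lookahead setting has to make on the Splunk side.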

Can you check for other errors or warnings in splunkd.log?

From Splunk, run:

index=_internal sourcetype=splunkd host=yourforwarderWhereFileExists NOT log_level=INFO component=Tailing*
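If the forwarder's internal logs are not reaching the indexer, roughly the same filter can be applied to splunkd.log directly on the forwarder. The Python sketch below assumes the line layout shown in the splunkd.log excerpts earlier in this thread (date, time, zone, level, component, " - ", message); `LOG_LINE` and `tailing_problems` are made-up names for this example.

```python
import re

# Layout assumed from the excerpts in this thread:
# 09-21-2016 11:03:28.886 -0400 INFO TailingProcessor - message text
LOG_LINE = re.compile(
    r"^(?P<date>\d{2}-\d{2}-\d{4}) +(?P<time>\d{2}:\d{2}:\d{2}\.\d{3}) +"
    r"(?P<zone>[+-]\d{4}) +(?P<level>[A-Z]+) +(?P<component>\S+) +- +(?P<message>.*)$"
)


def tailing_problems(lines):
    """Return (level, component, message) for non-INFO lines from Tailing* components."""
    problems = []
    for line in lines:
        match = LOG_LINE.match(line.rstrip("\n"))
        if match is None:
            continue  # skip anything that does not follow the assumed layout
        if match["level"] != "INFO" and match["component"].startswith("Tailing"):
            problems.append((match["level"], match["component"], match["message"]))
    return problems
```

Passing an open file handle for splunkd.log as `lines` works, since the function only iterates over it line by line.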