Splunk Enterprise does not receive any data from Universal Forwarder

Hi,

I'm a trial user of Splunk.

I have a setup in Azure: one Azure VM running Splunk Enterprise and four Azure VMs with Universal Forwarders that should send data to the Enterprise server.

I can see those instances listed under Forwarder Management on the Enterprise server, but the UFs are not sending any data. Ports 9997 and 8089 are open both inbound and outbound on the UF servers and on the server running Splunk Enterprise. They are also open in the Azure NSG for all VMs.

When I look at the splunkd logs on the UF servers, the handshake completes and the Enterprise server's IP is reached. When I restart a UF, it reports that everything is fine (the port is open, etc.).

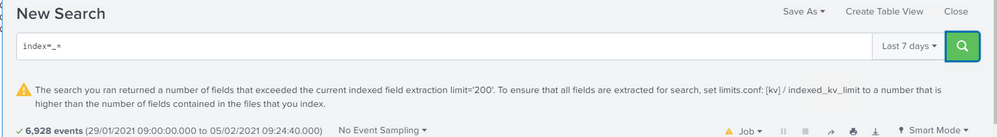

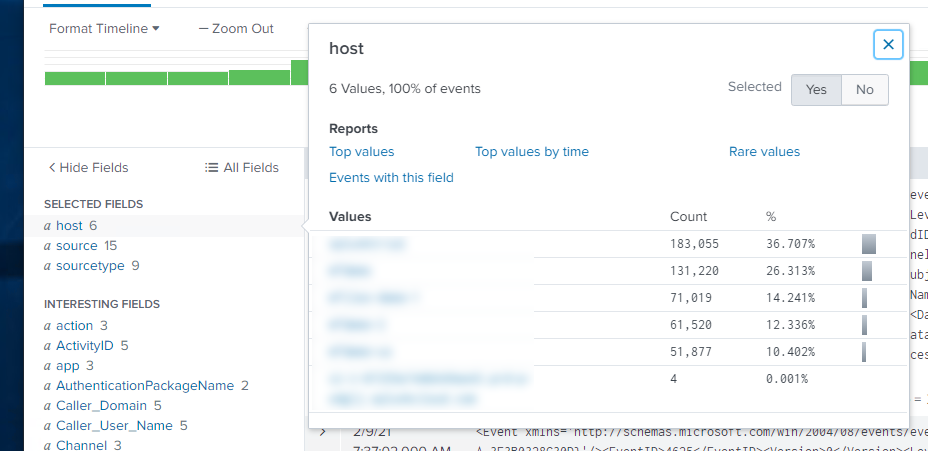

But nothing more happens. When searching `index=_*`, I can't see the other VMs (the ones with UFs) as hosts, only the one running Splunk Enterprise, i.e. the server itself.

I don't know how to troubleshoot this any further.

Earlier it gathered events from the server running Splunk Enterprise, but not anymore: it captured 6928 events and nothing after that. There is also a warning, shown in the attached picture.

Any ideas?

Thanks!

I got it solved. I was missing the step that deploys the UF configuration (i.e. inputs.conf) from the deployment server to the UFs on my sending machines, so my configuration was only partly done. Now it works! Thanks for your hints!
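For readers hitting the same problem: on a deployment server, apps meant for clients live under `$SPLUNK_HOME/etc/deployment-apps/`, and each app still has to be mapped to the forwarders through a server class (in Forwarder Management or `serverclass.conf`). The Python sketch that follows only writes a minimal app containing an `inputs.conf`. The app name `uf_base_inputs` and the Windows event log stanzas are assumptions for illustration (the forwarders in this thread appear to be Windows machines); collect whatever you actually need.

```python
from pathlib import Path

# Hypothetical app name and inputs; replace them with what you actually want to collect.
APP_NAME = "uf_base_inputs"
INPUTS_CONF = """\
# Windows event logs to forward (assumes Windows Universal Forwarders)
[WinEventLog://Application]
disabled = 0

[WinEventLog://System]
disabled = 0
"""


def create_deployment_app(splunk_home: Path, app_name: str = APP_NAME,
                          inputs: str = INPUTS_CONF) -> Path:
    """Write <splunk_home>/etc/deployment-apps/<app_name>/local/inputs.conf and return its path."""
    local_dir = splunk_home / "etc" / "deployment-apps" / app_name / "local"
    # parents=True creates the whole app directory tree if it does not exist yet.
    local_dir.mkdir(parents=True, exist_ok=True)
    conf_path = local_dir / "inputs.conf"
    conf_path.write_text(inputs, encoding="utf-8")
    return conf_path


# Example (run on the deployment server):
# create_deployment_app(Path("/opt/splunk"))
```

After the app exists, `splunk reload deploy-server` (or the Forwarder Management UI) makes the deployment server pick it up, and the clients download it on their next phone-home. You will usually also want "Restart Splunkd" enabled for the app so the forwarders load the new inputs.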

regards,

Jarmo

If your outputs.conf was set up properly and nothing was blocking the connection, the forwarders' default _internal logs should still have been sent, even before any inputs.conf was deployed.

Regardless, glad you got it figured out! Please mark your answer as the accepted answer, since it resolved the initial question, in case anyone else comes across this.

Jacob

If you feel this response answered your question, please do not forget to mark it as such. If it did not, but you do have the answer, feel free to answer your own post and accept that as the answer.

Hi @JakeK,

Are you receiving Splunk internal logs from those UFs (index=_internal) or not?

If not, there's a connection problem to analyze.

If instead you are receiving Splunk internal logs, the problem is in the inputs configuration:

which Technical Add-ons are you using on those UFs for ingesting?
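Giuseppe's check boils down to a small decision rule per forwarder. In this hypothetical Python helper, `internal_hosts` would come from a search such as `index=_internal | stats count by host`, and `data_hosts` from the same search against the index your inputs write to; the helper assumes the host names in both lists match your forwarder names exactly.

```python
def diagnose(forwarders, internal_hosts, data_hosts):
    """Classify each expected forwarder using the _internal check.

    forwarders     -- host names you expect to forward (hypothetical input)
    internal_hosts -- hosts seen in index=_internal
    data_hosts     -- hosts seen in the index your inputs should write to
    """
    result = {}
    for host in forwarders:
        if host not in internal_hosts:
            # Not even the UF's own logs arrive: look at outputs.conf, ports and firewalls.
            result[host] = "connection problem"
        elif host not in data_hosts:
            # Internal logs arrive, so forwarding works; the inputs are missing or wrong.
            result[host] = "inputs problem"
        else:
            result[host] = "ok"
    return result
```

In this thread, every UF would have come out as "connection problem" at first (no UF hosts in `index=_*`), which is why the discussion moved on to outputs.conf and the network.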

Ciao.

Giuseppe

Greetings @JakeK,

It sounds like you're most of the way there!

You have clearly gone through these steps in some way in order to get the deployment clients talking to the deployment server (your Splunk Enterprise instance). That is nice to have, but all the deployment server really does is hand out configurations to the clients and, as you can see, handle the clients phoning home. That traffic goes mostly from the deployment server to the clients over port 8089 (and minimal data comes back from the clients over the same port).

You now need to configure where you want the forwarders' data to go. This will be a one-way trip from the forwarders to Splunk Enterprise over 9997. Here is the general documentation covering this, but I'll provide a quick-and-dirty example that should work with your case.

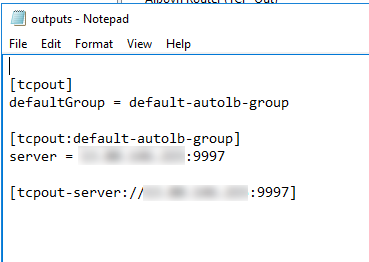

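On a test forwarder, create the file `$SPLUNK_HOME/etc/system/local/outputs.conf` and define the following in it:

```
# Replace with an address of your Splunk Enterprise instance. 10.11.12.321 is only
# an illustration (321 is not a valid IPv4 octet).
[tcpout-server://10.11.12.321:9997]
```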

Here is the full documentation for outputs.conf: https://docs.splunk.com/Documentation/Splunk/latest/Admin/Outputsconf. You should be able to use any resolvable form of your Enterprise instance's address (IP address, host name, or FQDN). I'm not familiar enough with Azure, but I recommend using an internal IP or name in case Azure charges egress for sending the data "externally."
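Before restarting, a quick offline look at outputs.conf can catch typos in the target. The following is a rough Python sketch, not a Splunk tool: it uses `configparser`, which only approximates Splunk's .conf syntax (for example, it rejects settings placed before the first stanza), and `check_target` only validates hosts that look like dotted IPv4 addresses. The illustrative address 10.11.12.321 from the stanza above would be flagged, since 321 is larger than 255.

```python
import configparser
import ipaddress


def tcpout_targets(conf_text):
    """Collect (host, port) pairs from outputs.conf text.

    Reads [tcpout-server://host:port] stanza names and the comma-separated
    'server' setting of [tcpout:<group>] stanzas.
    """
    # interpolation=None keeps values literal; strict=False tolerates a stanza
    # appearing twice (later values win, a simplification of Splunk's merging).
    parser = configparser.ConfigParser(interpolation=None, strict=False)
    parser.read_string(conf_text)
    raw = []
    for section in parser.sections():
        if section.startswith("tcpout-server://"):
            raw.append(section[len("tcpout-server://"):])
        elif section.startswith("tcpout:") and parser.has_option(section, "server"):
            servers = parser.get(section, "server").split(",")
            raw.extend(s.strip() for s in servers if s.strip())
    targets = []
    for item in raw:
        host, sep, port = item.rpartition(":")
        if not sep or not port.isdigit():
            raise ValueError(f"target {item!r} is not in host:port form")
        targets.append((host, int(port)))
    return targets


def check_target(host, port):
    """Return a list of problems found for one host:port target (empty if none)."""
    problems = []
    if not 1 <= port <= 65535:
        problems.append(f"port {port} is out of range")
    # Only dotted numeric hosts are checked as IPv4; names and FQDNs are left to DNS.
    if host.replace(".", "").isdigit():
        try:
            ipaddress.IPv4Address(host)
        except ipaddress.AddressValueError:
            problems.append(f"{host} is not a valid IPv4 address")
    return problems


# Example:
# with open("outputs.conf", encoding="utf-8") as f:
#     for host, port in tcpout_targets(f.read()):
#         print(host, port, check_target(host, port))
```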

Once that's done, restart Splunk with `$SPLUNK_HOME/bin/splunk restart`. The data should start coming in shortly after the forwarder restarts.

P.S. Your warning is unrelated. I'm pretty sure all new Splunk installs show that when searching the internal index.

Jacob

Hi @jacobpevans

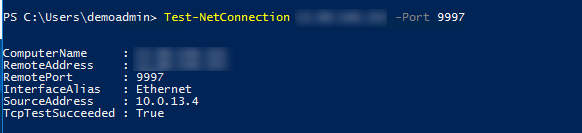

I checked outputs.conf and it seems to be OK: it points to the Splunk server's IP address on port 9997. I have tested both the IP address and the FQDN.

Also, when testing with Test-Connection in PowerShell, the server machine's IP and port are reachable.
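For a check aimed at the TCP ports themselves (8089 and 9997), a plain socket connect from the forwarder host is enough. The Python sketch below is one way to do it; the address in the example comment is a placeholder. A successful connect only shows that something accepts connections on that port, not that the data is being indexed.

```python
import socket


def port_is_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        # create_connection resolves the name and tries each returned address.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused connections, timeouts and DNS failures are all OSError subclasses.
        return False


# Example with a placeholder address for the Splunk Enterprise VM:
# for port in (8089, 9997):
#     print(port, port_is_open("10.0.0.4", port))
```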

Cheers,

Jarmo

That all looks good. I don't think it's the issue, but you only need either [tcpout] and [tcpout:groupname], or [tcpout-server://...]. Can you try it with just the last line in your file?

Also, can you manually check the forwarder's logs with that configuration? The crucial log file will be:

`C:\Program Files\SplunkForwarder\var\log\splunk\splunkd.log`

In particular, search for ERROR and WARN entries, and for TCPOutputProc.
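Rather than reading the whole file by eye, a short Python filter can pull out the lines Jacob mentions. This sketch matches the whole words ERROR or WARN anywhere in a line, plus any line containing the component name. The component name is matched case-insensitively as a precaution, since its exact capitalization in the log may differ from how it is written in this thread; no other assumption about the splunkd.log layout is made.

```python
import re

# Whole-word match, so "WARN" does not also match e.g. "WARNING".
LEVEL_RE = re.compile(r"\b(?:ERROR|WARN)\b")


def interesting_lines(lines, component="TCPOutputProc"):
    """Yield (line_number, text) for lines with ERROR/WARN or mentioning the component."""
    needle = component.lower()
    for number, line in enumerate(lines, start=1):
        if LEVEL_RE.search(line) or needle in line.lower():
            yield number, line.rstrip("\n")


def scan_log(path, component="TCPOutputProc"):
    """Print matching lines from a log file, reading it lazily line by line."""
    # errors="replace" keeps the scan going if the file contains undecodable bytes.
    with open(path, encoding="utf-8", errors="replace") as handle:
        for number, text in interesting_lines(handle, component):
            print(f"{number}: {text}")


# Example on the forwarder:
# scan_log(r"C:\Program Files\SplunkForwarder\var\log\splunk\splunkd.log")
```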

Jacob

Hi @jacobpevans

I installed Wireshark on the server running Splunk Enterprise, and the machine receives data through port 9997 from all the forwarders. But nothing shows up in Splunk Enterprise.

Cheers,

Jarmo

Hi @JakeK,

Are you receiving Splunk internal logs from those UFs (index=_internal) or not?

If you are receiving Splunk internal logs, it means that there's a problem in the inputs configuration:

Which Technical Add-ons are you using on those UFs for ingesting?

In addition, check whether your logs were indexed under a different date:

- 2nd of May for the logs of the 5th of February

- 2nd of April for the logs of the 4th of February

- 2nd of March for the logs of the 3rd of February
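One plausible reading of this list (an interpretation, not something stated in the thread) is a day/month swap in timestamp parsing: 05/02 written day-first means 5 February, but read month-first it becomes 2 May; likewise 04/02 becomes 2 April and 03/02 becomes 2 March. The Python sketch below reproduces that arithmetic; the slash-separated format and the year 2021 are assumptions.

```python
from datetime import datetime


def swapped_reading(stamp, written_as="%d/%m/%Y", parsed_as="%m/%d/%Y"):
    """Return (intended_date, misread_date) for a stamp whose day and month get swapped."""
    intended = datetime.strptime(stamp, written_as)
    # Raises ValueError when the day is above 12, because it cannot be read as a month.
    misread = datetime.strptime(stamp, parsed_as)
    return intended.date(), misread.date()


# Hypothetical year: the thread does not say which year the logs are from.
for stamp in ("05/02/2021", "04/02/2021", "03/02/2021"):
    intended, misread = swapped_reading(stamp)
    print(f"{stamp}: meant {intended:%d %B}, read as {misread:%d %B}")
```

The swap can only happen for days 1 to 12. In Splunk, setting an explicit `TIME_FORMAT` for the sourcetype in props.conf is the usual way to pin down the intended day/month order.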

Ciao.

Giuseppe