
INGEST_EVAL replace changes the visible _raw shown in search results but does not impact license/ingestion?

We have been trying to address a problem between our Splunk deployment and AWS Firehose: Firehose adds roughly 250 bytes of useless JSON wrapper to every log event, which, multiplied across millions or billions of events, increases our storage and license costs enormously.
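To put the wrapper overhead in perspective, here is a quick back-of-the-envelope calculation. The 250-byte figure comes from the post. The daily event counts are hypothetical, and the result is in decimal GB (10^9 bytes). This sketch does not model how Splunk itself meters license usage.

```python
# Back-of-the-envelope cost of a fixed-size wrapper on every event.
# WRAPPER_BYTES is the ~250 bytes from the post; the volumes are hypothetical.
WRAPPER_BYTES = 250


def wrapper_overhead_gb(events_per_day: int, wrapper_bytes: int = WRAPPER_BYTES) -> float:
    """Extra volume per day, in decimal GB (10**9 bytes), added by the wrapper."""
    return events_per_day * wrapper_bytes / 1_000_000_000


for events in (1_000_000, 100_000_000, 1_000_000_000):
    print(f"{events:>13,} events/day -> {wrapper_overhead_gb(events):7.2f} GB/day of wrapper")
```

At a hypothetical billion events per day, the wrapper alone comes to 250 GB/day.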

To address this, we turned to a combination of index-time transforms, including INGEST_EVAL, on our heavy forwarders that:

1. Strip the JSON envelope from the event

2. Unescape all of the JSON quotes in the actual log data, making it parse-able JSON once again

3. Assign the logStream/logGroup values to host/source respectively
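The intended end result of these three steps can be sketched in Python. Note that this swaps the regex-based replace() chain for a real JSON parser (the `json` module), so it illustrates the target output rather than reproducing the INGEST_EVAL logic. The sample record is hypothetical: only the key names (message, logGroup, logStream, timestamp) come from the post, and it assumes `message` carries the original log line as an escaped JSON string.

```python
import json

# Hypothetical Firehose record: the key names come from the post, the values
# are made up, and "message" is assumed to carry the log line as a JSON string.
wrapped = json.dumps({
    "message": json.dumps({"level": "INFO", "msg": "user login", "user": "alice"}),
    "logGroup": "/aws/lambda/example-fn",
    "logStream": "2024/01/01/[$LATEST]abc123",
    "timestamp": 1704067200000,
})


def unwrap(record: str) -> dict:
    """Apply the three steps to one wrapped record using a JSON parser."""
    outer = json.loads(record)
    # Steps 1 and 2: keep only the inner log line. json.loads has already
    # unescaped its quotes, so it is parseable JSON again.
    raw = outer["message"]
    # Step 3: logStream becomes host, logGroup becomes source.
    return {"_raw": raw, "host": outer["logStream"], "source": outer["logGroup"]}


event = unwrap(wrapped)
print(event["_raw"])
print("host:", event["host"], "| source:", event["source"])
print(len(wrapped), "->", len(event["_raw"]), "bytes")
```

A parser avoids hand-written escaping rules, but INGEST_EVAL works on `_raw` as a string, which is presumably why the config in this post relies on replace() instead.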

This is partly working: when we look in Splunk, our events appear with all of the unnecessary fluff removed. For example, this is what our events used to look like (logGroup, logStream, message, and timestamp are all values added by AWS Firehose):

After the processing they now look like this:

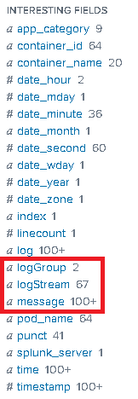

As you can see, the event is much smaller without losing any necessary information. However, to our surprise, this has had no impact on ingestion levels; they appear to be exactly the same. We also noticed that all of these fields, even though they no longer appear in the event view, are still available and indexed in the "interesting fields" area, which seems to explain why our ingestion/storage has not decreased at all:

For reference, these are the props/transforms I'm using to accomplish this:

props.conf:

```
[source::http:AWS2Splunk]
TRANSFORMS-hostname = changehost
TRANSFORMS-sourceinfo = changesource
priority = 100

[aws:firehose:json]
priority = 1000
TRANSFORMS-stripfirehosewrapper = stripfirehosewrapper
```

transforms.conf:

```
[changehost]
DEST_KEY = MetaData:Host
REGEX = \,.logStream...([^\"]+)\"\,\"timestamp
FORMAT = host::$1

[changesource]
DEST_KEY = MetaData:Source
REGEX = \,.logGroup...([^\"]+)\"\,\"logStream
FORMAT = source::$1

[stripfirehosewrapper]
INGEST_EVAL = _raw=replace(replace(replace(replace(replace(_raw,"\{\"message\"\:\"",""),"..\"logGroup\"\:\".*",""),"\\\\\"","\""),"\\\{2}","\\"),"\"stream\":\"\w+\"\,","")
```
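To check what the `stripfirehosewrapper` chain does to a single event, each nested replace() can be emulated with Python's `re.sub`, innermost call first. This is a sketch under two assumptions: that Splunk's eval string escaping reduces each literal to the regex shown in the comments (for example, that `"\\\{2}"` ends up matching two literal backslashes), and that Python's `re` behaves like Splunk's PCRE for these simple patterns. The sample event is hypothetical.

```python
import json
import re

# Hypothetical Firehose-wrapped _raw (values made up; keys follow the post).
wrapped = r'{"message":"{\"stream\":\"stdout\",\"level\":\"INFO\",\"msg\":\"user login\"}","logGroup":"/aws/ecs/example","logStream":"example/app/abc123","timestamp":1704067200000}'

# One (regex, replacement) pair per replace() call, innermost call first.
# The regexes are an assumed reading of the eval string literals.
STEPS = [
    (r'\{"message":"', ""),     # 1. drop the leading {"message":" envelope
    (r'.."logGroup":".*', ""),  # 2. drop ","logGroup":... through end of event
    (r'\\"', '"'),              # 3. unescape \" -> "
    (r'\\{2}', "\\"),           # 4. collapse a doubled backslash to one
    (r'"stream":"\w+",', ""),   # 5. drop a "stream":"<word>", key
]


def strip_wrapper(raw: str) -> str:
    for pattern, repl in STEPS:
        # A function replacement stops re.sub from treating backslashes in
        # repl as escape sequences; it is used immediately, inside this loop.
        raw = re.sub(pattern, lambda _m: repl, raw)
    return raw


stripped = strip_wrapper(wrapped)
print(stripped)
print(json.loads(stripped))
print(len(wrapped), "->", len(stripped), "bytes")
```

With this sample, the chain leaves only `{"level":"INFO","msg":"user login"}`, which parses as JSON again. This only shows that the visible `_raw` shrinks; it says nothing about how Splunk meters license.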

Does anyone have any thoughts on what we're doing wrong? Could this be a conflict caused by running a DEST_KEY transform before INGEST_EVAL? Do the two not play nicely together?

UPDATE:

I switched from DEST_KEY to using INGEST_EVAL exclusively, but the issue seems to be the same:

```
[changehost]
#DEST_KEY = MetaData:Host
#REGEX = \,.logStream...([^\"]+)\"\,\"timestamp
#FORMAT = host::$1
INGEST_EVAL = host=replace(replace(_raw,".*\"\,\"logStream\"\:\"",""),"\"\,\"timestamp\".*","")

[changesource]
#DEST_KEY = MetaData:Source
#REGEX = \,.logGroup...([^\"]+)\"\,\"logStream
#FORMAT = source::$1
INGEST_EVAL = source=replace(replace(_raw,".*logGroup\"\:\"",""),"\"\,\"logStream\"\:.*","")
```
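The two INGEST_EVAL expressions in the update can be emulated the same way, assuming each eval string reduces to the regex shown in the comments. The sample event is hypothetical. One thing the sketch makes visible: replace() returns its input unchanged when nothing matches, so in this emulation, running the extraction on an event whose wrapper is already gone yields the whole event as host or source. Whether that happens in Splunk depends on the order in which the transforms run.

```python
import re

# Hypothetical wrapped _raw, same shape as the Firehose events in the post.
wrapped = r'{"message":"{\"level\":\"INFO\"}","logGroup":"/aws/ecs/example","logStream":"example/app/abc123","timestamp":1704067200000}'


def extract_host(raw: str) -> str:
    # Mirrors changehost: drop everything through ","logStream":" and then
    # everything from ","timestamp" onward.
    raw = re.sub(r'.*","logStream":"', "", raw)
    return re.sub(r'","timestamp".*', "", raw)


def extract_source(raw: str) -> str:
    # Mirrors changesource: drop everything through logGroup":" and then
    # everything from ","logStream": onward.
    raw = re.sub(r'.*logGroup":"', "", raw)
    return re.sub(r'","logStream":.*', "", raw)


print(extract_host(wrapped))    # example/app/abc123
print(extract_source(wrapped))  # /aws/ecs/example

# Already-unwrapped event: neither pattern matches, so it comes back unchanged.
unwrapped = '{"level":"INFO"}'
print(extract_host(unwrapped) == unwrapped)
```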


Hi, is the problem that these extractions don't work, or that you're still smashing the license?

I'm about to do something similar via a HF, so do let me know how you get on. I'll probably post back here with results; it's my first time using INGEST_EVAL too!


I may have found the solution on my own already: it seems this may be caused by INDEXED_EXTRACTIONS = JSON being defined in another props.conf. Removing that setting seems to fix the issue so far.
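For context, INDEXED_EXTRACTIONS = JSON tells Splunk to extract JSON keys as indexed fields at ingest time. As a rough, hypothetical illustration (not a model of Splunk's actual index files or license metering), flattening a wrapped record into `key::value` terms, using the `parent.child` and `array{}` naming Splunk uses for JSON fields, shows logGroup, logStream, message, and timestamp each becoming a field of its own. That would be consistent with those fields still appearing in the field list after `_raw` was stripped.

```python
import json


def indexed_terms(event_json: str) -> list[str]:
    """Flatten a JSON event into key::value strings (illustration only)."""
    terms: list[str] = []

    def walk(prefix: str, value) -> None:
        if isinstance(value, dict):
            for key, child in value.items():
                walk(f"{prefix}.{key}" if prefix else key, child)
        elif isinstance(value, list):
            # Array elements share one field name with a {} suffix.
            for item in value:
                walk(f"{prefix}{{}}", item)
        else:
            terms.append(f"{prefix}::{value}")

    walk("", json.loads(event_json))
    return terms


# Hypothetical wrapped record with the Firehose keys named in the post.
wrapped = json.dumps({
    "message": '{"level":"INFO"}',
    "logGroup": "/aws/ecs/example",
    "logStream": "example/app/abc123",
    "timestamp": 1704067200000,
})

for term in indexed_terms(wrapped):
    print(term)
```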

UPDATE: It turns out this did NOT fix the issue; it actually made everything else worse. For some reason, we started dropping about 95% of incoming events.