Are you a member of the Splunk Community?

- Find Answers

- :

- Apps & Add-ons

- :

- All Apps and Add-ons

- :

- how to read the classification result(confusion ma...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark Topic

- Subscribe to Topic

- Mute Topic

- Printer Friendly Page

- Mark as New

- Bookmark Message

- Subscribe to Message

- Mute Message

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

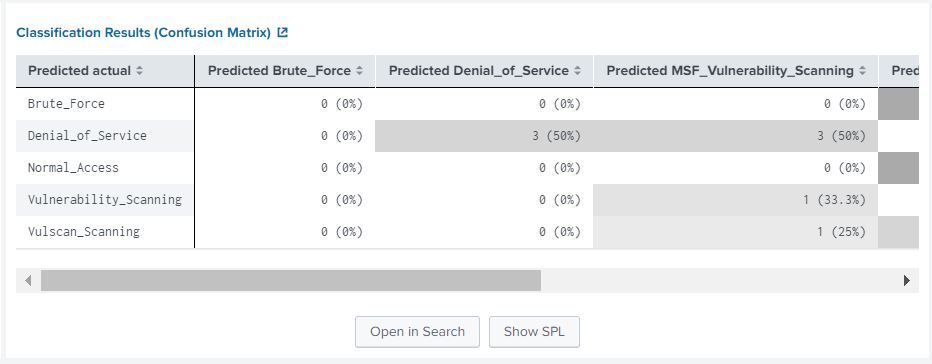

How to read the classification result (confusion matrix) in the Machine Learning Toolkit?

Hello,

I am a student from SUSS currently working on my capstone project, researching the Machine Learning Toolkit in Splunk.

I have a few questions regarding the classification result (confusion matrix).

The dataset used for the classification experiment has 6 classes in total.

After a number of experiments with different algorithms in Predict Categorical Fields, the classification result (confusion matrix) shows a different number of classes with different scores.

Example: it shows only 2 classes (a 2 by 2 matrix) with a score of 1 for precision, recall, accuracy, and F1.

But when I open the result in search, it shows that all classes have been predicted correctly.

Q1) Why do the classification result (confusion matrix) and the one opened in search show 2 different results?

Q2) Why is the total number of cases shown in the classification result (confusion matrix) different for every experiment? None of them add up to the total number of events.

Example: 136 events in total were used for the experiment, but the cases in none of the classification results (confusion matrices) add up to 136.

Q3) Why does every classification result (confusion matrix) show a different total number of cases?

Thank you.

Hi,

Please refer to the following links for the results of my past experiments.

Result overview: https://www.dropbox.com/s/ejir0nsdyae5bqf/result%20overview.JPG?dl=0

Confusion matrix result: https://www.dropbox.com/s/4sa45ouou5yuggo/confusion%20matrix%20result.JPG?dl=0

Confusion matrix result opened in search: https://www.dropbox.com/s/he5rqh9oz3ekpy2/confusion%20matrix%20open%20search%20result.JPG?dl=0

Thank you

Hi. There are 3 confusion matrices in the MLTK:

| confusionmatrix("DiskFailure","predicted(DiskFailure)")

| classificationreport("DiskFailure","predicted(DiskFailure)")

| score confusion_matrix DiskFailure against predicted(DiskFailure)

https://docs.splunk.com/Documentation/MLApp/4.2.0/User/Scorecommand#Confusion_matrix

The workflow for the Assistant is preconfigured. You can customize the results in other searches, depending on what you are looking for (by clicking "Open in Search", for example).
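As a reference point for reading any of these outputs, a confusion matrix is just a table of counts over (actual, predicted) pairs. The sketch below is plain Python, not MLTK code, and the function name and sample labels are hypothetical. It illustrates two general properties: only classes that occur in the rows being scored appear in the matrix, and the cells add up to the number of rows that were scored, so a search that scores only part of the data can show fewer classes and a smaller total.

```python
from collections import Counter


def confusion_matrix(actual, predicted):
    """Return (labels, matrix); matrix[i][j] counts rows whose actual
    class is labels[i] and whose predicted class is labels[j]."""
    # Only labels that actually occur in the scored rows are included.
    labels = sorted(set(actual) | set(predicted))
    counts = Counter(zip(actual, predicted))
    matrix = [[counts[(a, p)] for p in labels] for a in labels]
    return labels, matrix


# Hypothetical labels, not taken from the dataset in this thread.
actual = ["ok", "ok", "fail", "fail", "ok"]
predicted = ["ok", "ok", "fail", "fail", "ok"]

labels, matrix = confusion_matrix(actual, predicted)
total = sum(sum(row) for row in matrix)

print(labels)
print(matrix)
print(total)
```

Comparing `total` with the number of events you expected to be scored is a quick way to see whether the matrix covers the whole dataset or only a subset.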

Hi,

The following links show the results from my past experiments:

https://www.dropbox.com/s/ejir0nsdyae5bqf/result%20overview.JPG?dl=0 for the result overview.

https://www.dropbox.com/s/4sa45ouou5yuggo/confusion%20matrix%20result.JPG?dl=0 for the confusion matrix result.

https://www.dropbox.com/s/he5rqh9oz3ekpy2/confusion%20matrix%20open%20search%20result.JPG?dl=0 for the confusion matrix result opened in search.

Thank you

I wrote something related a while ago:

https://discoveredintelligence.ca/predict-spam-using-classification/

I used SPL to add row_total and col_total so that all the data points can be counted easily:

| `confusionmatrix(...,"predicted..")`

| addtotals labelfield="Predicted actual" | rename Total as "row_total"

| addcoltotals label="col_total" labelfield="Predicted actual"
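The row and column totals that the SPL above adds can also be checked by hand. Here is a minimal Python sketch of the same arithmetic, assuming the matrix has actual classes as rows and predicted classes as columns; the function name and the counts are hypothetical, not taken from this thread.

```python
def matrix_totals(matrix):
    """Return (row_totals, col_totals, grand_total) for a matrix of counts."""
    row_totals = [sum(row) for row in matrix]
    # zip(*matrix) transposes the matrix so each tuple is one column.
    col_totals = [sum(col) for col in zip(*matrix)]
    grand_total = sum(row_totals)
    return row_totals, col_totals, grand_total


# Hypothetical 2-class matrix: rows = actual, columns = predicted.
matrix = [
    [50, 2],
    [3, 45],
]

row_totals, col_totals, grand_total = matrix_totals(matrix)
print(row_totals)
print(col_totals)
print(grand_total)
```

Each row total is the number of scored events that truly belong to that class, each column total is the number predicted as that class, and the grand total is the number of events the matrix was built from.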

Before answering any of the questions: what do the categorical fields look like? Are there any spaces, trailing characters, or formatting differences that may be causing an issue?
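To illustrate why formatting matters, here is a small Python sketch (not MLTK code) in which stray whitespace and case differences turn two intended classes into five distinct label values. The sample values and the `normalize_label` helper are hypothetical, and lowercasing assumes that case differences are not meaningful in your data.

```python
def normalize_label(value):
    """Collapse runs of whitespace, strip the ends, and lowercase."""
    return " ".join(value.split()).lower()


# Hypothetical raw values for a categorical field.
raw = ["Normal", "Normal ", " normal", "Attack", "attack\t"]

distinct_raw = sorted(set(raw))
distinct_clean = sorted({normalize_label(v) for v in raw})

print(len(distinct_raw))
print(distinct_clean)
```

If the number of distinct values drops after cleaning, the extra classes were formatting artifacts rather than real categories.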

The file was converted to CSV with the following header:

"TCP_DestinationPort","TCP_StreamIndex","TCP_SequenceNumber","TCP_AcknowledgmentNumber","TCP_Header_Length","TCP_Flags_Acknowledgment","TCP_Flags_Push","TCP_Flags_Reset","TCP_Flags_Synchronization","TCP_Flags_Finish","TCP_Window_Size_Value","TCP_Analysis_Acknowledgment_RoundTripTime","TCP_Segment_Length","TCP_Options_TimeStamp_TSvalue","TCP_Options_Timestamp_TSechoreply","TCP_Analysis_Initial_RoundTripTime","TCP_Time_Relative","TCP_Time_Delta","Category"

I have tested removing all underscores (_), and the classification result (confusion matrix) remains the same.

The matrix shown is still different for every test.

Thank you

Here is an image of the classification result (confusion matrix); it shows 1 for precision, recall, accuracy, and F1.
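For context on what a score of 1 across the board means, the sketch below computes the four scores for the two-class case using the standard textbook definitions. It is plain Python with hypothetical counts and a hypothetical function name, and it is not necessarily how MLTK aggregates scores when there are more than two classes.

```python
def binary_scores(tp, fp, fn, tn):
    """Return (precision, recall, accuracy, f1) from 2x2 matrix counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return precision, recall, accuracy, f1


# Hypothetical counts with no misclassified events (fp = fn = 0).
perfect = binary_scores(tp=30, fp=0, fn=0, tn=38)
print(perfect)
```

All four scores equal 1 exactly when both off-diagonal cells of the matrix are zero, that is, when every event that was scored was classified correctly. It says nothing about events or classes that were not part of the scored set.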