Re: TrackMe App: how to adjust "max allowed laggin...


Hello,

Do you know if there is a way to automatically set a data source's maximum allowed lag depending on its priority? For example, 600 seconds for 'high', 3600 for 'medium' and 86400 for 'low'.

Thanks for the help.


Hi @woodentree

This actually looks like a good idea for an enhancement request. In the future, feel free to open an issue on GitHub:

https://github.com/guilhemmarchand/trackme/issues

To answer your question: TrackMe stores everything in KV store-based lookups, so this is very straightforward. You would create a report in TrackMe and schedule it to run at the interval of your choice (the scheduling cost will be close to zero). For example, for data sources:

| inputlookup trackme_data_source_monitoring | eval keyid=_key

| eval data_max_lag_allowed=case(priority="low", 86400, priority="medium", 3600, priority="high", 600)

| outputlookup append=t key_field=keyid trackme_data_source_monitoring

| stats c

Schedule this as a new report, say "TrackMe - custom lagging policy maintenance", and this will update the lagging value based on your rule.
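The following Python model shows what the scheduled report does to each record. It pictures the KV store collection as a dict of records keyed by `_key`. The names `LAG_POLICY`, `policy_lag` and `apply_policy` are hypothetical and not part of TrackMe or Splunk. This is a sketch of the logic, not of how Splunk executes the search.

```python
from typing import Dict, Optional

# Hypothetical model of the case() mapping in the search above:
# priority -> maximum allowed lag, in seconds.
LAG_POLICY: Dict[str, int] = {"high": 600, "medium": 3600, "low": 86400}


def policy_lag(priority: Optional[str]) -> Optional[int]:
    """Return the lag for a priority, or None when no branch matches.

    SPL case() also returns null when none of its conditions is true.
    """
    if priority is None:
        return None
    return LAG_POLICY.get(priority)


def apply_policy(collection: Dict[str, dict]) -> Dict[str, dict]:
    """Update data_max_lag_allowed on every record, keyed by _key.

    This mirrors the idea of `outputlookup append=t key_field=keyid`:
    each existing record is updated in place and none is removed.
    """
    for record in collection.values():
        record["data_max_lag_allowed"] = policy_lag(record.get("priority"))
    return collection


records = {
    "key-1": {"priority": "high"},
    "key-2": {"priority": "low"},
    "key-3": {"priority": "critical"},  # hypothetical unmatched value
}
apply_policy(records)
```

Look at the third record. As with SPL `case()`, a priority that matches no branch produces no value. If other priority values can occur, adding a catch-all pair such as `true(), 3600` as the last branch of `case()` guarantees that every entity gets a value.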

Note that with this approach, any change made in the UI to the maximum lag value would be overridden by your rule.

The following search maintains this for all data sources and data hosts in one operation:

| inputlookup trackme_data_source_monitoring | eval keyid=_key

| eval data_max_lag_allowed=case(priority="low", 86400, priority="medium", 3600, priority="high", 600)

| outputlookup append=t key_field=keyid trackme_data_source_monitoring

| stats c

| inputlookup append=t trackme_host_monitoring | eval keyid=_key | where isnull(c) | fields - c

| eval data_max_lag_allowed=case(priority="low", 86400, priority="medium", 3600, priority="high", 600)

| outputlookup append=t key_field=keyid trackme_host_monitoring

| stats c

I will certainly look at extending the lagging class feature so that a class can be created based on the priority.

Let me know if you have any questions.

Guilhem


Hi @woodentree

TrackMe 1.2.27 was published today and allows you to create built-in lagging classes based on the priority for data sources:

https://trackme.readthedocs.io/en/latest/userguide.html#lagging-classes

Let me know if you have any questions.

Kind regards,

Guilhem


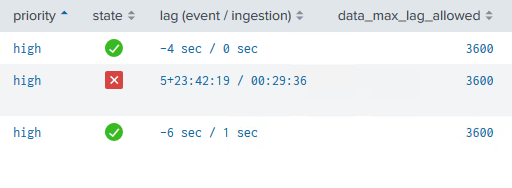

Hi @guilmxm

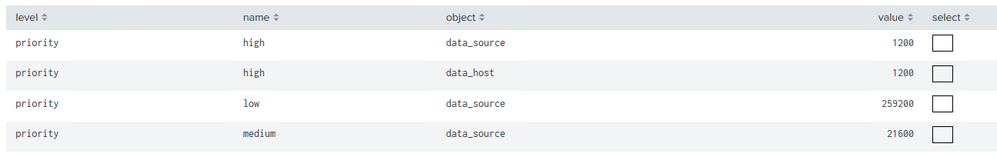

We’ve set up lagging class definitions based on priorities, but it looks like we have an issue with them. We configured 4 lagging classes in total: 3 for data sources and 1 for data hosts.

It works great for ‘medium’ and ‘low’ priorities, but not for ‘high’: the value of 'data_max_lag_allowed' does not change (for data sources as well as for data hosts).

We’ve tried recreating the ‘high’ lagging classes and changing their order. We’ve checked their spelling as well, but nothing seems to help.

Do you have any idea what the root cause could be?

Thanks for the help.


Hi @woodentree

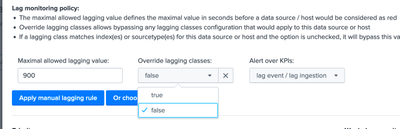

This should work fine for high priority too. Most likely, the entities you are looking at (those with high priority) have the override lagging class field set to true:

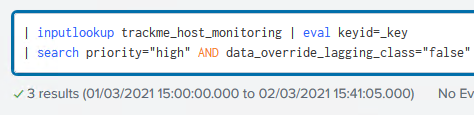

You can also check this with the following searches:

For data sources:

| inputlookup trackme_data_source_monitoring | eval keyid=_key

| search priority="high" AND data_override_lagging_class="true"

And for data hosts:

| inputlookup trackme_host_monitoring | eval keyid=_key

| search priority="high" AND data_override_lagging_class="true"

You can bulk change this as follows:

For data sources:

| inputlookup trackme_data_source_monitoring | eval keyid=_key

| search priority="high" AND data_override_lagging_class="true"

| eval data_override_lagging_class="false"

| outputlookup append=t key_field=keyid trackme_data_source_monitoring

And for data hosts:

| inputlookup trackme_host_monitoring | eval keyid=_key

| search priority="high" AND data_override_lagging_class="true"

| eval data_override_lagging_class="false"

| outputlookup append=t key_field=keyid trackme_host_monitoring

Of course, if some entities should override lagging classes, you need to either exclude them from the commands above or handle them in the UI.

Then run the trackers (or wait a bit), and this should be applied.
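The bulk change above writes back only the filtered records. It therefore matters whether the write updates matching keys or replaces the whole collection. As we understand the Splunk `outputlookup` documentation, `append=t` with `key_field` updates the records whose key matches. The default `append=false` instead writes the search results as the new contents of the collection.

The Python sketch below models both outcomes. It pictures a KV store collection as a dict keyed by `_key`. All names are hypothetical.

```python
from typing import Callable, Dict

Record = Dict[str, str]
Collection = Dict[str, Record]  # hypothetical model: _key -> record


def select_high_overrides(collection: Collection) -> Collection:
    """Copies of high-priority records whose override flag is "true"."""
    return {
        key: dict(rec)
        for key, rec in collection.items()
        if rec.get("priority") == "high"
        and rec.get("data_override_lagging_class") == "true"
    }


def upsert(collection: Collection, rows: Collection) -> Collection:
    """Update records whose key matches and keep every other record."""
    merged = {key: dict(rec) for key, rec in collection.items()}
    merged.update(rows)
    return merged


def overwrite(collection: Collection, rows: Collection) -> Collection:
    """Replace the whole collection with the written rows only."""
    return {key: dict(rec) for key, rec in rows.items()}


def bulk_reset_override(
    collection: Collection,
    write: Callable[[Collection, Collection], Collection] = upsert,
) -> Collection:
    """Set the override flag to "false" on selected records, then write them."""
    rows = select_high_overrides(collection)
    for rec in rows.values():
        rec["data_override_lagging_class"] = "false"
    return write(collection, rows)


entities: Collection = {
    "k1": {"priority": "high", "data_override_lagging_class": "true"},
    "k2": {"priority": "low", "data_override_lagging_class": "false"},
}
after_upsert = bulk_reset_override(entities)
after_overwrite = bulk_reset_override(entities, write=overwrite)
```

With `overwrite`, only `k1` survives. This is why writing a filtered search back without `append=t` risks dropping every entity the search did not select.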

Let me know

Guilhem


Hi @guilmxm,

Thanks for the help.

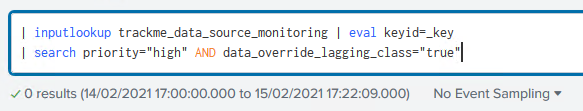

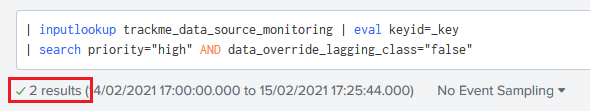

Actually, the “override lagging classes” option was the first thing we checked, and it’s set to “false” for all data sources and data hosts. Here’s the search you provided for data sources:

And the same for 'false':

Regards


@woodentree

Hmm, it's actually weird: there should have been a value. Do you remember which version you're on?

This might have been fixed later on. The macro defines a default value in case the field is null.

Can you run:

| inputlookup trackme_data_source_monitoring | eval keyid=_key

| eval data_override_lagging_class=if(isnull(data_override_lagging_class), "false", data_override_lagging_class)

| outputlookup trackme_data_source_monitoring key_field=keyid

Then run the tracker and verify. This fixes any null value by setting it to false (the default).

This is safe to do: only this field gets updated, and entities that already have a value are left unchanged.
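The `if(isnull(...))` expression fills only values that are missing and keeps existing ones. That is why the search is safe to run over the whole collection. Below is a minimal Python model of that rule. It uses a hypothetical `default_override` helper and pictures records as dicts; it is not TrackMe code.

```python
from typing import Dict, List, Optional

Row = Dict[str, Optional[str]]


def default_override(records: List[Row]) -> List[Row]:
    """Fill a missing or null override flag with "false"; keep other values.

    Models: eval data_override_lagging_class=if(isnull(data_override_lagging_class), "false", data_override_lagging_class)
    """
    for rec in records:
        if rec.get("data_override_lagging_class") is None:
            rec["data_override_lagging_class"] = "false"
    return records


rows = default_override([
    {"data_override_lagging_class": None},    # null value
    {},                                       # field absent
    {"data_override_lagging_class": "true"},  # existing value kept
])
```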

If this fixes the issue, you can apply the same to data hosts.

I will also check how this is handled in the TrackMe logic 😉


Hi @guilmxm,

It didn’t fly 😞

We ran the provided search, but it didn’t change anything. We also tried to rewrite the “data_override_lagging_class” value from the Lookup Editor app, but it didn’t help.

The TrackMe version we use in our test environment is 1.2.28.

Regards.


Hi @woodentree

OK, I will look into this in depth and then share some additional things to verify.

First, could you please upgrade the app to its latest version? There have been many bug fixes since then, and since you are testing, upgrading is especially worthwhile 😉

Then please check whether the behavior persists after the update and let me know.

Thank you


Hi @guilmxm ,

Yes, sure. I’ll ask our Tool team to update the app to its latest version.

Thanks for the help!


Hi @guilmxm ,

I asked our Tool Team to update TrackMe, and we performed a few tests.

Good news: there is no longer an issue with “unapplied” lagging classes for data sources.

Unfortunately, there is some bad news as well:

1. We still face the same issue with hosts.

2. It looks like the loading time is waaay higher than before the update.

We also discovered one more thing we’d like to ask you about. We use logical groups a lot, and we’d like to be able to create lagging classes based on them. My colleague from the Tool Team just created a feature request for it: https://github.com/guilhemmarchand/trackme/issues/272

P.S. Feel free to reply in French if you prefer 🙂

Regards.


Hello @woodentree!

Ha ha, my keyboard doesn't have any of the French characters I'd need, so I would go mad pretty quickly, lol.

So, to answer: great that the data sources issue is fixed, olé!

Then, regarding:

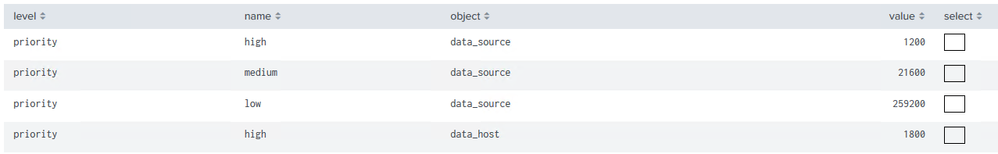

- Data hosts

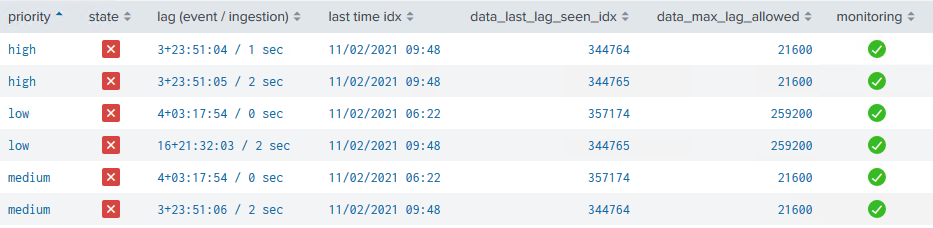

I think this is actually working. Data hosts just behave a little differently: their maximum allowed lag cannot be lower than the highest value among all the sourcetypes identified for the host.

The reason is specific to data hosts. They can be monitored per sourcetype or globally (the global alerting mode), so I had to come up with a compromise.

The proof in images:

Because the default is 3600 seconds, going below this value would require the sourcetypes themselves to go below it. Data hosts take whichever value is the largest.
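The rule described above can be modeled in a few lines of Python. This is a sketch of the behavior as explained in this thread, not TrackMe's implementation. `effective_host_lag` is a hypothetical name, and the sketch assumes the host's own lagging class value is one of the candidates in the maximum.

```python
from typing import Iterable


def effective_host_lag(host_class_lag: int, sourcetype_lags: Iterable[int]) -> int:
    """Hypothetical model: a data host's effective maximum lag is the
    largest of its own lagging class value and the maximum lag of each
    sourcetype identified for the host."""
    return max([host_class_lag, *sourcetype_lags])


# A "high" host class of 600 s, with sourcetypes left at the 3600 s default.
high_host = effective_host_lag(600, [3600, 3600])
# Sourcetypes lowered as well: the host can now go down to 600 s.
tuned_host = effective_host_lag(600, [300, 600])
```

With sourcetypes at the 3600-second default, a 600-second class for high-priority hosts has no visible effect. This matches what was observed for data hosts earlier in the thread.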

Let me know what you think; I know this part is a bit tricky.

- Loading performance:

Got it. I plan to work on this and review the UI globally, which will take time. Still, there shouldn't have been such an increase, so I will investigate!

Received for the GitHub issue, it's in the backlog!

Cheers

Guilhem


Hi @guilmxm ,

OK, got it for data hosts. Yep, it's a bit tricky, but it makes sense after the explanation.

Thanks for the help and for your engagement!

Regards.
