- Kinesis Firehose - InvalidEncodingException - Cant...


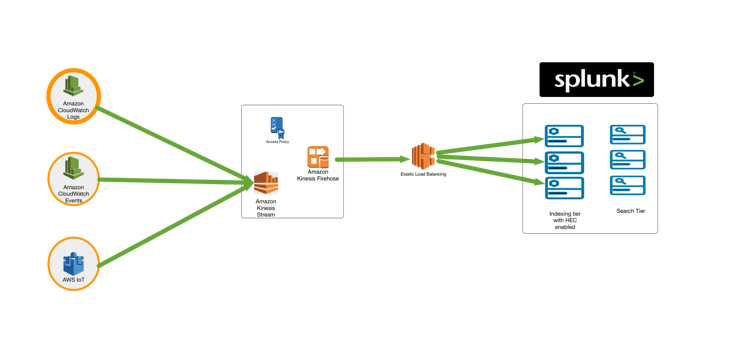

Wanted to see if anyone else has been able to get CloudWatch logs into Splunk via Kinesis and Kinesis Firehose.

We currently stream all our logs from CloudWatch to Splunk via Kinesis and the Kinesis Input in the AWS Technical Add-on. Over time this has become incredibly resource hungry, and Splunk have suggested we move to the new Kinesis Firehose integration.

Unfortunately we have not yet been able to configure Firehose with a Kinesis Stream as the input.

The current architecture is a CloudWatch Log Group that streams to a Kinesis Stream using a Subscription Filter. That Kinesis Stream is then configured as the input for the Firehose Delivery Stream. No logs ever get to Splunk, and the Splunk logs in CloudWatch are reporting InvalidEncodingException.

InvalidEncodingException, The data could not be decoded as UTF-8.

Is anyone else seeing something similar, or has anyone been able to fix this? Does it even work? The image in this article https://www.splunk.com/blog/2017/11/29/ready-set-stream-with-the-kinesis-firehose-and-splunk-integra... suggests it should, but I have been unable to get things flowing.

Thanks

Terry


Hi Terry,

Try preprocessing your logs with an AWS Lambda function to decompress and decode the data before sending it to Splunk. See https://www.splunk.com/blog/2016/11/29/announcing-new-aws-lambda-blueprints-for-splunk.html for information on the new Lambda blueprints that were shipped with the Kinesis Firehose integration. The CloudWatch logs to Splunk blueprint can be found here: https://console.aws.amazon.com/lambda/home?#/create/configure-triggers?bp=splunk-cloudwatch-logs-pro...
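For anyone who wants to see the shape of such a function before opening the blueprint, here is a minimal sketch of a Firehose data-transformation Lambda in Python. It is not the blueprint's code. It assumes each incoming record is a gzip-compressed CloudWatch Logs subscription payload and that the delivery stream targets the raw HEC endpoint, so each log message is written out as one newline-terminated line. The helper name `transform_record` is made up for illustration.

```python
import base64
import gzip
import json
import zlib


def transform_record(record):
    """Turn one gzip-compressed CloudWatch Logs record into plain UTF-8 text."""
    record_id = record["recordId"]
    try:
        # Firehose hands the record bytes to Lambda base64-encoded.
        raw = base64.b64decode(record["data"])
        payload = json.loads(gzip.decompress(raw))
    except (OSError, EOFError, zlib.error, ValueError):
        # Not gzip, truncated, or not JSON: let Firehose treat it as failed.
        return {"recordId": record_id, "result": "ProcessingFailed",
                "data": record["data"]}

    # CloudWatch Logs also sends CONTROL_MESSAGE records, which carry no logs.
    if payload.get("messageType") != "DATA_MESSAGE":
        return {"recordId": record_id, "result": "Dropped",
                "data": record["data"]}

    # Assumption: one raw line per log event, suitable for the raw HEC endpoint.
    lines = "".join(event["message"] + "\n" for event in payload["logEvents"])
    encoded = base64.b64encode(lines.encode("utf-8")).decode("ascii")
    return {"recordId": record_id, "result": "Ok", "data": encoded}


def lambda_handler(event, context):
    # Firehose expects exactly one output record per input recordId.
    return {"records": [transform_record(r) for r in event["records"]]}
```

The function is attached to the Firehose delivery stream as its data transformation, so the decompression happens between the Kinesis Stream and Splunk.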


At the time of posting the question I could not find this article.

Now that I have found it, it clearly states that the compressed data from CW->Kinesis needs to be decompressed.

Data coming from CloudWatch Logs is compressed with gzip compression. To work with this compression, we need to configure a Lambda-based data transformation in Kinesis Data Firehose to decompress the data and deposit it back into the stream. Firehose then delivers the raw logs to the Splunk HTTP Event Collector (HEC).
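That also explains the InvalidEncodingException. A rough illustration in Python, using a made-up payload in the shape CloudWatch Logs sends to a subscription destination (the log group, stream, and message values are hypothetical): a gzip stream starts with the bytes 0x1f 0x8b, and 0x8b cannot begin a UTF-8 character, so the compressed record fails UTF-8 decoding while the decompressed one passes.

```python
import gzip
import json

# Hypothetical sample shaped like a CloudWatch Logs subscription payload.
envelope = {
    "messageType": "DATA_MESSAGE",
    "logGroup": "example-log-group",
    "logStream": "example-log-stream",
    "logEvents": [{"id": "1", "timestamp": 0, "message": "example log line"}],
}
compressed = gzip.compress(json.dumps(envelope).encode("utf-8"))


def is_valid_utf8(data: bytes) -> bool:
    """Return True if the bytes decode cleanly as UTF-8."""
    try:
        data.decode("utf-8")
        return True
    except UnicodeDecodeError:
        return False


print(compressed[:2].hex())                         # gzip magic number: 1f8b
print(is_valid_utf8(compressed))                    # False
print(is_valid_utf8(gzip.decompress(compressed)))   # True
```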
