Splunk Alert Creation for Threshold Monitoring?

Hi Friends,

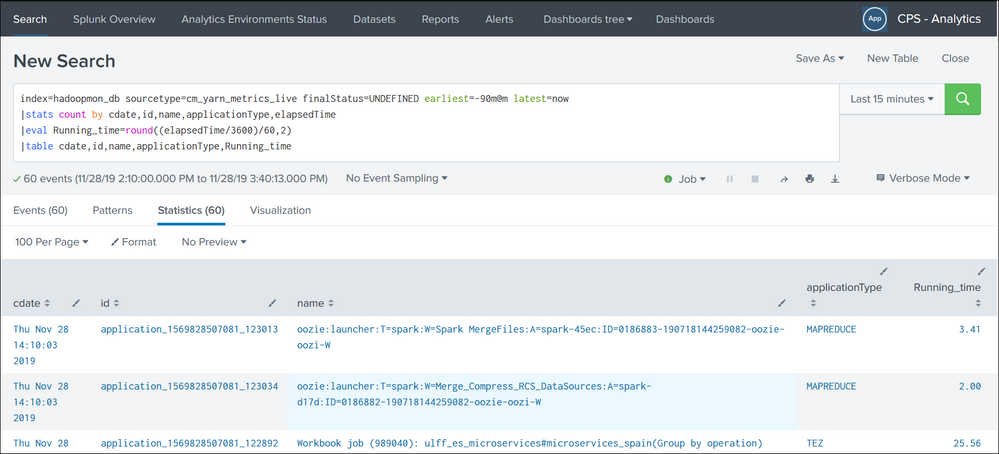

I want to create an alert for my Hadoop job monitoring that sends an email to the team highlighting only the jobs that have been running for more than 90 minutes, so that action can be taken. I am attaching a screenshot of my query. Please help me modify and fine-tune the query, if needed, before I proceed to set up the alert.

index=hadoopmon_db sourcetype=cm_yarn_metrics_live finalStatus=UNDEFINED elapsedTime > 5400 earliest=-90m@m latest=now

Then set the alert to trigger if there is more than one result.

@bapun18

Below are my suggestions for your scenario. You want the jobs that have been running for more than 90 minutes, so: 1) you can filter the events in the search itself, and 2) you should throttle the alert on the id field for a specific period. This helps prevent alert flooding for a single id.
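As a rough illustration of the two points above, here is a minimal sketch of a search that does the filtering itself and returns one row per job. It assumes that `elapsedTime` is reported in seconds (so 5400 corresponds to 90 minutes) and that each job is identified by a field named `id`; the `elapsedMinutes` field is a hypothetical name added only for readability in the alert email. Please check these assumptions against your own events before using it.

```
index=hadoopmon_db sourcetype=cm_yarn_metrics_live finalStatus=UNDEFINED elapsedTime>5400 earliest=-90m@m latest=now
| dedup id
| eval elapsedMinutes=round(elapsedTime/60, 0)
| table id, elapsedMinutes, finalStatus
```

Because `dedup id` keeps only the most recent event for each job, every long-running job appears once in the results. If the alert is set to trigger for each result and throttled on the `id` field (see the throttling link below), the same job should not generate repeated emails within the suppression period.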

Check the links below for alert configuration:

https://docs.splunk.com/Documentation/Splunk/8.0.0/Alert/Aboutalerts

https://docs.splunk.com/Documentation/Splunk/8.0.0/Alert/ThrottleAlerts

Alert scheduling tips: https://docs.splunk.com/Documentation/Splunk/8.0.0/Alert/AlertSchedulingBestPractices