
Alert triggers action on only some events and not all falling in the timeframe.

Hi,

I'm using a Splunk alert on a cron schedule of every 5 minutes to trigger two actions on each event:

1. writing to a lookup, and

2. ServiceNow incident generation using the Splunk App for ServiceNow.

The issue is that no action is taken on more than half of the events.

For example, I have an action to write each event to a lookup, and 5 events fall in a specific time frame. When I check my lookup, I find only 2 or 3 of them written, not all, and the same happens with the ticketing action.

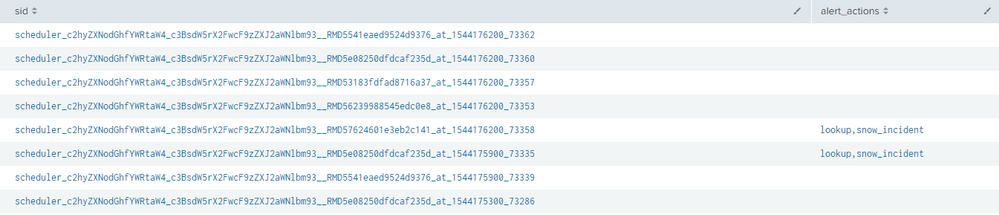

When I check the scheduler logs for the same alert, alert_actions shows blank for those runs. Is it a bug?


Hi,

Because this is an alert, a trigger condition applies to the search, and the default trigger condition is "Number of Results is greater than 0". When the scheduled search runs and returns no events, the alert actions do not fire and alert_actions is blank in the scheduler log, even though the scheduled search still ran every 5 minutes.

So refine your query to check result_count as well and compare it with your trigger condition. If the result count satisfies the trigger condition, then more troubleshooting is required to find out why the alert action didn't trigger.


Hi harsmarvania57,

I checked, and the blank actions were where result_count was 0. The next problem is that even when result_count is 10, the action actually takes place on only a few of the events, not all of them.


Can you please let us know what query you are running to check this? The query below gives you the status for each sid. If you are running a search head cluster and want to know what the different status values indicate, read this answer: https://answers.splunk.com/answers/449024/search-head-cluster-scheduled-searches-what-are-th.html

index=_internal host=<SEARCH HEAD> sourcetype=scheduler savedsearch_name=* | table sid, status, alert_actions

Based on this you should be able to figure it out.


The exact issue: we are running an alert on a 5-minute cron schedule that reads data for the last 5 minutes, with two actions (writing to a lookup file and ServiceNow ticketing). We are facing three issues:

1. When I look at the list of events for a particular trigger I see 10 events, but when I look in the lookup or ServiceNow, not all of those events are there; some of them are always missed.

2. Sometimes when I look at the triggered results and then re-run the search for the same time range, there are many other events too.

3. Sometimes the alert does not trigger at all even when there are events.


Please check whether your data is being delayed on its way from the source. What I mean is that an event might have happened 2 minutes ago, but because it was not indexed in time, your alert search did not find it: at that moment it was not in the index yet. When you re-run the alert search later, you see more events because by then they have been indexed. Splunk takes _time from the event itself. Let's say an event happened at 10:00 AM and was written to the log file with that timestamp, but due to some issue on the forwarder or the network it didn't reach the indexer; your alert will not find it in the last 5 minutes of data. Then, say at 10:06, the event makes it to the indexer, where it is backfilled with _time 10:00 AM. Hope you get what I am trying to say. Check the difference between _time and _indextime to see if there is any lag; if there is, that is the issue. If both ServiceNow and the lookup are missing the events, chances are that your index didn't get them in time.
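To make the 10:00 / 10:06 example concrete, here is a small Python sketch (not Splunk code) that imitates a scheduled search over a time window. The function name `visible_event_ids`, the event ids, and the date are made up for illustration; the only assumption about Splunk is the behaviour described above, namely that a search matches on `_time` and can only return events that are already indexed when it runs.

```python
from datetime import datetime, timedelta

def visible_event_ids(events, run_time, earliest_offset, latest_offset):
    """Ids of events a search run at run_time would return.

    An event is returned only if its _time falls inside
    [run_time - earliest_offset, run_time - latest_offset)
    AND it has already been indexed (_indextime <= run_time).
    """
    start = run_time - earliest_offset
    end = run_time - latest_offset
    return [
        e["id"]
        for e in events
        if start <= e["_time"] < end and e["_indextime"] <= run_time
    ]

base = datetime(2024, 1, 1, 10, 0)  # hypothetical day, 10:00 AM
events = [
    # Indexed 5 seconds after it happened.
    {"id": "on_time", "_time": base + timedelta(minutes=1),
     "_indextime": base + timedelta(minutes=1, seconds=5)},
    # Happened at 10:00 but reached the indexer at 10:06.
    {"id": "late", "_time": base, "_indextime": base + timedelta(minutes=6)},
]

five = timedelta(minutes=5)
now_offset = timedelta(0)

# Alert run at 10:05 over the last 5 minutes: "late" is not indexed yet.
run_1005 = visible_event_ids(events, base + five, five, now_offset)
# Alert run at 10:10 over the last 5 minutes: "late" has _time 10:00, outside the window.
run_1010 = visible_event_ids(events, base + 2 * five, five, now_offset)
# Manual re-run at 10:10 over 10:00-10:05: both events are now in the index.
rerun = visible_event_ids(events, base + 2 * five, 2 * five, five)

print(run_1005, run_1010, rerun)
```

The late event is never seen by either scheduled run, yet it shows up when the same time range is searched again afterwards, which matches the symptom in the question.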


Hey, thanks. I guess this was my issue: I have a big difference between _indextime and _time. I'll need to fix this.


If my suggestion helped you, can you mark my answer as the solution and upvote?


First, it is not a good idea to fetch the last 5 minutes of data every time the scheduled search runs. Depending on indexer load, events that arrive at the indexers may be processed and written a little late, so the scheduled search cannot find events whose writing was delayed by the indexers; when you re-run the search a few minutes later, you see the additional events.

So change your query to fetch slightly older data. For example, every 5 minutes do not search the last 5 minutes of data; instead set earliest to -10m@m and latest to -5m@m,

and then monitor whether alert_actions triggers or not.
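As a rough illustration of what a window of -10m@m to -5m@m covers, the Python sketch below mimics the time modifiers by subtracting the offset and then snapping down to the minute. The helper `snapped_window` and the run times (a few seconds past the scheduled minute) are assumptions made for the example, not anything taken from Splunk itself.

```python
from datetime import datetime, timedelta

def snapped_window(run_time, earliest_min, latest_min):
    """Mimic earliest=-<earliest_min>m@m latest=-<latest_min>m@m.

    Each boundary is run_time minus the offset, snapped down to the
    start of the minute (the "@m" part).
    """
    def snap_to_minute(t):
        return t.replace(second=0, microsecond=0)

    start = snap_to_minute(run_time - timedelta(minutes=earliest_min))
    end = snap_to_minute(run_time - timedelta(minutes=latest_min))
    return start, end

# Hypothetical consecutive runs of a 5-minute cron, each starting
# a few seconds after the scheduled minute.
runs = [
    datetime(2024, 1, 1, 10, 10, 3),
    datetime(2024, 1, 1, 10, 15, 1),
]
windows = [snapped_window(r, 10, 5) for r in runs]

for run, (start, end) in zip(runs, windows):
    print(f"run {run:%H:%M:%S} searches {start:%H:%M:%S} to {end:%H:%M:%S}")
```

Because of the snapping, consecutive runs search adjacent windows (10:00 to 10:05, then 10:05 to 10:10) with no gap or overlap even if the scheduler starts a little late, and every event gets at least 5 minutes to be indexed before its window is searched.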


Yes, I tried to check whether that was the issue. As a safety net in case any event misses the alert, I set up a backup alert that searches -12m@m to -6m@m. The misses were reduced greatly, but there is still a miss of about 1 in 10, compared with as many as 5-6 in 10 for -5m@m to now.

But the issue is that I have an SLA that requires me to trigger the alert within 6 minutes.
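The tension between a delayed window and a 6-minute SLA can be put into numbers. The sketch below is back-of-the-envelope arithmetic in Python, not a Splunk feature: it assumes every alert (including the backup one) runs every 5 minutes, that the search itself takes no time, and that the window is at least as long as the schedule period. The function names are invented for the example.

```python
def first_run_delay_bounds(latest_min, period_min):
    """Bounds, in minutes, on the gap between an event's _time and the
    first scheduled run whose window covers it.

    With latest=-<latest_min>m and a run every period_min minutes, the
    first covering run comes more than latest_min and at most
    latest_min + period_min minutes after the event.
    """
    return latest_min, latest_min + period_min

def can_meet_sla(latest_min, period_min, sla_min):
    """True if even the worst-case first run falls within the SLA."""
    return latest_min + period_min <= sla_min

PERIOD_MIN = 5  # assumed cron period for all alerts
SLA_MIN = 6     # SLA stated in the thread

# Window label -> minutes subtracted for "latest"
windows = {
    "-5m@m to now": 0,
    "-10m@m to -5m@m": 5,
    "-12m@m to -6m@m": 6,
}

for label, latest in windows.items():
    low, high = first_run_delay_bounds(latest, PERIOD_MIN)
    ok = can_meet_sla(latest, PERIOD_MIN, SLA_MIN)
    print(f"{label}: alert fires {low}-{high} min after the event, "
          f"meets {SLA_MIN}-min SLA: {ok}")
```

Under these assumptions only the "-5m@m to now" window stays within 6 minutes in the worst case (5 minutes), but it leaves no guaranteed allowance for indexing lag. The delayed windows give events 5 or 6 minutes to arrive, at the cost of a worst case of 10 or 11 minutes.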


As I mentioned earlier, it is not a good idea to run the query on the latest data, due to various factors (load on the indexers, network latency from forwarder to indexer). So it would be good to tell management that a 6-minute SLA is not realistic, or to add more indexers to your environment to distribute the load so that the indexers parse and store data quickly.


We already have 4 indexers handling a load of less than 10,000 events a day, which is almost nothing compared to what this setup can handle, and I'm really not able to understand what the issue is.